PP2/PP3: Musicaltereality

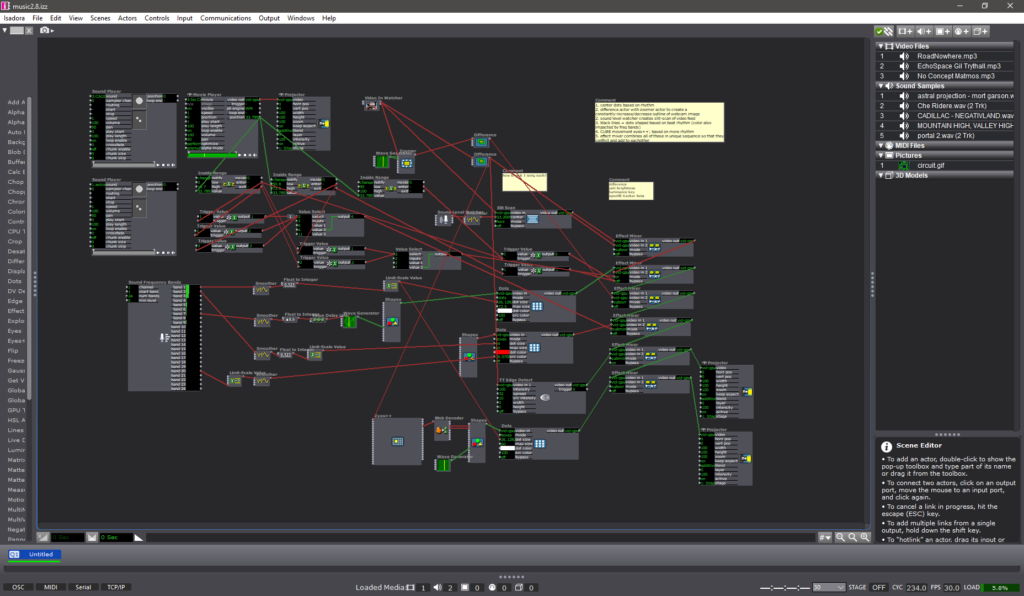

Posted: October 29, 2023 Filed under: Arvcuken Noquisi, Pressure Project 2, Pressure Project 3, Uncategorized

Hello. Welcome to my Isadora patch.

This project is an experiment in conglomeration and human response. I was inspired by Charles Csuri’s piece Swan Lake – I was intrigued by the essentialisation of human form and movement, particularly how it joins with glitchy computer perception.

I used this pressure project to extend the ideas I had built during our in-class sound patch work last month. I wanted to make a visual entity that seems to respond to and interact with both the musical input and the human input (via camera) it is given, creating an altered reality that combines the two (hence musicaltereality).

So here’s the patch at work. I chose Matmos’ song No Concept as the music input, because it has very notable rhythms and unique textures which provide a great foundation for the layering I wanted to do with my patch.

Photosensitivity/flashing warning – this video gets flashy toward the end

The center dots are a constantly-rotating pentagon shape connected to a “dots” actor. I connected frequency analysis to dot size, which is how the shape transforms into larger and smaller dots throughout the song.
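For readers who think in code rather than patch cords, here is a rough Python sketch of the idea of tying one frequency's level to dot size. It is not how Isadora works internally; the function names, the 440 Hz default, and the size range are all made up for illustration.

```python
import math

def band_level(samples, sample_rate, freq_hz):
    """Estimate the amplitude of one frequency in a block of samples
    using a single-bin discrete Fourier transform."""
    n = len(samples)
    if n == 0:
        return 0.0
    re = sum(s * math.cos(2 * math.pi * freq_hz * i / sample_rate)
             for i, s in enumerate(samples))
    im = sum(s * math.sin(2 * math.pi * freq_hz * i / sample_rate)
             for i, s in enumerate(samples))
    return 2 * math.hypot(re, im) / n

def scale(value, in_min, in_max, out_min, out_max):
    """Clamp value to the input range, then rescale it linearly."""
    if in_max == in_min:
        return out_min
    t = (value - in_min) / (in_max - in_min)
    t = max(0.0, min(1.0, t))
    return out_min + t * (out_max - out_min)

def dot_size(samples, sample_rate, freq_hz=440.0, min_size=2.0, max_size=40.0):
    """Louder content at freq_hz gives a bigger dot (hypothetical size range)."""
    level = band_level(samples, sample_rate, freq_hz)
    return scale(level, 0.0, 1.0, min_size, max_size)
```

Calling `dot_size` once per audio block would give a size that swells and shrinks with the music, which is roughly what the frequency-to-dot-size link does in the patch.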

The giant bars on the screen are a similar setup to the center dots. Frequency analysis is connected to a shapes actor, which is connected to a dots actor (with “boxes” selected instead of “dots”). The frequency changes both the dot size and the “src color” of the dots actor, which is why the output visuals morph in color along with the audio input.
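The color half of that link can be sketched the same way. This is only a guess at the general shape of the mapping, not the actual behavior of the “src color” input: it assumes a level between 0 and 1 and sweeps the hue from red to blue, both of which are my own choices for the example.

```python
import colorsys

def level_to_color(level, hue_low=0.0, hue_high=2 / 3):
    """Map a 0..1 audio level to an (r, g, b) color by sweeping the hue.
    The hue range (red to blue) is an arbitrary choice for illustration."""
    t = max(0.0, min(1.0, level))
    hue = hue_low + t * (hue_high - hue_low)
    r, g, b = colorsys.hsv_to_rgb(hue, 1.0, 1.0)
    return (round(r * 255), round(g * 255), round(b * 255))
```

Quiet moments would come out red, mid levels green, and loud moments blue, with everything in between blending smoothly.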

The motion-tracking rotating square is another shapes-dots setup which changes size based on music input. As you can tell, a lot of this patch is made out of repetitive layers with slight alterations.

There is a slit-scan actor which is impacted by volume. This is what creates the bands of color that waterfall up and down. I liked how this created a glitch effect, and directly responded to human movement and changes in camera input.
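If slit-scan is unfamiliar: the general technique takes one thin line of pixels from each incoming frame and stacks those lines over time. Below is a minimal Python sketch of that idea. How the actor actually uses volume is not something I can confirm, so letting volume pick which row gets sampled is purely an assumption here, and `SlitScan` is a hypothetical class, not anything from Isadora.

```python
from collections import deque

class SlitScan:
    """Keep a rolling stack of single rows ("slits") taken from incoming frames."""

    def __init__(self, height):
        # Oldest rows fall off the bottom once the stack is full.
        self.rows = deque(maxlen=height)

    def push(self, frame, volume):
        """frame is a list of pixel rows; volume (0..1) picks the sampled row.
        Using volume this way is an assumption made for the example."""
        v = max(0.0, min(1.0, volume))
        slit_index = min(len(frame) - 1, int(v * len(frame)))
        self.rows.appendleft(list(frame[slit_index]))
        return list(self.rows)
```

Because every output row comes from a different moment in time, anything moving in front of the camera gets smeared into bands, which matches the glitchy waterfall look.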

There are two difference actors: one of them is constantly zooming in and out, which creates an echo effect that follows the regular outlines. The other difference actor is connected to a TT edge detect actor, which adds thickness to the (non-zooming) outlines. I liked how these add confusion to the reality of the visuals.
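The basic idea behind a difference effect is comparing each frame to the one before it and keeping only what changed. Here is a small Python sketch of that general technique on grayscale frames; the `threshold` parameter is my own addition for the example and may not correspond to anything on the actual actor.

```python
def frame_difference(current, previous, threshold=0):
    """Return the per-pixel absolute difference between two grayscale frames.
    Differences at or below threshold are zeroed out to suppress noise."""
    result = []
    for cur_row, prev_row in zip(current, previous):
        row = []
        for c, p in zip(cur_row, prev_row):
            d = abs(c - p)
            row.append(d if d > threshold else 0)
        result.append(row)
    return result
```

Still areas go black and only moving edges stay lit, which is where the outline look comes from.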

All of these different inputs are then put through a ton of “mixer” actors to create the muddied visuals you see on screen. I used a lot of “inside range”, “trigger value”, and “value select” actors connected to these mixers in order to change the color combinations at different points of the music. Figuring this part out (how to actually control the output and sync it up to the song) was what took the majority of my time for Pressure Project 3.
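The control logic is easier to see written out. The sketch below is a loose Python analogy for chaining “inside range” checks into a “value select”: when a value falls inside a range, a matching option is chosen. It assumes the driving value is the song position in seconds, and the section times and option numbers are invented for the example rather than taken from the patch.

```python
def inside_range(value, low, high):
    """True when value falls within [low, high], like a range gate."""
    return low <= value <= high

def select_mix(position_s, sections, default=0):
    """Pick a mix option for the current song position.
    sections is a list of (low, high, option) tuples; the first match wins."""
    for low, high, option in sections:
        if inside_range(position_s, low, high):
            return option
    return default

# Hypothetical section timings, in seconds, and the mix option each one selects.
SECTIONS = [
    (0.0, 30.0, 1),
    (30.0, 75.0, 2),
    (75.0, 120.0, 3),
]
```

Calling `select_mix(42.0, SECTIONS)` would pick option 2, and the patch equivalent is a trigger firing into a value select whenever the song crosses into a new range.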

I like the chaos of this project, though I wonder what I can do to make it feel more interactive. The motion-tracking square is a little tacked-on, so if I were to make another project like this in the future, I would want to see if I can do more with motion-based input.