Cycle 3 – Interactive Immersive Radio

Posted: May 1, 2025 | Filed under: Uncategorized | Tags: Cycle 3

I started my cycle three process by reflecting on the performance and value action of the last cycle. I identified some key resources that I wanted to use and continue to explore. I also decided to focus a bit more on the scoring of the entire piece, since many of my previous projects were very loose and open-ended. I was drawn to two specific elements based on the feedback I had received previously. The first was the desire to “play” the installation more like a traditional instrument. This was something that I had deliberately been trying to avoid in past cycles, so I decided maybe it was about time to give it a try and make something a little more playable. The second was the desire to discover hidden capabilities and “solve” the installation like a puzzle. Using these two guiding principles, I began to create a rough score for the experience.

In addition to using the basic MIDI instruments, I also wanted to experiment with some backing tracks from a specific song, in this case, Radio by Sylvan Esso. In a previous project for the Introduction to Immersive Audio class, I used a program called Spectral Layers to “un-mix” a pre-recorded song. This process takes any song and attempts to separate the various instruments into their isolated tracks, to varying degrees of success. It usually takes a few tries, experimenting with various settings and controls, to get a good-sounding track. Luckily, the program allows you to easily unmix and separate track components and re-combine elements to get something that is fairly close to the original. For this song I was able to break it down into four basic tracks: Vocals, Bass, Drums and Synth. The end result is not perfect by any means, but it was good enough to get the general essence of the song when played together.

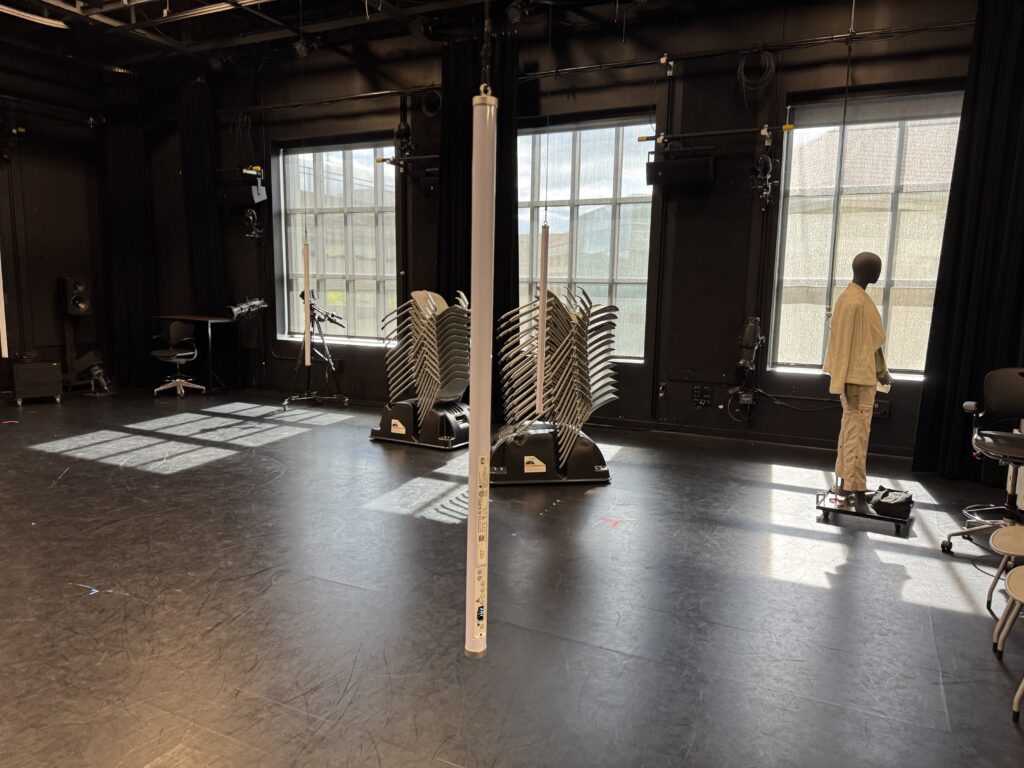

Another key element I wanted to focus on was the lighting and general layout and aesthetic of the space. I really enjoyed the Astera Titan Tubes that I used in the last cycle and wanted to try a more integrated approach to triggering the lighting console from Touch Designer. I received some feedback that people were looking forward to a new experience from previous cycles, so that motivated me to push myself a little harder and come up with a different layout. The light tubes have various options for mounting them and I decided to hang them from the curtain track to provide some flexibility in placement. Thankfully, we had the resources already in the Motion Lab to make this happen easily. I used spare track rollers and some tie-line and clips left over from a previous project to hang the lights on a height adjustable string that ended up working really well. This took a few hours to put together, but I think this resource will definitely get used in the future by people in the Motion Lab.

In order to make the experience “playable” I decided to break out the bass line into its component notes and link the trigger boxes in Touch Designer to correspond to the musical score. This turned out to be the most difficult part of the process. For starters, I needed to quantify the number of notes and the cycles they repeat in. Essentially, this broke down into 4 notes, each played 5 times sequentially. Then I also needed to map the boxes that would trigger the notes into the space. Since the coordinates are Cartesian x-y and I wanted the boxes arranged into a circle, I had to figure out a way to extract the location data. I didn’t want to do the math, so I decided to use my experience in Vectorworks as a resource to map out the note score. This ended up working out pretty well and the resulting diagram has an interesting design aesthetic itself. My first real-life attempt in the Motion Lab worked as planned, but actually playing the trigger boxes in time was virtually impossible. I experimented with various sizes and shapes, but nothing worked perfectly. I settled on some large columns that a body would easily trigger.
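For readers curious about the math behind a circular layout, here is a minimal Python sketch of placing trigger boxes evenly around a circle in x-y coordinates. It is only an illustration, not the Vectorworks workflow described above: the radius, the center point, the note names, and the assumption that all 20 positions (4 notes, each played 5 times) are spaced evenly are placeholders.

```python
import math

def circle_positions(count, radius, center=(0.0, 0.0), start_deg=0.0):
    """Return (x, y) points evenly spaced around a circle, counter-clockwise."""
    cx, cy = center
    points = []
    for i in range(count):
        angle = math.radians(start_deg) + 2 * math.pi * i / count
        points.append((cx + radius * math.cos(angle),
                       cy + radius * math.sin(angle)))
    return points

# 4 notes, each played 5 times in a row, gives 20 trigger positions.
NOTES = ["note_1", "note_2", "note_3", "note_4"]  # placeholder names
sequence = [note for note in NOTES for _ in range(5)]

# Pair each step of the bass line with a box position (radius is hypothetical).
layout = list(zip(sequence, circle_positions(len(sequence), radius=3.0)))

for name, (x, y) in layout:
    print(f"{name}: x={x:.2f}, y={y:.2f}")
```

Each printed pair could then be typed in as the center of a trigger box, with the units matching whatever the tracking space uses.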

The last piece was to link the lighting playback with the Touch Designer triggers. I had some experience with this previously and more recently have been exploring the OSC functionality more closely. It took a few tries, but I eventually sent the correct commands and got the results I was looking for. Essentially, I programmed all the various lighting looks I wanted to use on “submaster” faders and then sent the commands to move the faders. This allowed me to use variable “fade times” by using the “Lag” CHOP in Touch Designer to control the on and off rate of each trigger. I took another deep dive into the ETC Eos virtual media server and pixel mapping capabilities, which was sometimes fun and sometimes frustrating. It’s nice to have multiple ways to achieve the same effect, but it was sometimes difficult to find the right method based on how I wanted to layer everything. I also maxed out the “speed” parameter, which was unfortunate because I still could not match the BPM of the song, even with the speed set to 800%.
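As a rough illustration of what “sending commands to move the faders” looks like at the byte level, the sketch below builds OSC messages that carry a fader level and steps that level up gradually. Several things here are assumptions: the `/eos/sub/<number>` address with a 0.0 to 1.0 float should be checked against ETC’s OSC documentation, the linear ramp is only a stand-in for the Lag CHOP’s smoothing, and the submaster number and step count are made up.

```python
import struct

def osc_pad(data: bytes) -> bytes:
    """Null-terminate and pad to a multiple of 4 bytes, as OSC 1.0 requires."""
    data += b"\x00"
    return data + b"\x00" * (-len(data) % 4)

def osc_float_message(address: str, value: float) -> bytes:
    """Encode an OSC message carrying a single big-endian float32 argument."""
    return osc_pad(address.encode("ascii")) + osc_pad(b",f") + struct.pack(">f", value)

def ramp(start: float, target: float, steps: int):
    """Linear fade from start to target (a simple stand-in for a lagged trigger)."""
    return [start + (target - start) * i / steps for i in range(1, steps + 1)]

def submaster_fade(sub: int, start: float, target: float, steps: int):
    """Build one OSC packet per fade step for a submaster fader."""
    address = f"/eos/sub/{sub}"  # assumed address pattern, verify before use
    return [osc_float_message(address, level) for level in ramp(start, target, steps)]

# Hypothetical fade of submaster 1 from off to full in four steps.
packets = submaster_fade(sub=1, start=0.0, target=1.0, steps=4)
```

Each packet could then be sent over UDP with Python’s `socket.sendto`, using whatever IP address and OSC receive port the console is configured for, with a short pause between packets to set the fade time.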

I was excited for the performance and really enjoyed the immersive nature of the suspended tubes. Since I was the last person to go, we were already running way over on time and I was a bit rushed to get everything set up. I had decided earlier that I wanted to completely enclose the inner circle with black drape. This involved moving all 12 curtains in the lab onto different tracks, something that I knew would take some time, and I considered cutting it since we were running behind schedule. I’m glad I stuck to my original plan and took the extra 10 minutes to move things around because the black void behind the light tubes really increased the immersive qualities of the space. I enjoyed watching everyone explore and try to figure out how to activate the tracks. Eventually, everyone gathered around the center and the entire song played. Some people did run around in the circle and activate the “bass line” notes, but the connection was never officially made. I also hid a rainbow light cue in the top center that was difficult to activate. If I had a bit more time to refine, I would have liked to make more “easter eggs” hidden around the space. Overall, I was satisfied with how the experience was received and look forward to possible future cycles and experimentation.

Cycle 3: It Takes 3

Posted: May 1, 2025 | Filed under: Uncategorized | Tags: Cycle 3, Interactive Media, Interactive Shadow, Isadora, magic mirror

This project was the final iteration of my cycles project, and it has changed quite a bit over the course of three cycles. The base concept stayed the same but the details and functions changed as I received feedback from my peers and changed my priorities with the project. I even made it so three people could interact with it.

I wanted to focus a bit more on the sonic elements as I worked on this cycle. I started having a lot of ideas on how to incorporate more of them, including adding soundscapes to each scene. Unfortunately, I ran out of time to fully flesh out this particular idea and didn’t want to incorporate a half-baked idea and end up with an unpleasant cacophony of sound. But I did add sonic elements to all of my mechanisms. I kept the chime when the scene became saturated, as well as the first time someone raised their arms to change a scene background. I added a gate so the second chime only happened the first time, to keep the sound under control.

A new element I added was a Velocity actor that caused the image inside the silhouettes to explode, and when it did, it triggered a Sound Player with a POP! sound. This pop was important because it drew attention to the explosion to indicate that something happened and something they did caused it. This actor was also plugged into an Inside Range actor that was set to trigger a riddle at a velocity just below the threshold that triggers the explosion.

The other new mechanism I added was based on the proximity to the sensor of one of the users. The z-coordinate data for Body 2 was plugged into a Limit-Scale Value actor to translate the coordinate data into numbers I could plug into the volume input to make the sound louder as the user gets closer. I really needed to spend time in the space with people so I could fine-tune the numbers to the space, which I ended up doing during the presentation when it wasn’t cooperating. I also ran into the issue of needing that Sound Player to not always be on, otherwise that would have been overwhelming. I decided to have the other users have their hands raised to turn it on (it was actually only reading the left hand of Body 3 but for ease of use and riddle-writing, I just said both other people had to have them up).
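The distance-to-volume mapping is essentially a clamp followed by a linear rescale. Here is a minimal Python sketch of that idea, written from the description above rather than from Isadora’s implementation; the near and far distances and the 0 to 100 volume range are hypothetical and would need the same in-room tuning.

```python
def limit_scale(value, in_min, in_max, out_min, out_max):
    """Clamp value to [in_min, in_max], then map it linearly onto the output range."""
    clamped = max(in_min, min(in_max, value))
    fraction = (clamped - in_min) / (in_max - in_min)
    return out_min + fraction * (out_max - out_min)

# Hypothetical numbers: 1 m from the sensor is full volume, 4 m is silent.
NEAR_M, FAR_M = 1.0, 4.0

def volume_for_distance(z_m):
    # The output range is reversed so a smaller distance gives a larger volume.
    return limit_scale(z_m, NEAR_M, FAR_M, 100.0, 0.0)

for z in (0.5, 1.0, 2.5, 4.0, 6.0):
    print(f"z = {z} m -> volume {volume_for_distance(z)}")
```

Reversing the output range is what makes the sound louder as the user approaches, and the clamp keeps the volume from jumping around when someone steps outside the tuned range.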

I have continued adjusting the patch for the background change mechanism (raising the right hand of Body 1 changes the silhouette background and raising the left hand changes the background). My main focus here was making the gates work so it only changes one time while the hand is raised (gate doesn’t reopen until hand goes down), so I moved the gate to be in front of the Random actor in this patch. As I reflect on this, I think I know why it didn’t work; I didn’t program it to turn the gate on based on hand position, it only holds the trigger until the first one is complete, which is pretty much immediately. I think I would need an Inside Range actor to tell the gate to turn on when the hand is below a certain position, or something to that effect.
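The behavior described here, firing once per raise and re-arming only when the hand comes back down, can be sketched outside of Isadora as a small state machine. This is a Python illustration of the logic, not an Isadora patch, and the threshold and hand heights are made-up values.

```python
class OneShotGate:
    """Fires once when the hand rises above the threshold, then stays
    closed until the hand drops back below it."""

    def __init__(self, threshold):
        self.threshold = threshold
        self.armed = True  # open and waiting for the next raise

    def update(self, hand_height):
        if hand_height >= self.threshold:
            if self.armed:
                self.armed = False  # close the gate until the hand lowers
                return True         # this is the single trigger for this raise
            return False            # hand still up, gate stays closed
        self.armed = True           # hand is down, so re-open the gate
        return False

gate = OneShotGate(threshold=0.5)           # hypothetical threshold
heights = [0.1, 0.6, 0.8, 0.7, 0.2, 0.9, 0.9]  # hypothetical hand positions
fired = [gate.update(h) for h in heights]
print(fired)  # [False, True, False, False, False, True, False]
```

The key difference from a gate that reopens as soon as the first trigger passes is that the re-arm step depends on the hand position, which matches the Inside Range idea above.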

I sat down with Alex to work out some issues I had been having, such as my transparency issue. This was happening because the sensor was set to colorize the bodies, so Isadora was seeing red and green silhouettes. This was problematic because the Alpha Mask looks for white, so the color was not allowing a fully opaque mask. We fixed this with the addition of an HCL Adjust actor between the OpenNI Tracker and the Alpha Mask, with the saturation fully down and the luminance fully up.

The other issue Alex helped me fix was the desaturation mechanism. We replaced the Envelope Generators with Trigger Value actors plugged into a Smoother actor. This made for smooth transitions between changes because it allowed Isadora to make changes from where it’s already at, rather than from a set value.
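To show why starting from the current value matters, here is a small Python sketch of a smoother that always moves part of the way from wherever it is toward the latest target. It uses simple exponential smoothing as a stand-in and is not a description of how Isadora’s Smoother actor works internally; the smoothing amount and the saturation values are assumptions.

```python
def smooth_toward(current, target, amount):
    """Move a fraction of the remaining distance from current toward target."""
    return current + (target - current) * amount

def simulate(start, target_changes, frames, amount=0.5):
    """Step a smoothed value frame by frame; target_changes maps
    frame number -> new target value."""
    value, target, history = start, start, []
    for frame in range(frames):
        target = target_changes.get(frame, target)
        value = smooth_toward(value, target, amount)
        history.append(value)
    return history

# Saturation starts at 100, is told to drop to 0, then told to rise
# again two frames later, before the fade-out has finished.
history = simulate(start=100.0, target_changes={0: 0.0, 2: 100.0}, frames=4)
print(history)  # [50.0, 25.0, 62.5, 81.25]
```

When the target flips at frame 2, the value climbs from 25 rather than restarting from 0 or 100, which is the kind of jump a fixed-start envelope would introduce.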

The last big change I made to my patch was the backgrounds. Because I was struggling to find decent quality images of the right size for the shadow silhouettes, I took the information of one image that looked nice and created six simple backgrounds in Procreate. I wanted them to have bold colors and sharp lines so they would stand out against the moving backgrounds and have enough contrast both saturated and not. I also decided to use recognizable location-based backdrops since the water and space backdrops seemed to elicit the most emotional responses. In addition to the water and space scenes, I added a forest, mountains, a city, and clouds rolling across the sky.

These images worked really well against the realistic backgrounds. It was also fun to watch the group react, especially to the pink scene. They got really excited if they got a sparkle full and clear on their shadow. There was also a moment where they thought the white dots in the rainbow and purple scenes were a puzzle, which could be a cool idea to explore. I did have an idea to create a little bubble-popping game in a scene with a zoomed-in bubble as the main background.

The reactions I got were overwhelmingly positive and joyful. There was a lot of laughter and teamwork during the presentation, and they spent a lot of time playing with it. If we had more time, they likely would have kept playing and figuring it out, and probably would have loved a fourth iteration (I would have loved making one for them). Michael specifically wanted to learn it enough to manipulate it, especially to match up certain backgrounds (I would have had them go in a set order because accomplishing this at random would be difficult, though not impossible). Words like “puzzle” and “escape room” were thrown around during the post-experience discussion, which is what I was going for when I added the riddles to help guide users.

The most interesting feedback I got was from Alex, who said he had started to experience himself ‘in third person’. What he meant by this is that he referred to the shadow as himself while still recognizing it as a separate entity. If one person crossed in front of another, the sensor stopped being able to see the person in back and ‘erased’ them from the screen until it re-found them. This often prompted that person to say “oh look I’ve been erased”, which is what Alex was referring to with his comment.

I’ve decided to include my Cycle 3 score here as well, because it has a lot of things I didn’t get to explain here, and was functionally my brain for this project. I think I might go back to this later and give some of the ideas in there a whirl. I think I’ve learned enough Isadora that I can figure out a lot of it, particularly those pesky gates. It took a long time, but I think I’m starting to understand gate logic.

The presentation was recorded in the MOLA so I will add that when I have it :). In the meantime, here’s the test video for the velocity-explode mechanism, where I subbed in a Mouse Watcher to make my life easier.