Virtual Reality Storytelling & Mixed Reality

Posted: December 18, 2016 Filed under: Final Project

For my final project, I jumped into working with VR to begin creating an interactive narrative. The experience is titled “Somniat,” and it aims to evoke feelings of childhood innocence and imagination in its users.

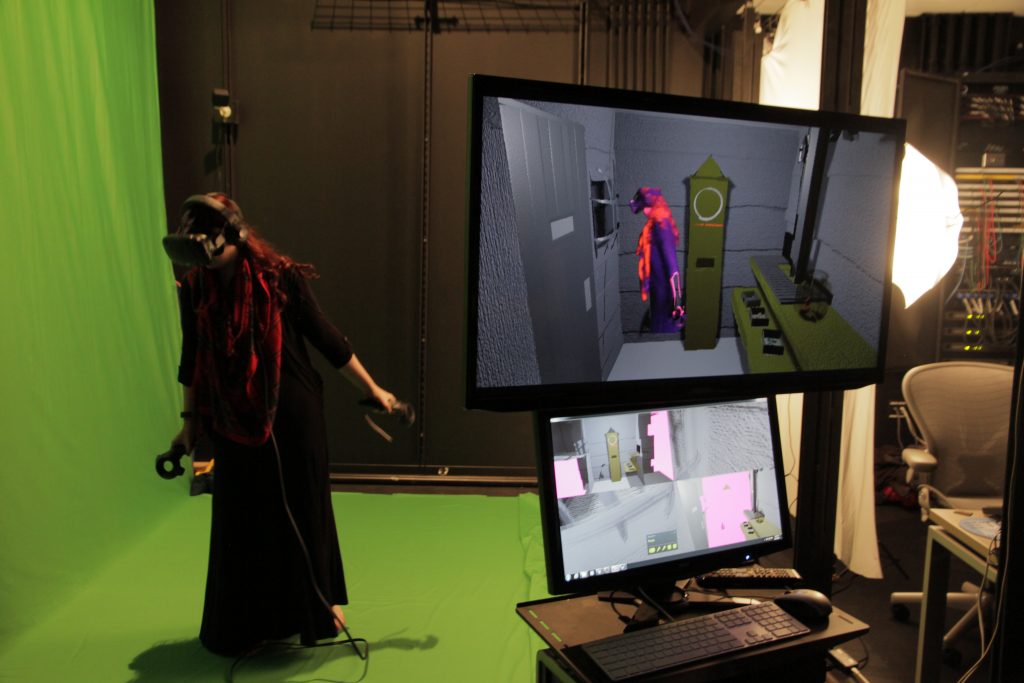

This project was developed across three separate ACCAD courses: “Concept Development for Time-Based Media,” “Game Art and Design I,” and “Devising Experiential Media Systems.” In Concept Development, I created a storyboard, which eventually became an animatic of the story I hoped to create. Then in Game Design, I hashed out all the interactive objectives, challenges, and user cues, building them into a functional virtual reality experience. And finally, in DEMS, I brought all the previous ideas together and built the entire experience, both inside and outside of VR, into a complete presentation! A large focus of this presentation was the mixed reality greenscreen. Mixed reality is where you record the user in the virtual world as well as the real world, and then blend both realities together! An example of this mixed reality from my project can be seen below:

I had a great time working on this project. I’d have to say my biggest takeaway from our class has been to start thinking about everything that goes into a user’s experience: from first hearing about the content, to using it for themselves, to telling others about it afterward. My perspective on creating experiential content has been widened, and I look forward to applying it to my future work!
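As a rough illustration of how the greenscreen blending in mixed reality can work, here is a minimal chroma-key sketch. It assumes the camera frame and the virtual-world render are same-sized grids of (R, G, B) tuples, and the `min_green` and `dominance` thresholds are made-up values, not settings from this project. Mixed reality captures often also layer virtual objects in front of the player, which this two-layer version leaves out.

```python
from typing import List, Tuple

Pixel = Tuple[int, int, int]  # (R, G, B), each 0-255
Frame = List[List[Pixel]]


def is_green(p: Pixel, min_green: int = 100, dominance: int = 40) -> bool:
    """Treat a pixel as greenscreen if green is bright and clearly exceeds red and blue."""
    r, g, b = p
    return g >= min_green and g - max(r, b) >= dominance


def composite(camera: Frame, virtual: Frame) -> Frame:
    """Replace greenscreen pixels in the camera frame with the matching virtual-world pixels."""
    return [
        [vr if is_green(cam) else cam for cam, vr in zip(cam_row, vr_row)]
        for cam_row, vr_row in zip(camera, virtual)
    ]


# The green pixel is swapped for the virtual scene; the skin-tone pixel is kept.
camera = [[(0, 255, 0), (200, 150, 120)]]
virtual = [[(10, 10, 80), (10, 10, 80)]]
print(composite(camera, virtual))  # [[(10, 10, 80), (200, 150, 120)]]
```

Keying on "green dominates the other channels" rather than on an exact green value makes the sketch a little more tolerant of uneven lighting on the screen.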

Horse Bird Muffin Cycles

Posted: December 17, 2016 Filed under: Uncategorized

For my final project, I used Isadora, Vuo, a Kinect sensor, buttons, and two projectors to create a sort of game, or test, to determine one’s inherent nature as a horse, a bird, or a muffin, or some combination of them.

Through a series of instructed interactions, a person first chooses an environment from 3 images projected onto a screen. As a person walks toward a particular image, their own moving silhouette is layered onto the image. Text appears to instruct them to move closer to the place they’ve chosen, and the closer they get, the louder an ambient sound associated with their chosen image gets.


Once they are within a certain proximity, if all goes well, the first part of the test is complete. So they start with a subtly self-selected identity.
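The proximity step can be sketched as a few small mappings. This assumes a Kinect mounted at the screen that reports the person's distance in metres and their horizontal position normalized to 0..1, with the three images side by side; the near/far distances and the completion threshold are hypothetical, not values from the actual Isadora patch.

```python
def chosen_image(x_norm: float, count: int = 3) -> int:
    """Index of the side-by-side image the person is standing in front of (x_norm in 0..1)."""
    return min(int(x_norm * count), count - 1)


def proximity_volume(distance_m: float, near_m: float = 1.0, far_m: float = 4.0) -> float:
    """Ambient volume from 0.0 (at or beyond far_m) up to 1.0 (at or inside near_m)."""
    if distance_m <= near_m:
        return 1.0
    if distance_m >= far_m:
        return 0.0
    return (far_m - distance_m) / (far_m - near_m)


def choice_complete(distance_m: float, threshold_m: float = 1.2) -> bool:
    """The first part of the test ends once the person is close enough."""
    return distance_m <= threshold_m


print(chosen_image(0.5), proximity_volume(2.5), choice_complete(1.0))  # 1 0.5 True
```

A linear ramp is the simplest choice; a curved mapping could make the final few steps feel more dramatic.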


In the next scene, the participant can interact with an image of their choice: the image mirrors their body moving through space, and it changes size based on the volume of sound the participant and their surroundings create. Loud stomping and clapping make the image fill the screen and also earn the participant at least one horse ranking, while lots of traveling through space earns the participant a bird ranking. Little or no movement, or moving only in place, over a designated time puts them, as many guessed, in the ranks of the muffins.
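One way to express the second scene's rules is a function that checks the three events in order and returns the first ranking earned, plus a mapping from volume to image size. The thresholds (`loud`, `far_m`, `time_limit_s`) and the normalized 0..1 volume are assumptions for illustration; the original patch used Isadora actors rather than code.

```python
from typing import Optional


def image_scale(volume: float, min_scale: float = 0.25, max_scale: float = 1.0) -> float:
    """Grow the mirrored image with sound volume (clamped to 0..1); 1.0 fills the screen."""
    v = max(0.0, min(1.0, volume))
    return min_scale + v * (max_scale - min_scale)


def scene_two_rank(peak_volume: float, distance_traveled_m: float, elapsed_s: float,
                   loud: float = 0.8, far_m: float = 5.0,
                   time_limit_s: float = 30.0) -> Optional[str]:
    """Return the first ranking earned, or None while the scene is still running."""
    if peak_volume >= loud:           # stomping and clapping
        return "horse"
    if distance_traveled_m >= far_m:  # lots of travel through space
        return "bird"
    if elapsed_s >= time_limit_s:     # time ran out without a horse or bird event
        return "muffin"
    return None


print(scene_two_rank(0.2, 1.0, 31.0))  # muffin
```

Checking horse first means a loud participant who also travels a lot ends up a horse; a different order would favor birds.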

Any of these three events triggers a song associated with the participant’s rank and starts the next evaluation.

This last scene never quite reached my imagined heights, but the intent was for participants to see themselves on video, both in real time and on a delay, with their moving silhouettes still tracked and projected through a difference actor in Isadora. This part worked, and participants enjoyed dancing with themselves and their echoes onscreen. The parts that needed work were a few shapes actors designed to follow movements in different quadrants of the Kinect’s RGB camera field, and a patch that counted the number of times the participant crossed a horizontal field completely (to trigger a final horse ranking) or moved from high to low in the vertical field, or, again, observed themselves with small or no movements until a set time was up.

These functions sometimes worked, but they were not robust.
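The horizontal crossing count could be approached with edge zones: a crossing only counts when the tracked position reaches the opposite edge zone from the one it last touched, so jitter in the middle of the frame is ignored. This is a hypothetical sketch that assumes a tracked x position normalized to 0..1; it is not how the Isadora patch was built, and the edge values are illustrative.

```python
from typing import Optional


class CrossingCounter:
    """Count complete left-to-right or right-to-left crossings of a tracked x position."""

    def __init__(self, left_edge: float = 0.1, right_edge: float = 0.9) -> None:
        self.left_edge = left_edge
        self.right_edge = right_edge
        self.last_side: Optional[str] = None  # edge zone most recently reached
        self.crossings = 0

    def update(self, x_norm: float) -> int:
        side = None
        if x_norm <= self.left_edge:
            side = "left"
        elif x_norm >= self.right_edge:
            side = "right"
        if side is not None:
            # Only reaching the opposite edge zone counts as a full crossing.
            if self.last_side is not None and side != self.last_side:
                self.crossings += 1
            self.last_side = side
        return self.crossings


counter = CrossingCounter()
for x in [0.05, 0.5, 0.95, 0.5, 0.02]:
    counter.update(x)
print(counter.crossings)  # 2
```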

Nonetheless, each participant was instructed to push a button at the end of their assessment and was able to discover whether they were a birdhorsemuffin, a birdhorsehorse, a triple horse, or some other combination.

Pressure Project 3: Only the Lonely

Posted: December 16, 2016 Filed under: Uncategorized

For the third pressure project, which involved dice, I wanted the dice to trigger a music player, with the location where the dice landed determining which instrumental parts of a song would play. I decided to use a Kinect’s depth sensor to detect the presence of dice in the foreground, mid-ground, and background of a marked range of space.

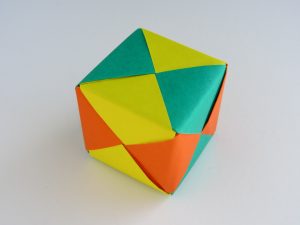

I made origami cubes to use as dice so that they would be a bit larger and easier to detect, and also less bouncy, which made them easier to keep within the range I’d set.

Using a Syphon Receiver in communication with Vuo, I connected the computer vision to 3 discrete ranges of depth and used a crop actor to cut out any information about objects, like people, that might come into the camera’s field of vision.
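Splitting the depth image into bands and cropping away the edges can be sketched like this. It assumes raw depth values in millimetres, which is how the Kinect SDKs report depth; in this patch the depth arrived through Syphon as a grayscale image, so the bands would be brightness ranges instead. The band limits and crop rectangle are hypothetical.

```python
from typing import Dict, List, Tuple

# Hypothetical depth bands in millimetres; 0 (no reading) falls outside all of them.
BANDS: Dict[str, Tuple[int, int]] = {
    "foreground": (500, 900),
    "midground": (900, 1300),
    "background": (1300, 1700),
}


def band_counts(depth: List[List[int]], crop: Tuple[int, int, int, int],
                bands: Dict[str, Tuple[int, int]] = BANDS) -> Dict[str, int]:
    """Count pixels in each depth band inside crop = (x0, y0, x1, y1), end-exclusive."""
    x0, y0, x1, y1 = crop
    counts = {name: 0 for name in bands}
    for row in depth[y0:y1]:
        for d in row[x0:x1]:
            for name, (lo, hi) in bands.items():
                if lo <= d < hi:
                    counts[name] += 1
                    break
    return counts


depth = [[600, 1000, 1500, 0], [2000, 700, 1100, 1400]]
print(band_counts(depth, (0, 0, 4, 2)))
# {'foreground': 2, 'midground': 2, 'background': 2}
```

Anything outside the crop rectangle (such as a person standing at the side) never reaches the band counts, which is the role the crop actor plays in the patch.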

This only partially worked and could use some fine-tuning. In retrospect, I wonder if there is a setting in Eyes ++ that would require an object to be present for a certain amount of time before its brightness registers, so that messages other than the dice locations wouldn’t interfere.
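Whether or not Eyes ++ offers such a setting, the idea can be sketched as a separate dwell-time filter: presence only counts once it has held for a number of consecutive frames. `DwellFilter` and its 15-frame default (about half a second at the Kinect's 30 frames per second) are hypothetical.

```python
class DwellFilter:
    """Report presence only after it has held for `hold_frames` consecutive frames."""

    def __init__(self, hold_frames: int = 15) -> None:
        self.hold_frames = hold_frames
        self.run = 0  # consecutive frames with something detected

    def update(self, detected: bool) -> bool:
        self.run = self.run + 1 if detected else 0
        return self.run >= self.hold_frames


f = DwellFilter(hold_frames=3)
print([f.update(x) for x in [True, True, False, True, True, True]])
# [False, False, False, False, False, True]
```

A hand passing briefly through a band resets the count before it reaches the threshold, while a die that has come to rest eventually registers.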

Each Eyes actor was connected to a smoother and an inside range actor, which waited for the brightness detected within each band’s set range to reach a minimum before triggering a series of signals. The primary signal was either to play that section’s sound (because the brightness indicated an object was present) or to stop it (because brightness below the minimum indicated the object was absent).
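The smoother-plus-inside-range chain can be approximated with exponential smoothing followed by a range test that reports only the moments of entering and leaving the range. This is an assumption about the behavior, not Isadora's actual implementation; `alpha`, `minimum`, and `maximum` are illustrative values for brightness normalized to 0..1.

```python
from typing import Optional


class SmoothedRangeTrigger:
    """Smooth a brightness signal, then emit "play" on entering the range and "stop" on leaving."""

    def __init__(self, alpha: float = 0.2, minimum: float = 0.3, maximum: float = 1.0) -> None:
        self.alpha = alpha
        self.minimum = minimum
        self.maximum = maximum
        self.value = 0.0
        self.inside = False

    def update(self, brightness: float) -> Optional[str]:
        # Exponential smoothing: move a fraction `alpha` of the way toward the new reading.
        self.value += self.alpha * (brightness - self.value)
        now_inside = self.minimum <= self.value <= self.maximum
        event = None
        if now_inside and not self.inside:
            event = "play"
        elif self.inside and not now_inside:
            event = "stop"
        self.inside = now_inside
        return event


t = SmoothedRangeTrigger(alpha=0.5, minimum=0.3)
print([t.update(b) for b in [1.0, 1.0, 0.0, 0.0, 0.0]])
# ['play', None, None, 'stop', None]
```

The smoothing delays the "stop" by a couple of frames, so a single dark frame does not cut the sound off.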

Because the three instrumental music files were designed to play together, it was possible for 3 dice to land in the 3 respective ranges, the goal being that all three files would start together, playing a song with drums, a bass line, and a keyboard melody (reminiscent of Roy Orbison’s “Only the Lonely”). To make this work without the sound files starting a split second apart from one another, I set up a system of simultaneity actors, trigger delays, and gates that I can’t actually explain in detail. I understand it conceptually, though: it delays action to first look for the simultaneous presence of objects in multiple ranges, then sends a signal for 2 or all 3 parts based on the information gathered, and failing that, passes the signals through for an individual part to play.

For example, if one die landed in the mid-ground and 2 in the foreground, the inside range enter triggers from both of those ranges would each go to an individual trigger delay that, after 2 seconds, sends a message to play a single file. BUT, if the simultaneity actor for the mid-ground and foreground is triggered, it closes a gate on those two delays, preventing the individual file triggers, and sends a shared trigger to both the bass and the piano files so they start at the same time.
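The delay-then-decide logic can be sketched as grouping enter events that arrive within a waiting window, then starting every part in a group at the same moment. This is a software analogue, not a reconstruction of the simultaneity actors, trigger delays, and gates; the 2-second window comes from the example above, while the range-to-part mapping (including which of bass and piano belongs to the foreground) is a hypothetical guess.

```python
from typing import Dict, List, Tuple

# Hypothetical mapping of depth ranges to instrument parts.
PARTS: Dict[str, str] = {"foreground": "piano", "midground": "bass", "background": "drums"}


def schedule_starts(enter_events: List[Tuple[float, str]],
                    window_s: float = 2.0) -> List[Tuple[float, List[str]]]:
    """Group time-sorted enter events (time_s, range_name) that fall within window_s of the
    first event in their group; each group's parts all start when its window ends."""
    groups: List[Tuple[float, List[str]]] = []
    for t, name in enter_events:
        part = PARTS[name]
        if groups and t - groups[-1][0] <= window_s:
            if part not in groups[-1][1]:
                groups[-1][1].append(part)  # a second die in the same range adds nothing new
        else:
            groups.append((t, [part]))
    return [(t0 + window_s, parts) for t0, parts in groups]


print(schedule_starts([(0.0, "midground"), (0.4, "foreground"), (0.9, "foreground")]))
# [(2.0, ['bass', 'piano'])]
```

Waiting out the whole window before starting anything trades a short delay for the guarantee that parts landing close together begin in sync.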

For whatever reason, I can only upload the GarageBand file as a zip, but this is all 3 parts together: dice-player-band

The entire Isadora patch is here: http://osu.box.com/s/eyjn9zge850ywc81yhdoj4cpbic1rkka

Chance music….

Pressure Project #3: Augmented Backgammon

Posted: December 16, 2016 Filed under: Uncategorized

Backgammon is one of the oldest board games in human history.

It is a mysterious mix of choice, strategy, and luck: the properties of life I enjoy most.

On my latest visit to Istanbul, Turkey, I got a beautiful backgammon board. I decided then to bring it back and let my fellow artists and coworkers play the augmented reality version of the game I created.

Thanks to ACCAD for the awesome video of this piece!

Axel Cuevas Santamaría VJ axx

https://axxvjs.wordpress.com/

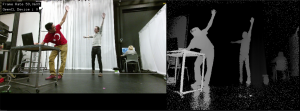

Pressure Project #2: Dancing Depths

Posted: December 16, 2016 Filed under: Uncategorized

After Pressure Project #1: Staring at the Sun, I developed this new iteration.

It was an experiment in delivering a delightful, self-running digital art piece.

… an individual walks into a darkened room where a MIDI controller interface awaits.

Once the individual activates the device, Isadora creates a visually pleasing, self-generating patch of shapes, lines, and color.

Visual textures are assigned to the dancing silhouettes of people inside the room.

This was my first experiment using Kinect v2 and Isadora together.

It was also my first interactive dance experience in which my audience became active participants.

After this, I continued developing a more complex and integrated experiential media system for an interactive dance performance titled Microbial Skin.

Thanks to Alex Oliszewski and ACCAD for all the support!

Axel Cuevas Santamaría VJ axx

https://axxvjs.wordpress.com/

Pressure Project #1: Staring at the Sun

Posted: December 16, 2016 Filed under: Uncategorized

For this project, we experienced the color yellow invading our spatial perception.

I developed this project inspired by Pi, the 1998 American surrealist psychological thriller film written and directed by Darren Aronofsky.

The experiential media system for this project provided a mock-up of an immersive environment that allows users to shift their spatial perception.

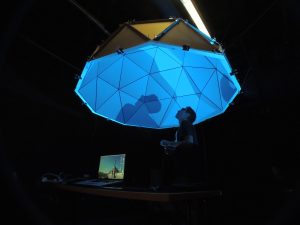

After this experiment, I built a geodesic dome to test immersive possibilities, and I started building cardboard domes for delivering immersive experiences.

Axel Cuevas Santamaría VJ axx

https://axxvjs.wordpress.com/

folding_fours game

Posted: December 16, 2016 Filed under: Paper & Pen

Playing Connect Four as part of class was a great experience!

Encouraged by Alex to find new possibilities, Ece and I devised a new challenge for this old game by adding the option of folding the paper every third turn. After we explored the game further with Ashlee, this added a new dimension to the experience: materiality.

Folding the paper makes for a richer physical experience.

motiondetection01

Posted: December 16, 2016 Filed under: Uncategorized

As I started working with fast motion detection, I uploaded a first test patch. It works best with lots of light in the room (darn), and you need to use Input Menu/Start Live Capture to play it. Hope it works!

Download the patch at this link:

axtest03_sept08_motiondetection01-izz
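Fast motion detection is commonly built on frame differencing: compare each frame with the previous one and measure how many pixels changed by more than a threshold. The sketch below assumes grayscale frames given as lists of 0-255 values, and the threshold is hypothetical; it is not the contents of the patch above. It also hints at why light matters: in a dim room, camera noise makes pixels flicker between frames, which a fixed threshold can mistake for motion.

```python
from typing import List

Gray = List[List[int]]  # rows of 0-255 grayscale values


def motion_amount(prev: Gray, curr: Gray, threshold: int = 30) -> float:
    """Fraction of pixels whose value changed by more than `threshold` between frames."""
    changed = 0
    total = 0
    for prow, crow in zip(prev, curr):
        for p, c in zip(prow, crow):
            total += 1
            if abs(c - p) > threshold:
                changed += 1
    return changed / total if total else 0.0


print(motion_amount([[10, 10], [10, 10]], [[10, 200], [15, 10]]))  # 0.25
```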

Pressure Project II

Posted: December 16, 2016 Filed under: Pressure Project 2, Robin Ediger-Seto, Uncategorized

For our second pressure project, we were tasked with creating a single-person experience with an interface. As I was already thinking about story for my final project, I wanted to integrate intimacy and the idea of stories told by the audience. I wanted to create a space in which the viewer was prompted to tell a story and then became the audience for other stories about the same topic. I also wanted to use an unconventional tactile interface.

In the end, I created a system that watched for one of my shirts and used its color to determine whether it was present. If the shirt was present, it triggered text on the screen instructing the viewer to tell a story. The story would then be recorded to the computer and played back along with all the other stories after the party was over. In theory this would work, but in practice it mostly failed. I didn’t give myself the time and space to calibrate the computer’s recognition of the shirt under new lighting conditions, and I hadn’t quite figured out how to use Isadora’s capture to disk actor.
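The missing calibration step could look something like this: sample the shirt's pixels under the current lighting, average them into a reference color, and call the shirt present when enough pixels fall within a color-distance tolerance of that reference. All names and thresholds here are hypothetical, and this is plain Python rather than Isadora's actors.

```python
import math
from typing import List, Sequence, Tuple

RGB = Tuple[float, float, float]


def calibrate(samples: Sequence[Tuple[int, int, int]]) -> RGB:
    """Average RGB of pixels sampled from the shirt under the current lighting."""
    n = len(samples)
    return (sum(p[0] for p in samples) / n,
            sum(p[1] for p in samples) / n,
            sum(p[2] for p in samples) / n)


def shirt_present(pixels: List[Tuple[int, int, int]], reference: RGB,
                  tolerance: float = 40.0, min_fraction: float = 0.2) -> bool:
    """True if enough pixels lie within `tolerance` (Euclidean RGB distance) of the reference."""
    if not pixels:
        return False
    close = sum(1 for p in pixels if math.dist(p, reference) <= tolerance)
    return close / len(pixels) >= min_fraction


ref = calibrate([(200, 20, 20), (210, 30, 10)])
print(ref, shirt_present([(205, 25, 15)] * 3 + [(0, 0, 0)] * 7, ref))
# (205.0, 25.0, 15.0) True
```

Re-running `calibrate` whenever the room's lighting changes is the point: the reference color moves with the light instead of staying fixed.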

There were ideas in it that I was very attracted to and will continue to work on. I really like the idea of a tactile interface being an everyday object, especially when the object holds significance or comfort. I was also interested in the different stories that might emerge from someone simply talking into a computer.

Pressure Project 3

Posted: December 15, 2016 Filed under: Uncategorized

For Pressure Project 3, I used a die with 2 colors to activate different interactivity modes. First, I created a square, two-sided (red and blue) paper die on a piece of string.

I introduced the die to the viewer and asked her to experiment with it by showing it to the camera. To see the “trick,” she needed to match the right side of the die to the right side of the camera. I divided the screen into two halves with the crop object and assigned one color to each side: blue is tracked only on the left side of the camera/screen, and red only on the right.
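The split-screen rule can be sketched by averaging the color in each half of the frame (similar in spirit to cropping and then measuring color) and testing the channels against ranges. The frame format, channel ranges, and function names are assumptions; averaging a whole half also assumes the die fills a good part of that half, since a small die would be diluted by the background.

```python
from typing import Dict, List, Tuple

Frame = List[List[Tuple[int, int, int]]]


def average_color(frame: Frame, x0: int, x1: int) -> Tuple[float, float, float]:
    """Mean (R, G, B) over columns x0..x1-1 of every row, like measuring one cropped half."""
    pixels = [p for row in frame for p in row[x0:x1]]
    if not pixels:
        return (0.0, 0.0, 0.0)
    n = len(pixels)
    return (sum(p[0] for p in pixels) / n,
            sum(p[1] for p in pixels) / n,
            sum(p[2] for p in pixels) / n)


def in_range(value: float, lo: float, hi: float) -> bool:
    return lo <= value <= hi


def detect_modes(frame: Frame) -> Dict[str, bool]:
    """Blue only counts in the left half, red only in the right half."""
    width = len(frame[0])
    mid = width // 2
    lr, _, lb = average_color(frame, 0, mid)
    rr, _, rb = average_color(frame, mid, width)
    return {
        "blue_mode": in_range(lb, 120, 255) and in_range(lr, 0, 100),
        "red_mode": in_range(rr, 120, 255) and in_range(rb, 0, 100),
    }


frame = [[(20, 30, 200), (20, 30, 200), (100, 100, 100), (100, 100, 100)]]
print(detect_modes(frame))  # {'blue_mode': True, 'red_mode': False}
```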

The blue side activates a different sound and blue visuals; the color picker also triggers a type object that displays “ ” along with a whispering soundtrack in the background. The red side activates a jackpot! An illustration whose scale changes based on the movement value, together with a clapping soundtrack, indicates the “win.”

I used the die to symbolize two different image and sound results presented to the viewer. Red symbolizes a winning theme, while blue symbolizes a random/neutral trigger, with murmuring and whispering sounds in the background. I intended blue to encourage the viewer to keep trying to find the right side, and red to act as the finish point of the game (with a win). It seemed to work with the audience: they found both the red and the blue sides.

I tried to come up with a simple dice idea that let the object move freely and flexibly in front of the camera. Color coding seemed to work well, and it is an important technique to use and learn in visual programming. In addition to using the chroma key object, I used the inside range and measure color objects for the first time. The measure color object works great, especially for measuring exact RGB pixel values. I am surprised how much I learned from a dice concept. A die can be anything that triggers different functions.

Here is the Isadora file: karaca_pp3-izz

Two-sided paper die

The video is captured from the screen’s perspective, so when we see the red side of the die in the video, the camera is recognizing the blue side, and vice versa.