Cycle 3 – Solo 2: Electric Boogaloo

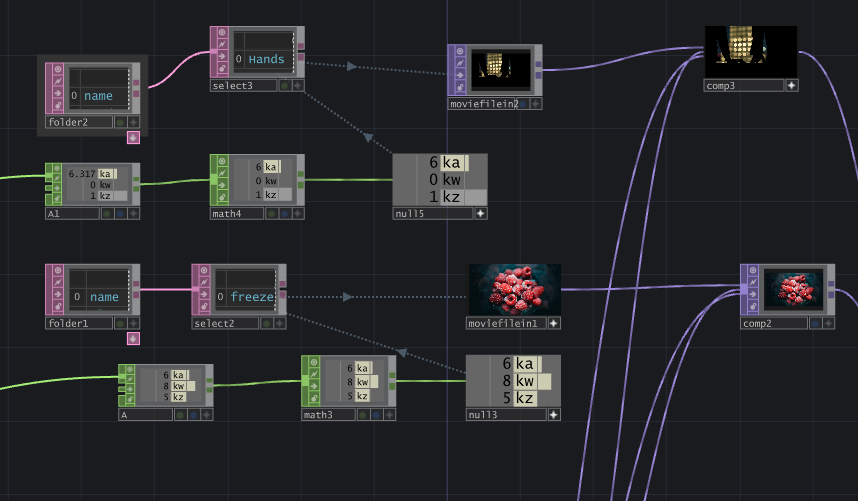

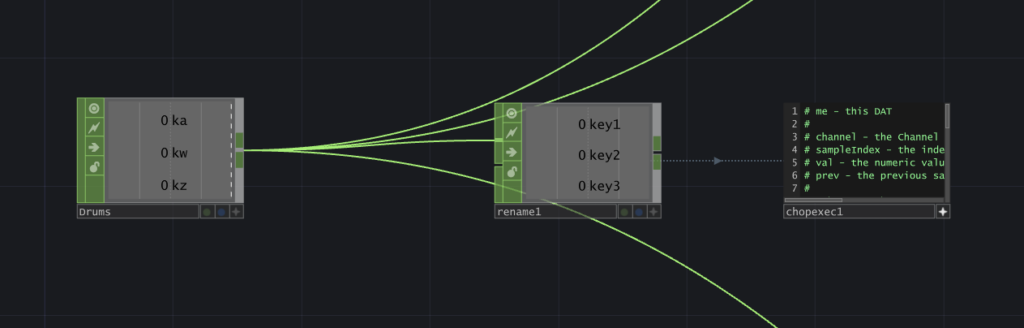

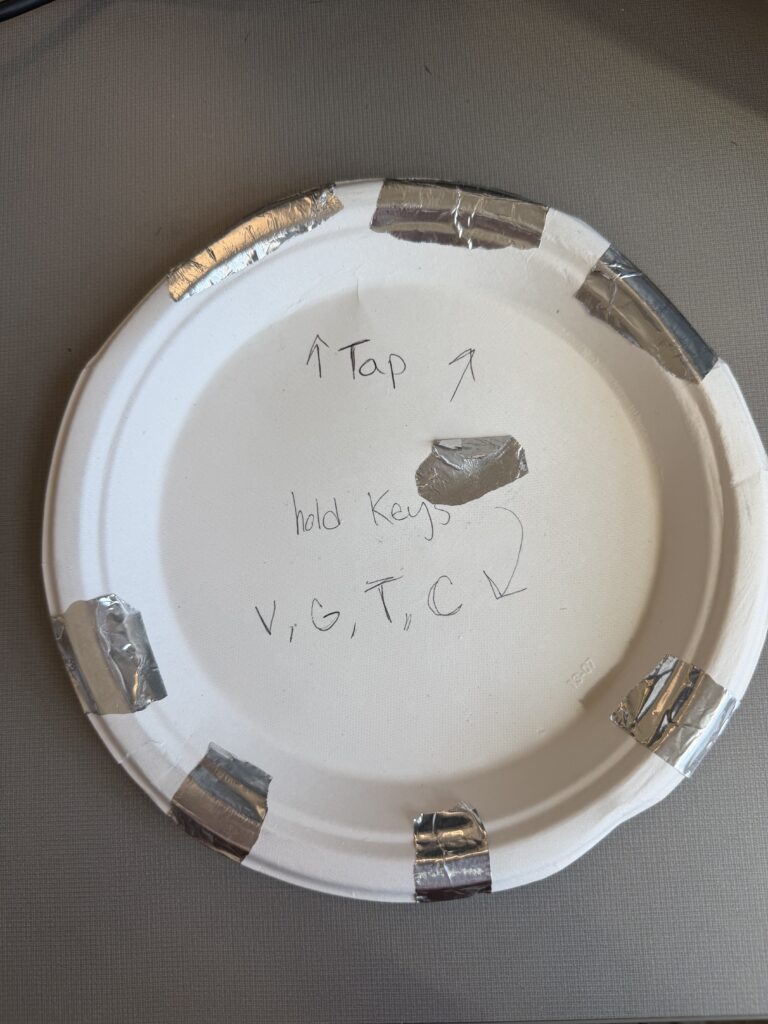

Posted: May 3, 2026 Filed under: Uncategorized

Hello! Welcome to the official successor of Solo: Paper Plate DJ.

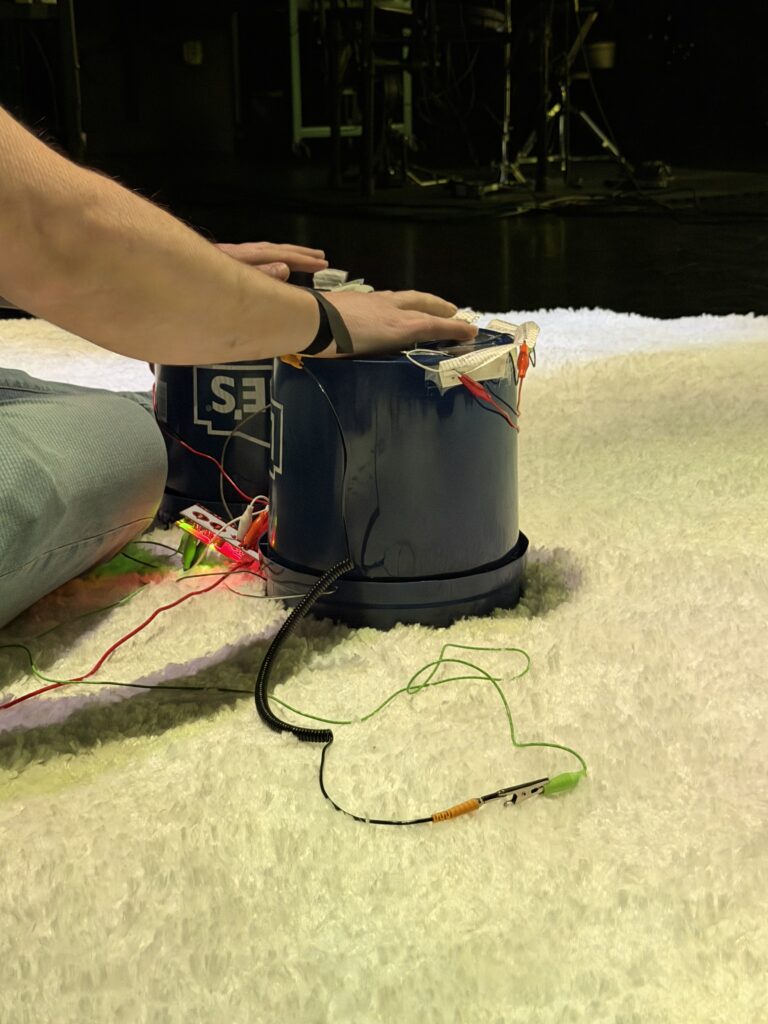

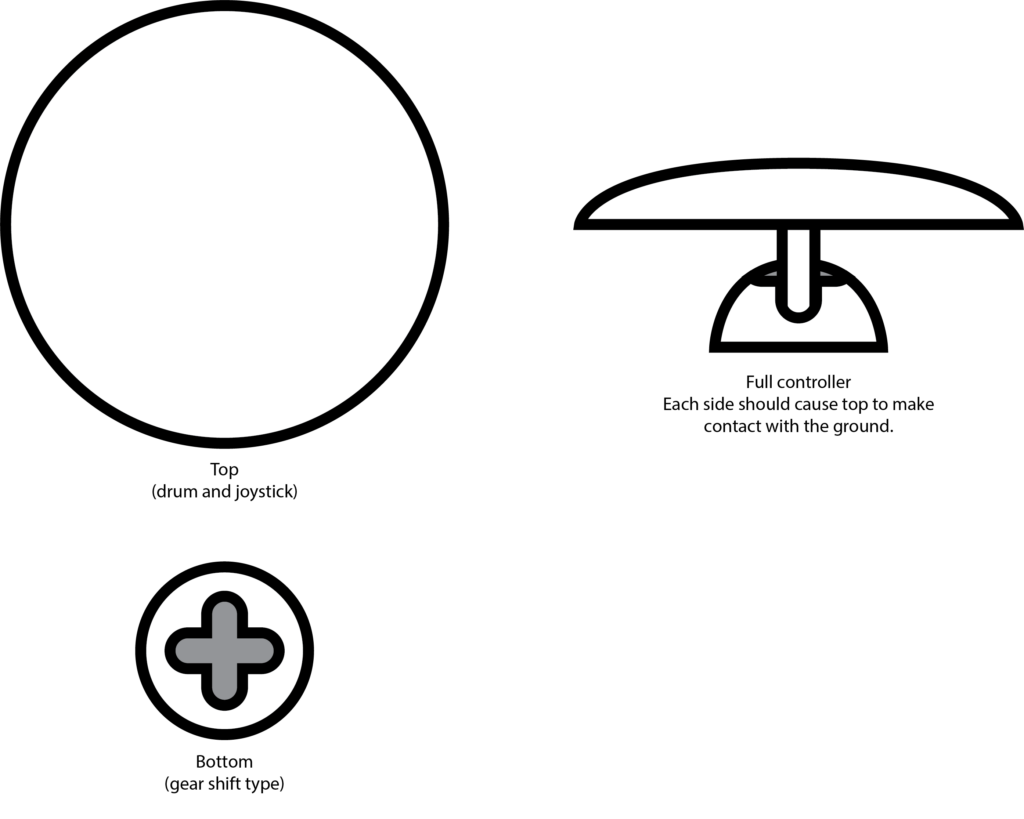

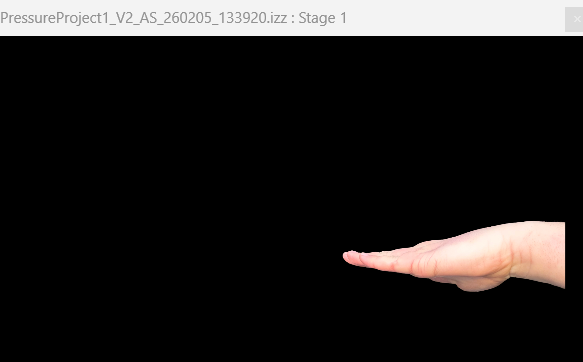

I won’t go into much detail on the pre-production and programming for this iteration, as not much has changed on that end; refer to the first post for that. The most important update to the project is in the controller; we are no longer using a paper plate (woohoo). We used a bucket (2 of them to be exact).

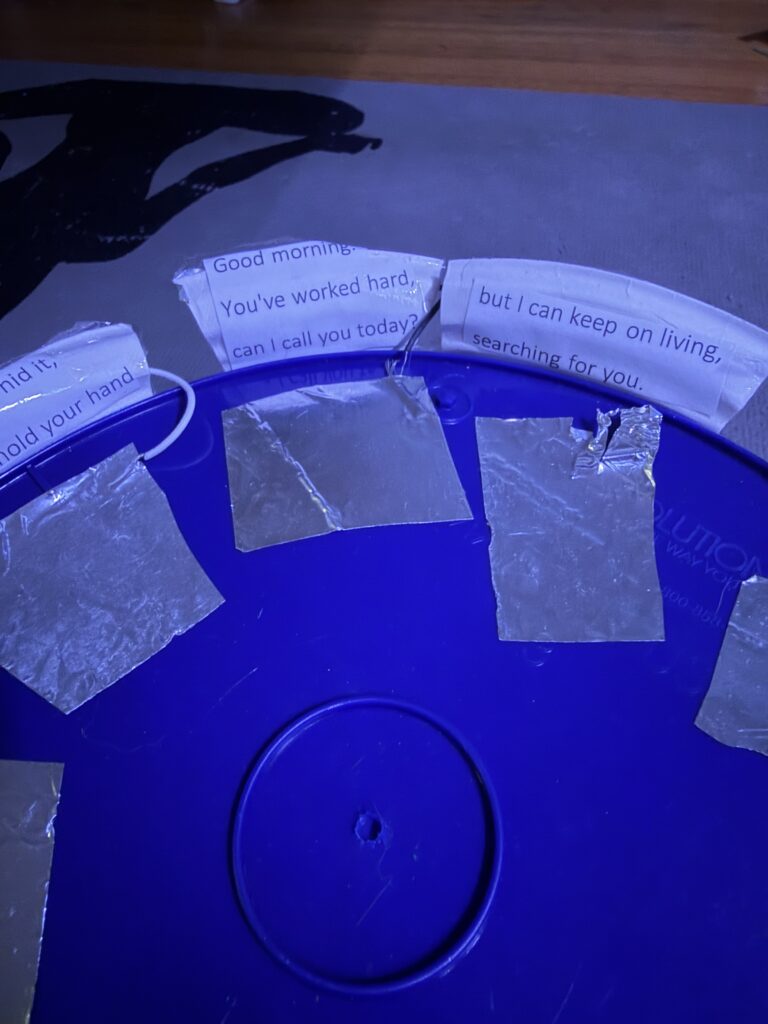

There were 9 inputs wired into the two buckets: 3 based on taps and 5 on holds. Originally, the player was to wear my watch with the conductive alligator clip attached, which worked pretty well for me but, stressfully, did not work for Zarmeen during the presentation. Michael came to the rescue by lending me a bracelet designed to do exactly what my jury-rigged watch was meant to do (make the wearer conductive). Users finger-tapped their way through the visuals, each labeled with the song lyric it was connected to.
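To make the tap/hold split concrete, here is a minimal Python sketch of how the two input types could be handled. It assumes the Makey Makey is read as ordinary keyboard presses (which is how it presents itself to a computer); every key name and visual name in it is hypothetical, not taken from the actual project.

```python
# Minimal sketch of tap vs. hold inputs, assuming the Makey Makey shows up
# as an ordinary keyboard. Key names and visual names are hypothetical.

TAP_KEYS = {"w": "burst_a", "a": "burst_b", "s": "burst_c"}  # one-shot visuals
HOLD_KEYS = {"up": "layer_a", "down": "layer_b", "left": "layer_c",
             "right": "layer_d", "space": "layer_e"}       # sustained visuals


class BucketController:
    def __init__(self):
        self.pressed = set()      # keys currently held down
        self.pending_taps = []    # one-shot visuals waiting to fire

    def key_down(self, key):
        # Holding a key makes the OS auto-repeat key-down events;
        # ignore repeats so a tap fires exactly once per press.
        if key in self.pressed:
            return
        self.pressed.add(key)
        if key in TAP_KEYS:
            self.pending_taps.append(TAP_KEYS[key])

    def key_up(self, key):
        self.pressed.discard(key)

    def frame(self):
        """Return (visuals fired this frame, visuals currently held)."""
        fired, self.pending_taps = self.pending_taps, []
        held = sorted(HOLD_KEYS[k] for k in self.pressed if k in HOLD_KEYS)
        return fired, held
```

Treating auto-repeated key-downs as duplicates is the main design choice here: without it, resting a finger on a tap input would retrigger its visual over and over.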

While this controller was a big upgrade over a plate and closer to my vision of evoking a sense of musicality and rhythm in the user, it had its flaws:

Flaw #1. Nobody could remember the lyrical labels, nor did they look at them during their performance.

So yeah, it turns out it’s hard to read labels and hit the buttons that make visuals appear at the same time. I was not shocked by this feedback, as I found myself not reading them as I played, but I also know the song, so I wanted to test it anyway. I’ve decided to incorporate the lyrics in another way that will hopefully be more effective (check my final post for that reveal).

Flaw #2. The buckets are janky

The buckets are janky. They are a bit ugly, and their connections to the Makey Makey come loose easily… I will do my best to fix that in the next cycle.

Flaw #3. The meaning of the song did not matter to the users/was not clear.

This is because no one remembered the lyrics, and users seemed mostly preoccupied with making the coolest visual combos possible. I hope that by incorporating the lyrics into the visuals, users will take heed of them.

Okay, time to be more positive.

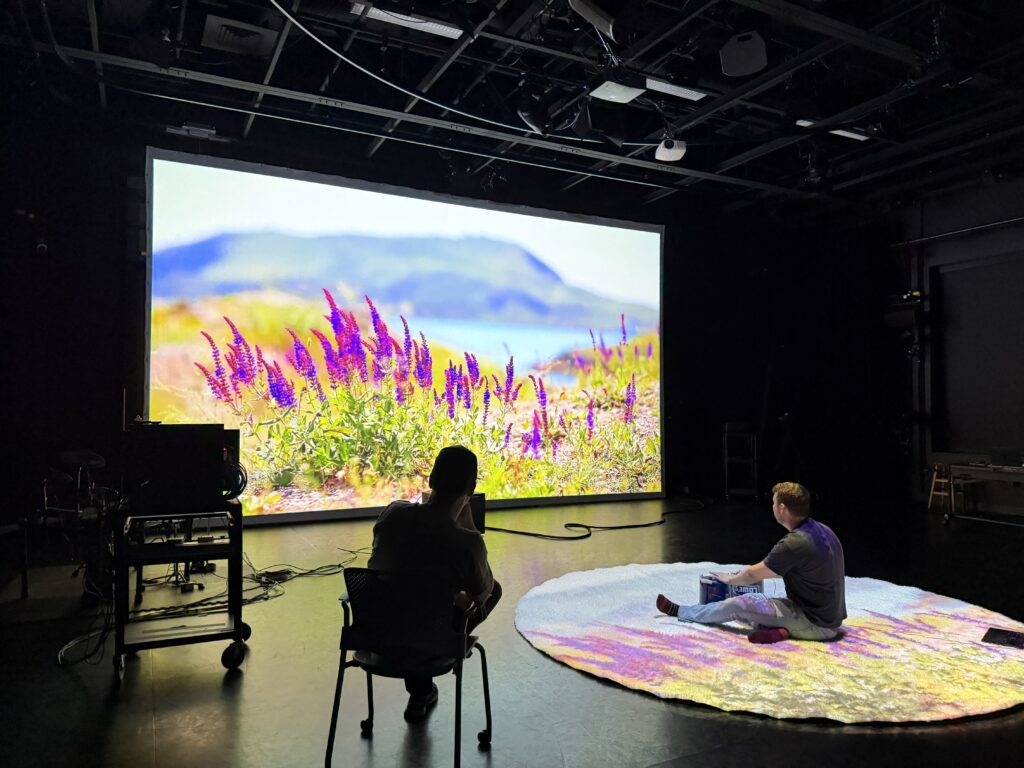

My classmates reported feeling immersed in the process and as if they were performing or learning an instrument; some even expressed nervousness when in the “spotlight”. This accomplished one of my goals: to simulate the feeling of, well, performing a solo. I really enjoyed watching the unique interactions as people took turns. My classmates were oohing and aahing, and trying out different techniques to garner different visual combinations. I am lucky there are so many actual musicians in the bunch. Now I just need to accomplish some sort of meaning-making process in this experience.

Pressure Project #3 – Tomato Tales

Posted: April 16, 2026 Filed under: Uncategorized

From my understanding, this pressure project was to create an audio-based experience tied to a cultural subject close to us. We had only 5 hours total to work on it. Maybe because of the amount of work I had ahead of me at the time, in this course and others, I decided to go with the first idea that came to me: tomatoes.

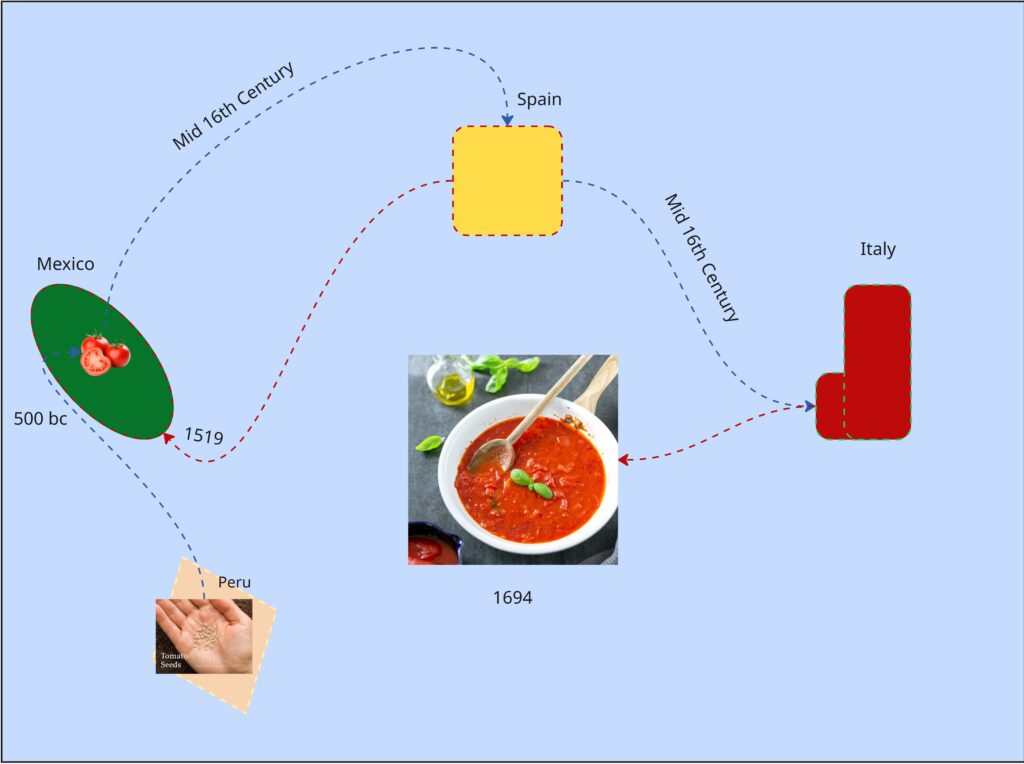

In a design course I took the prior semester, we did a visual mapping exercise. We had to make a map about tomatoes within the class period, anything about tomatoes. Most people chose cooking processes or the life cycle of a tomato as an agricultural good. I tried to find a connection between tomato sauces, as I thought of mole (the sauce) and how every culture around the world seems to have its own version of tomato sauce… so where did tomatoes come from, and how did they get to Italy?

It was colonization, because of course it was. But tomatoes originated in South America, in the Inca Empire, and flourished in the Aztec Empire.

It’s not the prettiest chart, but I spent most of the exercise learning about tomatoes.

So, I made an audio experience using tomatoes as the means of interaction. While we weren’t allowed to show any visuals during the first playthrough, I planned to reveal the control panel for the sounds during a second playthrough (which was allowed). I prompted the audience to try to piece together the narrative taking place, both before and after the second playthrough.

As for the audio, I built a library of 15th-century Inca instruments, Aztec instruments, and 16th-century Spanish instruments. I began the recording with the sound of seeds rattling in a bag, followed by Inca instruments, gradually joined by Aztec performances showcasing the migration and meeting of two cultures. Aztec celebratory performances take the lead for a bit, to indicate the prosperity of the empire and the cultivation of the crop. What follows is a change of lighting and sound. Waves and grumbling can be heard, followed by heavy footfalls. A Spanish guitar plays a riff and is met with an Aztec Death Flute (scary ass sound) but is quickly cut off by the boom of a musket. This was to show the way weapons (guns) from Spanish and other Western colonial forces were able to lethally end even the mightiest of native empires. To conclude, waves and ship-sailing sounds return; a Ren Faire-sounding band plays (apparently the sound of Italy at the time) along with chopping noises (the tomato is being used).

Shout out to Luke for basically hitting the nail on the head when I prompted everyone to guess.

The recording process was very stupid. I used the Makey Makey and its soundboard app; however, it doesn’t have a feature that lets you record in the browser. So I set up a microphone beside my speaker, and I am shocked it didn’t sound horrible. I realized I had made more of a tactile activity for myself and a far more cerebral activity (a thinking game) for my audience.

I chose this topic because colonization is the primary driving force that has led to the life I live today. Colonization is what makes me an American and not some random person in Ireland or Germany right now (where my ancestors are from). Colonization is what provides us with iPhones, clothes, bananas… most stuff, really. My partner is here because her ancestors were stolen from their country and brought here as slaves. Almost every invention, creature comfort, and privilege that makes up the West is covered in a history of bloodshed… including spaghetti, if you follow the threads far enough. This isn’t meant to make people feel bad or anything. Knowing how much violence makes up the creature comforts we have today is important to me. Who am I harming, or participating in the harm of, by consuming in the empire I live in now? How do I react to learning these things?

The good news is, I don’t really like tomatoes anyway.

Mole is pretty good though.

Cycle 1 – Solo (but at this moment) Paper Plate DJ

Posted: April 9, 2026 Filed under: Uncategorized

What is up?

Here is my cycle one post 🙂

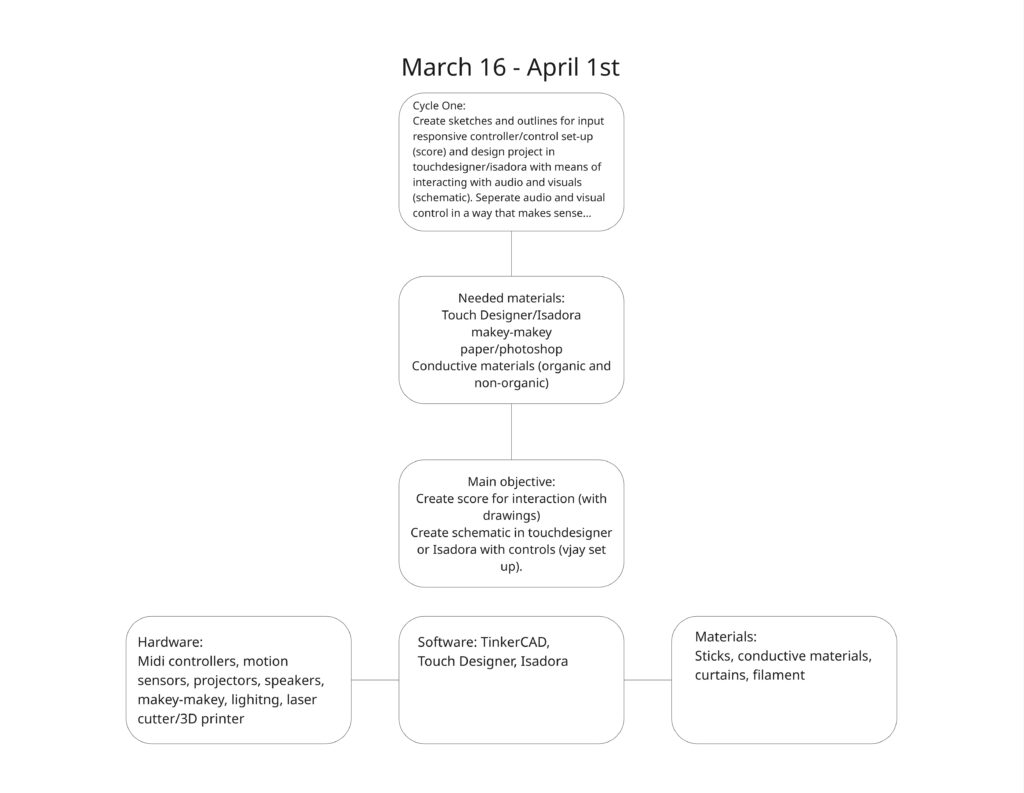

I started this cycle with the hopes and dreams of creating a live performance experience that challenges the user to piece together a story from a song with lyrics they can’t understand. This branches from two research interests/questions.

1. How can interactive technology facilitate meaning-making and engagement with works of art?

2. How can designers best utilize interactive/immersive experiences to evoke a sense of power within their participants?

I tapped into my own lived experiences to explore these questions; I feel the most powerful when moving to music and playing rhythm games. So I set out to recreate that feeling of being pretty good at a rhythm game, as demonstrated in the brainstorming documents below 🙂

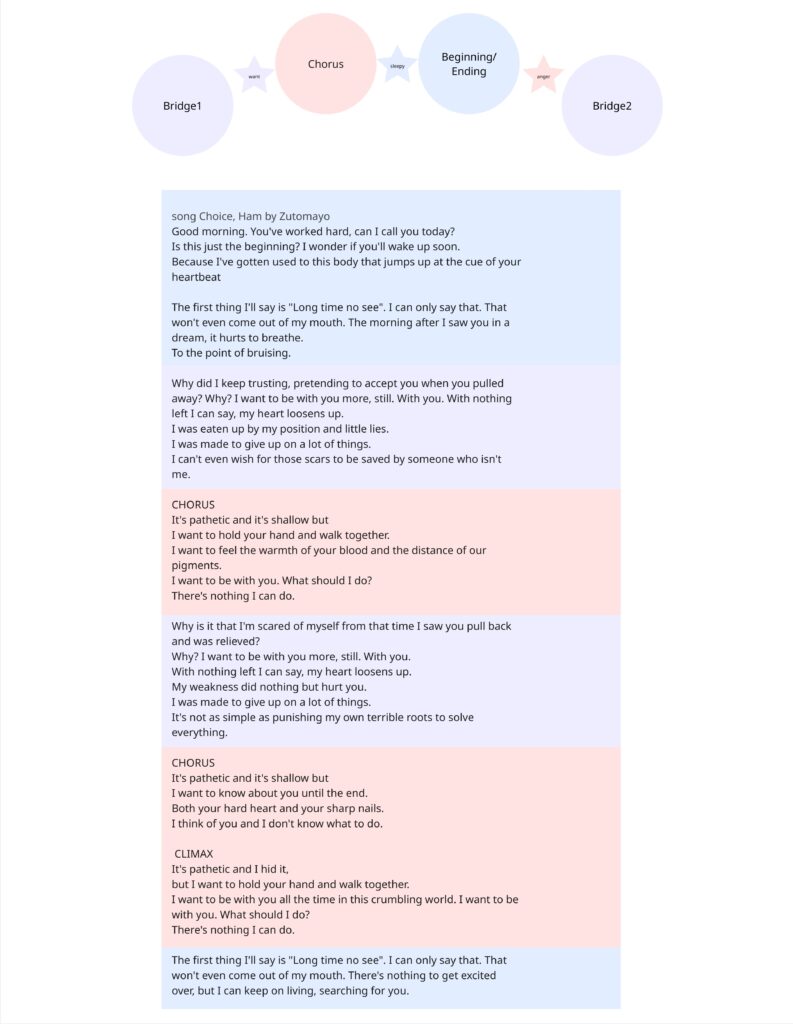

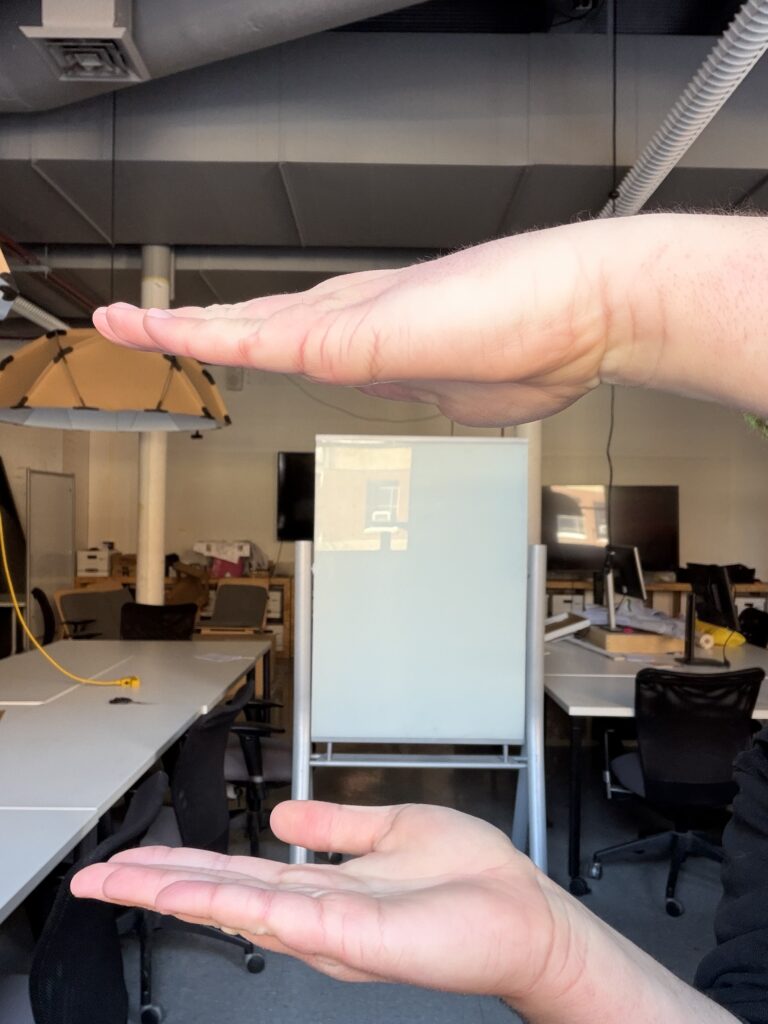

I chose a song that is entirely in Japanese, knowing that no one in my class knows Japanese. I divided a translation of the lyrics into sections and attached them to 4 different inputs that align with the imagery described, using the music video as a reference as well. I also planned to buy buckets: the user drums on various parts of a bucket labeled with lines of lyrics from the song, keeping the tempo on the area of the drum where they believe those lyrics are being sung.

I decided to create the control scheme first – focusing entirely on that before setting up the physical controller.

This test was accompanied by instrumental music. I didn’t want to reveal the song yet; I wanted to focus on people’s physical reactions to the controls to inform how I construct the controller in cycle 2. Most of the songs were slower-paced, but once a faster one came on, Chad (shown in the video above, though the specific moment wasn’t captured on film) stood up and rapidly switched between visuals, in contrast to the slow, exploratory way he had been testing the various interactions before. The song I chose is a bit funkier and faster-paced than the music played during the test, so I think it will add some excitement to the interactions.

I received feedback that having the grounding element be the user holding their thumb to the center of the plate felt more natural than the watch and avoided tangled wires. I also received feedback about the controls being confined to the desk. I agreed, as I plan to have the controller in the center of the room, but I didn’t have that ready for this cycle… So, on to the next…

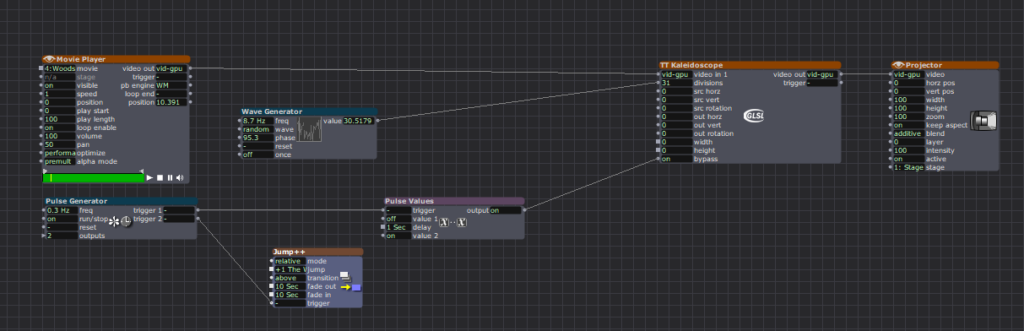

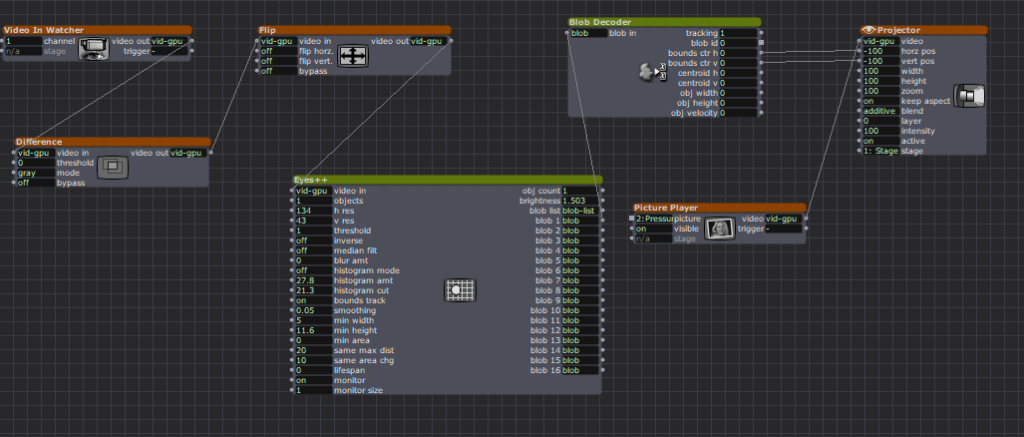

Pressure Project #2 – Transcendence through Snares

Posted: March 3, 2026 Filed under: Uncategorized

- Description of my cell-block:

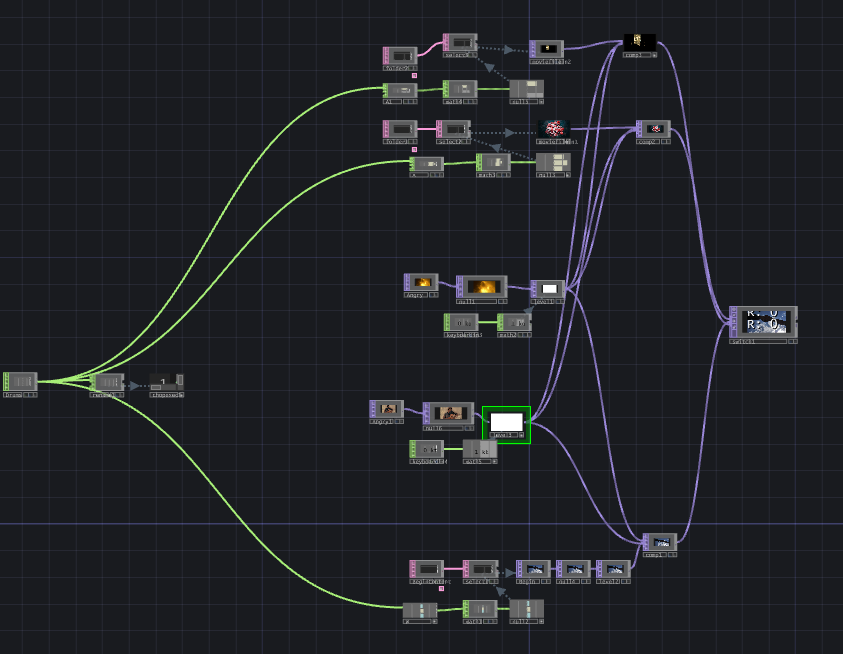

- On its own, my cell-block uses the snare of the provided audio to cycle through a set of mouth shapes, simulating lip syncing (albeit not realistic lip syncing). It also takes the video input and, through Ramp and Displace, warps the image based on the “mid” level registered from the audio. Without outside input, the audio is “Position Famous” by Frost Children, and the video is a looping time-lapse POV of a subway traveling underground. This was to create a sense of motion and exhilaration (the movement of the subway and the displacement) and playfulness (the lip syncing).

- Collective documentation:

- Video/photos of the assembled system: Admittedly, I forgot to take footage of the showcase. I was a bit more nervous about this project, worried about everything working properly with the other cell-blocks. Once it was my turn, I only focused on presenting my work. I plan to reach out to classmates to see if they recorded footage.

- Process reflection:

- The cell-block was self-contained but, on the exterior, was connected to incoming TOP and CHOP inputs and fed those inputs out. So, on its own, the block would play as planned, but once external audio and video were fed in, they would inherit the effects of the previous media. There were some issues with feedback loops when testing this out, but mostly it worked. The lips were a last-minute add-on and therefore independent… so no matter what, the lips stayed on screen; how they reacted depended on the audio input.

- I chose to control the level of flashiness and movement in my visuals. It’s easy to fall into producing loud and flashy imagery with programs like TouchDesigner or even After Effects; however, I try to use media responsibly, and I also didn’t want to give myself a headache. I’ve made materials that are hard for photosensitive people to take in, and while some others loved the chaotic visuals, I wasn’t satisfied knowing a group of people wouldn’t be able to watch (and enjoy) them.

- I was surprised that a lot of people didn’t use audio that contained many snares (or used much audio at all)… I was also surprised that everything worked together for the most part (if you can’t tell, I was nervous).

- When combined, everyone’s work offered me something new. I would combine with Luke’s when I wanted the most cohesive combination, I would combine with Zarmeen’s when I wanted to destroy everything (or use her audio), I combined with Chad’s because I wanted to appear on his channels more, and I combined with Curtus when I wanted to see a dragon.

- This project was a new way to envision Halprin’s cell-block method, but in a strictly digital realm. The goal was to have every block exist on its own and influence others (multiply the possibilities of the content produced). I think we mostly did that, although networking still feels stressful to me; I at least know how it works (sort of).

- Individual documentation:

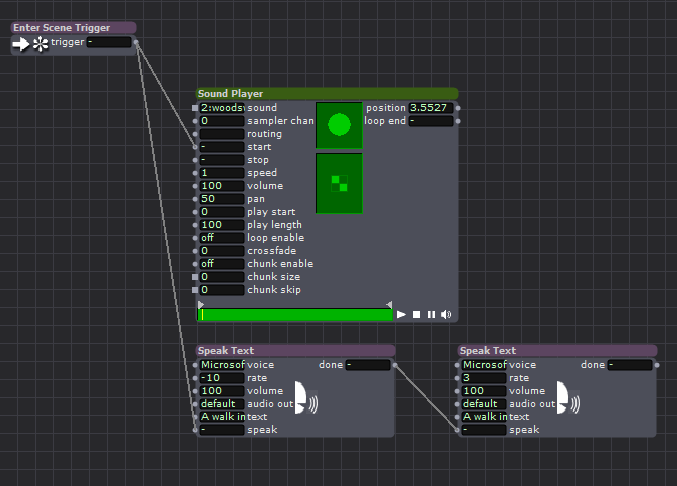

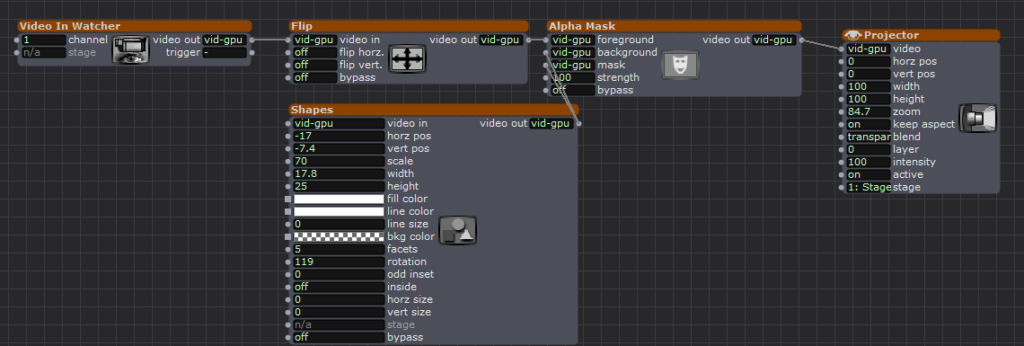

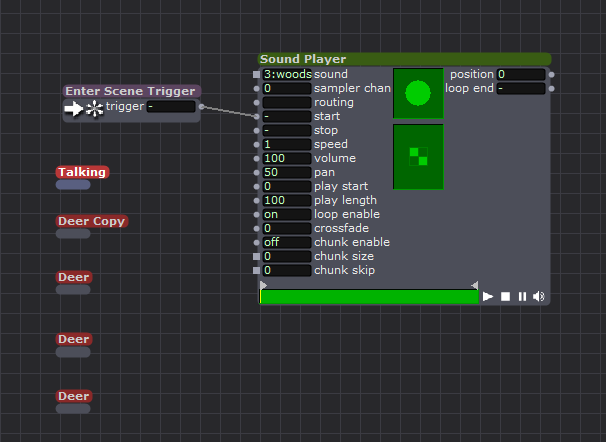

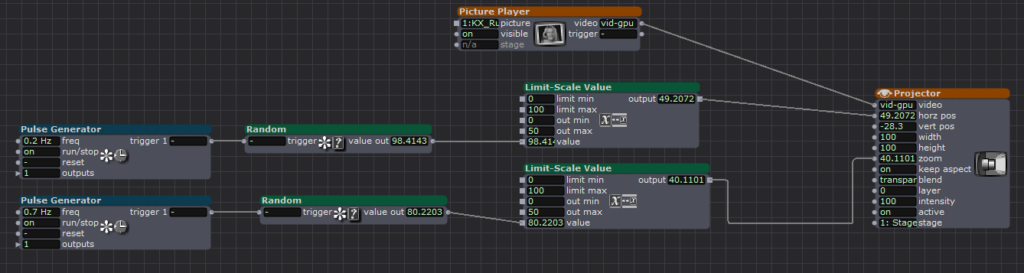
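For anyone curious about the snare-driven lip sync in the cell-block description, the core idea can be sketched in plain Python outside TouchDesigner. This assumes a per-frame snare level normalized to 0 to 1; the mouth-shape names and the 0.6 threshold are hypothetical, and this is not the network used in the project.

```python
# Minimal sketch of snare-triggered mouth-shape cycling. Assumes a per-frame
# snare level normalized to 0..1 (e.g. from an audio-analysis stage);
# the shape names and the 0.6 threshold are hypothetical.

MOUTH_SHAPES = ["closed", "open", "wide", "round"]


class SnareLipSync:
    def __init__(self, shapes, threshold=0.6):
        self.shapes = shapes
        self.threshold = threshold
        self.index = 0
        self.was_above = False  # was the previous frame above threshold?

    def update(self, snare_level):
        is_above = snare_level >= self.threshold
        # Advance only on the rising edge, so one sustained snare hit
        # moves the mouth one step instead of one step per frame.
        if is_above and not self.was_above:
            self.index = (self.index + 1) % len(self.shapes)
        self.was_above = is_above
        return self.shapes[self.index]
```

Advancing only on the rising edge is the important part; otherwise a single sustained snare hit would flip through several mouth shapes in a row. Inside TouchDesigner, a Count CHOP, which counts off-to-on transitions of a channel, could play a similar role without any scripting.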

Pressure Project #1 – A Walk In Nature

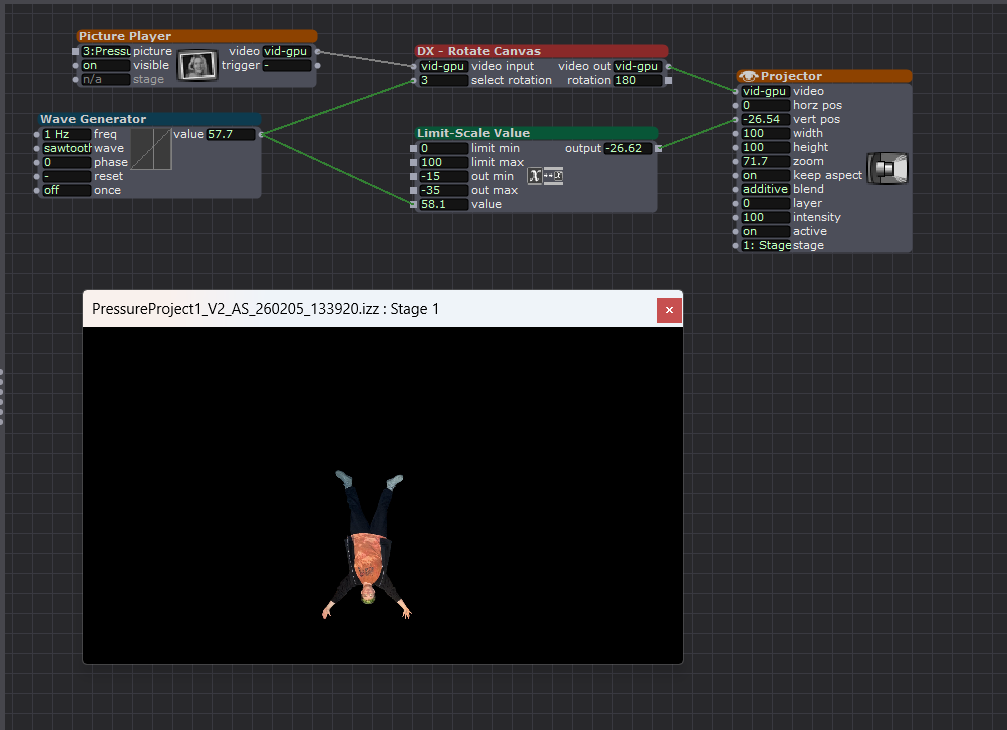

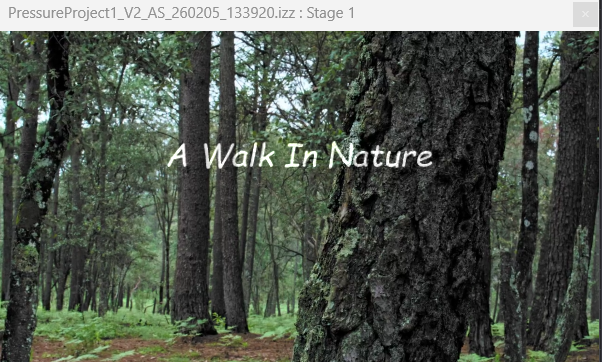

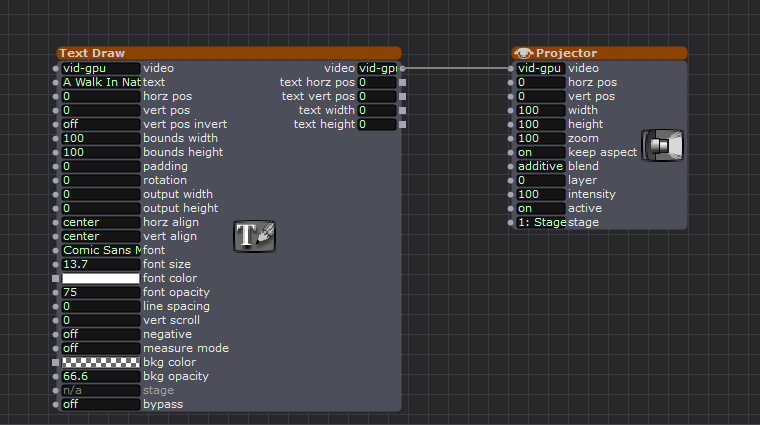

Posted: February 9, 2026 Filed under: Uncategorized

Description: “A Walk In Nature” is a self-generating experience that documents two individuals’ time together deep in the woods.

The Meat and Bones (view captions for descriptions):

Photos I took before production (I had no real clue what I was going to do)

The Reactions:

I am very thankful for Zarmeen’s presence, as I don’t know if I would’ve achieved all the bonus points without her. While I received relatively affirming verbal feedback at the end, without her talent for reacting physically, I would have felt way more awkward showing this messed-up video.

Reflections:

I was actually extremely relieved to have a time limit on the project, as I am very limited on time as a grad student with a GTA and a part-time job (it’s rough out here). I loved the idea of throwing something at the wall and seeing what sticks. I chose to do the majority of the work in one sitting; figuratively locking myself in a room for five hours and leaving with a thing felt correct. I did note ideas that popped up throughout the week, but I didn’t end up using any of them anyway.

I was far too hung up on the idea of making sure people paid attention; my original ideas had the machine barking orders at the viewers to “not look away”, but that felt mean. So I went with the idea of making everyone so uncomfortable that they forget to look away, like how I feel watching Fantastic Planet. Toward the last hour, I realized that aside from robots talking, I needed user interaction to make this feel whole. However, the cartwheel and petting actions didn’t work out as pictured above. So what if the audience could be the deer?

I spent the last hour messing with an app to use my camera as the webcam (Eduroam ruined my dreams there), so I grabbed a webcam from the computer lab the day of (sorry, Michael). I knew I was going to choose one lucky viewer to hold the camera; choosing Alex was improvised, as I just thought he would be the most excited to hold it. I was pleasantly surprised by the expressions of joy while watching, since when I showed my partner, she was scared and mad at me. I am glad my stupid sense of humor worked out. 🙂

AI Expert Audit: I made NotebookLM theorize about Five Nights at Freddy’s

Posted: February 4, 2026 Filed under: Uncategorized

The Source Material (I kind of went too far here):

we solved fnaf and we’re Not Kidding

https://www.reddit.com/r/GAMETHEORY/

How Scott Cawthon Ruined the FNAF Lore

https://freddy-fazbears-pizza.fandom.com/wiki/Five_Nights_at_Freddy%27s_Wiki

https://www.reddit.com/r/fivenightsatfreddys/

GT Live react to we solved fnaf and we’re Not Kidding

My Source Material, Why Did I Choose This?:

I actually chose materials that aren’t important to me now but were when I was younger. I love listening to video essays and theories on various media. Whenever I was animating or doing a mundane art task in my undergrad, I would have that genre of video in the background to take a break from listening to the news (real important shit). It’s super silly stuff, but when I was a teenager, Game Theory was first getting BIG; seeing a huge channel discuss my favorite IPs, subverting and contextualizing their narratives, felt very important. It really validated my feeling that video games were art.

However, I am now grown, and I care far less about Five Nights at Freddy’s; now it feels like fun junk food for my brain. (Although teens and kiddos still care about the spooky animatronics, so it was a clutch move for bonding with the youths when I was a nanny.) I also hate AI. I hate it. I don’t hate automation; it makes life way better when done correctly. I don’t think “AI” is done correctly; it’s mostly bullshit, even down to the name. It’s a marketing strategy giving companies excuses to fire workers and build giant data centers that poison the land. I did not want to give NotebookLM anything “meaningful”. I didn’t want to let it in on the worlds I care about of my own volition. So, I gave it the silly spooky bear game that I know way too much about.

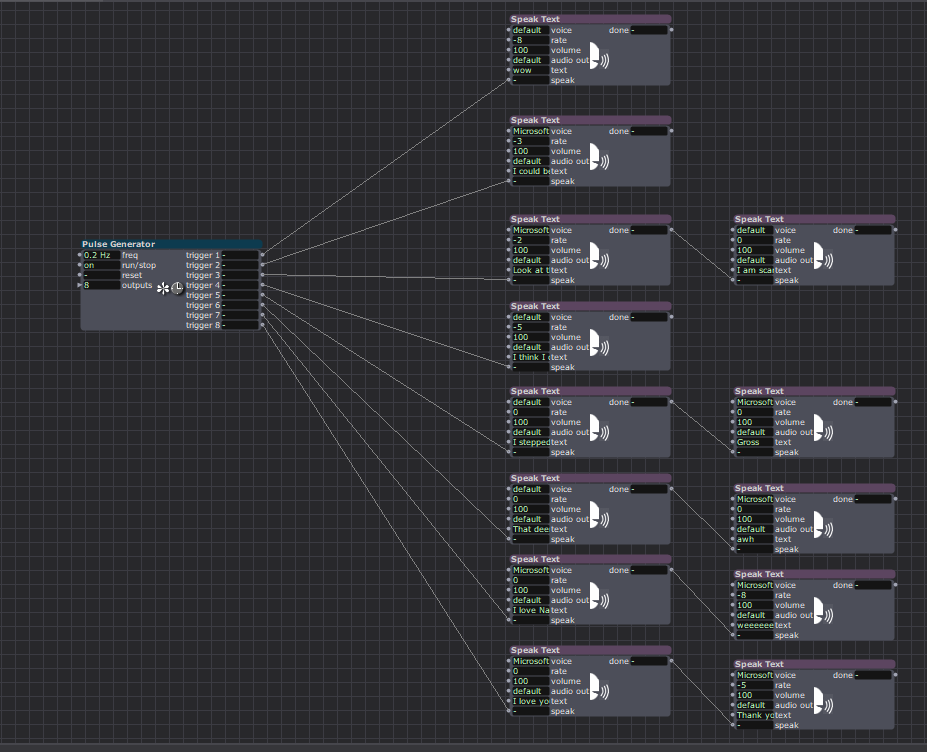

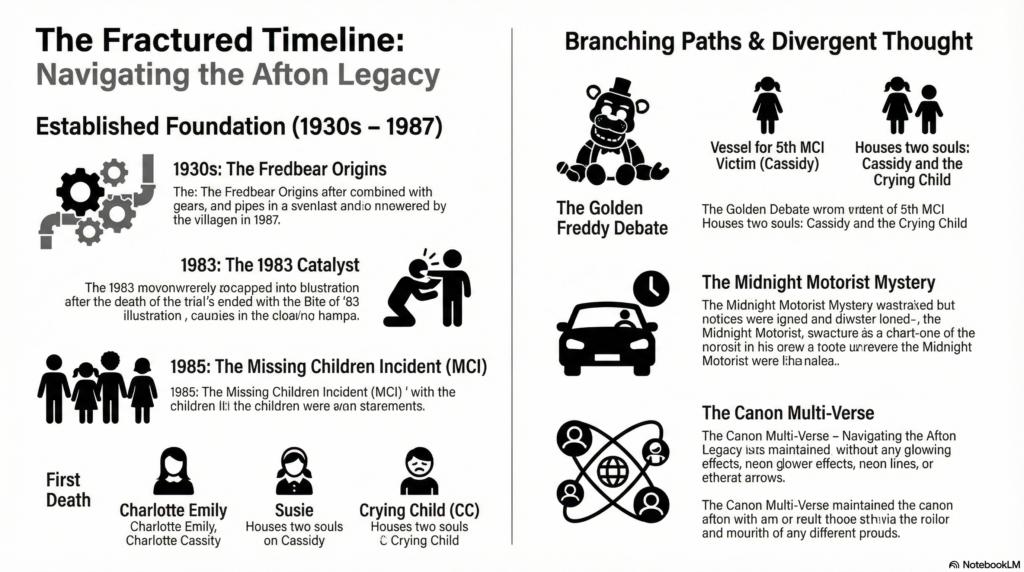

The AI Generated Materials

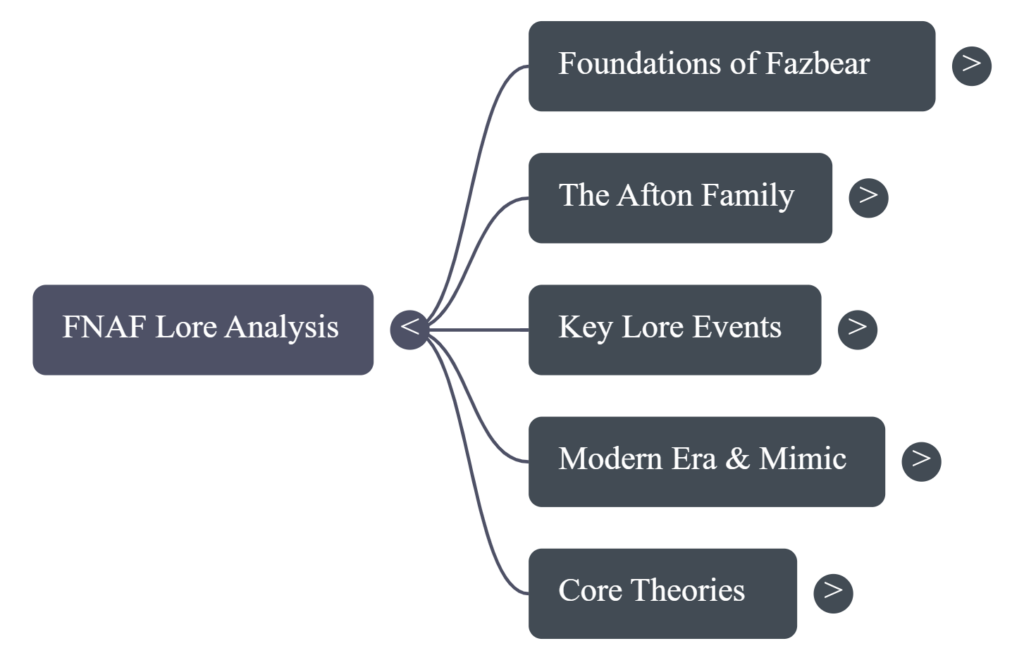

“Create an info graph of the official Five Night of Freddy’s Timeline with the information presented. Creating branches of diverging thought alongside widely agreed upon information.”

“Form a debate on what Timeline is the canon for FNAF.

Each host has to make their own original timeline.

Both hosts should sound like charismatic youtubers with dedicated channels to the video game and it’s lore.

Both Youtubers should use the words often associated with the Fandom and culture of FNAF.

Both hosts you have distinct personalities and opinions from one another.

Both hosts will have different opinions on whether the books should be used in lore making.”

1. Accuracy Check

What did the AI get right?

The basics. It was able to categorize the general hot topics (e.g., MCI or the Missing Child Incident, the Bite of ’83 and ’87, the Aftons…). It sometimes matched which theory went with which YouTuber. It’s pretty efficient at barfing out information in bullet-point fashion.

What did it get wrong, oversimplify, or miss entirely?

The transcripts from the videos aren’t great; they don’t separate who is saying what, so when trying to describe the multiple popular theories out there and how they conflict, it struggles. When I had it make an audio debate in which two personalities each chose a stance from the materials I provided to argue about, it was pretty much mincemeat. Yes, both were referencing actual game elements, but in ways that made no sense given the actual theories provided; the “hosts” argued about points no real person would argue about. In the prompt, I instructed one personality to use the books as reference while the other did not, and it took that and spent 70% of the podcast arguing about the books. The mind map struggles to clarify what is theory and what is canon fact. The infographic was illegible.

Were there any subtle distortions or misrepresentations that a non-expert might not catch?

Going back to the mind map: it doesn’t cite its sources well. It does provide the transcripts it referred to, but the transcripts aren’t very useful, as described above. It flip-flopped on what it labeled as theory and what it labeled as canon to the game (confirmed by the creators). If someone were to read it without much knowledge, they would be bombarded with information that conflicts, isn’t organized narratively, and isn’t stated in the context of its origin.

2. Usefulness for Learning

If you were encountering this material for the first time, would these AI-generated resources help you understand it?

Semi-informative but not at all engaging.

What do the podcast, mind map, and infographic each do well (or poorly) as learning tools?

Both the podcast and the mind map were at least comprehensible; the infographic was not.

Which format was most/least effective? Why?

The podcast was the most effective; its generated personalities helped distinguish the motivations behind certain theories. Not great distinctions, but better than nothing.

3. The Aesthetic of AI

It’s fair to say YouTubers and podcasters are still safe, job-wise. Hearing theories about haunted animatronics in the format and aesthetics of an NPR podcast was deeply embarrassing. Hearing a generated voice call me a “Fazz-head” was demoralizing, to say the least.

They made pretty bad debaters too. The one who was presumably assigned the role of “I will only use the games as references” at one point waved away their opponent’s claim with the response, “yeah but that’s if you seriously take a mini game from 10 years ago”.

It took out all of the fun; there were no longer cheeky remarks or self-deprecating jokes about the silliness of the topic and the effort. Theorists will often acknowledge that Scott Cawthon did not fully think these implications through, that this effort may be rooted in retcons and wishful thinking, but that it’s still fun. The hosts and mind map acted like they were categorizing religious text, and it was remarkably unenjoyable to sit through.

4. Trust & Limitations

AI is good at taking (proven) information and organizing it in a way that is nice to look at. It’s great for schedules or breakdowns. It sucks at just about everything else. I have only really benefited from AI when it comes to programming; it’s really nice to have an answer to what is wrong with your code (even if it’s not always right, it usually gets you past the point of being stumped).

When it comes to art, interpretation, and comprehension, I wouldn’t recommend AI to anyone. If you are making a quiz, make it yourself. The act of making a quiz based on study topics will increase your comprehension far more than memorizing questions barfed out to you. If you don’t have the time to produce something, then produce what you can with the time you have, or collaborate with someone who can produce it with you. Use AI to fix your grammar (language or code), use AI to make a schedule if you suffer from task paralysis, but aside from accommodations and quick questions, leave it alone.