Cycle 1 - Solo (but at this moment) Paper Plate DJ

Posted: April 9, 2026

What is up?

Here is my cycle one post 🙂

I started this cycle with the hopes and dreams of creating a live performance experience that challenges the user to piece together a story from a song with lyrics they can’t understand. This branches from two of my research questions:

1. How can interactive technology facilitate meaning-making and engagement with works of art?

2. How can designers best utilize interactive/immersive experiences to invoke a sense of power within their participants?

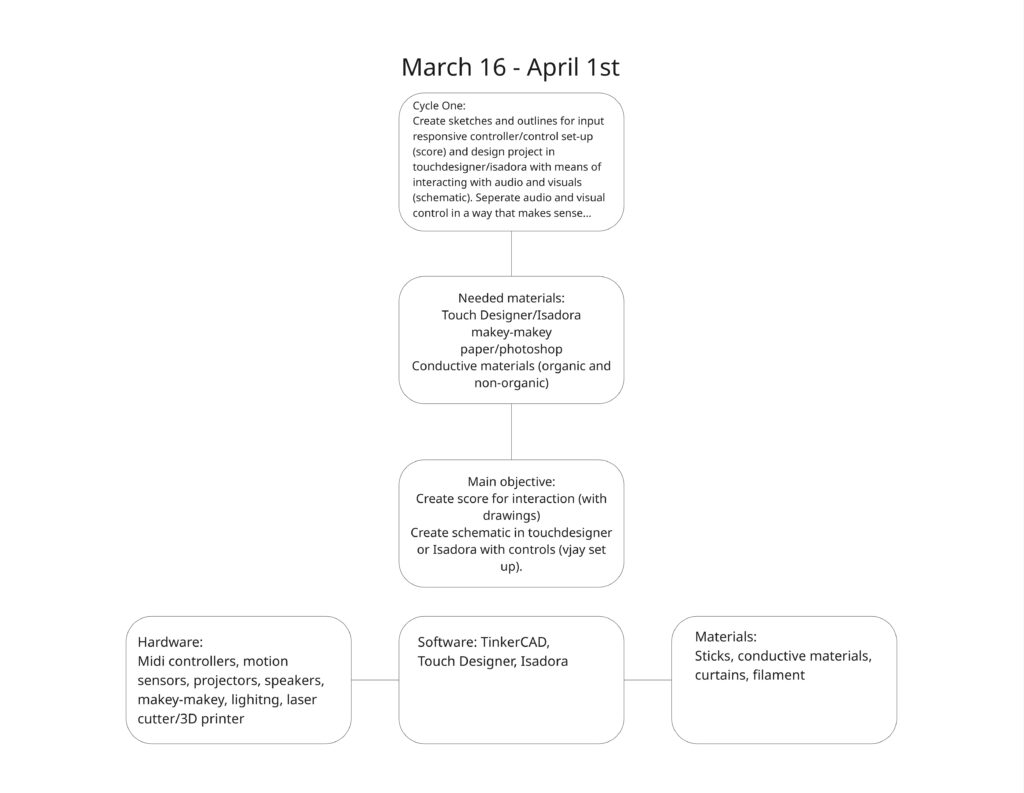

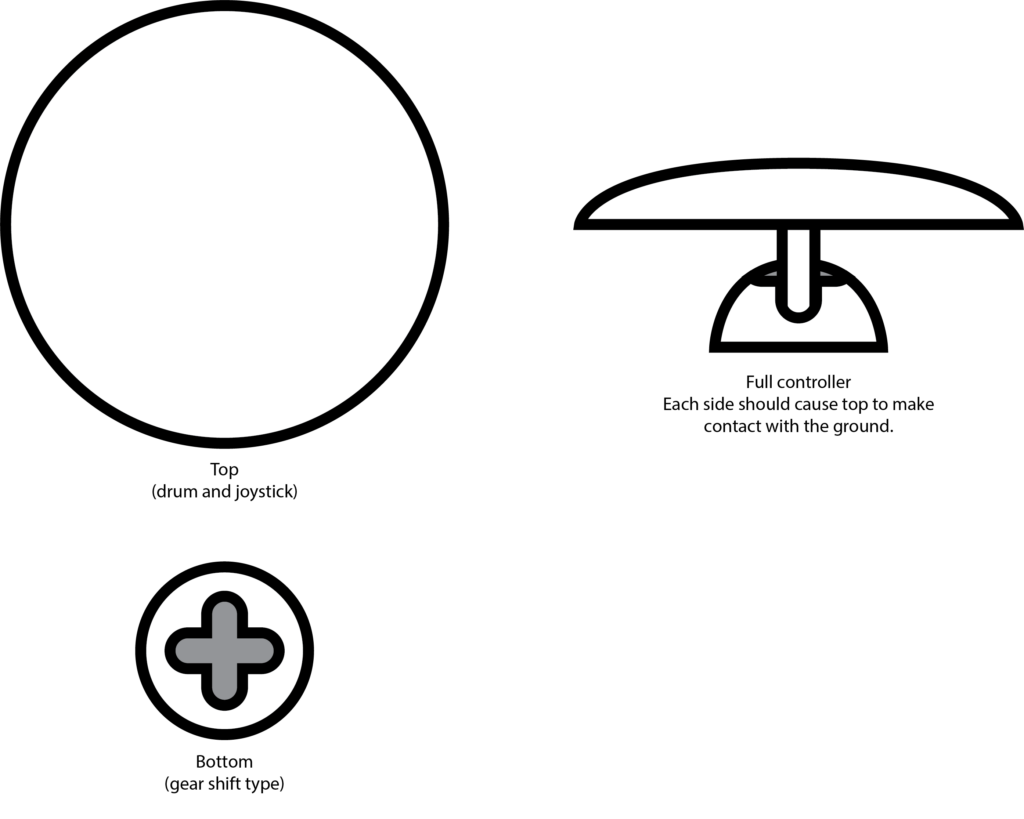

I tapped into my own lived experiences to explore these questions; I feel the most powerful when moving to music and playing rhythm games. So I set out to recreate that feeling of being pretty good at a rhythm game. You can see this in the brainstorming documents below 🙂

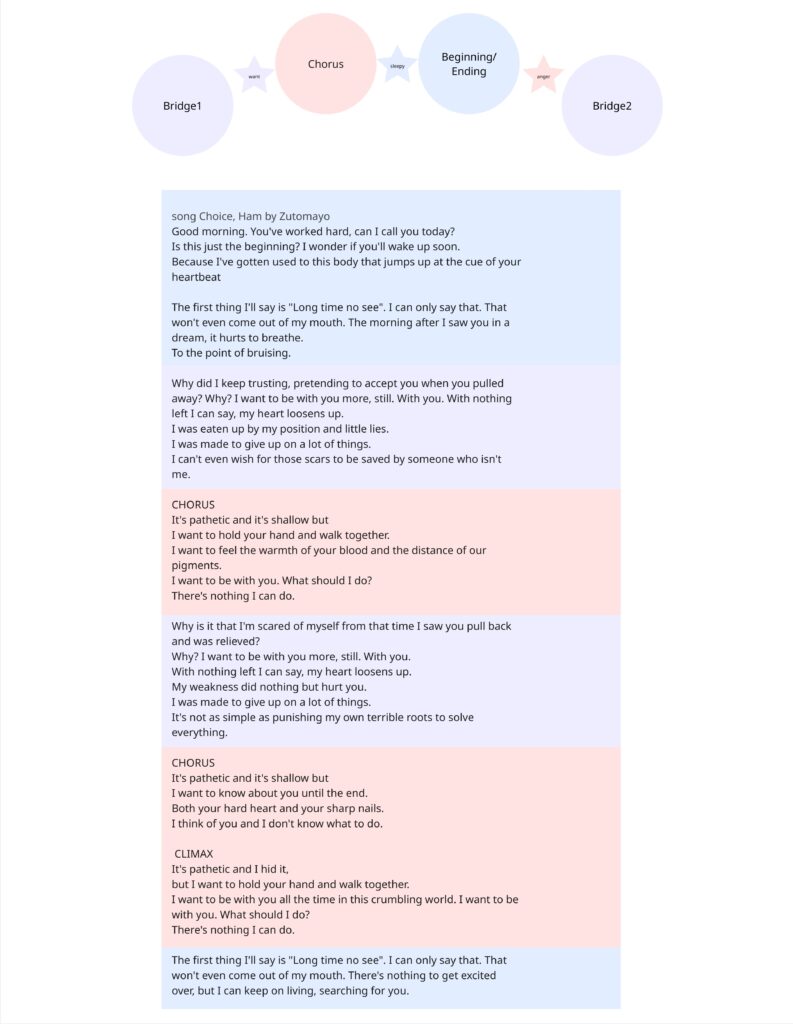

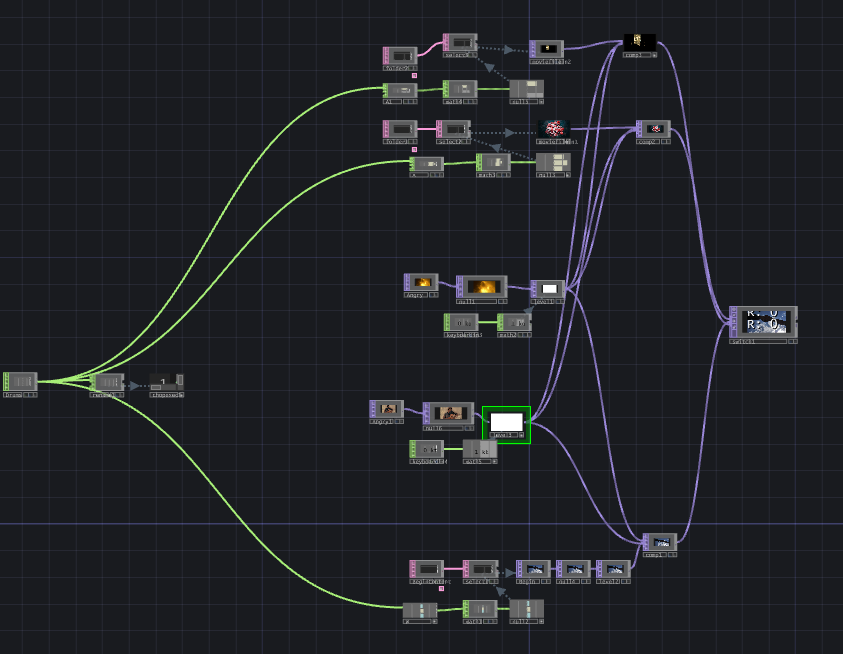

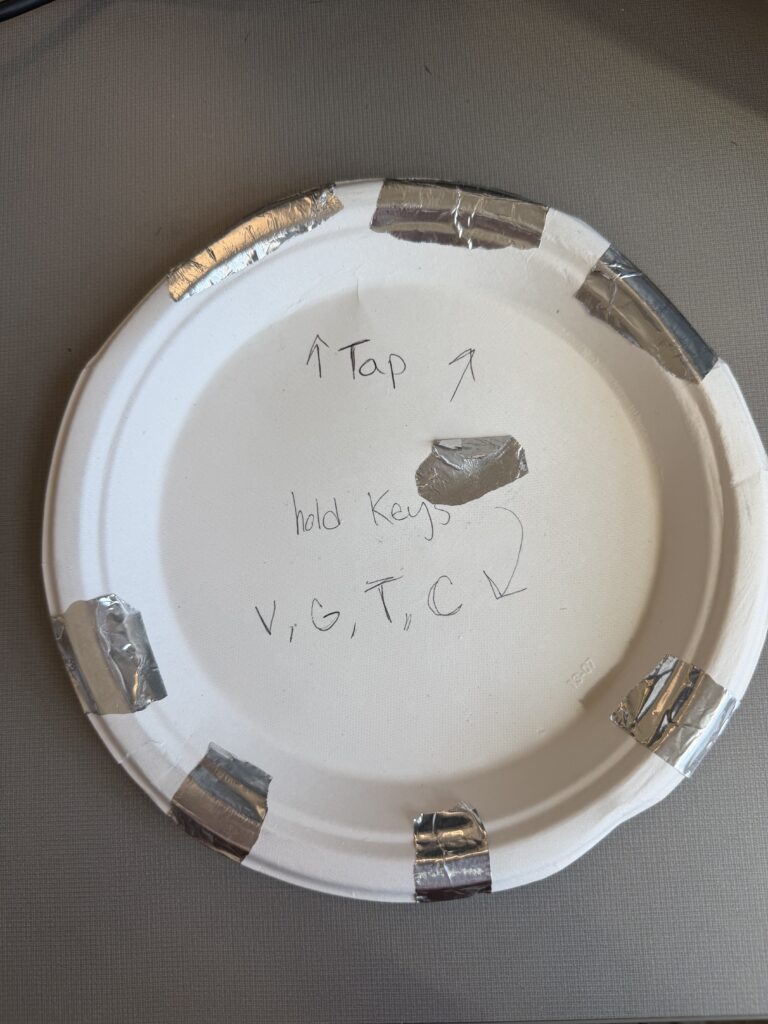

I chose a song that is entirely in Japanese, knowing that no one in my class speaks Japanese. I split a translation of the lyrics into sections and attached them to 4 different inputs that align with the imagery described, using the music video as a reference. I also planned to buy buckets: the user would drum on various parts of a bucket that are labeled with lines of lyrics from the song, keeping the tempo in the area of the drum where they believe those lyrics are being sung.

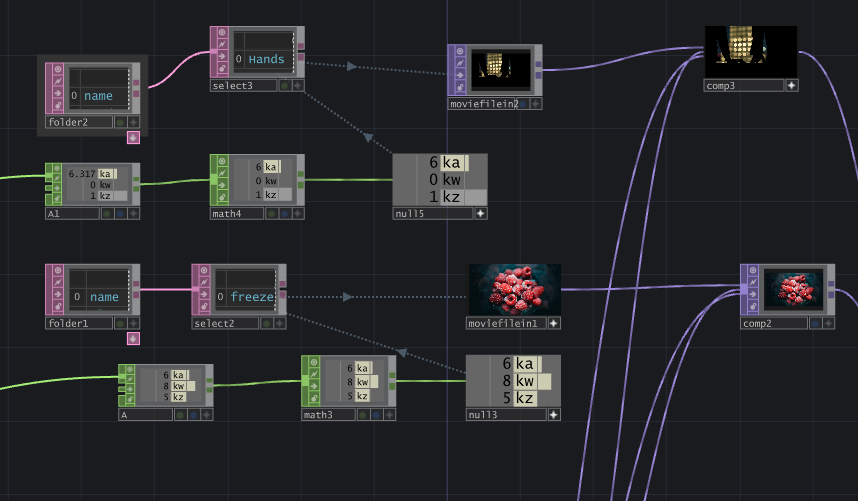

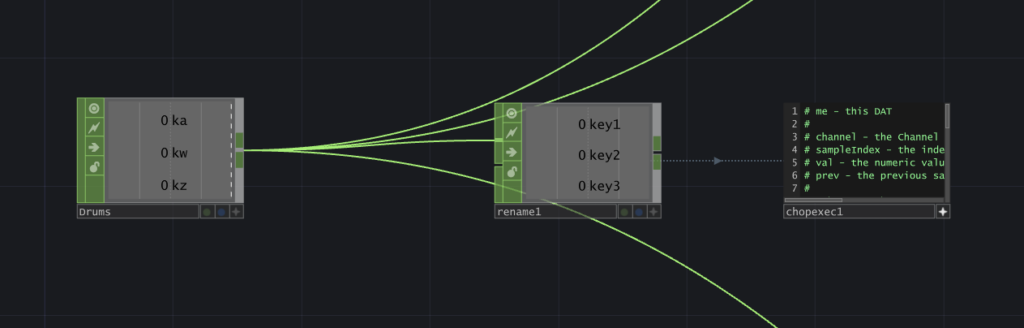

I decided to create the control scheme first – focusing entirely on that before setting up the physical controller.

This test was accompanied by instrumental music. I didn’t want to reveal the song yet, and I wanted to focus on users’ physical reactions to the controls so they can inform how I construct the controller in cycle 2. Most of the songs were slower-paced, but once a faster one came on, Chad (shown in the video above, though that specific moment wasn’t captured on film) stood up and rapidly switched between visuals, in contrast with the slow, exploratory manner he had shown earlier while testing the various interactions. The song I chose is a bit funkier and faster-paced than the music played during the test, so I think it will add some excitement to the interactions.

I received feedback that having the user ground themselves by holding their thumb to the center of the plate felt more natural than the watch and avoided tangled wires. I also received feedback that the controls were confined to the desk. I agree, as I plan to have the controller in the center of the room, but I didn’t have that prepared for this cycle… So on to the next…