Pressure Project 2: One for All, All for One

Posted: March 9, 2026 Filed under: Pressure Project 2 | Tags: Pressure Project, Pressure Project 2, touchdesigner

I named my cell One for All, All for One because it is built around the idea that individuals and communities as a whole are constantly shaping each other. The cell itself is a constant conversation between the self and the communal archive. It takes a live video feed and layers it over a slideshow of images showing communities and people from different parts of the world.

Then interactive sound enters the picture. A glitch effect driven by audio input levels determines how much the live video overlay fractures. The louder the audio, the more the live layer breaks apart and reveals the slideshow underneath. Alongside this, I built in an internal LFO paired with an Edge TOP to create a rhythmic pulse, something I called a “heartbeat.” Even without external input, the system keeps running and feels alive.
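As a rough illustration of the two behaviors described above, here is a minimal Python sketch of an audio level driving a glitch amount, plus a free-running pulse. It is not taken from the patch: the function names, the `floor` and `ceiling` thresholds, and the 1.2 Hz rate are all assumptions chosen for the example.

```python
import math

def rms_level(samples):
    """Root-mean-square level of one block of audio samples in -1..1."""
    if not samples:
        return 0.0
    return math.sqrt(sum(s * s for s in samples) / len(samples))

def glitch_amount(level, floor=0.05, ceiling=0.5):
    """Map an audio level to a 0..1 glitch strength.

    floor and ceiling are assumed thresholds: below floor nothing
    fractures, above ceiling the live layer is fully broken apart.
    """
    t = (level - floor) / (ceiling - floor)
    return max(0.0, min(1.0, t))

def heartbeat(time_s, rate_hz=1.2):
    """Internal LFO: a 0..1 sine pulse that needs no external input."""
    return 0.5 + 0.5 * math.sin(2.0 * math.pi * rate_hz * time_s)

quiet = glitch_amount(rms_level([0.01, -0.02, 0.01, -0.01]))
loud = glitch_amount(rms_level([0.8, -0.9, 0.7, -0.8]))
```

Here `loud` comes out larger than `quiet`, which is the "louder audio, more fracture" relationship. In the real patch that value would drive the glitch effect rather than be stored in a variable.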

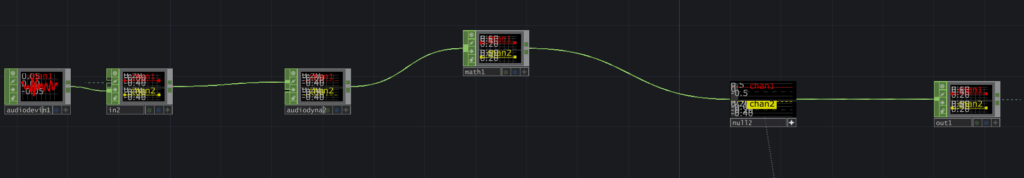

The structure is modular and layered, and honestly not that complicated. A live video input and a media player (which controls the slideshow) plug into a switch. The output of the switch feeds a glitch system and a pulse system, either of which could be replaced without breaking the overall logic. The audio input, live video input, and LFO signal are designed so that an external network signal can override them. The cell has its own system, but it is designed to connect, following the true concept of one for all, all for one.
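The override idea can be sketched in a few lines of Python. This is only an illustration of the fallback logic, assuming each input is a single number and that a missing network signal is represented by `None`. The names `resolve_inputs`, `audio`, `video`, and `lfo` are hypothetical.

```python
def resolve_inputs(internal, external):
    """Return the signal each input should use.

    internal: dict of the cell's own signals, e.g. {"lfo": 0.4}
    external: dict of network signals; a missing key or a None value
              means nothing is arriving for that input.
    """
    resolved = {}
    for name, own_value in internal.items():
        incoming = external.get(name)
        # Compare against None so that a legitimate 0.0 still overrides.
        resolved[name] = own_value if incoming is None else incoming
    return resolved

own = {"audio": 0.2, "video": 1.0, "lfo": 0.4}
network = {"lfo": 0.0, "audio": None}
active = resolve_inputs(own, network)
```

With these values the cell keeps its own `audio` and `video` but takes `lfo` from the network, even though the incoming value is zero.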

Reflection:

When all the cells were assembled, things became unstable. Signals were constantly dropping and connections were dying. For a while, I thought something was wrong with my cell because nothing would show up (it turned out to be a problem with the input signals I was getting). When I finally got it to work, emergent behaviors appeared. The glitches danced to different rhythms. Video overlays ended up in unexpected stacked outputs.

I had designed my system knowing that it had to be plugged into a bigger system. However, I did not envision the results I got during testing. What I controlled alone became either amplified or distorted by others. The network did not just combine outputs. It reshaped them. I think where my careful planning fell short was the heartbeat. I had not accounted for the fact that other cells can have signals of different types. Instead of a steady pulse, I got an irregular signal input, which changed the whole heartbeat effect.

At first it felt like something went wrong. My cell was no longer just reacting to my inputs. It was reacting to everyone. That is exactly what One for All, All for One means. Each cell affects the others. Each signal influences the collective behavior. My cell had a life of its own. In the network, it learned to respond, adapt, and sometimes surrender to the collective.

Project File: pp2_Zarmeen.zip

Pressure Project 2 – The Flipper

Posted: March 3, 2026 Filed under: Uncategorized

Description

The Flipper is a TouchDesigner patch that uses an audio input to create video, and uses a video input to create audio. When used in a network this “cell-block” acts independently by creating entirely new audio and video, instead of just modifying the audio and video it receives. Its modularity lies in its ability to provide other users in the cell-block network with new sources of audio and video, that are themselves generated from other audio and video over the network.

Collective Documentation

Pending

Individual Documentation

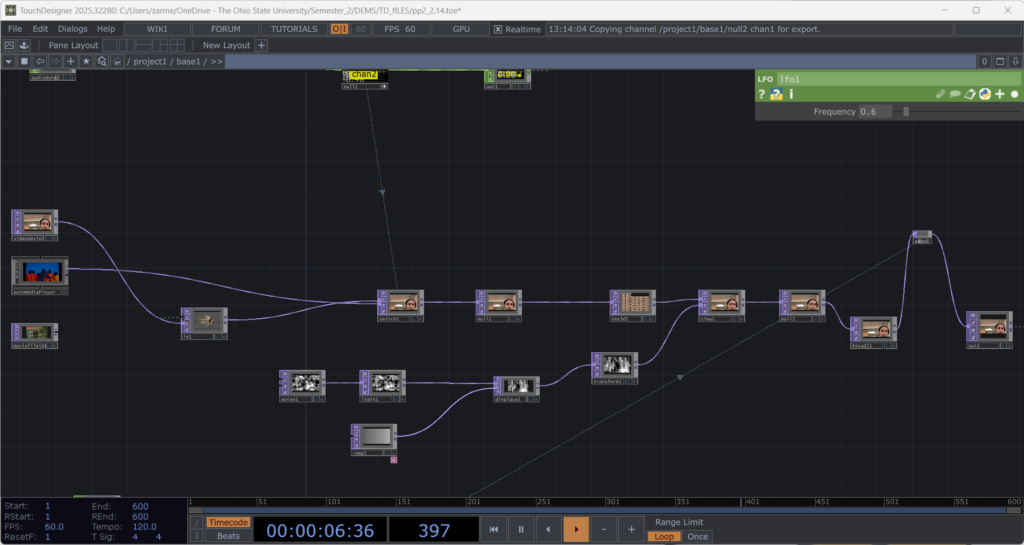

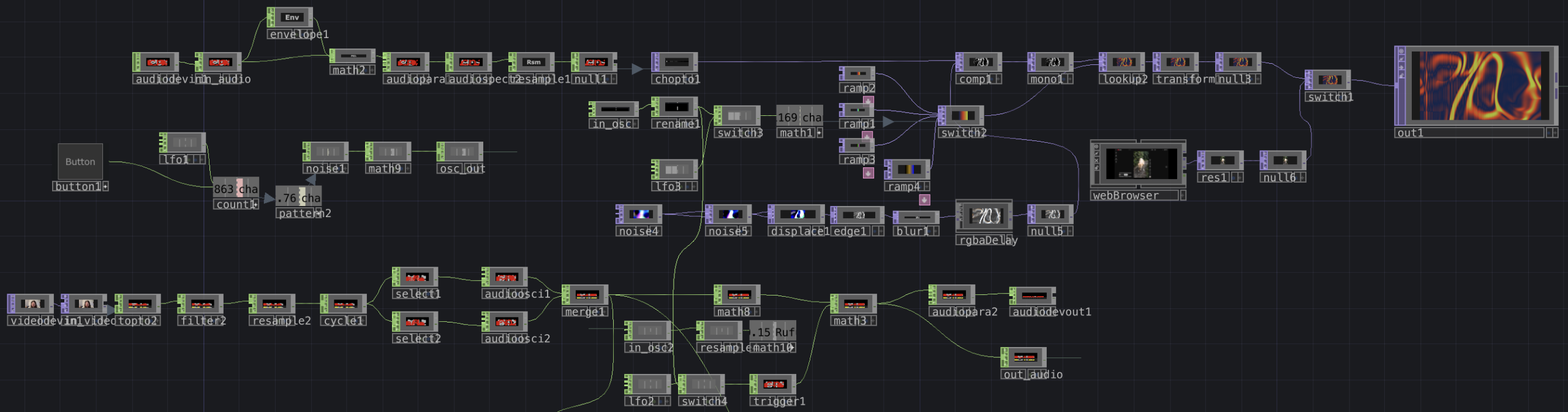

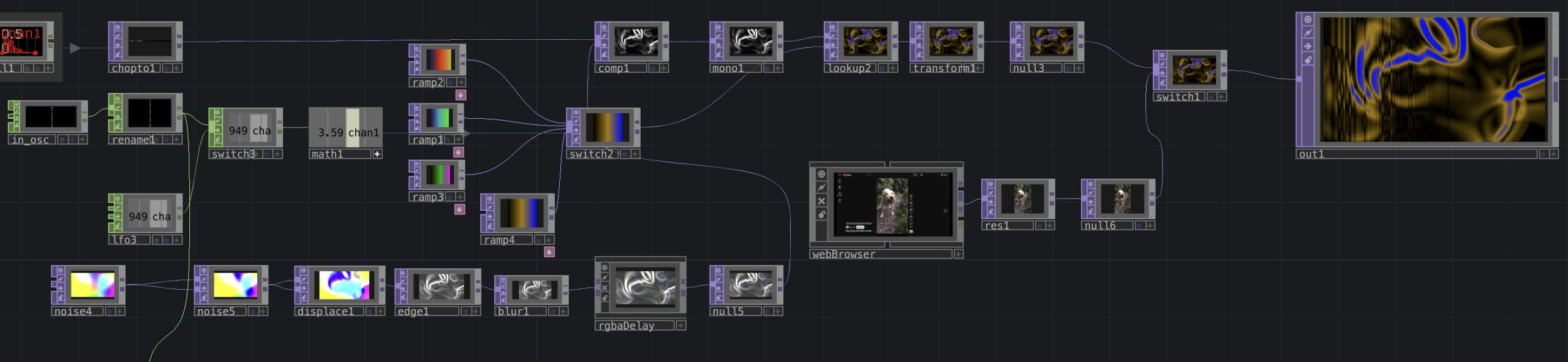

Overview of my cell-block’s network. This is connected to three inputs and outputs on the outside of the container, which connect to other cell-blocks on the network. While there’s a lot on screen, it breaks down into a few simple sections.

This portion of the network takes in audio from over the network through the in_audio CHOP. The envelope and math objects slow the stream of data, and the audioparaeq object boosts high frequencies. The result is turned into a spectrogram and sent directly to a chopto TOP.
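For readers unfamiliar with these two ideas, here is a minimal Python sketch of smoothing a signal with an envelope follower and of computing a magnitude spectrum. It assumes a simple one-pole smoother and a naive DFT. It does not reproduce the CHOPs' actual behavior or the order in which the patch chains them, and the `smoothing` value is an arbitrary choice.

```python
import cmath
import math

def envelope(samples, smoothing=0.9):
    """One-pole envelope follower: tracks |signal| and changes slowly."""
    level = 0.0
    out = []
    for s in samples:
        level = smoothing * level + (1.0 - smoothing) * abs(s)
        out.append(level)
    return out

def magnitude_spectrum(samples):
    """Naive DFT magnitudes for bins 0..n/2, normalized by n."""
    n = len(samples)
    bins = []
    for k in range(n // 2 + 1):
        acc = sum(samples[t] * cmath.exp(-2j * math.pi * k * t / n)
                  for t in range(n))
        bins.append(abs(acc) / n)
    return bins

# A sine that completes 4 cycles in 32 samples should peak in bin 4.
tone = [math.sin(2.0 * math.pi * 4 * t / 32) for t in range(32)]
spectrum = magnitude_spectrum(tone)
peak_bin = spectrum.index(max(spectrum))
```

Each column of a spectrogram is one such spectrum, computed on a short slice of audio and drawn as a strip of pixels.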

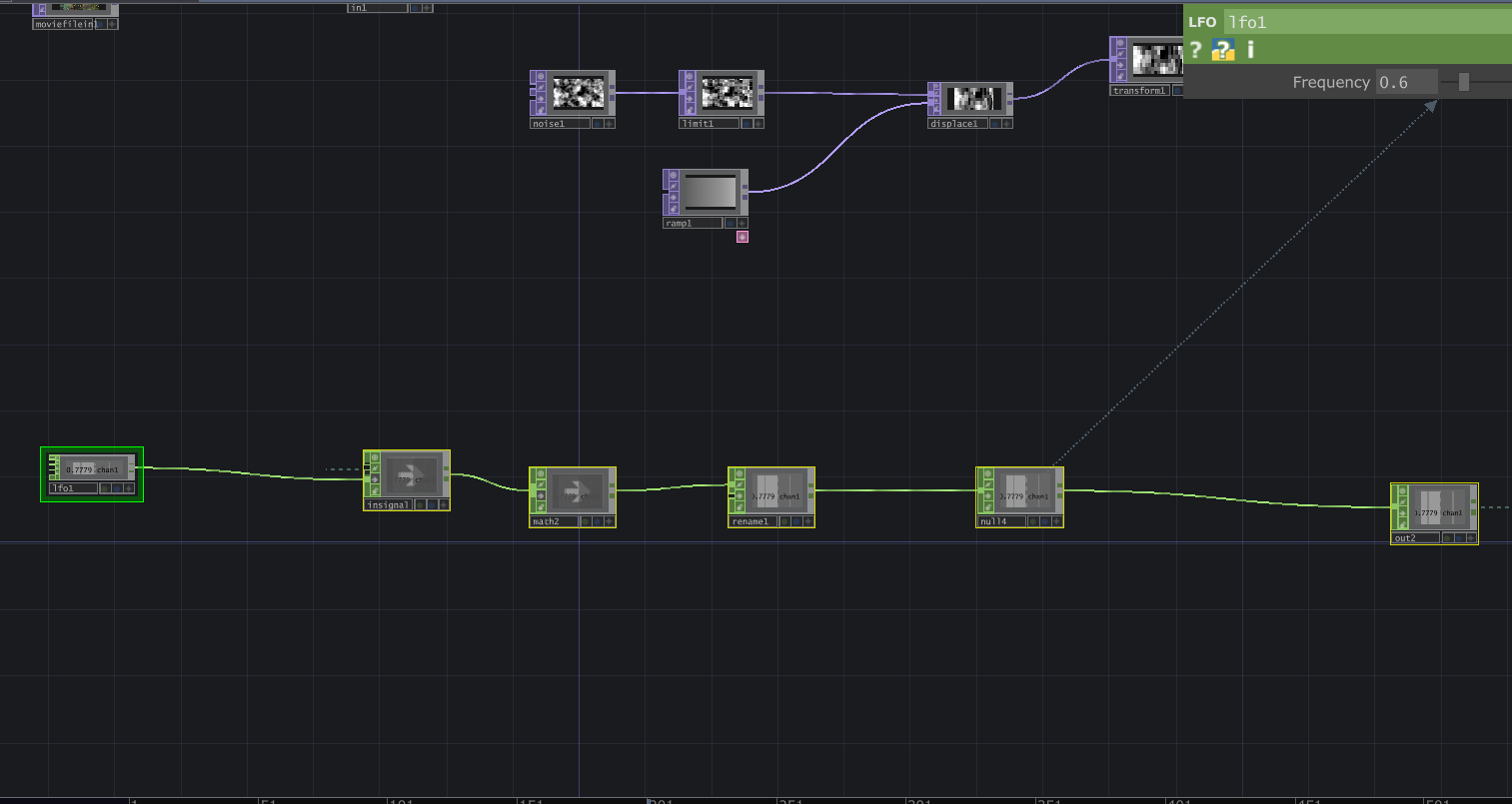

This portion processes that audio spectrum into a new visual. Starting in the bottom left, I use a series of TOPs to create a flow-like visual, which is then composited with the spectrum. This new visual is colored using a series of ramps and a look up TOP. The ramps are cycled through using either an LFO or an input from in_osc over the network. An example of the visuals this produces is below.
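The ramp selection and lookup coloring can be sketched in plain Python. This is an illustration only, assuming a 0..1 control value, ramps stored as lists of RGB stops, and linear interpolation between stops. `pick_ramp`, `lookup`, and the example ramps are hypothetical and not taken from the patch.

```python
def pick_ramp(ramps, lfo_value, osc_value=None):
    """Choose a ramp from a 0..1 control; the OSC value wins if present."""
    control = lfo_value if osc_value is None else osc_value
    control = max(0.0, min(1.0, control))
    index = min(int(control * len(ramps)), len(ramps) - 1)
    return ramps[index]

def lookup(ramp, brightness):
    """Color a 0..1 brightness by interpolating along a ramp (2+ stops)."""
    b = max(0.0, min(1.0, brightness))
    pos = b * (len(ramp) - 1)
    i = min(int(pos), len(ramp) - 2)
    frac = pos - i
    lo, hi = ramp[i], ramp[i + 1]
    return tuple(a + (c - a) * frac for a, c in zip(lo, hi))

# Two example ramps: black to red, and black to blue.
RAMPS = [
    [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)],
    [(0.0, 0.0, 0.0), (0.0, 0.0, 1.0)],
]
color = lookup(pick_ramp(RAMPS, lfo_value=0.2), brightness=0.5)
```

With the LFO at 0.2 the first ramp is chosen, so a mid-gray pixel becomes half-strength red. Passing `osc_value=1.0` would switch to the second ramp regardless of the LFO.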

Lastly, this portion of the patch processes video received over the network from the in_video TOP (or in this case, a camera input) into audio. While I didn’t get quite as interesting an audio output as I wanted, I still think I was effective in transforming video to audio. The video that is received gets sent directly to a topto CHOP, which reads RGB values over the X and Y planes of the video. The following objects then reduce the amount of data, and the merge CHOP turns those waves into a stereo audio signal. This wave is given an envelope by the math objects (I attempted to control this with another OSC input but failed to make it work) and is sent out over the network. An example of audio is included below.
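To make the video-to-audio idea concrete, here is a minimal Python sketch that treats one row of pixels as a waveform. It assumes pixel values in 0..1 and arbitrarily sends red to the left channel and blue to the right. That channel mapping, the decimation step, and every function name are assumptions for the example, not details from the patch.

```python
def row_to_wave(row, channel):
    """Turn one row of (r, g, b) pixels in 0..1 into samples in -1..1."""
    return [2.0 * pixel[channel] - 1.0 for pixel in row]

def decimate(samples, step):
    """Reduce the amount of data by keeping every step-th sample."""
    return samples[::step]

def to_stereo(left, right):
    """Merge two mono waves into (left, right) sample pairs."""
    n = min(len(left), len(right))
    return list(zip(left[:n], right[:n]))

def apply_gain(stereo, gain):
    """Scale both channels, standing in for an amplitude envelope."""
    return [(l * gain, r * gain) for l, r in stereo]

row = [(0.0, 0.5, 1.0), (1.0, 0.5, 0.0), (0.5, 0.5, 0.5), (1.0, 0.0, 0.0)]
left = decimate(row_to_wave(row, 0), 2)   # red channel (assumed mapping)
right = decimate(row_to_wave(row, 2), 2)  # blue channel (assumed mapping)
audio = apply_gain(to_stereo(left, right), 0.5)
```

A bright-to-dark gradient across the frame becomes a falling waveform, so changes in the picture are heard as changes in the wave's shape.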

Reflection

Since I knew I wanted to flip the audio and video signals inside my patch, the independence of the cell-block was semi-inherent the entire time I was working on it. To make it connectable with others, however, I needed to ensure that whatever the patch did was interesting enough while still clearly using audio and video to influence the opposite output, so that it didn’t just seem like I was generating something entirely new.

I made choices about what to include and exclude primarily by trying to figure out what I could accomplish that was reasonably within my ability, but still interesting. For example, I’ve worked with spectrogram imagery in the past, so I knew I would be able to incorporate that the easiest. On the opposite end of that, I attempted to integrate FM synthesis into the audio part of my patch to get some more interesting sounds. However, with my inexperience in TouchDesigner, I found it really difficult to make FM work, so I chose to exclude it.

One thing that surprised me was that even when cell-blocks didn’t work “perfectly” together, they still interacted in some way, sometimes in ways we couldn’t explain. I was also a bit surprised by how underutilized the OSC data we were sending was. I know I was personally having difficulty doing something interesting with the OSC signals, but it was interesting that this was a widespread problem. I think this might come from the fact that the other signals we were working with were both very tangible. Since the OSC input was just a number, I think we were a bit less motivated to find an interesting way to use it, as opposed to the audio and video, which we could immediately do interesting things with.

I think we didn’t have quite enough time to experiment with combining our cell-blocks in different ways for much emergent behavior to appear. But one behavior that I enjoyed seeing was how the visuals would layer together through 2, 3 or 4 cell-blocks. I thought that all of the cell-blocks were interesting on their own, but the most interesting visuals were created through the combination of several together. This relates to Halprin’s cell-block framework through the idea that we can each create our own module that does its own thing, but the most exciting behaviors only emerge once we begin to combine the different cell-blocks and experiment with how they feed into each other.

Download Patch

Pressure Project #2 – Transcendence through Snares

Posted: March 3, 2026 Filed under: Uncategorized

- Description of my cell-block:

- Independently, my cell-block uses the snare of the audio provided to cycle through a set of mouth shapes to simulate lip syncing (albeit not realistic lip syncing). It also takes the video input and, through Ramp and Displace, warps the image based on the “mid” level registered from the audio. Without outside input, the audio used is “Position Famous” by Frost Children, and the video is a looping timelapse POV of a subway traveling underground. This was to create a sense of motion and exhilaration (the movement of the subway and displacement) and playfulness (the lip syncing).

- Collective documentation:

- Video/photos of the assembled system: Admittedly, I forgot to take footage of the showcase. I was a bit more nervous about this project, worried about everything working properly with the other cell-blocks. Once it was my turn, I only focused on presenting my work. I plan to reach out to classmates to see if they recorded footage.

- Process reflection:

- The cell-block was self-contained but, on the exterior, was connected to incoming TOP and CHOP inputs and fed those inputs out. So, on its own, the block would play as planned, but once external audio and video were fed in, they would inherit the effects of the previous media. There were some issues with feedback loops when testing this out, but mostly, it worked. The lips were a last-minute add-on and therefore independent… so no matter what, the lips stayed on screen; how it reacted depended on the audio input.

- I chose to control the level of flashiness and movement in my visuals. It’s easy to fall into producing loud and flashy imagery with programs like TouchDesigner or even After Effects. However, I try to use media responsibly, and I also didn’t want to give myself a headache. I’ve made materials that are hard for photosensitive people to take in, and while some others loved the chaotic visuals, I wasn’t satisfied knowing a group of people wouldn’t be able to watch it (and enjoy it).

- I was surprised that a lot of people didn’t use audio that contained many snares (or used much audio at all)… I was also surprised that everything worked together for the most part (if you can’t tell, I was nervous).

- When combined, everyone’s work offered me something new. I would combine with Luke’s when I wanted the most cohesive combination; I would combine with Zarmeen’s when I wanted to destroy everything (or use her audio); I combined with Chad’s because I wanted to appear on his channels more; and I combined with Curtus when I wanted to see a dragon.

- This project was a new way to envision Halprin’s cell-block method, but in a strictly digital realm. The goal was to have every block exist on its own and influence others (multiply the possibilities of the content produced). I think we mostly did that, although networking still feels stressful to me; I at least know how it works (sort of).

- Individual documentation:
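As a rough illustration of the snare-driven lip sync and mid-driven displacement described at the top of this post, here is a minimal Python sketch. It assumes the snare and mid levels already arrive as 0..1 numbers. The threshold, the shape names, and `max_offset` are hypothetical values chosen for the example.

```python
class MouthCycler:
    """Advance to the next mouth shape each time the snare level
    rises through a threshold (one step per hit, not per frame)."""

    def __init__(self, shapes, threshold=0.6):
        self.shapes = list(shapes)
        self.threshold = threshold
        self.index = 0
        self._was_above = False

    def update(self, snare_level):
        above = snare_level >= self.threshold
        if above and not self._was_above:
            self.index = (self.index + 1) % len(self.shapes)
        self._was_above = above
        return self.shapes[self.index]

def displace_amount(mid_level, max_offset=0.1):
    """Scale a 0..1 mid-band level to a displacement strength."""
    return max(0.0, min(1.0, mid_level)) * max_offset

cycler = MouthCycler(["closed", "open", "wide"])
frames = [cycler.update(level) for level in [0.1, 0.8, 0.9, 0.2, 0.7]]
```

Tracking whether the previous frame was already above the threshold keeps one sustained hit from flipping through several shapes at once.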