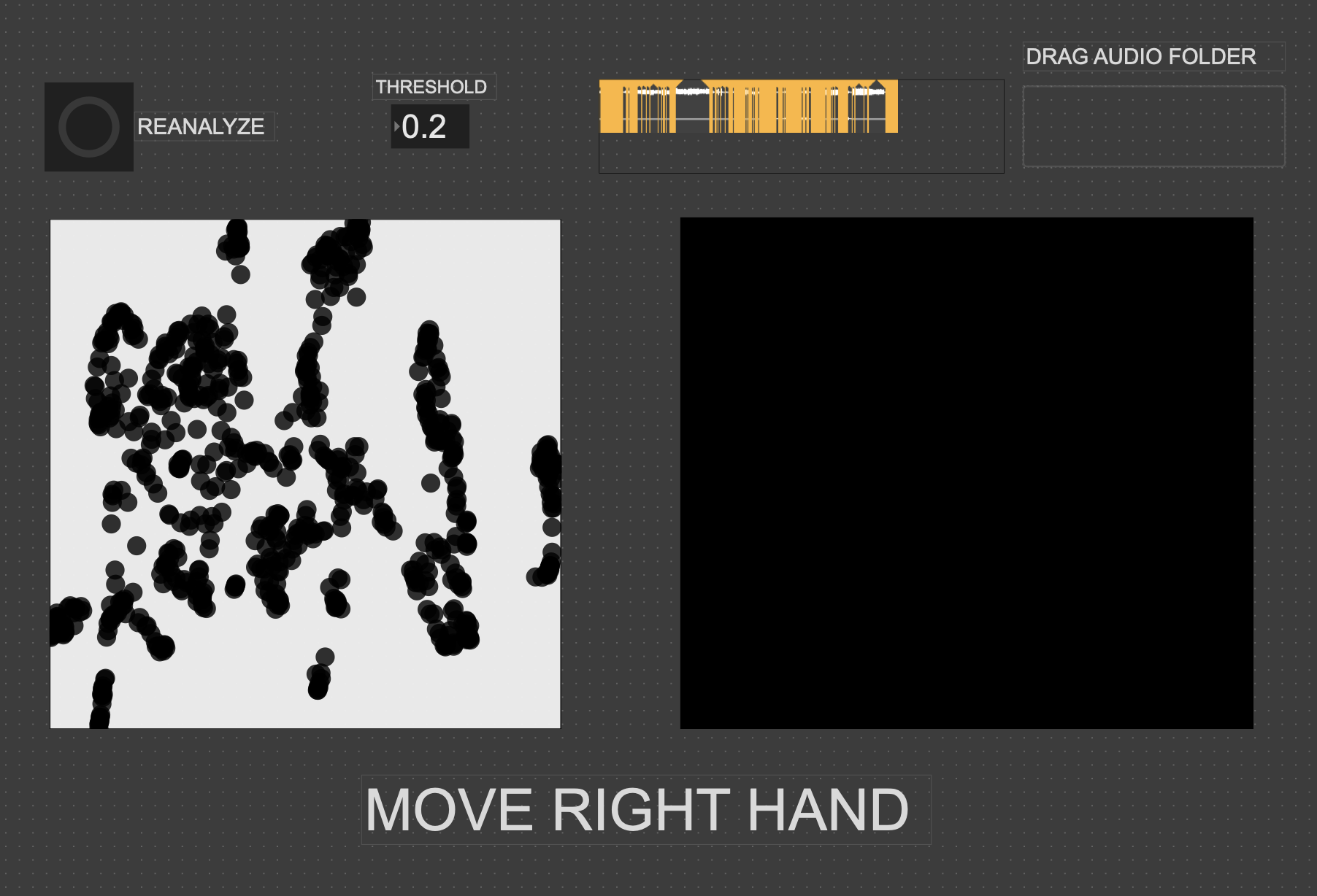

Cycle One: The Movement-Based Sound Explorer

Posted: April 13, 2026 Filed under: Uncategorized | Tags: cycle 1, FluCoMa, MaxMSP, MediaPipe, touchdesigner

As I began thinking about what I wanted to make my cycles about, I found myself gravitating towards a question I had previously been interested in when beginning to work on my senior project at the beginning of the year: How might technology allow for the creation of new modes of musical interface, where the relationship between audience and performer is almost entirely dissolved?

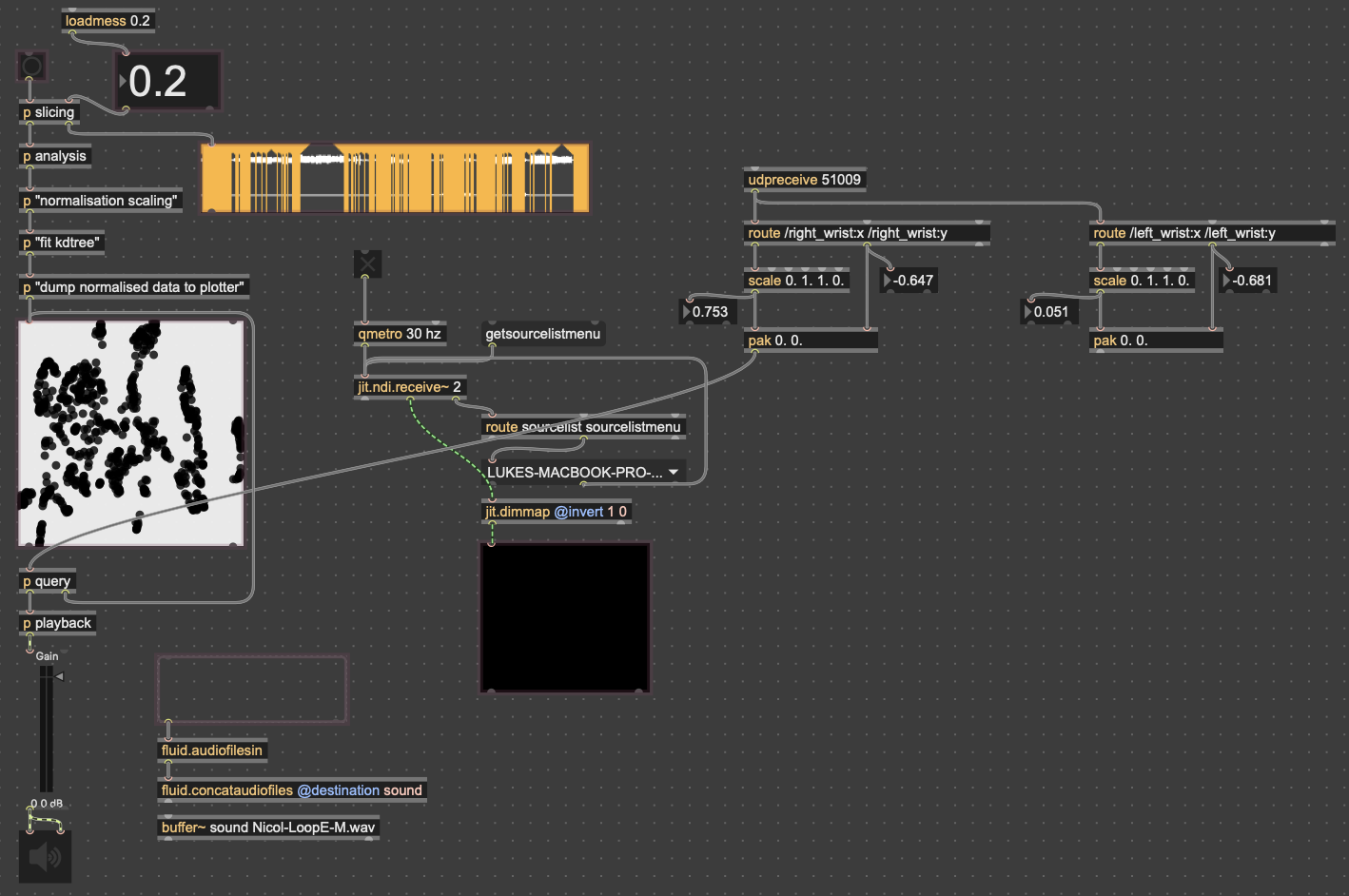

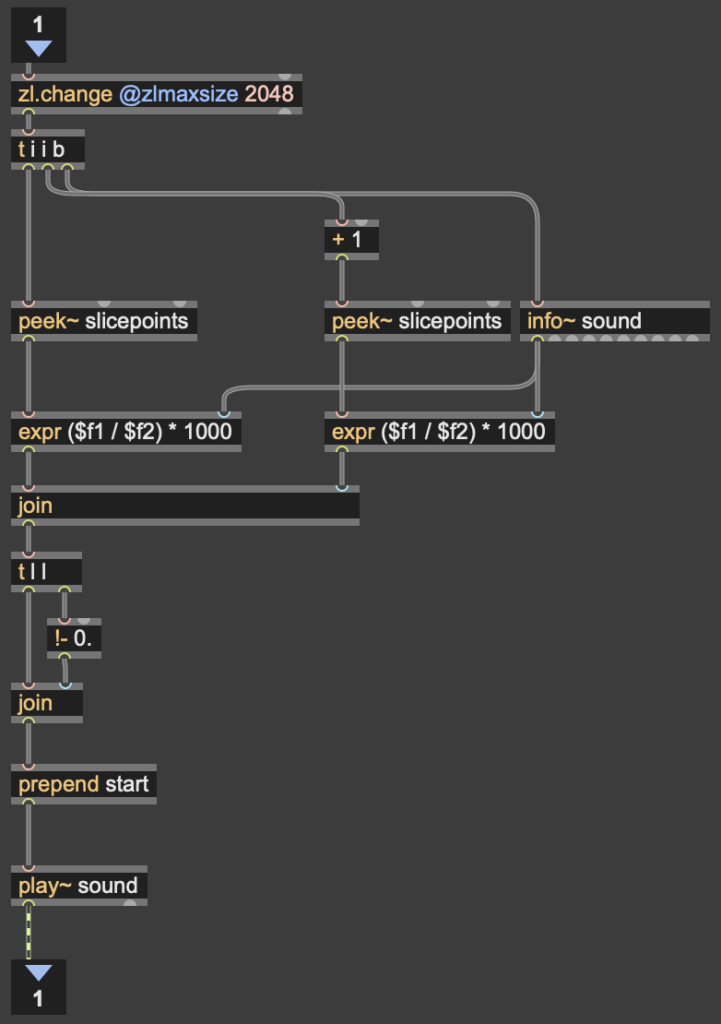

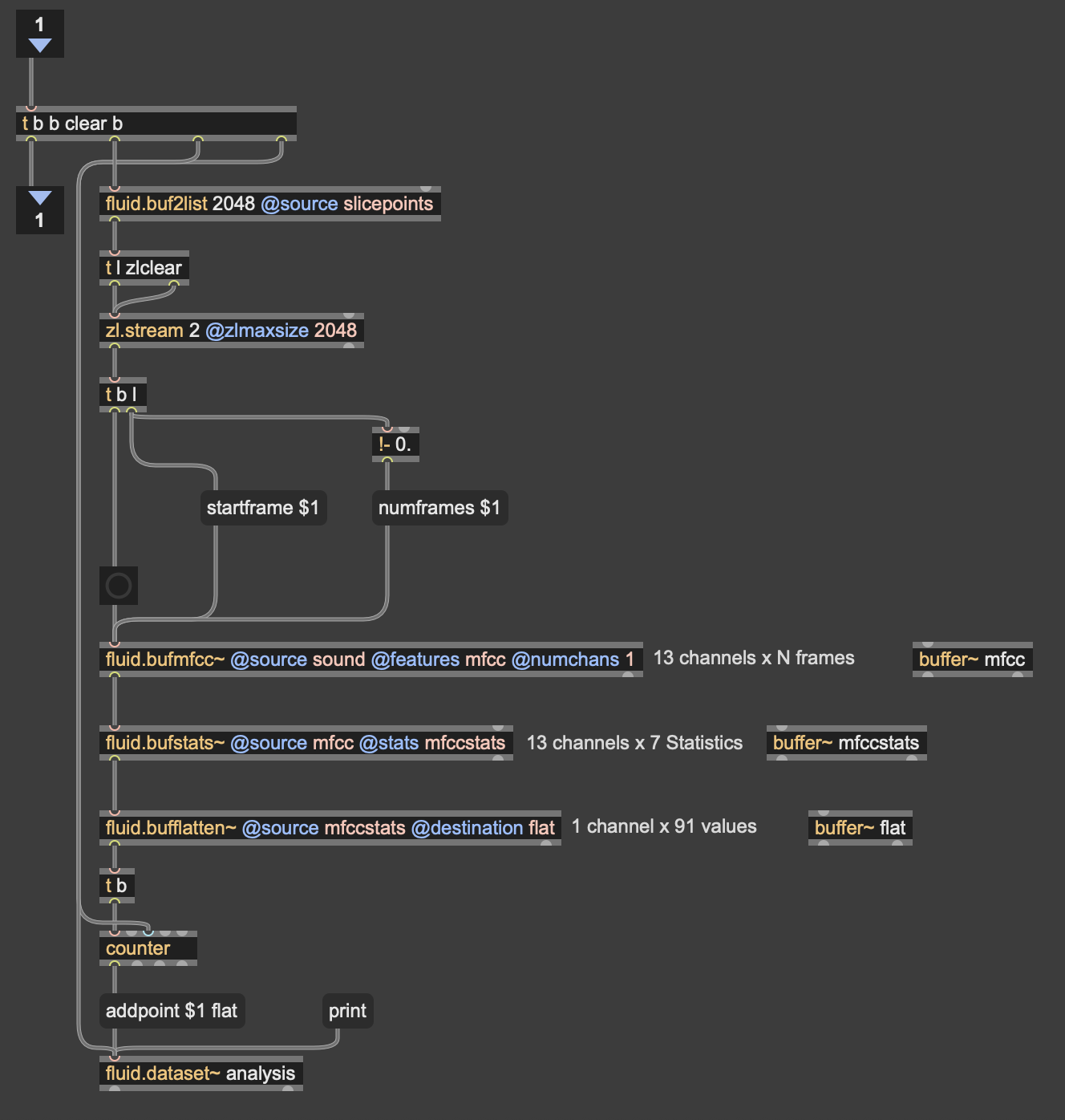

My primary resource was Max/MSP, as I know it best of all the computer music software, and I find it very useful for developing new ways of making music.

Going into this cycle, I knew one of the central resources that could help me answer this question was Google MediaPipe, a real-time motion capture tool that uses webcam input instead of dedicated hardware and mo-cap suits. This allows for systems that anyone can easily interact with, even without knowing how the system works or what each mo-cap landmark is controlling. I handled this part of my patch in TouchDesigner, as the Max integration of MediaPipe has some difficulties I don’t have time to get into here.

My main goals for this cycle were to create an interface for interacting with sound that was fun and interesting, but that also left room for emergent behavior when placed in the hands of different users.

The second major piece of software I used was the Fluid Corpus Manipulation (FluCoMa) toolkit for Max/MSP (also available in SuperCollider and Pure Data). This toolkit uses machine learning to analyze, decompose, manipulate, and play back a large collection (or corpus) of samples. I initially chose it because one of its modes of playback is a 2D plotter, which can map two different aspects of the sample analysis onto an X and Y axis. I thought this would be a perfect interface for MediaPipe control, as the base 2D plotter uses mouse input, which I found detrimental to using it as an “instrument.”
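To make the idea of driving the plotter from a tracked hand more concrete, here is a minimal Python sketch rather than the actual Max patch. It assumes the hand landmark arrives normalized to 0..1 with y increasing downward, and it uses a brute-force nearest-point search as a stand-in for whatever lookup the patch really uses. The slice names and coordinates are made up for illustration.

```python
import math

# Hypothetical corpus: each audio slice has a position in the 2D plot,
# normalized to 0..1 on both axes (values invented for illustration).
corpus_points = {
    "slice_0": (0.10, 0.20),
    "slice_1": (0.80, 0.75),
    "slice_2": (0.45, 0.50),
}

def nearest_slice(x, y, points):
    """Return the name of the corpus point closest to (x, y)."""
    return min(points, key=lambda name: math.dist((x, y), points[name]))

def hand_to_plot(hand_x, hand_y):
    """Map a normalized landmark to plot coordinates.

    Assumes the landmark is in 0..1 with y increasing downward (image
    convention), so y is flipped to make 'up' in the room 'up' in the plot.
    """
    def clamp(v):
        return max(0.0, min(1.0, v))
    return clamp(hand_x), 1.0 - clamp(hand_y)

x, y = hand_to_plot(0.78, 0.30)
print(nearest_slice(x, y, corpus_points))
```

Clamping keeps a hand that drifts out of frame pinned to the edge of the plot instead of selecting nothing.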

I had initially wanted to expand the idea of the 2D plotter to a 3D one, as I felt being able to interact with the patch in a 3D space would be much more natural. However, I found expanding the logic to work in 3 dimensions was a much more difficult task than I’d thought, so I decided to stick with the 2D plotter for this cycle.

Results

I think I was largely successful in the goals I set out to accomplish. Everyone wanted to try out the patch, which I took as a testament to its “fun” and “interest” aspects. The controls were also picked up quickly, which was a goal of mine, as I’m interested in systems that audiences can interact with regardless of whether they’re conscious of the mechanics of that interaction. I was most interested to see how different people had their own unique ways of interacting with it. Chad, for example, was really trying to make something rhythmic and intelligible out of it, while others were going all over the place or looking for specific sounds.

Some missed opportunities that I want to expand on in future cycles are the use of the Z dimension in controlling the playback of samples and the use of multiple limbs to control playback. As you can see in the video, users were somewhat restricted in how they could control the patch because the Z direction did nothing and only the right hand could trigger sounds. By expanding this idea to three dimensions instead of two, and allowing for the use of multiple limbs, I think it’ll give people more freedom in how they interact with the corpus of sounds.

This was the first real project I’ve done with FluCoMa, and I learned a ton about its mechanisms, particularly the storage of non-audio data in buffers. This concept is used a lot more in environments like SuperCollider or Pure Data, as Max has other objects for storing that kind of information. However, because of the way the machine learning tools in FluCoMa work, it needs to store all of the information it may need in RAM. This was also the first project I’ve done sending OSC data between different apps on my computer, which had a bit of a learning curve, as I discovered that in my setup the OSC values arrived as strings instead of floating-point numbers. This didn’t create any real difficulty, as the conversion took no time, but it did make me aware of an important aspect of using OSC (particularly with Max, as certain objects process strings, floats, and integers differently).
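The string-to-float conversion described above happened inside Max, so the following Python sketch only illustrates the same idea in another language. The address `/hand/right` and the argument values are hypothetical.

```python
def to_float(value, default=0.0):
    """Coerce an incoming OSC argument to float.

    Accepts floats, ints, and numeric strings; anything else (for example
    a label or None) falls back to `default`.
    """
    try:
        return float(value)
    except (TypeError, ValueError):
        return default

# Hypothetical message: an address plus arguments that arrived as strings.
address, args = "/hand/right", ["0.42", "0.87"]
x, y = (to_float(a) for a in args)
print(address, x, y)
```

Falling back to a default instead of raising keeps a single malformed message from stalling a live patch, though whether that is the right trade-off depends on the piece.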

Cycle 1: The (bad) Friend

Posted: April 7, 2026 Filed under: Uncategorized | Tags: cycle 1, touchdesigner

The Score

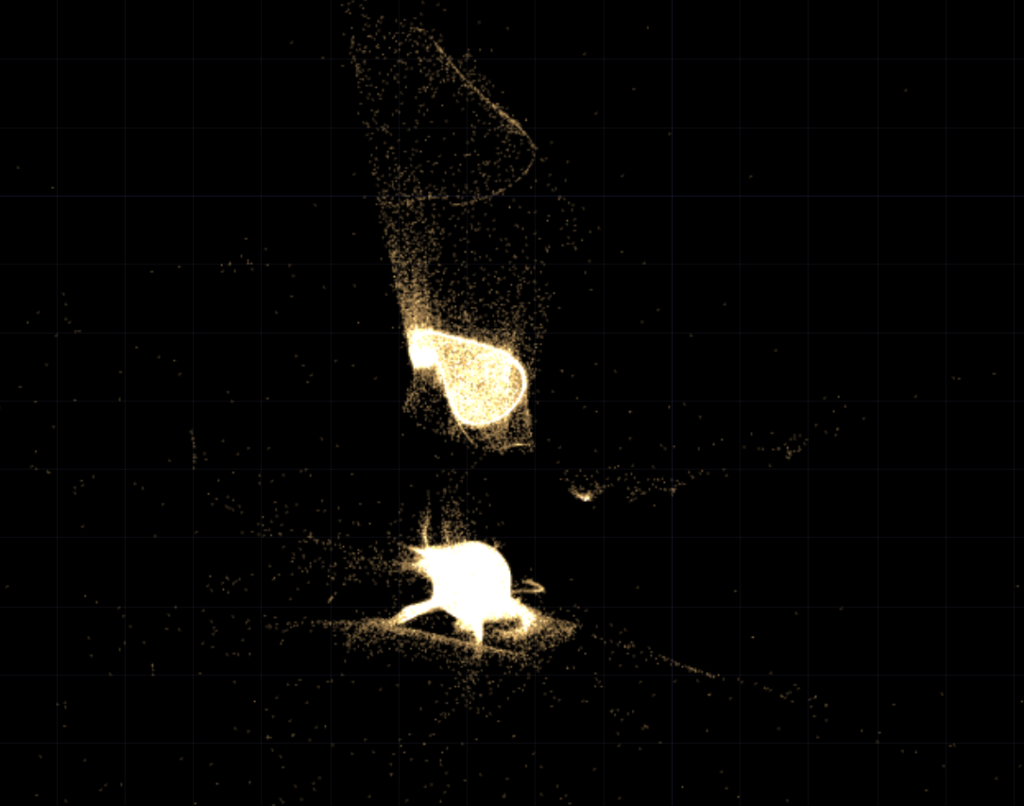

My idea for this cycle was simple (or at least it seemed so in my head): make an AI-powered interactive experience where the user shares a space with an AI ‘presence’. It lives on a screen, but it’s there for you and it listens to whatever you have to say, or don’t have to say. The score: a participant enters a space, speaks naturally, and the environment responds to the quality of what they shared through a particle system. No text output, no voice back. Just the space changing around them. The framing I gave participants was: “this is a friend you can talk to.” That framing is what became the main problem.

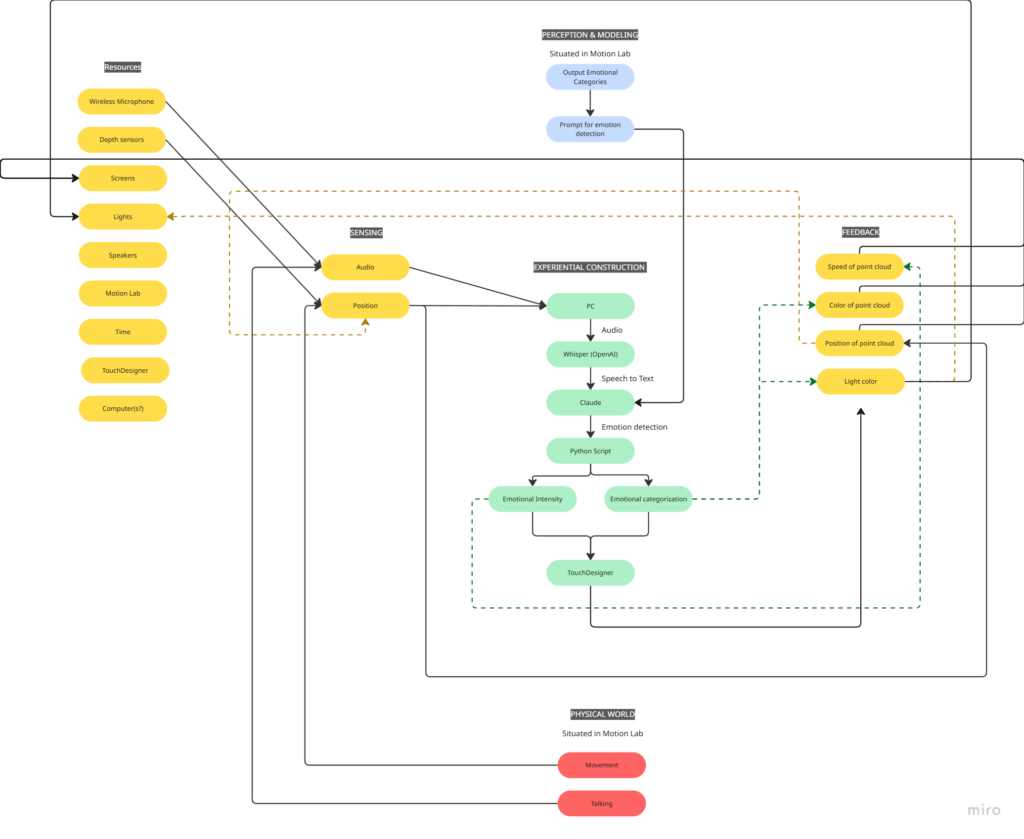

Resources

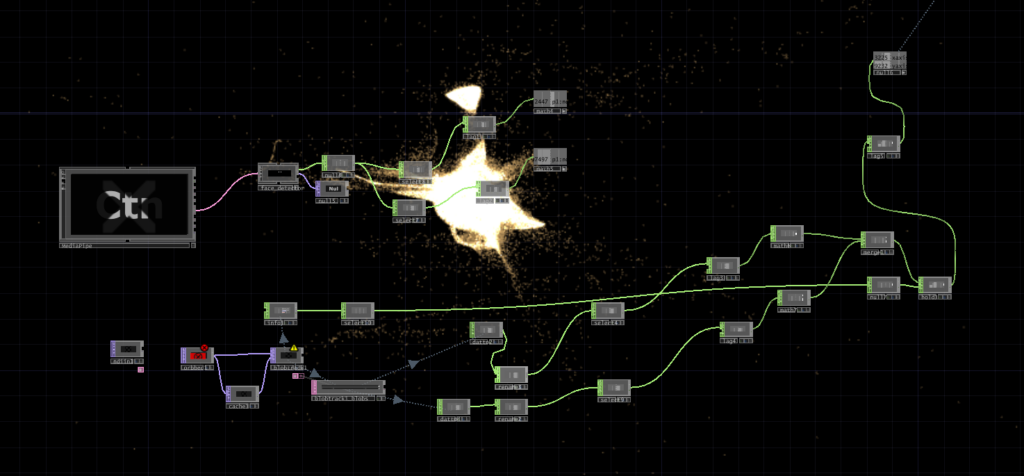

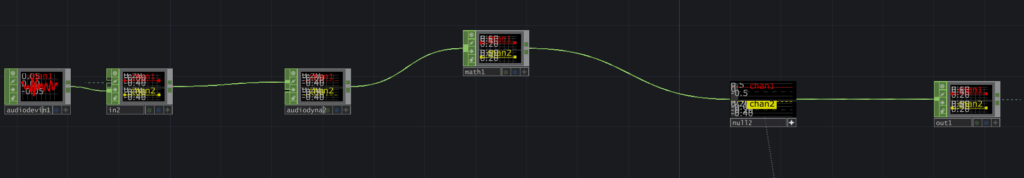

- TouchDesigner for the visual/particle system

- Python + PyAudio for microphone input

- OpenAI Whisper for speech-to-text transcription

- Claude API to interpret the speech and return atmospheric parameters (brightness, movement, weight, density) as JSON

- OSC to pipe values from Python into TouchDesigner

- Orbbec depth camera for body tracking (ceiling-mounted, blob detection)

- Motion Lab

- A Michael for troubleshooting (1)

Process and Pivots

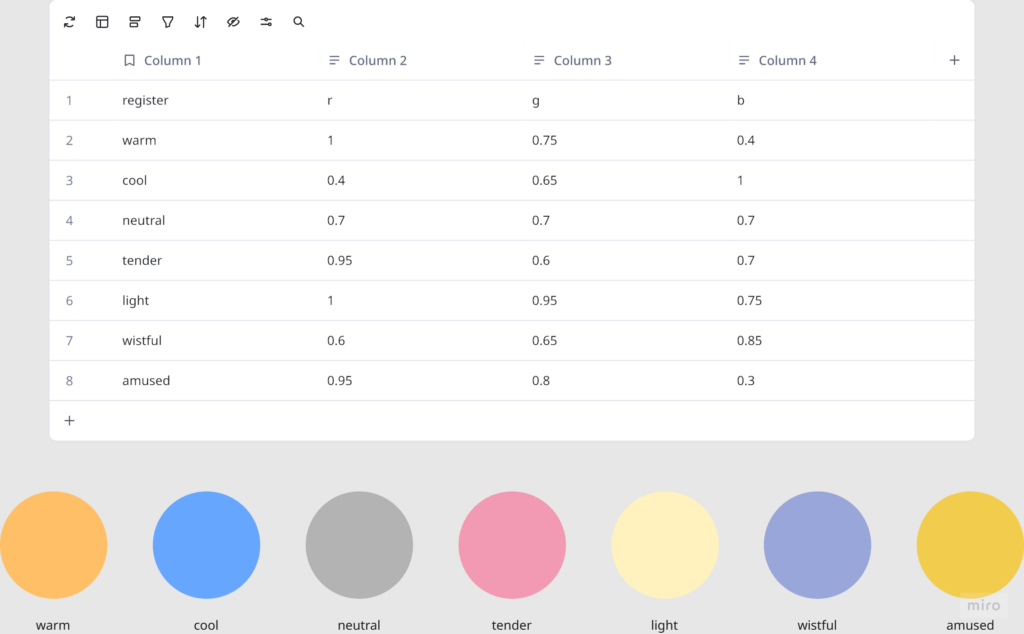

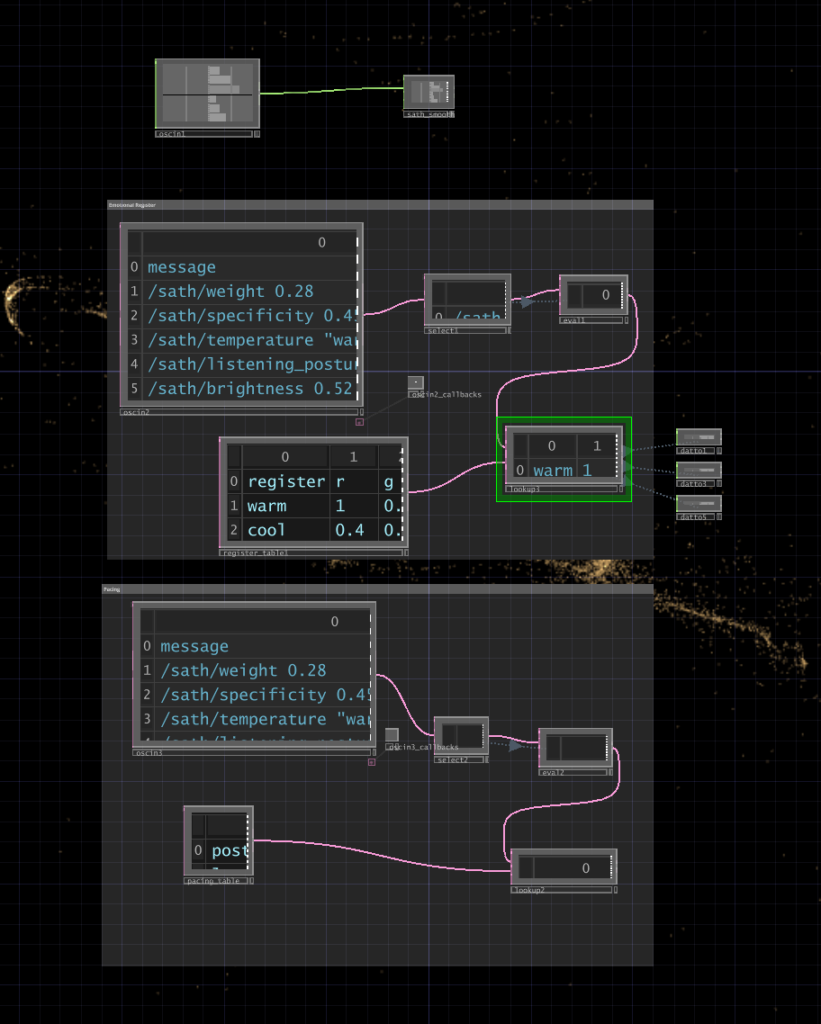

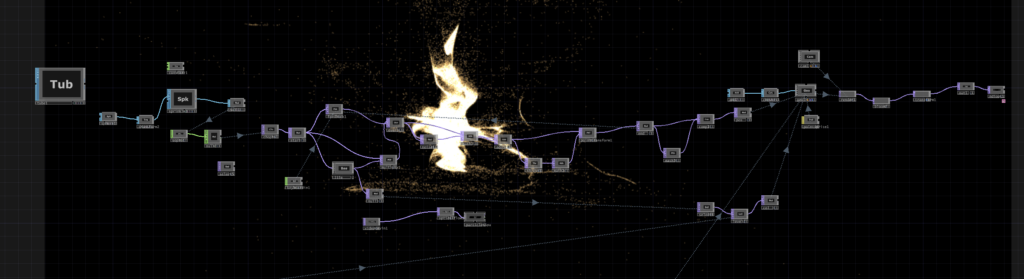

I wrote a Python script that takes user input through the microphone, then uses OpenAI Whisper for speech-to-text transcription. It then sends the transcript to Claude to be parsed according to the system prompt I gave it, which asked for metrics like emotional register, weight, and intensity. The Python script in turn sends these metrics to TouchDesigner through OSC. Inside TouchDesigner, I made table DATs that stored the values of the incoming signals so I could apply them to the visual system (a particle system). The values were supposed to affect the movement and color of the particle system.

I initially built my system on MediaPipe for body tracking, but when I moved the system to the Motion Lab, I had revelations. The system worked fine on a laptop, but to work in an open space with a big projection screen, it would need a camera directly in front of the participant’s face (and the screen), which sounds horrible for an immersive experience. So, I switched to blob detection through the Orbbec ceiling camera. That took a while to get right. It wouldn’t even detect me and I couldn’t figure out why, so I made the very obvious assumption that it hates me lol. Turns out it needs something to reflect off of and I was wearing all black.

The original prompt to Claude was trying to do an emotional analysis, as in read how the person was feeling and respond to that. At some point I rewrote it to just read the texture and quality of what was shared, not the emotional content. That was actually the most important design decision I made: the difference between “I understand you” and “I am here.” The particle system was jerking between states and it felt mechanical, so I also had to apply some smoothing for it to not act crazy.
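The post does not say how the smoothing was applied. One common approach is an exponential moving average, sketched here in Python with hypothetical names and an `alpha` chosen only to make the arithmetic easy to follow.

```python
class Smoother:
    """Exponential moving average: each update moves a fraction `alpha`
    of the way from the current value toward the new target."""

    def __init__(self, alpha=0.1, initial=0.5):
        self.alpha = alpha
        self.value = initial

    def update(self, target):
        self.value += self.alpha * (target - self.value)
        return self.value

brightness = Smoother(alpha=0.5, initial=0.0)
for _ in range(3):
    print(brightness.update(1.0))  # prints 0.5, then 0.75, then 0.875
```

A smaller `alpha` gives slower, calmer transitions at the cost of adding even more perceived delay, which matters in a pipeline that is already slow to respond.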

What Worked, What Didn’t, What I Learned

What didn’t work: A LOT. Apart from the framing of the system, I had not realized how much time I needed to do this properly. I only had a limited amount of time in the MOLA, so I was only able to troubleshoot the projection and not run through the whole pipeline. I did not anticipate a lot of the things that went wrong, and the biggest example was the lag. There’s bad bad latency in the pipeline (mic → Whisper → Claude → OSC → TouchDesigner), and it was long enough that participants got confused. They’d speak, nothing would happen, they’d speak again, then two responses would arrive at once. A few people got genuinely frustrated. The “friend you can talk to” framing made this much worse because it set up an expectation of conversational timing that the system couldn’t meet. Lou said it was a bad bad friend, like one of those people who keep looking at their phone when you’re trying to talk to them.
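The “two responses at once” behavior is what happens when every utterance is processed to completion regardless of how late it finishes. The system described here did not do this, but one possible mitigation is to drop responses that are stale or superseded. The sketch below is hypothetical: the class name, the utterance ids, and the four-second cutoff are all assumptions.

```python
import time

class LatestOnly:
    """Keep only responses that are fresh and newer than the last one applied."""

    def __init__(self, max_age_seconds=4.0):
        self.max_age = max_age_seconds
        self.last_applied_id = -1

    def accept(self, utterance_id, spoken_at, now=None):
        """Return True if the response for this utterance should be applied."""
        now = time.monotonic() if now is None else now
        if utterance_id <= self.last_applied_id:
            return False  # an equally new or newer response was already applied
        if now - spoken_at > self.max_age:
            return False  # too late to feel connected to what was said
        self.last_applied_id = utterance_id
        return True

gate = LatestOnly(max_age_seconds=4.0)
print(gate.accept(1, spoken_at=0.0, now=6.0))  # stale response, rejected
print(gate.accept(2, spoken_at=5.0, now=6.0))  # fresh response, applied
```

This does not reduce the latency itself. It only keeps late responses from piling up and landing together.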

What worked unexpectedly: The observers. People watching someone else use the system felt something: specifically, they felt empathy for the participant who was being poorly served by the AI. That observation became the most interesting research finding of the whole cycle.

What I learned: Time is the biggest resource, and you have to plan according to it instead of trying to force all of your bajillion ideas into the time that you have. Also, framing matters more than you think it does! Had the same system been framed a different way, I would’ve gotten away with it, but since I had framed it a specific way, there were specific expectations.

Pressure Project 2: One for All, All for One

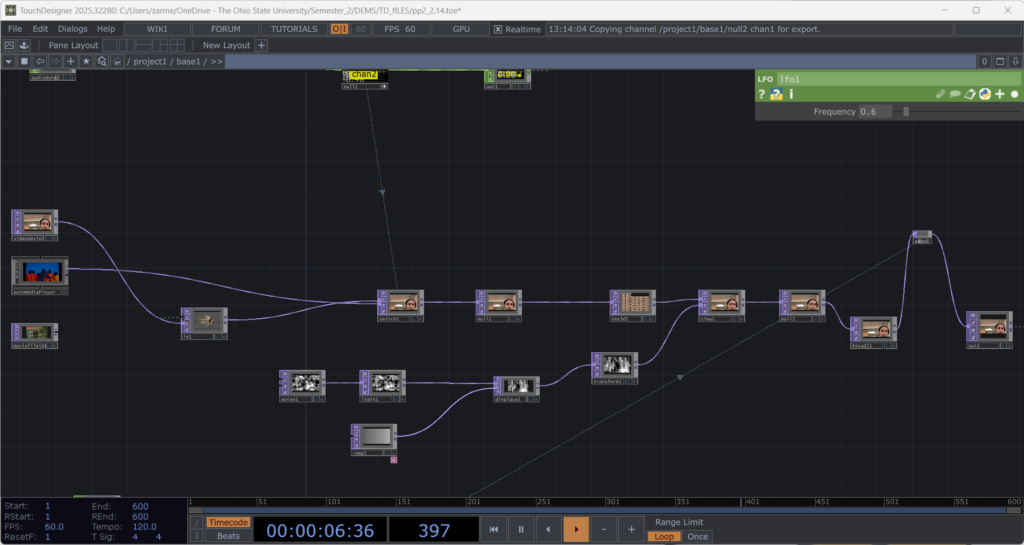

Posted: March 9, 2026 Filed under: Pressure Project 2 | Tags: Pressure Project, Pressure Project 2, touchdesigner

I named my cell One for All, All for One because it is built around the idea that individuals and communities as a whole are constantly shaping each other. The cell itself is a constant conversation between oneself and the communal archive. It takes a live video feed and layers it over a slideshow of images showing communities and people from different parts of the world.

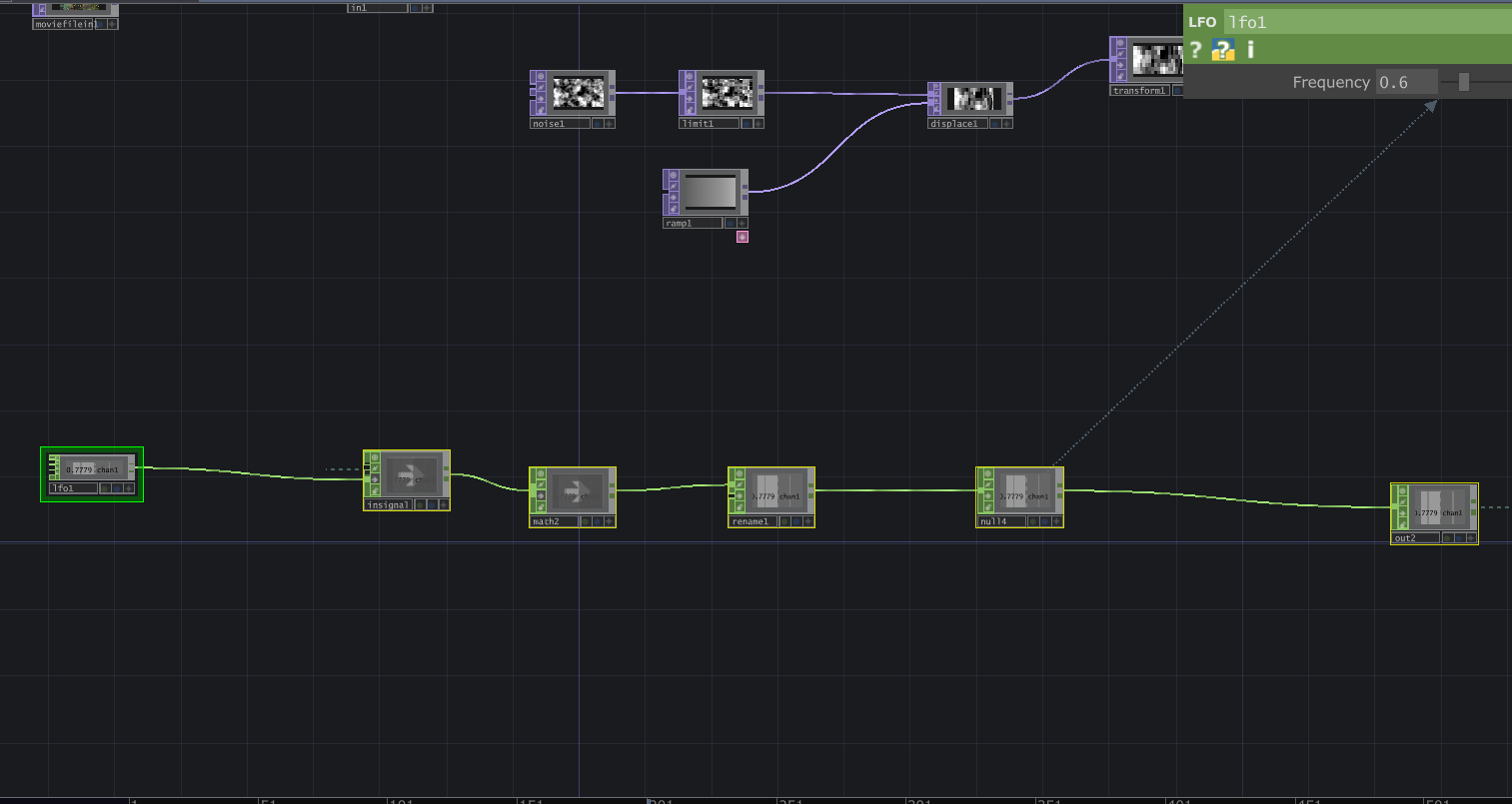

Then interactive sound enters the picture. A glitch effect driven by audio input levels determines how much the live video overlay fractures. The louder the audio, the more the live layer breaks apart and reveals the slideshow underneath. Alongside this, I built in an internal LFO paired with an Edge TOP to create a rhythmic pulse, something I called a “heartbeat”. Even without external input, the system works fine and feels alive.
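In the cell itself this logic lives in TouchDesigner operators, so the following Python sketch is only an illustration of the two mappings described above: audio level to glitch amount, and an LFO used as a pulse. The `floor` and `ceiling` thresholds and the 1 Hz rate are assumed values, not taken from the project file.

```python
import math

def rms(samples):
    """Root-mean-square level of a chunk of audio samples in -1..1."""
    if not samples:
        return 0.0
    return math.sqrt(sum(s * s for s in samples) / len(samples))

def glitch_amount(level, floor=0.02, ceiling=0.5):
    """Map an RMS level to 0..1: no glitch below `floor`, full glitch at `ceiling`."""
    scaled = (level - floor) / (ceiling - floor)
    return max(0.0, min(1.0, scaled))

def heartbeat(t, rate_hz=1.0):
    """Sine LFO remapped to 0..1, standing in for the internal pulse."""
    return 0.5 + 0.5 * math.sin(2.0 * math.pi * rate_hz * t)

quiet = [0.01, -0.01, 0.01, -0.01]
loud = [0.6, -0.6, 0.6, -0.6]
print(glitch_amount(rms(quiet)), glitch_amount(rms(loud)))
print(heartbeat(0.0), heartbeat(0.25))
```

Because the heartbeat depends only on time, it keeps running when the audio input is silent, which matches the observation that the system feels alive without external input.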

The structure is modular and layered, and honestly not that complicated. There are a live video input and a media player (which controls the slideshow), both of which plug into a switch. The output of the switch goes into a glitch system and a pulse system. Each could be replaced without breaking the overall logic. The audio input, live video input, and the signal (LFO) are designed so that they could be overridden by an external network signal as well. The cell has its own system, but it is designed to connect, following the true concept of one for all, all for one.

Reflection:

When all the cells were assembled, things became unstable. Signals were constantly dropping and connections were dying. For a while, I thought something was wrong with my cell because nothing would show up (it turned out to be a problem with the input signals I was getting). When I finally got it to work, very interesting emergent behaviors appeared. The glitches danced to different rhythms. Video overlays ended up in very interesting stacked outputs. I had designed my system knowing that it had to be plugged into a bigger system, but I did not envision the results I got during testing. What I controlled alone became either amplified or distorted by others. The network did not just combine outputs. It reshaped them.

I think where my careful planning fell off was the heartbeat. I had not accounted for the fact that other cells can have signals of different types. Instead of a steady pulse, I got an irregular signal input, which changed the whole heartbeat effect. At first it felt like something went wrong. My cell was no longer just reacting to my inputs. It was reacting to everyone. That is exactly what One for All, All for One means. Each cell affects the others. Each signal influences the collective behavior. My cell had a life of its own. In the network, it learned to respond, adapt, and sometimes surrender to the collective.

Project File: pp2_Zarmeen.zip