Multi-Viewer 3D Displays (why the 3DS is a handheld device)

Posted: November 12, 2015 Filed under: Uncategorized

I have been trying to make a glasses-free, multi-viewer 3D monitor (like the Nintendo 3DS, only 23 inches and for multiple viewers), and it is much trickier than it looks.

The parallax barrier cuts down the brightness with every added view: N views block 1 - 1/N of the light (50% for 1 pair of eyes, 75% for 2), so with 2 viewers the screen is dim. But there is more: the horizontal resolution is not only cut to 1/4; each pixel is just as narrow as before, but I am adding a huge gap between them (some test subjects could not even tell what they were looking at)…
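The brightness figures quoted above (50% for one pair of eyes, 75% for two) fit a simple 1 - 1/N rule, where N is the number of views. Here is a minimal sketch of that arithmetic, assuming an ideal barrier and two views per viewer; the function names are made up for illustration.

```python
def barrier_light_blocked(num_views: int) -> float:
    """Fraction of light blocked by an ideal parallax barrier with num_views views."""
    if num_views < 1:
        raise ValueError("num_views must be at least 1")
    return 1.0 - 1.0 / num_views


def views_for_viewers(num_viewers: int) -> int:
    """Assumption: every viewer needs two views (one per eye)."""
    return 2 * num_viewers


if __name__ == "__main__":
    for viewers in (1, 2, 3):
        views = views_for_viewers(viewers)
        blocked = barrier_light_blocked(views)
        # Each view also gets only 1/views of the horizontal pixels.
        print(f"{viewers} viewer(s): {views} views, {blocked:.0%} of light blocked, "
              f"1/{views} of horizontal resolution per view")
```

A real barrier will lose a bit more than this, since the slits have to be narrower than one pixel column to keep the views from bleeding into each other.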

Lenticular lenses cost about $12 a foot, but none of the ones I got in the sampler pack line up with the pixels on any of the monitors, so I have to sacrifice even more horizontal resolution…

I gave up and started doing head tracking, but isolating individual eyes on that scale is just about impossible, so I wind up wasting 4X the resolution just to have enough margin for a single viewer…

And it still doesn’t isolate the eyes properly!!!

Now I have a 3D display (if you do not move faster than the head tracking can follow, and remain 2 to 3 1/2 feet from the monitor) that tracks your head, but will not respond to more than 1 viewer…

So I decided that when the head tracking detects more than one person, or if you move around too fast, the character (Tuba-Goose) will get irritated and leave…

If I can get it to do that, I would consider it a hell of an achievement. And even watching this quasi-3D interface figure out where you are and adjust the perspective to compensate (with a bit of a lag sometimes) is a little unsettling… Kind of like being eyeballed by a goose…

I can work with this, but I might still have to buy the 3D monitor, or at least the lens that is made for the 23 inch display…

The Present

Posted: November 9, 2015 Filed under: Anna Brown Massey, Final Project

The Past

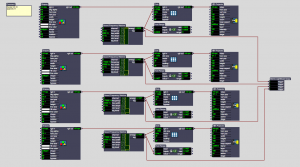

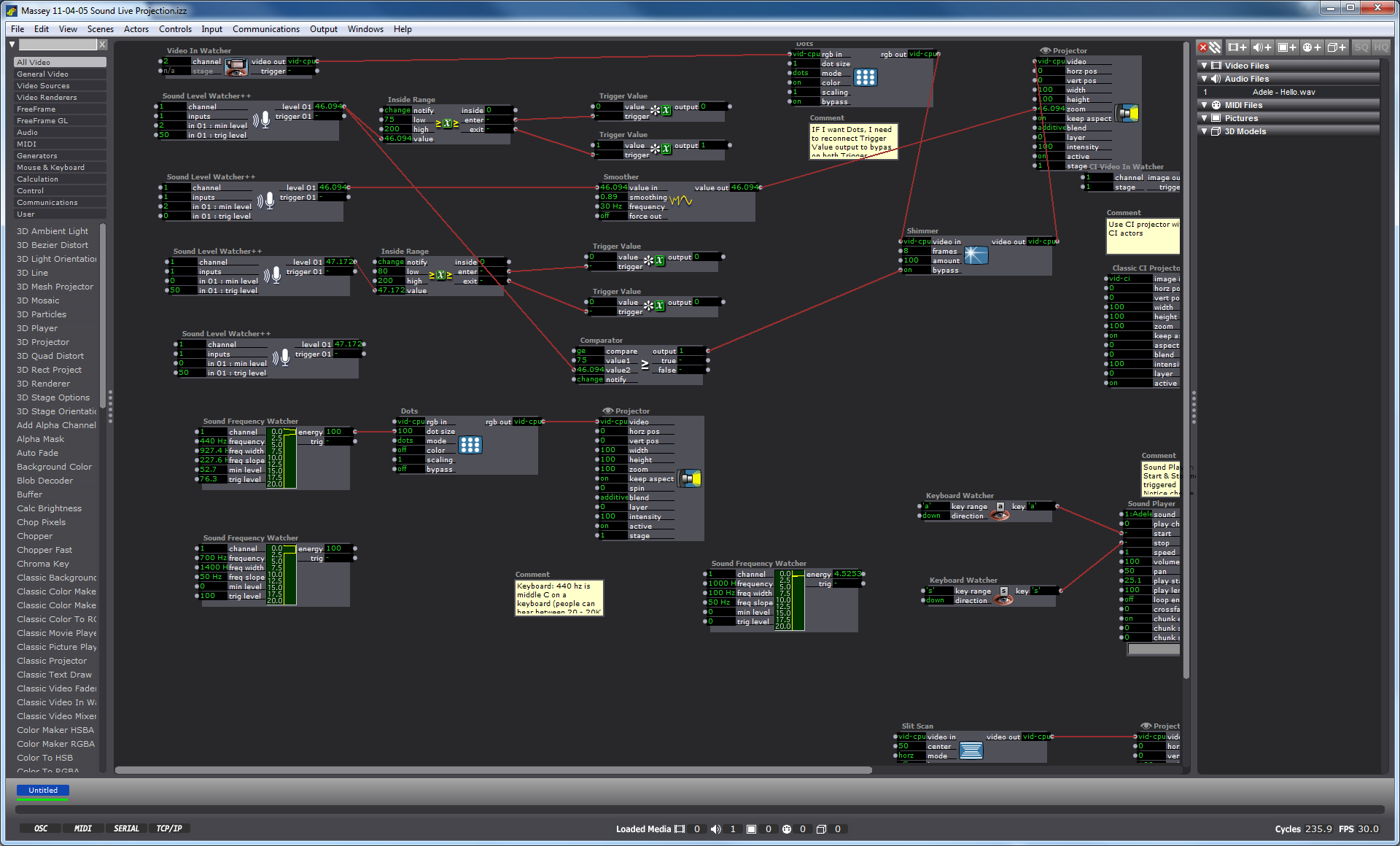

I am currently designing an audio-video environment in which Isadora reads the audio, extracts the dynamics (amplitude level) data, and converts those numbers into ways of shaping the live video output. In its next iteration, that output may be mediated through a Kinect sensor and a separate Isadora patch.

My first task was to create a video effect out of the audio. I connected the Sound Level Watcher to the Zoom data on the Projector actor, and later added a Smoother in between in order to smooth the staccato shifts in zoom on the video. (Initial ideation is here.)
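To show why a smoothing stage helps between the sound level and the zoom, here is a rough sketch using an exponential moving average. This is not how Isadora's Smoother actor is implemented (I do not know its internals); the 0 to 100 level range, the zoom range, and the `alpha` value are all assumptions for illustration.

```python
def smooth(levels, alpha=0.2):
    """Exponential moving average: each output moves a fraction alpha toward the new input."""
    smoothed = []
    current = None
    for level in levels:
        if current is None:
            current = level  # start at the first reading
        else:
            current = current + alpha * (level - current)
        smoothed.append(current)
    return smoothed


def level_to_zoom(level, zoom_min=100.0, zoom_max=200.0):
    """Map an assumed 0-100 sound level onto an assumed zoom range."""
    level = max(0.0, min(100.0, level))  # clamp out-of-range readings
    return zoom_min + (zoom_max - zoom_min) * level / 100.0


if __name__ == "__main__":
    raw = [0, 90, 5, 95, 10, 85]  # staccato mic readings (invented)
    for r, s in zip(raw, smooth(raw)):
        print(f"raw {r:3d} -> zoom {level_to_zoom(r):6.1f} | smoothed zoom {level_to_zoom(s):6.1f}")
```

A smaller `alpha` gives a calmer zoom but more lag behind the voice, so it is a trade-off to tune by ear and eye.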

Once I had established my interest in the experience of zoom tied to amplitude, I enlisted the Inside Range actor to create a setting in which, past a certain amplitude, the Sound Level Watcher would trigger the Dots actor. In other words, whenever the volume of sound coming into the mic hits a certain point or above, the actors trigger an effect on the live video projection in which the screen disperses into dots. I selected the Dots actor not because I was confident that it would create a magically terrific effect, but because it was a familiar actor with which I could practice manipulating the volume data. I added the Shimmer actor to this effect, still playing with the data range that would trigger these actors only above a certain volume.
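The threshold idea described above can be sketched as a range check that reports when the level enters and leaves the range. This is only an illustration of the logic, not the Inside Range actor itself; the `low` and `high` values are invented.

```python
def range_events(levels, low=60.0, high=100.0):
    """Return (index, "enter"/"exit") events as the level crosses into or out of [low, high]."""
    events = []
    was_inside = False
    for i, level in enumerate(levels):
        is_inside = low <= level <= high
        if is_inside and not was_inside:
            events.append((i, "enter"))  # e.g. switch the dots effect on
        elif was_inside and not is_inside:
            events.append((i, "exit"))   # e.g. switch the dots effect off
        was_inside = is_inside
    return events


if __name__ == "__main__":
    mic_levels = [10, 70, 80, 30, 65]  # invented readings
    print(range_events(mic_levels))
```

Reacting only to the enter and exit moments keeps the effect from being retriggered on every frame while the voice stays loud.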

The Future

User-design Vision:

Through this process my vision has been to make a system adaptable to between 2 and 30 participants, who can all be engaged by the experience simultaneously and possibly take on different roles by their own self-selection. As with my concert choreography, I am strategizing methods of introducing the experience of “discovery.” I’d like this one to feel delightful to me. With a mic available in the room, I am currently playing with the idea of a scrolling karaoke projection with lyrics to a well-known song. My vision includes a plan for how to “invite” an audience to sing and have them discover that the projection responds causally to the audio.

Sound Frequency:

The next step, as seen at the bottom of the screenshot (on the right-hand side are some actors I have set aside for possible future use), is to start using the Sound Frequency actor to take in data about pitch (frequency) as a means of affecting video output. To do so I will need to provide audio files in a variety of ranges as source material, so I can observe how the data shifts across different human voice registers. Then I will take the frequency data range and match it, through an Inside Range actor, to a video output.

Kinect Collaboration:

I am also considering collaborating with my colleague Josh Poston, whose project currently uses the Kinect with projection. His Kinect feed could replace the “live video projection” that I am currently using, and instead drive motion-sensing movement on a rear-projected screen. As I consider joining our projects and expanding their dimensions (so to speak; oh, puns!), I need to start narrowing down the user-design components. In other words: where do the mic(s) live, where is the screen, how many people is this meant for, where will they be, will participants have different roles, what is the

Iteration 1 of Final Project

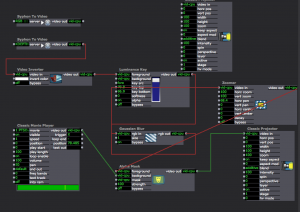

Posted: November 9, 2015 Filed under: Alexandra Stilianos, Final Project

Here is my patch for the first iteration:

The small oval shape followed a person around the space that is visible to both a Kinect and the projector. This was difficult since their fields of view were slightly off, but I was able to get it working with good accuracy.

I would have liked the shape to move a bit more smoothly and conform more closely to the shape of the body. I think using some of the actors that Josh did (Gaussian Blur and Alpha Mask) will help with these issues.

Cycle 2 – Sarah Lawler

Posted: November 9, 2015 Filed under: Final Project, Sarah Lawler

Cycle 2 – Using MIDI, Connor and I were able to control light cues by strumming strings on Connor’s guitar.

Cycle 2 Demo

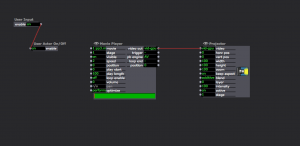

Posted: November 7, 2015 Filed under: Jonathan Welch, Uncategorized | Tags: Jonathan Welch

The demo in class on Wednesday showed the interface responding to 4 scenarios:

- No audience presence (displayed “away” on the screen)

- Single user detected (the goose went through a rough “greet” animation)

- Too much violent movement (the words “scared goose” on the screen)

- More than a couple of audience members (the words “too many humans” on the screen)

The interaction was made in a few days, and honestly, I am surprised it was as accurate and reliable as it was…

The user presence was just a blob output. I used a “Brightness Calculator” with the “Difference” actors to judge the violent movement (the blob velocity was unreliable with my equipment). Detecting “too many humans” was just another “Brightness Calculator”. I tried more complicated actors and patches, but these were the ones that worked in the setting.
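The decision logic behind the four scenarios can be sketched roughly like this. It is not the actual Isadora patch: the frame-difference measure stands in for the “Difference” plus “Brightness Calculator” chain, and the thresholds and the order in which the checks are made are my assumptions.

```python
def difference_brightness(prev_frame, frame):
    """Mean absolute pixel change between two frames (flat lists of 0-255 values)."""
    total = sum(abs(a - b) for a, b in zip(prev_frame, frame))
    return total / len(frame)


def classify_scene(blob_present, motion_brightness, crowd_brightness,
                   motion_limit=40.0, crowd_limit=60.0):
    """Pick one of the four demo states; both limits are hypothetical values."""
    if not blob_present:
        return "away"
    if crowd_brightness > crowd_limit:
        return "too many humans"
    if motion_brightness > motion_limit:
        return "scared goose"
    return "greet"


if __name__ == "__main__":
    still = [0, 0, 0, 0]
    moved = [0, 100, 100, 0]
    motion = difference_brightness(still, moved)
    print(motion, classify_scene(True, motion, crowd_brightness=10.0))
```

The limits would need calibrating in the actual room, since both brightness measures depend on the lighting and the camera.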

Most of what I have been spending my time solving is an issue with interlacing. I hoped I could build something with the lenses I have, order a custom lens (they are only $12 a foot + the price to cut), or create a parallax barrier. Unfortunately, creating a high quality lens does not seem possible with the materials I have (2 of the 8″ X 10″ sample packs from Microlens), and a parallax barrier blocks more light with every added viewing angle: N views block 1 - 1/N of it (2 views block 50%, 3 block 66%… 10 views block 90%). On Sunday I am going to try a patch that blends interlaced pixels to fix the problem with the lines on the screen not lining up with the lenses (it basically blends interlaces to align a non-integral number of pixels with the lines per inch of the lens).
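One way to think about blending interlaces for a non-integral number of pixels per lens is to work out where each screen column sits under its lens and mix the two nearest views by that fraction. The sketch below is a guess at the idea under simple assumptions (vertical lenses, linear blending, a made-up 40 lines-per-inch lens), not the patch itself.

```python
import math

MM_PER_INCH = 25.4


def pixels_per_lens(lens_lpi, pixel_pitch_mm):
    """How many pixel columns fit under one lens (usually not a whole number)."""
    return (MM_PER_INCH / lens_lpi) / pixel_pitch_mm


def view_weights(column, px_per_lens, num_views):
    """Return [(view_index, weight), (view_index, weight)] for one screen column."""
    phase = (column / px_per_lens) % 1.0   # position under the lens, 0 to 1
    position = phase * num_views           # fractional view index
    base = math.floor(position)
    frac = position - base
    lower = int(base) % num_views
    upper = (lower + 1) % num_views
    return [(lower, 1.0 - frac), (upper, frac)]


def interlace_row(view_rows, px_per_lens):
    """Blend one row from each view into a single interlaced row."""
    num_views = len(view_rows)
    width = len(view_rows[0])
    return [sum(w * view_rows[v][x] for v, w in view_weights(x, px_per_lens, num_views))
            for x in range(width)]


if __name__ == "__main__":
    ratio = pixels_per_lens(lens_lpi=40, pixel_pitch_mm=0.270)  # 40 LPI is hypothetical
    left = [0] * 8      # a black row for view 0
    right = [100] * 8   # a grey row for view 1
    print(round(ratio, 3), interlace_row([left, right], ratio))
```

Blending trades sharpness for alignment: columns that straddle two views show a mix of both, which softens the image but avoids the drifting bands you get from rounding to whole pixels.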

Worst-case scenario… A ready-to-go lenticular monitor is $500, the lens designed to work with a 23″ monitor is $200, and a 23 inch monitor with a pixel pitch of .270 mm is about $130… One way or another, this goose is going to meet the public on 12/07/15…
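For lining a lens or barrier up with a screen, the .270 mm pixel pitch above converts to dots per inch as in this small sketch. The 1920 x 1080 resolution in the second function call is an assumption for comparison only; the post does not state the monitor's resolution.

```python
import math

MM_PER_INCH = 25.4


def dpi_from_pixel_pitch(pitch_mm):
    """Pixels per inch for a given pixel pitch in millimetres."""
    return MM_PER_INCH / pitch_mm


def dpi_from_resolution(width_px, height_px, diagonal_in):
    """Pixels per inch from the resolution and the diagonal size in inches."""
    return math.hypot(width_px, height_px) / diagonal_in


if __name__ == "__main__":
    print(f"0.270 mm pitch: {dpi_from_pixel_pitch(0.270):.2f} DPI")
    # Assumed resolution, for comparison only.
    print(f"23 inch at 1920x1080: {dpi_from_resolution(1920, 1080, 23):.2f} DPI")
```

The two numbers come out close but not identical, which is worth keeping in mind: a small error in DPI adds up across the width of the screen when matching lens lines to pixel columns.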

Links I have found useful are…

Calculate the DPI of a monitor to make a parallax barrier.

Specs of one of the common ACCAD 24″ monitors

http://www.pcworld.com/product/1147344/zr2440w-24-inch-led-lcd-monitor.html

MIT student who made a 24″ lenticular 3D monitor.

http://alumni.media.mit.edu/~mhirsch/byo3d/tutorial/lenticular.html

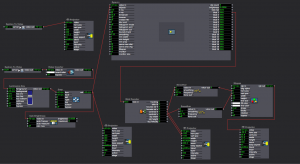

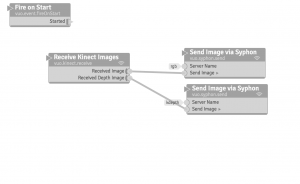

Josh Isadora PP3

Posted: November 6, 2015 Filed under: Josh Poston, Pressure Project 3

For Pressure Project three I used the tracking to trigger a movie on the upstage projection screen when a person entered my assigned section of the space. Here is the patch that I utilized.

Josh Final Presentation 1 Update

Posted: November 6, 2015 Filed under: Josh Poston, Uncategorized

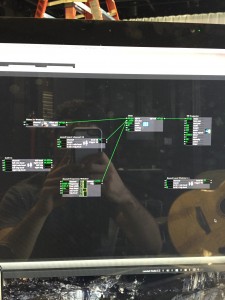

This is my patch, which receives the Kinect data through Syphon into Isadora. There it takes the kdepth data and, using Luminance Key and Gaussian Blur, creates a solid, smoother image. That smoothed image of the person standing in the space is then fed into an Alpha Mask and combined with a video feed, which projects a video within the outline of the body.
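The key, blur, and mask chain described above can be sketched on a tiny grid of numbers. This only illustrates the idea and is not how the Isadora actors are implemented: a simple 3x3 box blur stands in for the Gaussian Blur, and the assumption that the person shows up as the brighter values in the depth image is mine.

```python
def luminance_key(depth, threshold):
    """Turn a depth image into a hard 0/1 mask: 1.0 where the value passes the threshold."""
    return [[1.0 if v >= threshold else 0.0 for v in row] for row in depth]


def box_blur(mask):
    """Soften mask edges by averaging each cell with its neighbours (stand-in for a Gaussian)."""
    h, w = len(mask), len(mask[0])
    out = []
    for y in range(h):
        row = []
        for x in range(w):
            cells = [mask[j][i]
                     for j in range(max(0, y - 1), min(h, y + 2))
                     for i in range(max(0, x - 1), min(w, x + 2))]
            row.append(sum(cells) / len(cells))
        out.append(row)
    return out


def alpha_mask(video, mask):
    """Show the video only where the mask is non-zero, scaled by the mask value."""
    return [[v * m for v, m in zip(vrow, mrow)] for vrow, mrow in zip(video, mask)]


if __name__ == "__main__":
    depth = [[10, 10, 10, 10],
             [10, 200, 200, 10],
             [10, 200, 200, 10],
             [10, 10, 10, 10]]          # invented depth frame with a "body" in the middle
    video = [[255] * 4 for _ in range(4)]  # a plain white video frame
    soft = box_blur(luminance_key(depth, threshold=100))
    for row in alpha_mask(video, soft):
        print([round(v) for v in row])
```

The blur is what makes the outline look less jagged: the mask fades out around the body instead of cutting off at a hard edge.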

Checking In: Final Project Alpha and Comments

Posted: November 4, 2015 Filed under: Connor Wescoat, Isadora

Alpha:

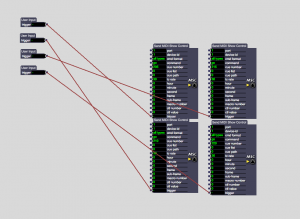

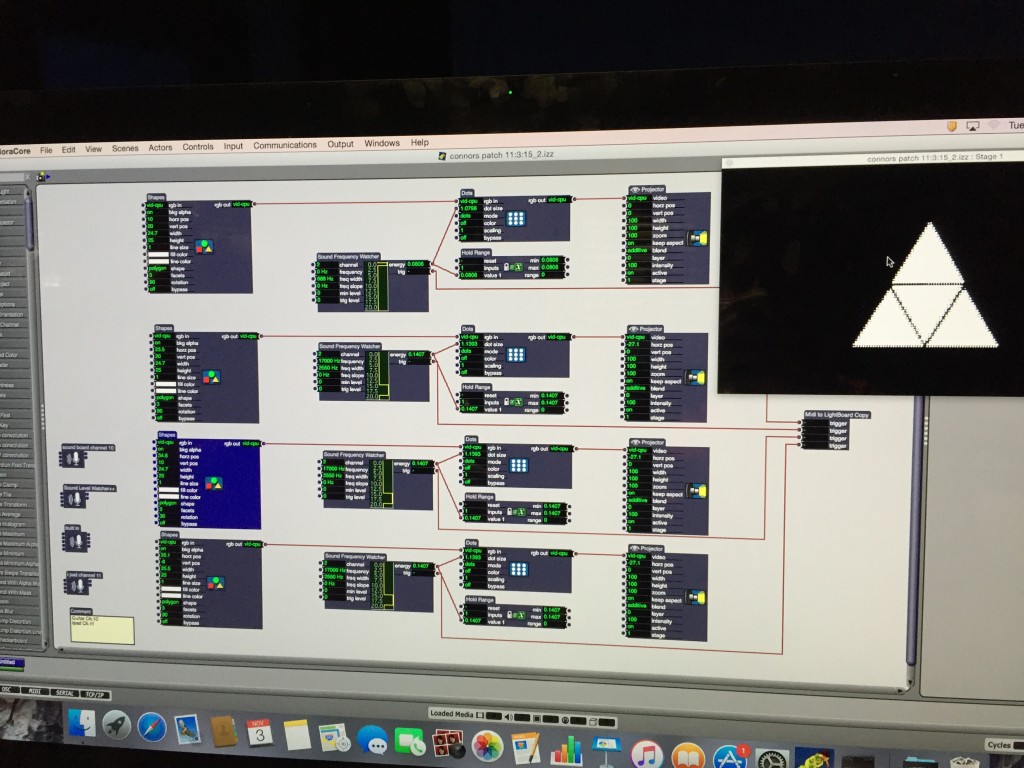

Today marked the first of our cycle presentation days for our final project. While I initially was stressing hard about this day, I was able to, with the help of some very important people, present a very organized “alpha” phase to my final project. While my idea initially took a lot of incubating and planning, I hashed it out and executed the initial connection/visual stage of my performance. Below is a sketch of my ideas for layouts, my beginning patch and my final alpha patch that I demonstrated in class today.

You will see the difference between my first patch and my second.

The small actor off to the right and below the image is a MIDI actor calibrated to the light board and my guitar. Sarah worked with me through this part and did a wonderful job explaining and visualizing this process.

Comments:

Lexi: Great start to your project! You made a really cool virtual spotlight using the Kinect. I know that the software may be giving you some initial troubles but I know you’ll be able to work through it and deliver an awesome final cut that incorporates your dance background. Keep it up!

Anna: I really like what you are doing with the Mic and Video connection. I believe that when it all comes together it will be something that’s really interactive and fun to play around with. I’m already having fun seeing how my voice influences the live capture. Keep it up!

Sarah: Words cannot describe how much you have helped me in this class and I look forward to working with you to not only complete but also OWN this project. I’m sure the final cut of your lighting project is going to be awesome and I look forward to working with you more.

Josh: How you implemented the Kinect with your personal videos is a very cool take on how someone can actually interact with video in a space. I can’t wait to see what else you add to your project!

Jonathan: Dude, your project is practically self-aware! Even if you explained it to me, I would never know how you were able to do that. I look forward to seeing your final project. Keep it up!

Isadora Updates

Posted: November 2, 2015 Filed under: Uncategorized

http://troikatronix.com/isadora-2-1-release-notes/

Mark recently updated Isadora …

Check out the release notes.

It changes the ways that videos are assigned to the stage.