Final Project

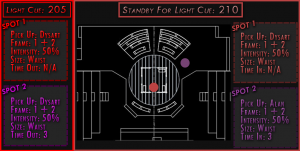

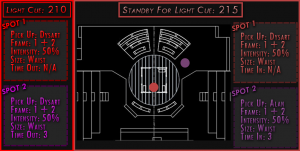

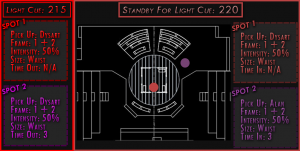

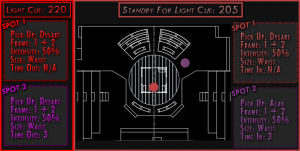

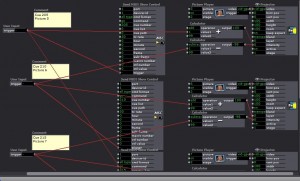

Posted: December 13, 2015 Filed under: Pressure Project 3, Sarah Lawler, Uncategorized

Below is a zip file of my final project along with some screen shots of the patch and images embedded into the index of the patch.

The overall design of this project was based on being an emergency spotlight operator. There was no physical way to read cues on a sheet of paper while operating a spotlight. This read-only system is a prototype. The images are pushed to a tablet sitting in front of the spotlight operator based on the cues in the light board. The spotlight operator can then see, hands-free, which cue they need to be on standby for.

How To Make An Imaginary Friend

Posted: December 13, 2015 Filed under: Anna Brown Massey, Final Project

An audience of six walk into a room. They crowd the door. Observing their attachment to remaining where they’ve arrived, I am concerned the lights are set too dark, indicating they should remain where they are, safe by a wall, when my project will soon require the audience to station themselves within the Kinect sensor zone at the room’s center. I lift the lights in the space, both on them and into the Motion Lab. Alex introduces the concepts behind the course, and welcomes me to introduce myself and my project.

In this prototype, I am interested in extracting my live self from the system so that I may see what interaction develops without verbal directives. I say as much, which means I say little, but worried they are still stuck by the entrance (that happens to host a table of donuts), I say something to the effect of “Come into the space. Get into the living room, and escape the party you think is happening by the kitchen.” Alex says a few more words about self-running systems–perhaps he is concerned I have left them too undirected–and I return to station myself behind the console. Our six audience members, now transformed into participants, walk into the space.

I designed How to make an imaginary friend as a schema to compel audience members, now rendered participants, to respond interactively through touching each other. Having achieved a learned response to the system and gathered themselves in a large single group because of the cumulative audio and visual rewards, the system would independently switch over to a live video feed as output, and their own audio dynamics as input, inspiring them to experiment with dynamic sound.

System as Descriptive Flow Chart

Front projection and Kinect are on, microphone and video camera are set on a podium, and Anna triggers Isadora to start scene.

> Audience enters MOLA

“Enter the action zone” is projected on the upstage giant scrim

> Intrigued and reading a command, they walk through the space until “Enter the action zone” disappears, indicating they have entered.

Further indication of being where there is opportunity for an event is the appearance of each individual’s outline of their body projected on the blank scrim. Within the contours of their projected self appears that slice of a movie, unmasked within their body’s appearance on the scrim when they are standing within the action zone.

> Inspired to see more of the movie, they realize that if they touch or overlap their bodies in space, they will be able to see more of the movie.

In attempts at overlapping, they touch, and Isadora reads a defined bigger blob, and sets off a sound.

> Intrigued by this sound trigger, more people overlap or touch simultaneously, triggering more sounds.

> They sense that as the group grows, different sounds will come out, and they continue to connect more.

Reaching the greatest size possible, the screen is suddenly triggered away from the projected appearance, and instead projects a live video feed.

They exclaim audibly, and notice that the live feed video projection changes.

> The louder they are, the more it zooms into a projection of themselves.

They come to a natural ending of seeing the next point of attention, and I respond to any questions.
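The last step of the flow, where louder sound zooms further into the live feed, can be sketched as a clamped linear mapping. This is a minimal illustration in Python, not the Isadora patch itself: the function name and all four range values (`quiet`, `loud`, `min_zoom`, `max_zoom`) are assumptions chosen for the example.

```python
def loudness_to_zoom(level, quiet=0.05, loud=0.8, min_zoom=100.0, max_zoom=300.0):
    """Map a microphone level (0.0 to 1.0) to a zoom percentage.

    The range parameters are illustrative guesses, not values from the patch.
    """
    if loud <= quiet:
        raise ValueError("loud must be greater than quiet")
    # Normalise the level across the quiet-to-loud range, clamping outside it.
    t = (level - quiet) / (loud - quiet)
    t = max(0.0, min(1.0, t))
    # Linear interpolation between the widest and the tightest framing.
    return min_zoom + t * (max_zoom - min_zoom)
```

Clamping keeps room noise below `quiet` from nudging the zoom, and stops a shout above `loud` from zooming past the chosen maximum.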

Analysis of Prototype Experiment 12/11/15

As I worked over the last number of weeks I recognized that shaping an experiential media system renders the designer a Director of Audience. I newly acknowledged once the participants were in the MOLA and responding to my design, I had become a Catalyzer of Decisions. And I had a social agenda.

There is something about the simple phrase “I want people to touch each other nicely” that seems correct, but also sounds reductive–and creepily problematic. I sought to trigger people to move and even touch strangers without verbal or text direction. My system worked in this capacity. I achieved my main goal, but the cumulative triggers and experiences were limited by an all-MOLA sound system failure after the first few minutes. The triggered output of sound-as-reward-for-touch worked only for the first few minutes, and then the participants were left with a what-next sensibility sans sound. Without a working sound system, the only feedback was the further discovery of unmasking chunks of the film.

Because my further triggers were unavailable, I got up and joined them. We talked as we moved, and that itself interested me–they wanted to continue to experiment with their avatars on screen despite a lack of audio trigger and likely a growing sense that they may have run out of triggers. Had the masked movie been more engaging (this one was of a looped train rounding a station), they might have been further engaged even without the audio triggers. In developing this work, I had considered designing a system in which there was no audio output, but instead the movement of the participants would trigger changes in the film–to fast forward, stop, alter the image. This might be a later project, and would be based in the Kinect patch and dimension data. Further questions arise: What does a human body do in response to their projected self? What is the poetic nature of space? How does the nature of looking at a screen affect the experience and action of touch?

Plans for Following Iteration

- “Action zone” text: need to dial down sensitivity so that it appears only when all objects are outside of the Kinect sensor area.

- Not have the sound system for the MOLA fail, or if this happens again, pause the action, set up a stopgap of a set of portable speakers to attach to the laptop running Isadora.

- Have a group of people with which to experiment to more closely set the dimensions of the “objects” so that the data of their touch sets off a more precisely linked sound.

- Imagine a different movie and related sound score.

- Consider an opening/continuous soundtrack “background” as scene-setting material.

- Consider the integrative relationship between the two “scenes”: create a satisfying narrative relating the projected film/touch experience to the shift to the audio input into projected screen.

- Relocate the podium with microphone and videocamera to the center front of the action zone.

- Examine why the larger dimension of the group did not fire the trigger in the user actor that switches to the microphone input and live video feed output.

- Consider: what was the impetus relationship between the audio output and the projected images? Did participants touch and overlap out of desire to see their bodies unmask the film, or were they relating to the sound trigger that followed their movement? Should these two triggers be isolated, or integrated in a greater narrative?

Video available here: https://vimeo.com/abmassey/imaginaryfriend

Password: imaginary

All photos above by Alex Oliszewski.

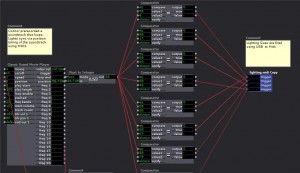

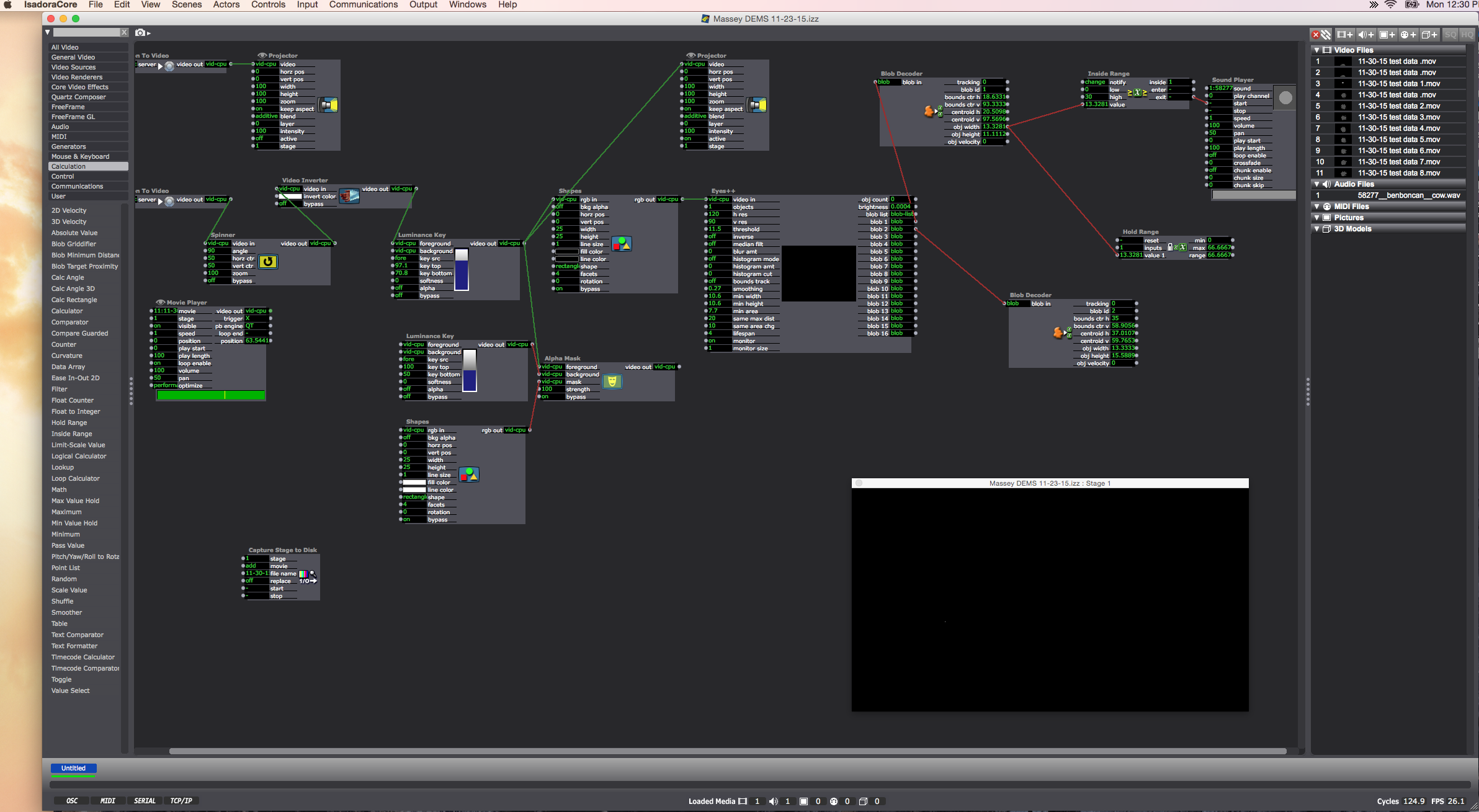

Software Screen Shots

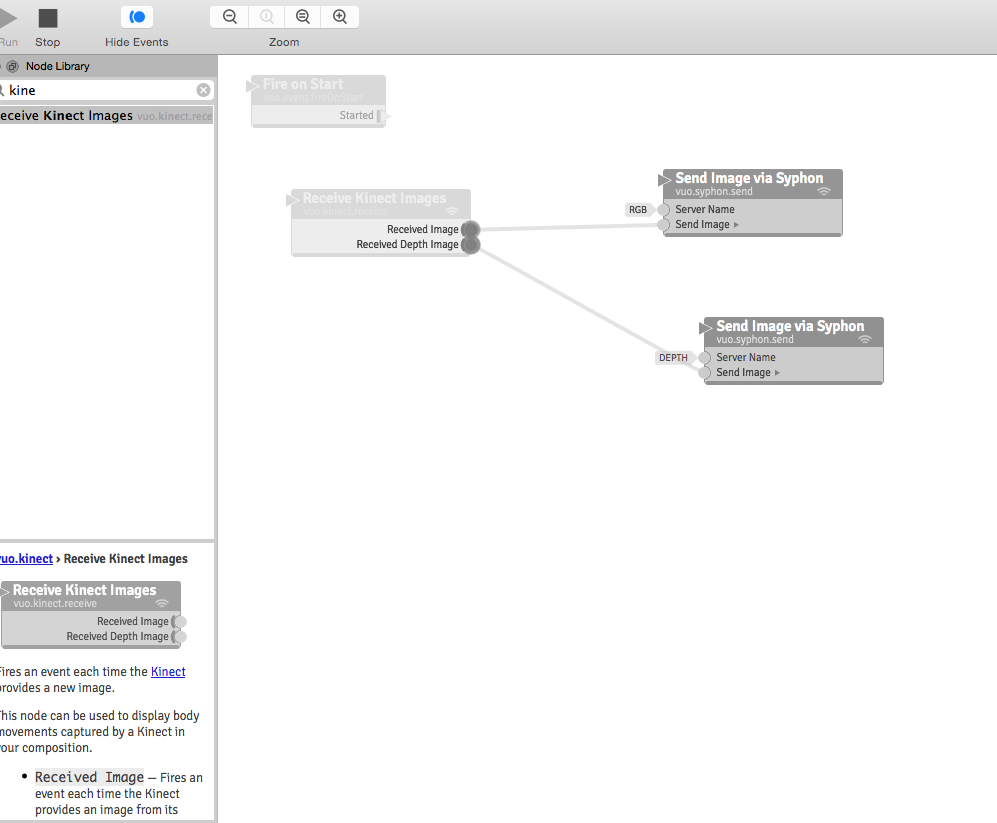

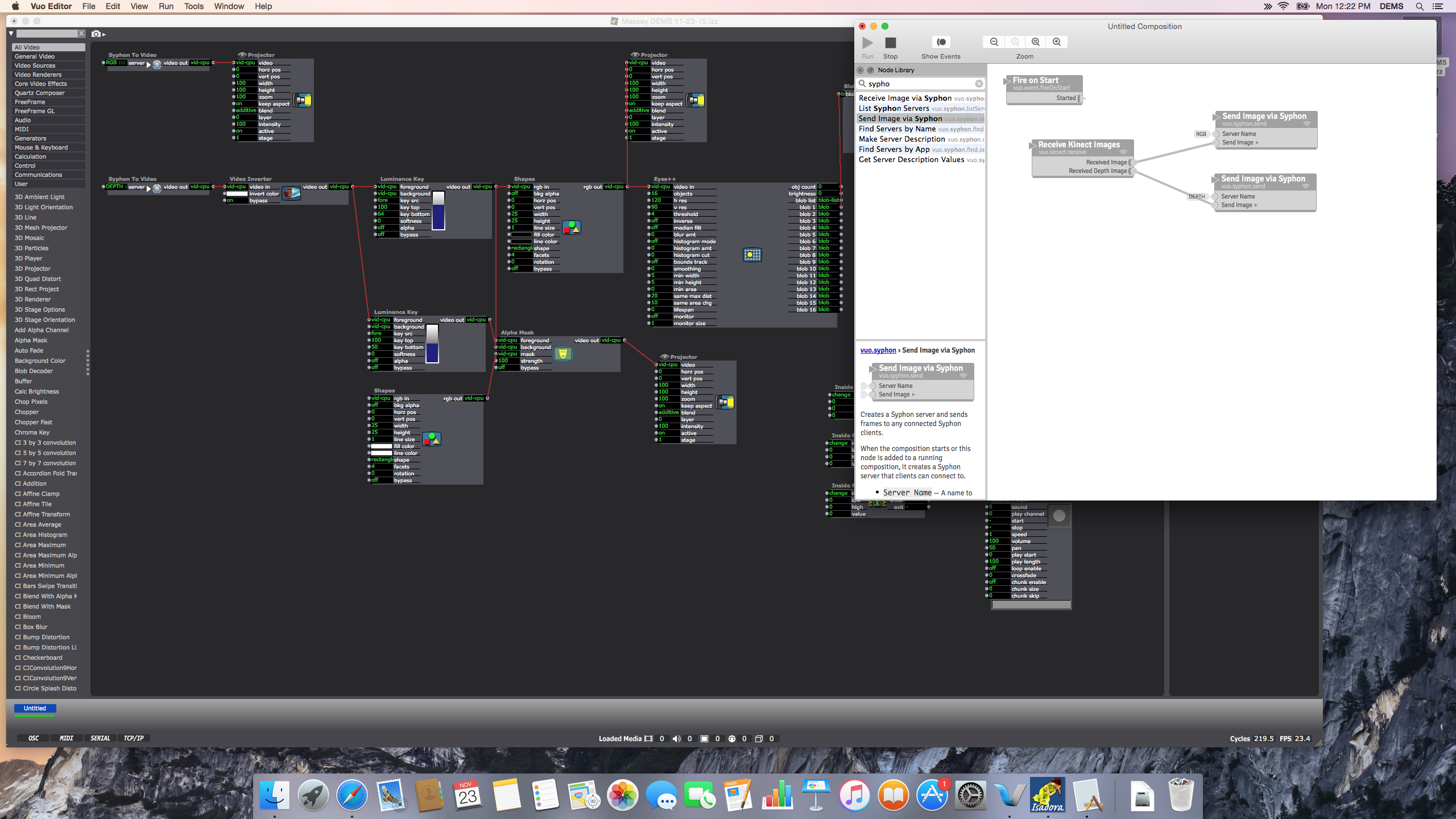

Vuo (Demo) Software connecting the Kinect through Syphon so that Isadora may intake the Kinect data through a Syphon actor:

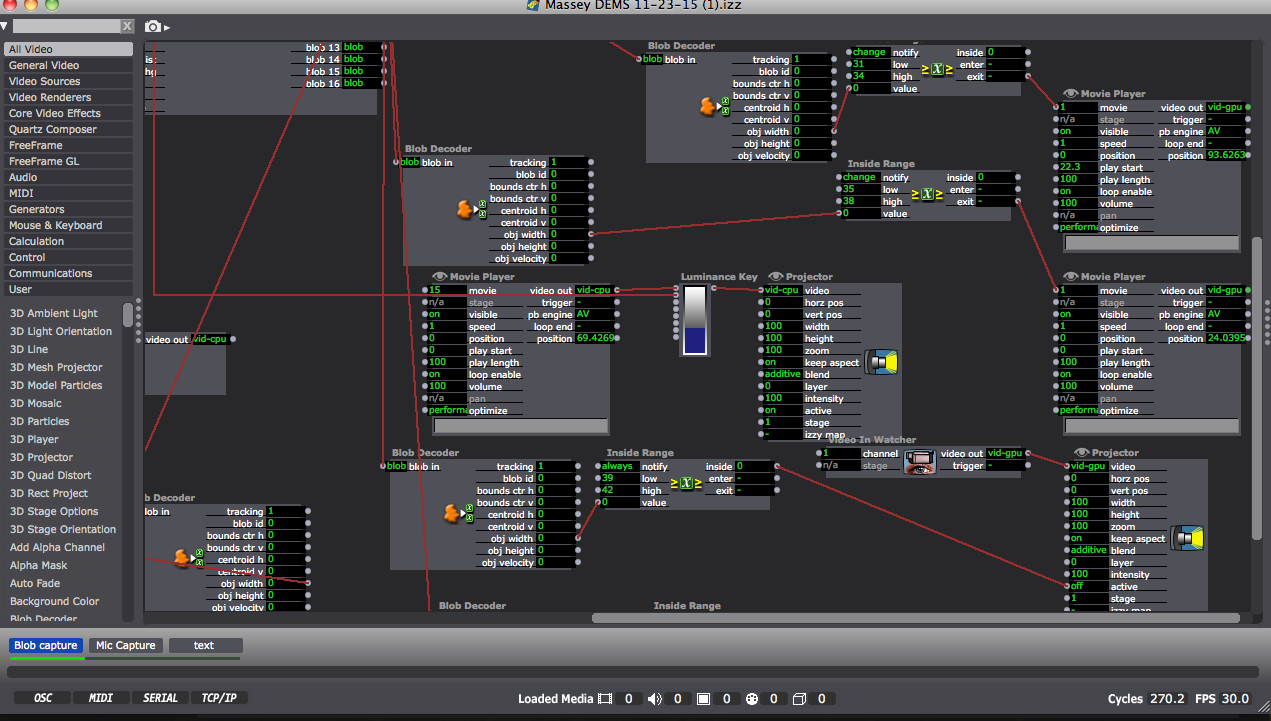

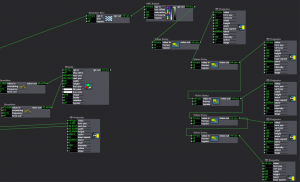

Partial shot of the Isadora patch:

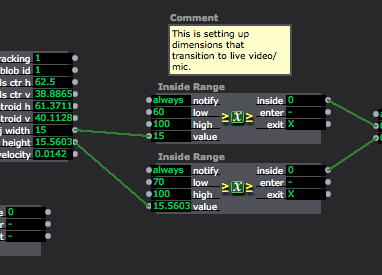

Close up of “blob” data or object dimensions coming through on the left, mediated through the Inside range actors as a way of triggering the Audio scene only when the blobs (participants) reach a certain size by joining their bodies:

Final Project Showing

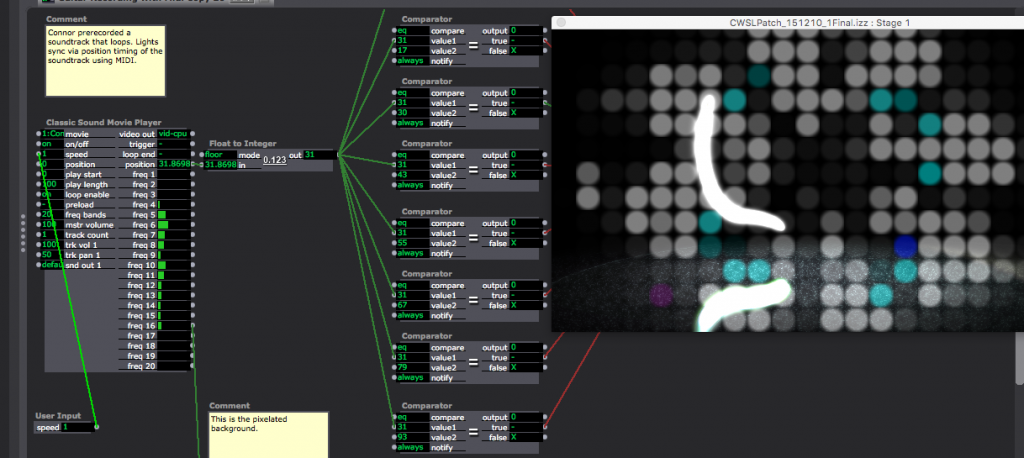

Posted: December 12, 2015 Filed under: Connor Wescoat, Final Project

I have done a lot of final presentations during the course of my college career but never one quite like this. Although the theme of the event was “soundboard mishaps”, I was still relatively satisfied with the way everything turned out. The big takeaway that I got from my presentation was that some people are intimidated when put on the spot. I truly believe that any one of the audience could have come up with something creative if they had 10 minutes to themselves with my showing to just play around with it. While I did not foresee the result, I was satisfied that one of the 6 members actually got into the project and I could visually see that he was having fun. In conclusion, I enjoyed the event and the class. I met a lot of great people and I look forward to seeing/working with any one of my classmates in the future. Thank you for a great class experience! Attached are screen shots of the patch. The top is the high/mid/low frequency analysis while the bottom is the prerecorded guitar piece that is synced up to the lights and background.

Documentation Pictures From Alex

Posted: December 12, 2015 Filed under: Uncategorized

Hi all,

You can find copies of the pictures I took from the public showing here:

https://osu.box.com/s/ff86n30b1rh9716kpfkf6lpvdkl821ks

Please let me know if you have any problems accessing them.

-Alex

Final Project Patch Update

Posted: December 2, 2015 Filed under: Alexandra Stilianos, Final Project

Last week I attempted to use the video delays to create a shadow trail of the projected light in space and connect them to one projector. I plugged all the video delay actors into each other and then into one projector, which caused them to be associated with only one image. Then, when I separated the video delays and gave each its own projector and its own delay time, the effect was successful.
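The difference between chaining the delays and running them side by side can be sketched outside Isadora. The Python below is only an illustration under assumptions: `FrameDelay` and `trail` are hypothetical names, frames are stand-in values, and delays are counted in frames rather than seconds. Each delay receives the same source and feeds its own output layer, which is what produces several trailing copies instead of one.

```python
from collections import deque


class FrameDelay:
    """Hold frames back by a fixed number of steps (a stand-in for a video delay)."""

    def __init__(self, delay_frames):
        # Pre-fill with None so nothing is output until the delay has elapsed.
        self.buffer = deque([None] * delay_frames)

    def push(self, frame):
        # Store the newest frame and release the oldest one.
        self.buffer.append(frame)
        return self.buffer.popleft()


def trail(frames, delays):
    """Feed one source into several independent delays, one output layer each."""
    lines = [FrameDelay(d) for d in delays]
    composite = []
    for frame in frames:
        layers = [frame] + [line.push(frame) for line in lines]
        # Drop layers whose delay has not produced a frame yet.
        composite.append([layer for layer in layers if layer is not None])
    return composite
```

For example, `trail([1, 2, 3, 4], [1, 2])` ends with the layers `[4, 3, 2]`: the live frame plus two progressively older copies, which is the shadow trail.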

Today (Wednesday) in class, we adjusted the Kinect and the Projector to be aiming straight towards the ground so the reflection caused by the floor from the infrared lights in the Kinect would no longer be a distraction.

I also raised the depth that the Kinect can read to a person’s shins and up, so when you are below that area the projection no longer appears. For example, a dancer can take a few steps, then roll to the ground and end in a ball, and the projection will follow and slowly disappear, even with the dancer still within range of the Kinect/projector. They are just below the readable area.
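The shin-height cutoff can be pictured as a simple mask over the depth data. This is a sketch under assumptions, not the actual patch: it supposes the depth image has already been converted to height above the floor in metres, and the 0.4 m cutoff and both function names are made up for the example.

```python
def mask_below_height(heights, min_height):
    """Zero out every sample lower than min_height so it is not projected."""
    return [[h if h >= min_height else 0.0 for h in row] for row in heights]


def is_visible(heights, min_height):
    """Report whether any part of the body is still above the cutoff."""
    masked = mask_below_height(heights, min_height)
    return any(value > 0.0 for row in masked for value in row)


# Hypothetical cutoff at roughly shin height, in metres above the floor.
SHIN_HEIGHT = 0.4
standing = [[0.0, 1.7], [0.9, 0.0]]
curled_up = [[0.0, 0.3], [0.35, 0.0]]
```

With these sample grids, `is_visible(standing, SHIN_HEIGHT)` is true and `is_visible(curled_up, SHIN_HEIGHT)` is false, matching the dancer who rolls into a ball and drops out of the readable area.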

Moo00oo

Posted: November 30, 2015 Filed under: Anna Brown Massey, Final Project

Present: Today was a day where the Vuo and Isadora patches communicated with the Kinect line-in. Alex suggested it would be helpful to record live movement in the space as a way of later manipulating the settings, but after a solid 30 minutes of turning luminescence up and down and trying to eliminate the noise of the floor’s center reflection, we made a time-based decision that the amount of time I hoped to save by having a recording off of which I could work outside of the Motion Lab would be less than the amount of time it would take to establish a workable video. I moved on.

Oded kindly spiked the floor to demarcate the space which the (center ceiling) Kinect captures. This space turns out to be oblong: deeper upstage to downstage than it is wide right to left. The depth data of “blobs” (objects or people in space, whatever is present) is taken in through Isadora (first via Vuo as a way of mediating Syphon). I had Alex/Oded walk around in the space so that I could examine how Isadora measures their height and width, and enlisted an Inside Range actor to set the output so that whenever the width data rises above a certain number, it triggers … a mooing sound.

Which means: when people are moving within the demarcated space, if/when they touch each other, a “Mooo” erupts. It turns out it’s pretty funny. This also happens when people spread their arms wide in the space, as my data output allows for that size.
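The width-based trigger can be sketched in a few lines. This Python class is loosely modelled on the behaviour described above and is not Isadora's implementation; the class name, the range values, and the sample widths are assumptions. It fires once when the blob width enters the range, rather than on every frame it stays inside.

```python
class InsideRangeTrigger:
    """Fire once each time a value enters the range [low, high]."""

    def __init__(self, low, high):
        self.low = low
        self.high = high
        self.inside = False

    def update(self, value):
        # True only on the frame where the value crosses into the range.
        now_inside = self.low <= value <= self.high
        fired = now_inside and not self.inside
        self.inside = now_inside
        return fired


# Hypothetical blob widths: two people apart, touching, apart, touching again.
moo = InsideRangeTrigger(low=60, high=200)
fired = [moo.update(w) for w in [30, 35, 70, 75, 40, 80]]
```

Because the range only checks width, a single person spreading their arms wide enough would fire it too, which is the side effect noted above.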

Future:

Designing the initial user-experience responsive to the data output I can now control: how to form the audience entrance into the space, and to create a projected film that guides them to – do partner dancing? A barn-cowboy theme? In other words, how do I get my participants to touch, and to separate while staying within the dimensions of the Kinect sensor, and to touch by connecting right-to-left in the space (rather than aligning themselves upstage-downstage)? Integrating the sound output of a cow lowing, and having a narrow and long space, is getting me thinking about line dancing …

Blocked at every turn

Posted: November 30, 2015 Filed under: Anna Brown Massey, Final Project

- Renamed the server in Vuo (to “depth anna”) and noted that the Syphon to Video actor in Isadora allowed that option to come up.

- Connected the Kinect with which Lexi was successfully working to my computer, and connected my Kinect line to hers. Both worked.

This was one of those days where I started to feel I was running out of options:

UPDATE 11-30-15: Alex returned to run the patches from the MOLA preceding our before-class meeting. He had “good news and bad news:” both worked. We’re going to review these later this week to see where my troubleshooting could have brought me final success.

Cycle… What is this, 3?… Cycle 3D! Autostereoscopy Lenticular Monitor and Interlacing

Posted: November 24, 2015 Filed under: Jonathan Welch, Uncategorized | Tags: Jonathan Welch

One 23″ glasses-free/3D lenticular monitor. I get up to about 13 images at a spread of about 10 to 15 degrees, and a “sweet spot” 2 to 5 or 10 feet away (depending on the number of images); the background blurs with more images (this is 7). The head tracking and animation are not running for this demo (the interlacing is radically different from what I was doing with 3 images, and I have not written the patch or made the changes to the animation). The poor contrast is an artifact of the terrible camera; the brightness and contrast are normal, but the resolution on the horizontal axis diminishes with additional images. I still have a few bugs. Honestly, I hoped the lens would be different; this one does not seem to be designed specifically for a monitor with a pixel pitch of .265 mm (with a slight adjustment to the interlacing, it works just as well on the 24 inch with a pixel pitch of .27 mm). But it works, and it will do what I need.
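Why horizontal resolution drops as images are added can be seen in a simplified interlacing sketch. This is an assumption-laden illustration, not the patch used here: it interleaves whole pixel columns, whereas interlacing for a real lenticular sheet usually has to account for lens pitch, and often works at the subpixel level. The function name is hypothetical.

```python
def interlace_columns(views):
    """Interleave N equally sized views column by column.

    Each view is a list of rows; output column x is taken from view x % N,
    so every view keeps only about 1/N of its columns.
    """
    if not views:
        raise ValueError("need at least one view")
    n = len(views)
    height = len(views[0])
    width = len(views[0][0])
    for view in views:
        if len(view) != height or any(len(row) != width for row in view):
            raise ValueError("all views must share the same size")
    return [[views[x % n][y][x] for x in range(width)] for y in range(height)]


# Three tiny one-row "images", each filled with its own label.
views = [[["A"] * 6], [["B"] * 6], [["C"] * 6]]
interlaced = interlace_columns(views)
```

Here `interlaced` is a single row reading A, B, C, A, B, C: each view contributes two of the six columns, and with 7 views each would contribute roughly a seventh.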

better, stronger, faster, goosier

No you are not being paranoid, that goose with a tuba is watching you…

So far… It does head tracking and adjusts the interlacing to keep the viewer in the “sweet spot” (like a Nintendo 3DS, but it is much harder when the viewer is farther away, and the eyes are only 1/2 to 1/10th of a degree apart). The goose recognizes a viewer, greets them, and follows their position… There is also recognition of sound, number of viewers, speed of motion, leaving, and over volume vs talking, but I have not written the animations for the reaction to each scenario, so it just looks at you as you move around. And the background is from the camera above the monitor. I had 3, so it would be in 3D and have parallax, but it was more than the computer could handle, so I just made a slightly blurry background several feet back from the monitor. But it still has a live feed, so…

Ideation 2 of Final Project- Update

Posted: November 20, 2015 Filed under: Alexandra Stilianos, Pressure Project 3

A collaboration has emerged between Sarah, Connor and myself where we will be interacting/manipulating various parts of the lighting system in the MoLa. My contribution is a system that will listen to sounds of a tap dancer on a small piece of wood (a tap board) and affect the colors of specific lights projected on the floor on or near the board.

Connection from microphone to computer/Isadora:

Microphone (H4) –> XLR cord –> Zoom Mic –> Mac

The zoom mic acts as a sound card via a USB connection. It needs to be on Channel 1, USB transmit mode, and audio IF.

Our current question is whether we can control individual lights in the grid through this system. My next step is playing with the sensitivity of the microphone so the lights don’t turn on and off out of control or pick up sounds away from the board.
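One common way to keep a sound-driven light from flickering is a gate with two thresholds (hysteresis): a higher level to switch on and a lower one to switch off. The Python below is a sketch of that general technique, not something from the current patch; the class name and the 0.6 / 0.2 levels are assumptions for illustration.

```python
class HysteresisGate:
    """Switch on above on_level and off only below the lower off_level."""

    def __init__(self, on_level, off_level):
        if off_level >= on_level:
            raise ValueError("off_level must be below on_level")
        self.on_level = on_level
        self.off_level = off_level
        self.is_on = False

    def update(self, level):
        # Levels between the two thresholds leave the current state unchanged.
        if not self.is_on and level >= self.on_level:
            self.is_on = True
        elif self.is_on and level <= self.off_level:
            self.is_on = False
        return self.is_on


# Hypothetical microphone levels: room noise, one tap, decay, then more noise.
gate = HysteresisGate(on_level=0.6, off_level=0.2)
states = [gate.update(v) for v in [0.1, 0.5, 0.7, 0.5, 0.25, 0.1, 0.5]]
```

In this run the 0.5 readings never turn the light on by themselves, and the light stays on while the tap decays, so quieter sounds away from the board are ignored.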

Dominoes

Posted: November 19, 2015 Filed under: Anna Brown Massey, Final Project

I am facing the design question of how to indicate to the audience the “rules” of “using” the “design” i.e. how to set up a system of experiencing the media, i.e. how to get them to do what I want them to do, i.e. how to create a setting in which my audience makes independent discoveries. Because I am interested in my audience creating audio as an input that generates an “interesting” (okay okay, I’m done with the quotation marks starting… “now”) experience, I jotted down a brainstorm of methods of getting them to make sounds and to touch preceding my work this week.

We use “triggers” in our Isadora actors, which is a useful term for considering the audience: how do I trigger the audience to do a certain action that consequently triggers my camera vision or audio capture to receive that information and trigger an output that thus triggers within the audience a certain response?

Voice “from above”

- instructions

- greeting

- hello

- follow along – movement karaoke

- video of movement

- → what trigger?

- learn a popular movement dance?

- dougie, electric slide, salsa

- markings on floor?

- follow along touch someone in the room

- switch to kinect – Lexi’s patch as basis to build if kinesphere is bigger then creates output

- what?

- every time people touch for sustained length it creates

- audio? crash?

- lights?

- how does this affect how people touch each other

- what needs to happen before they touch each other so that they do it

- follow along karaoke

- sing without the background sound

- Maybe with just the lead-in bars they will start singing even without music behind
