Cycle 1

Posted: May 7, 2026 Filed under: Uncategorized

Themes

- Exploration

- Resource exploitation

- Free play

Resources

- TouchDesigner

- MacBook Pro (M1)

- Mac Studio

- Motion lab

- Circular rug

- Orbbec femto

- Robo cam

- MediaPipe

Score

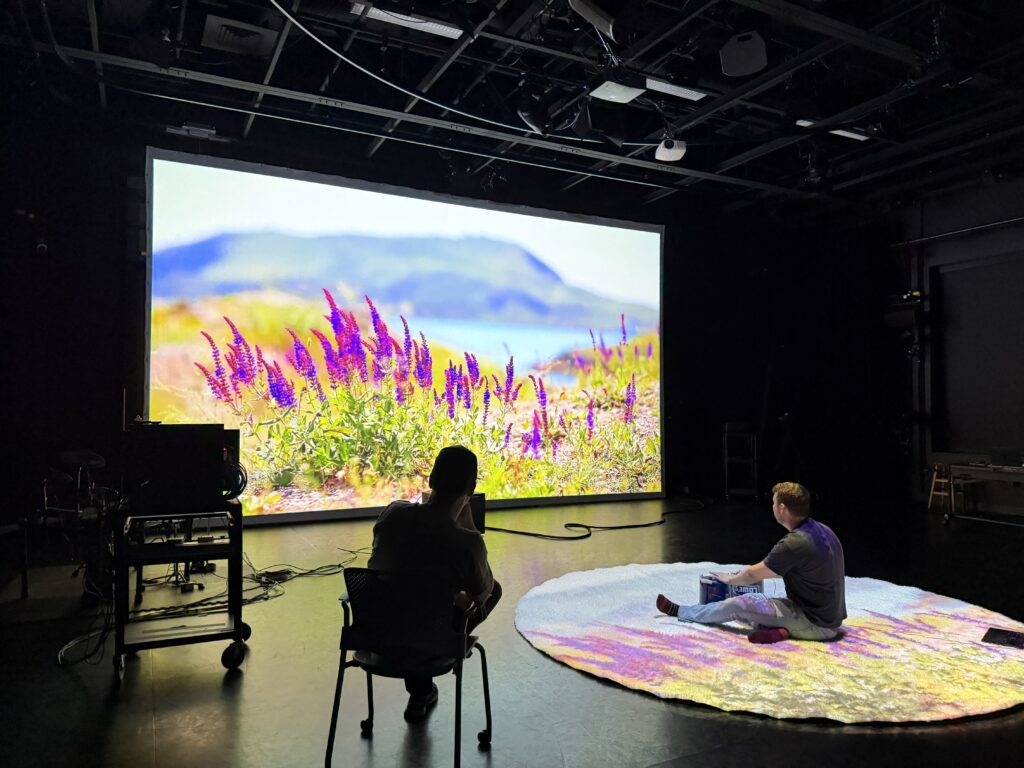

Audience members walk into the space to see a white rug with a psychedelic animation.

Stepping onto the rug alters variables on the noise CHOPs. There are 8 zones that change the values of different parameters.
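As a rough illustration of the zone idea, here is a minimal Python sketch that maps a position on a circular rug to one of 8 zones. The post only says there are 8 zones; the layout as equal angular sectors around the rug's center, and the names `zone_for_position` and `NUM_ZONES`, are assumptions made for the example rather than a description of the actual patch.

```python
import math

NUM_ZONES = 8  # the post mentions 8 zones; equal pie-slice sectors are an assumption


def zone_for_position(x: float, z: float, num_zones: int = NUM_ZONES) -> int:
    """Return a zone index (0..num_zones-1) for a floor position.

    x and z are measured from the center of the rug (hypothetical coordinates).
    """
    angle = math.atan2(z, x) % (2 * math.pi)  # wrap the angle into 0..2*pi
    sector = 2 * math.pi / num_zones          # angular width of one zone
    return int(angle // sector) % num_zones   # final modulo guards the 2*pi edge case
```

Each zone index could then select which noise parameter to change, for example through a lookup table from zone to parameter name.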

When a participant steps onto the rug, they are captured by the depth sensor. A point cloud generated from this feed runs through a composite TOP set to ‘over’ blend mode. The point cloud is composited with the motion-tracking fluid simulation and projected onto the front screen. The point cloud spins around the y-axis using an ‘absTime.seconds’ expression.
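The time-driven spin can be sketched outside TouchDesigner as plain Python. Inside the patch, an expression along the lines of `absTime.seconds * 20` in a rotate-Y parameter would have the same effect; the speed of 20 degrees per second and both function names below are assumptions for illustration only.

```python
import math


def y_rotation_degrees(seconds: float, degrees_per_second: float = 20.0) -> float:
    """Angle that grows linearly with time and wraps around at 360 degrees."""
    return (seconds * degrees_per_second) % 360.0


def rotate_about_y(point, degrees):
    """Rotate an (x, y, z) point about the y-axis; the y value is unchanged."""
    x, y, z = point
    a = math.radians(degrees)
    return (
        x * math.cos(a) + z * math.sin(a),
        y,
        -x * math.sin(a) + z * math.cos(a),
    )
```

Applying `rotate_about_y` to every point with the current `y_rotation_degrees` value gives a cloud that turns steadily without any keyframes.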

Valuation

For Cycle 1 my goal was to incorporate some new elements from the motion lab that I had never previously used with some reclaimed elements from prior projects and see how people engaged with it.

Performance

While waiting for people to return from the bio-break before my performance, Michael asked if he could play some Pink Floyd. I agreed, and as the music began to swell I realized how much of a void the silence would leave when we cut the music. I tried to signal to Michael that I wanted him to keep it rolling. The soundtrack made the experience feel much more full and ethereal.

The entire experience was heavily improvised, and I attempted to demonstrate the system affordances silently by modeling the interactions. I was also able to manually manipulate the patch to make synchronized transitions between songs on the fly. I actually really enjoyed this aspect though it was not planned from the beginning. This was a seed that I would nurture in future iterations.

The interaction with the rug was not obvious, mainly because I was using spatial positioning to change random parameters in the noise on a video that was already heavily driven by noise. The changes were too subtle and disconnected from the precipitating action to feel meaningful or intentional. However, there’s something to be said for that. It’s not necessarily something that I would do again, but it was just noticeable enough that people spent time trying to figure out exactly what was happening and whether they had any impact on it.

I failed to account for the low light when incorporating the MediaPipe-based fluid simulation. During the performance I tried to use several lights to illuminate my hands for the camera. I was able to get some outputs, and enough to intrigue participants and encourage them to attempt to replicate the behavior.

I also failed to account for MediaPipe’s default tracking behavior. The first target the operator locks onto has their hands labeled h1 and h2, and the patch uses these to draw the fluid onto the screen. This was frustrating because it was impossible to determine who was in control of the fluid simulation at any given time.

Overall the reception of this cycle was positive. People enjoyed trying to figure out what was responsive and how the controls operated.

The rotating particle clouds were also well received, though they went unnoticed for most of the performance. With people in the room and amidst all the commotion, it became obvious that they were far too small, which was an easy fix.

These were good lessons that I could incorporate into future builds.

Pressure Project 3

Posted: May 7, 2026 Filed under: Uncategorized

Storytelling through sound

Themes

- Decisions

- False Dichotomies

- Search for meaning

- Pretense

I wanted to explore the idea that we spend a tremendous amount of time seeking meaning out in the world, when what matters most often happens closest to home. This value in combination with the shared context of the assignment provided the initial framework for the experience. With this in place I worked out my score fairly quickly. The experience would present two options where audience members could try to pick apart the meaning of the story being told (which would be devoid of any direct meaning).

My experience began in the ACCAD Hallway. My introduction to the experience was tightly scripted and carefully crafted to avoid any explicit imperative instructions:

“Welcome home. You may choose to go through the door to the motion lab. You may choose to go through the door to the classroom. Each space contains a different experience.”

During the performance, one classmate asked if they could go between rooms. I hadn’t anticipated this question, which was a fairly obvious one in hindsight. I replied that they were free to make their own decisions.

Each room had a nonsensical musical composition that I had created. I like to run through a process with music where I start with a dumb idea and pour effort into it. Aside from being a fun exercise, it allows me to compose music without the stress of trying to make meaning. I developed this practice after becoming disenchanted with trying to make meaningful music or music with a message. I make music for fun, but I would cringe years later when I’d listen to a younger iteration of myself trying to be profound or expressive or whatever it was that I was trying to accomplish. The author had died and I was making up my own meaning when listening to it anyway, so I reasoned that this approach was just as good as any other I was trying out. And oddly, because my effort shifted to the construction and away from being self-conscious or self-involved, I enjoyed listening to the songs more. How could I not, when it gave us such tracks as the pandemic-era Appletini with my Homeboys? (I actually would have preferred to use this song over the second song below, but there is an actual story to be derived from it, and I wanted to use tracks that were completely devoid of meaning to capture the spirit of this project.)

Fue is a song that I recorded on my iPhone years ago as a joke for a close friend I met in a college French class. The lyrics are:

Du beur, du beur

Le voyaguer

Du beur, du beur

Le voyaguer

Du beur, du beur

Ma petite ami

Du beur, du beur

Avec moi aujourd’hui

(X2)

Du bœuf

Du bœuf

Du bœuf

Vous ne voulez pas ce bœuf

Which translates more or less as follows (with what is probably very poor grammar; I’m not that good at French):

Some butter, some butter

The voyager

Some butter, some butter

The voyager

Some butter, some butter

My girlfriend

Some butter, some butter

With me today

(X2)

Some beef

Some beef

Some beef

You don’t want this beef (formal pronoun)

It’s stupid. I love it.

Here, listen.

The second song was called Pareidolia (A Confession 4/4). It was originally constructed using vocal samples in 2021. I spent several hours editing this one for Pressure Project 3 just to change the song’s structure a bit. There’s nothing particularly interesting in this song. When I made it I was trying to evoke the idea of someone trying really hard to make something overly artistic, deep, and meaningful (basically taking a jab at myself as a teenager). It’s not a particularly good listen.

The main point of this story is that while you’re out trying to milk meaning from the world, you’re missing the real story. As soon as I had given the instructions, I began to pull up a video of my daughter and me doing bedtime stories. It was important to me that I didn’t “cheat” and wait for people to leave before pulling it up. If someone had lingered long enough, they might have heard the sound and stuck around.

During the reflection period I refused to replay the story time file because that’s the point: you missed it. Nobody in the class actually heard the story; however, several people walking through the halls did. This actually made me more uncomfortable than expected. I had considered the high probability that people without the context of the class would walk by during the performance and saw this as a major plus, as reinforcing the point that I was trying to make. While you are missing the story, other people to whom the story means nothing are likely catching glimpses. While I had anticipated this and liked the prospect in theory, the actual experience of letting this play out while other students walked by was intensely uncomfortable for me. I don’t like to let my phone play audio where others can hear it and potentially be disturbed by it, but I had made a commitment to let the story run for its full length without interruption.

I also recognized during the reflection discussion that while the idea behind this project was to kind of deride my own self-indulgent and pretentious pursuits over more meaningful even if more mundane experiences, this conceit itself was very self-indulgent and pretentious. It also felt a little preachy. I did not like the experience of denying people the opportunity to listen to the bedtime story (opportunity feels like a strong word here), but it felt conceptually important for the point I was attempting to make.

For the first time I will reveal that the story in question was in fact The Little Engine that Could, for what it’s worth.

Pressure Project 2

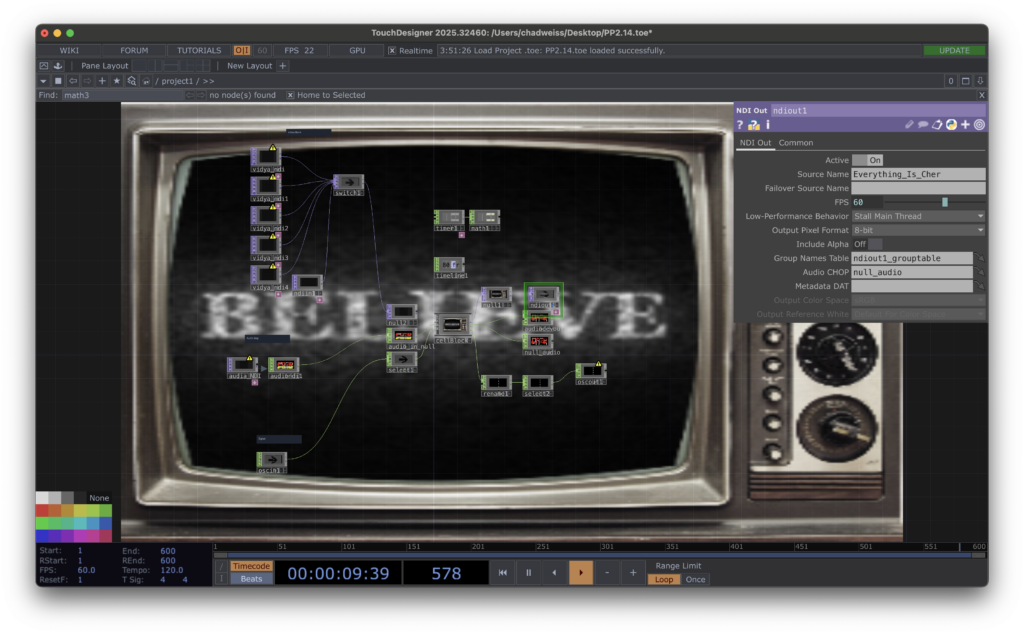

Posted: May 7, 2026 Filed under: Uncategorized

The Networked patch: Everything is Cher

- TouchDesigner

- Cher Believe Midi File

- Dial CRT TV image

- Network

I wanted to play with nostalgia for this project. I chose to use a retro television as the backdrop for my patch. The In TOP would take a video feed and apply a noise overlay and a lens distortion to fit it to the CRT screen.

I also created a switch to change channels, selecting from NDI-in TOPs.

The audio portion would take any audio NDI input and play it through a Logic Pro file that would use the audio as a carrier channel for a vocoder to reshape every sound to match pitch with Cher’s Believe.

Finally, for my control portion, the OSC In feed would run through a Rename CHOP and a Reorder CHOP to switch between several filters applied to the video content and change the channel on the TV.

On the output NDI I provided a video of the patch running and the processed audio (i.e., the shared audio-in feed after it had been Cher’d). I also provided the current TV channel parameter as a number between 0 and 5 over OSC out.

I used the IN CHOPs and TOPs in my patch with default values to ensure that the patch ran on its own and interacted with others.

I tried to include things that reminded me of my youth. Things that I haven’t heard or seen in years, but when I did they took me right back to a nostalgic past. I didn’t get too crazy with complexity, and tried to keep it simple, self-contained, and cohesive.

In my initial imagining of this project, external users would be able to feed their content through my patch and see their content displayed on the screen, but that’s not the way it worked. They could take my screen with whatever content it was playing and incorporate it into their own patches. Likewise they could incorporate whatever audio I was taking in and hear how it sounded as Cher’s Believe.

There were a number of interesting and unexpected interactions. I especially liked the way that the effects stacked. In preparation for this pressure project, I was mainly considering how two patches might interact, but I didn’t consider what might happen when long chains of patches interacted. I was surprised by how at some point it became impossible to trace the chain of patch interactions and all that was left was just to enjoy what was there to be observed.

While each patch was interesting on its own, the combination of multiple patches was where the real magic happened.

Pressure Project 1

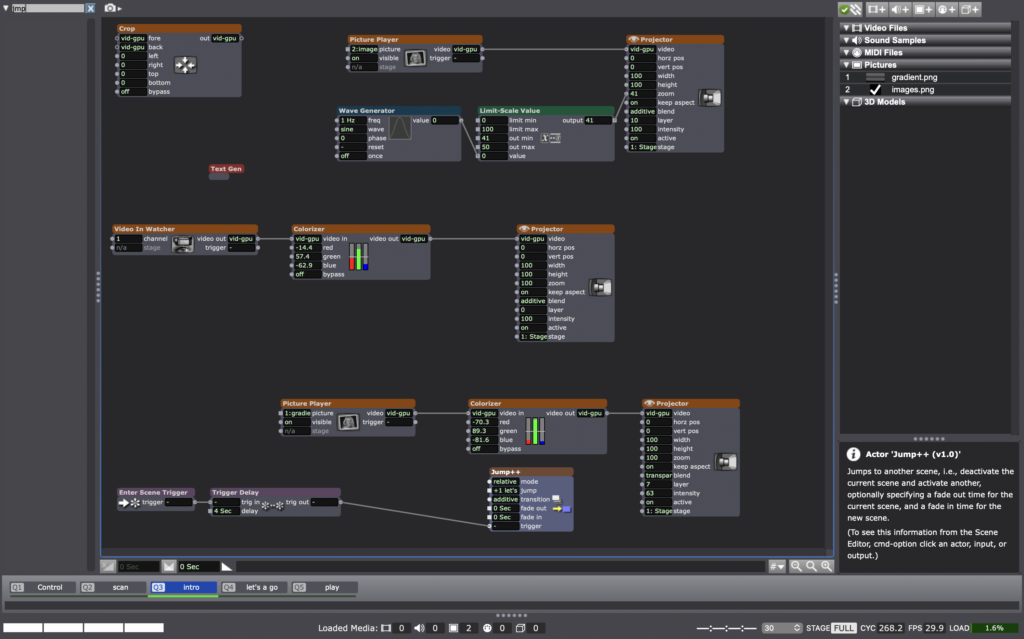

Posted: May 7, 2026 Filed under: Uncategorized

Required Resources

- Isadora

- Shapes Actor

- Projector Actor

- User Actor

- Pulse Generator

- Wave Generator

- Random Number Generator

- Jump ++ Actor

- Scene Functionality

- 5 hours

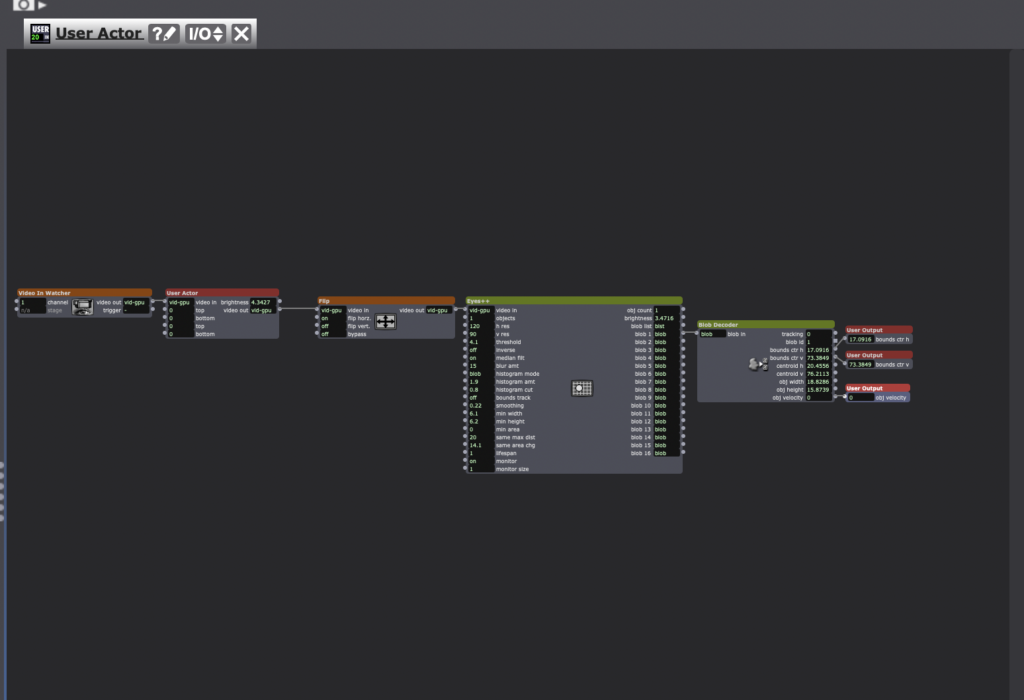

My goal for Pressure Project 1 was to build a self-generating patch that enabled users to play the original Mario Bros. with their body. I thought that I would be able to accomplish this using a version of Mario instantiated in Python for machine learning. This was an ambitious goal, but I thought it would be possible to do it within the five-hour window. If it proved to be impossible, I knew that I could simplify it to achieve a reasonable outcome. I spent one hour setting up the patch and a total of three hours trying to get Mario running: two hours trying to get a basic Python script to run in Isadora, and one hour running it directly on my laptop with Isadora sending keystrokes to control the game running in Python. Neither of these approaches was successful.

I spent the final hour crafting a new experience that borrowed from my initial concept.

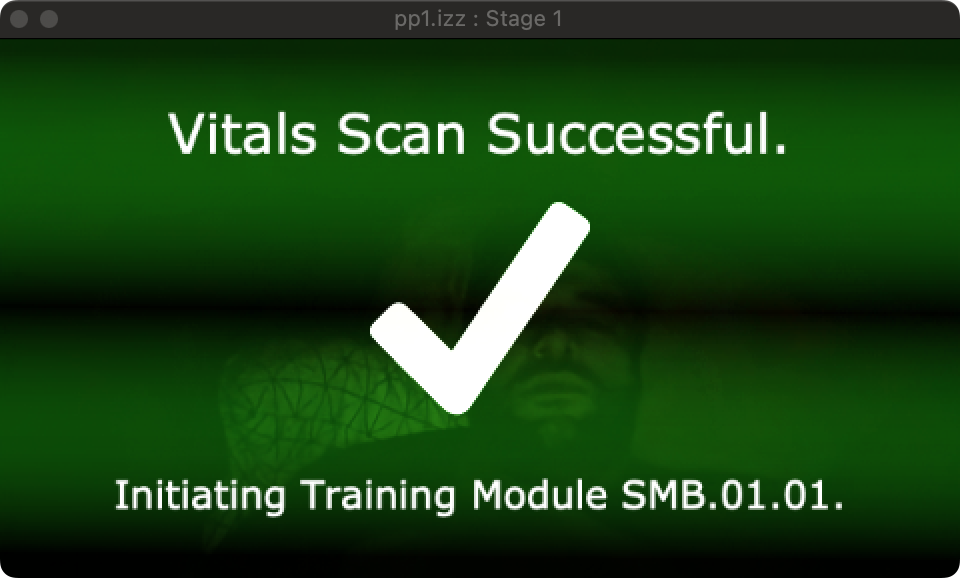

Score

The user stands facing a camera. A message on screen informs them that a vitals scan is in progress and instructs them to move their body.

Blue shapes move up and down the screen while a TT Edge Detect actor outlines their body, simulating a poor man’s retro body scan. When a blob decoder reports object velocity between 0.1 and 2, the Inside Range actor fires a trigger. The trigger travels through a Simultaneity actor, and if a wave generator connected to an Inside Range actor is less than 10 or greater than 90, it fires a trigger to activate a text actor, informing the user of success. It also fires a trigger (delayed 5 seconds) to activate a Jump++ actor and move on to the introduction screen.
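The success condition described above can be written out as a small Python sketch. It collapses the Simultaneity actor into a plain boolean AND evaluated at a single instant, which is a simplification; the function names are hypothetical and only the numeric ranges come from the score.

```python
def in_range(value: float, low: float, high: float) -> bool:
    """Stand-in for an Inside Range check: true when low <= value <= high."""
    return low <= value <= high


def scan_succeeds(blob_velocity: float, wave_value: float) -> bool:
    """Return True when both conditions from the score hold at the same moment.

    blob_velocity: object velocity reported by the blob decoder
    wave_value:    current wave generator output, assumed to sweep 0..100
    """
    moving = in_range(blob_velocity, 0.1, 2.0)
    wave_at_extreme = wave_value < 10 or wave_value > 90
    return moving and wave_at_extreme
```

Because the wave generator only sits near its extremes for part of each cycle, a moving user does not succeed instantly, which makes the scan feel like it takes time.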

The user is informed that the vitals check was successful and that they will now be betrayed.

The screen goes black and you hear the iconic:

I then added a video of the first level of Mario. After a few seconds, before the player has a chance to recognize that they’re not actually controlling the game, the system begins to warp and distort the game video by sending the stage image through a feedback loop (this was going to make a very ironic re-emergence later in the semester). A message onscreen progressively unfolds to inform the user, “Oh no. You broke it. Shame on you.” The line “shame all over you” then appears on screen, and its position is periodically moved by random number generators hooked up to the position.

In total the experience contains five scenes that progress automatically upon fulfilled success criteria.

It contains three user actors. Two are nested in the control scene and define how the system is supposed to track user movement and convert them into controls for playing the Mario game.

The other defines how text is drawn up on the screen.

Valuation

I was unable to give the user control over Mario Bros. Instead I simplified the experience and fell back on an old favorite theme of mine, playing with the idea of technology failures as part of an immersive experience. I’m prone to stress when I can’t make a project do what I want it to do. In my first time taking DEMS, my first pressure project broke down and the experience was embarrassing. It was in my second project that I started working with simulated failures as an emotional trigger point.

Performance

The patch worked as expected and it was fine. Obviously a bit gimmicky. It was an important step though, because it had been a year and a half since I’d used Isadora and several months since I’d worked with TouchDesigner. The first pressure project was a nice reacquaintance with the software.

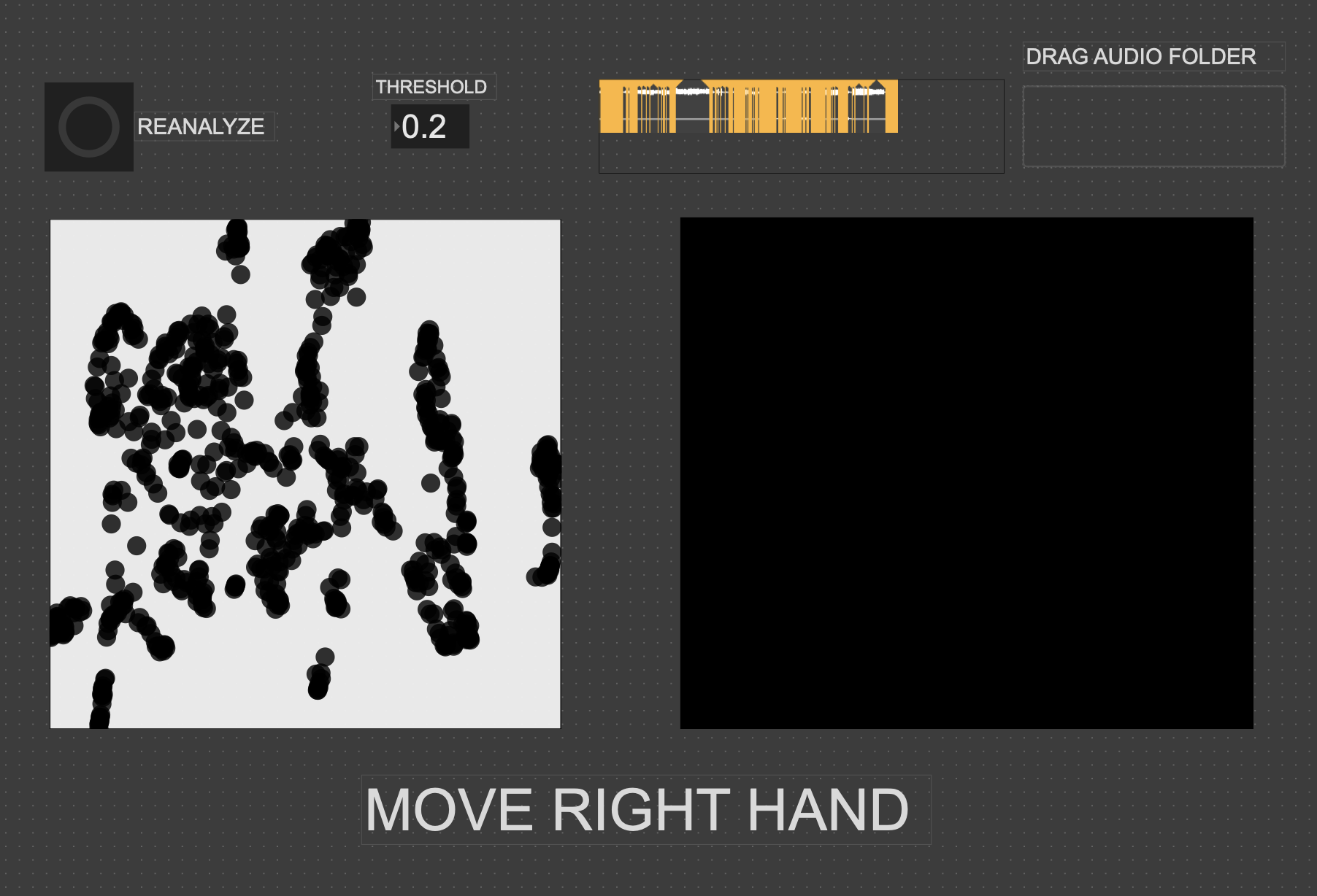

Cycle Three: A Collective Sound Explorer

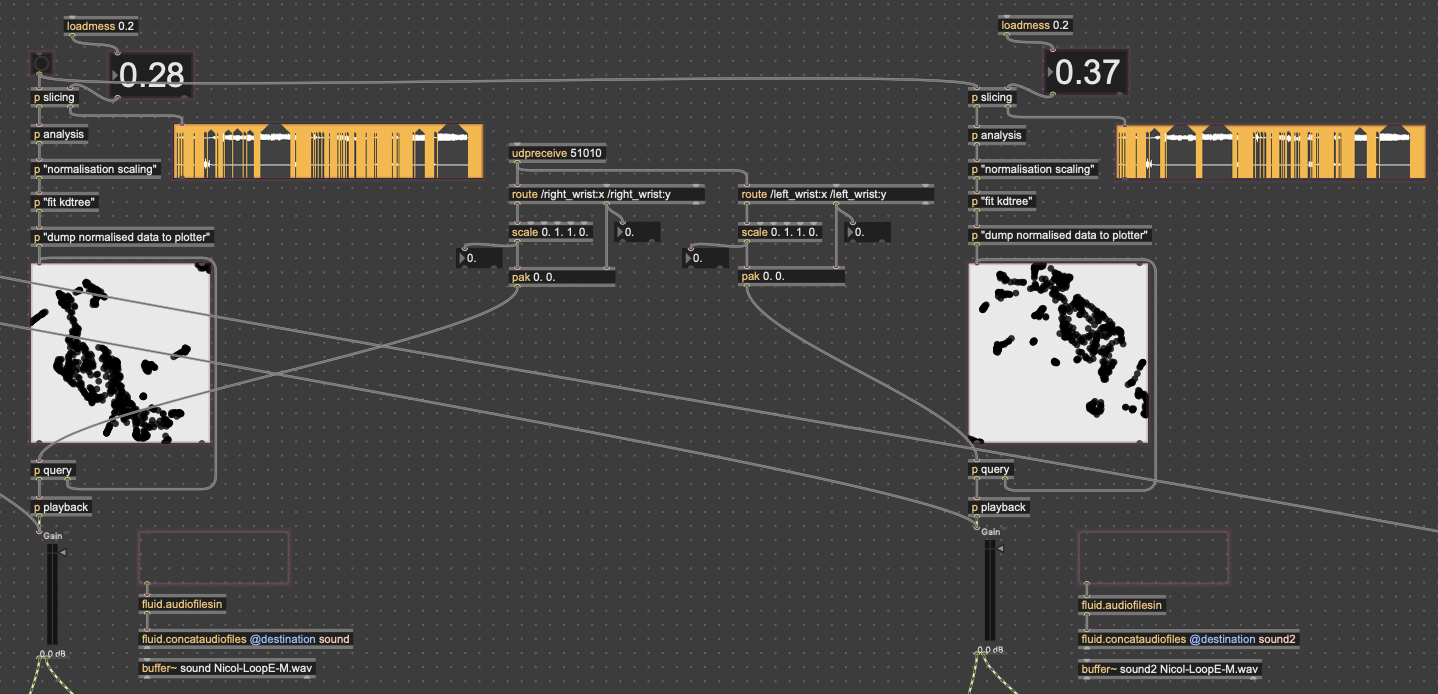

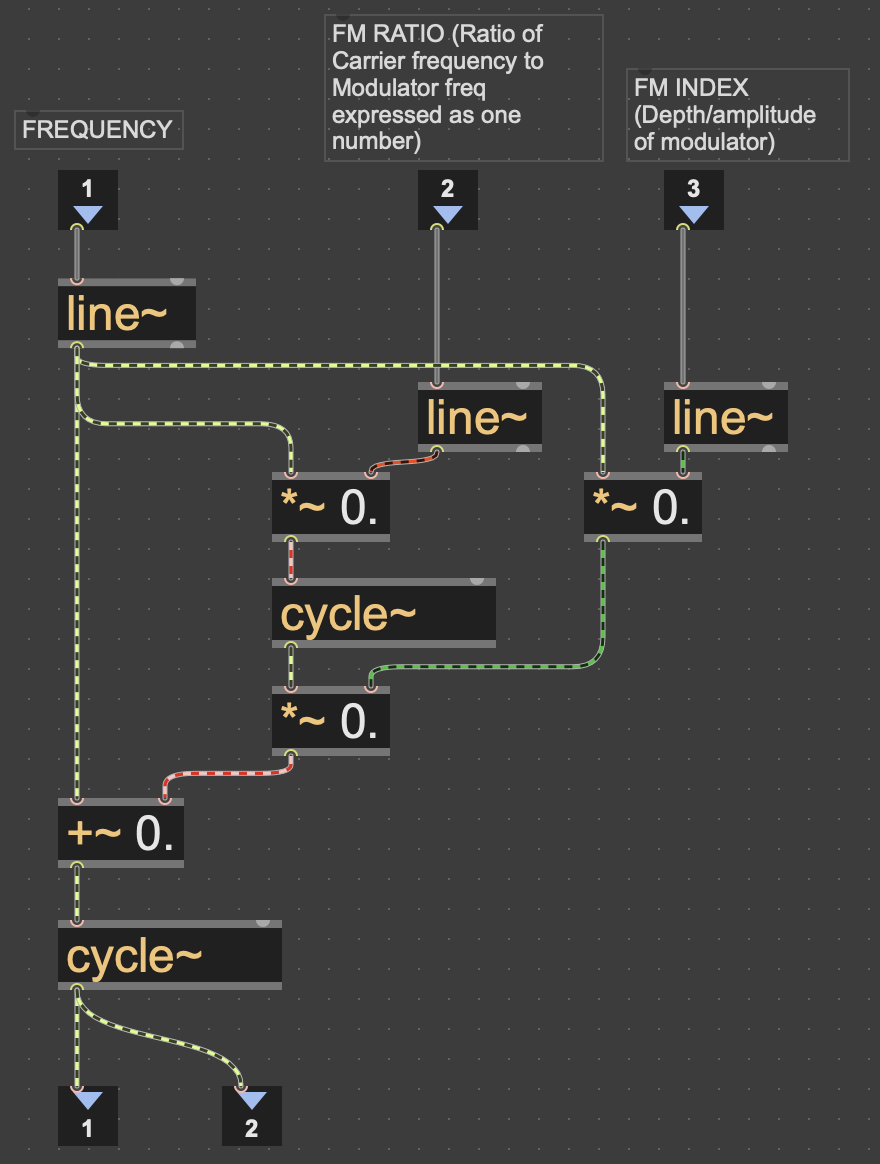

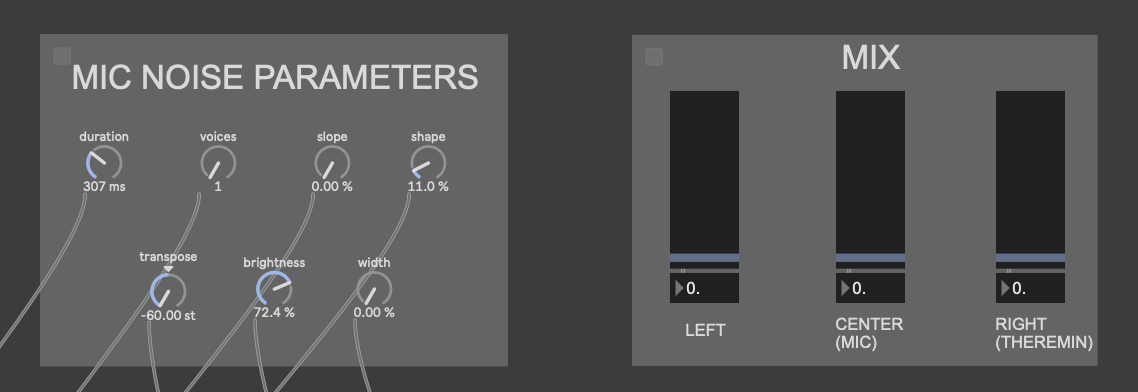

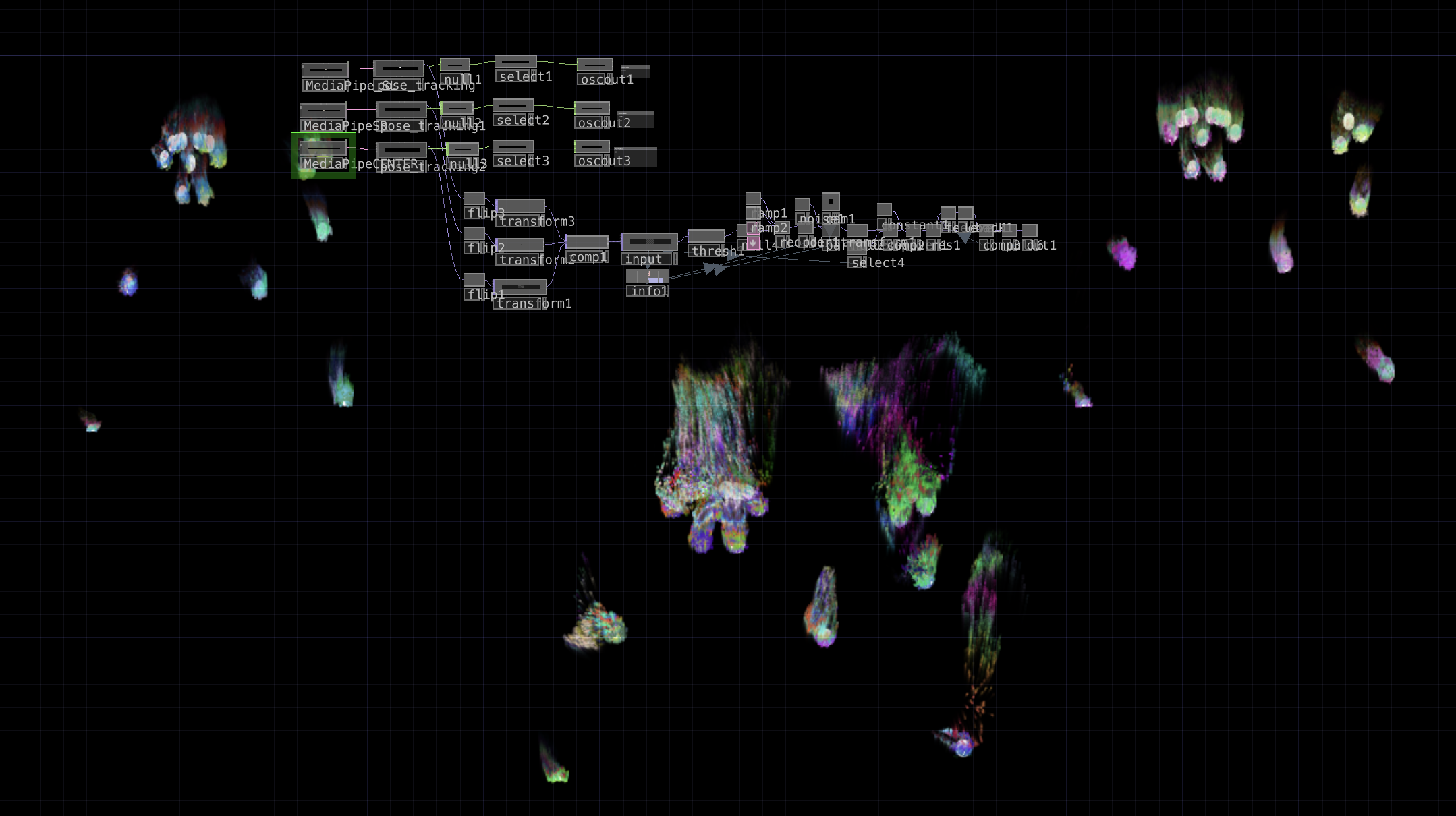

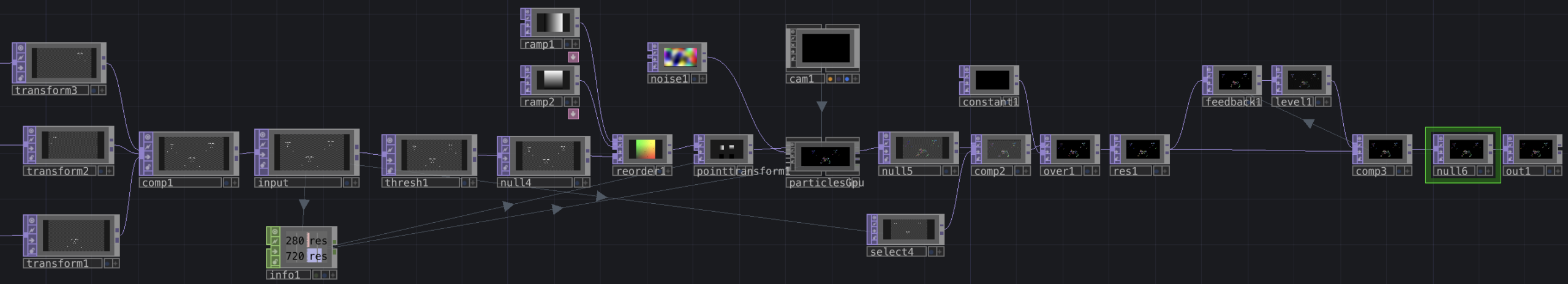

Posted: May 6, 2026 Filed under: Uncategorized

As I alluded to in the previous cycle, I wanted to really focus on creating an experience for multiple people in this cycle, as part of my original goal was to do something for a group of people. As the ideas I was exploring in cycle two didn’t work out so great, I pivoted to creating an experience with several different kinds of sound manipulation, as opposed to one “instrument” that everyone could interact with at once. The different systems I picked to include in this cycle were: the 2D movement-based sound explorer from my first cycle, a patch that resynthesizes from a mic input using the Data Knot package, an FM theremin also using motion tracking, and an iPad connected to parameters of the different instruments using Max’s MiraWeb package.

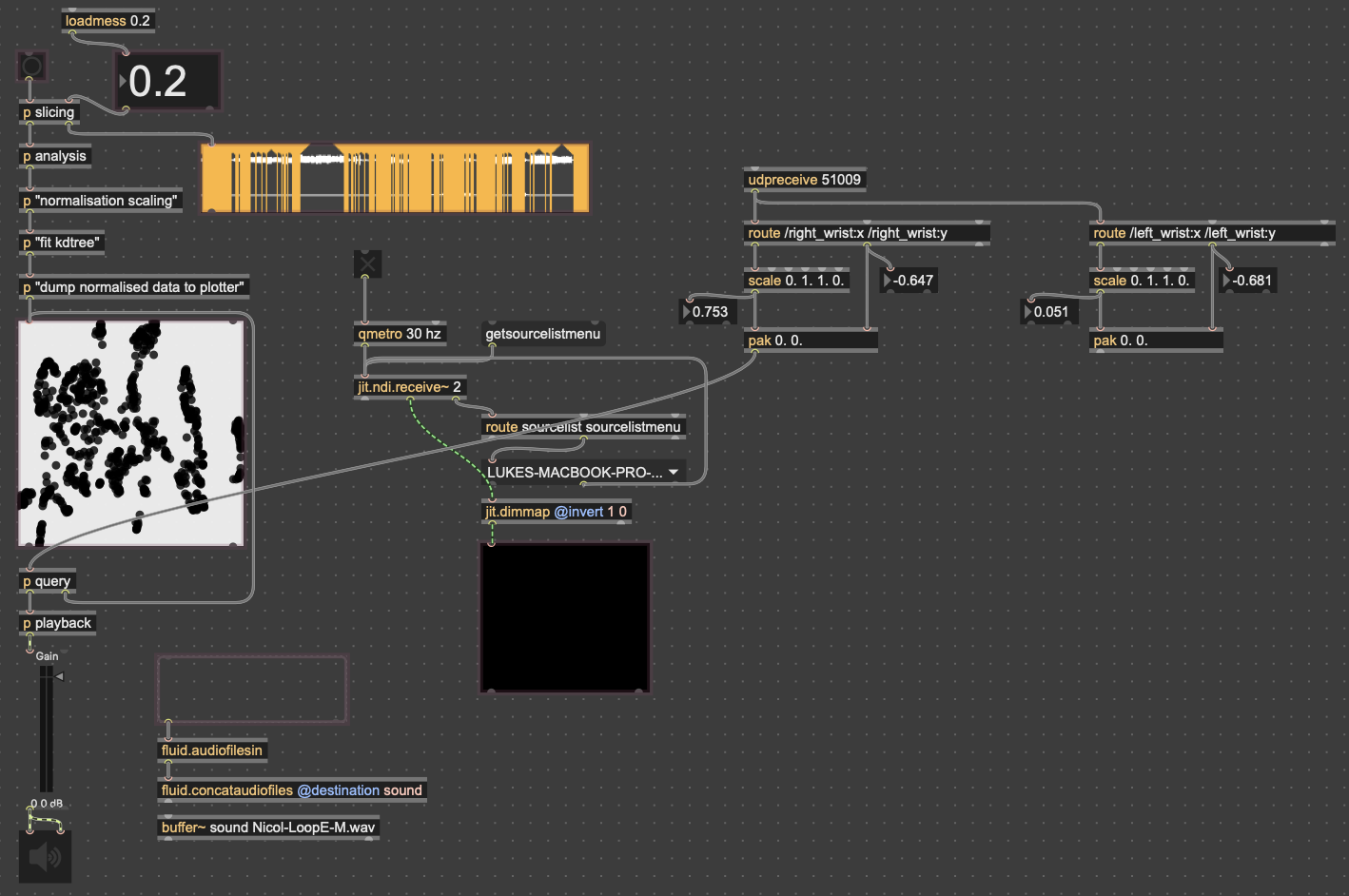

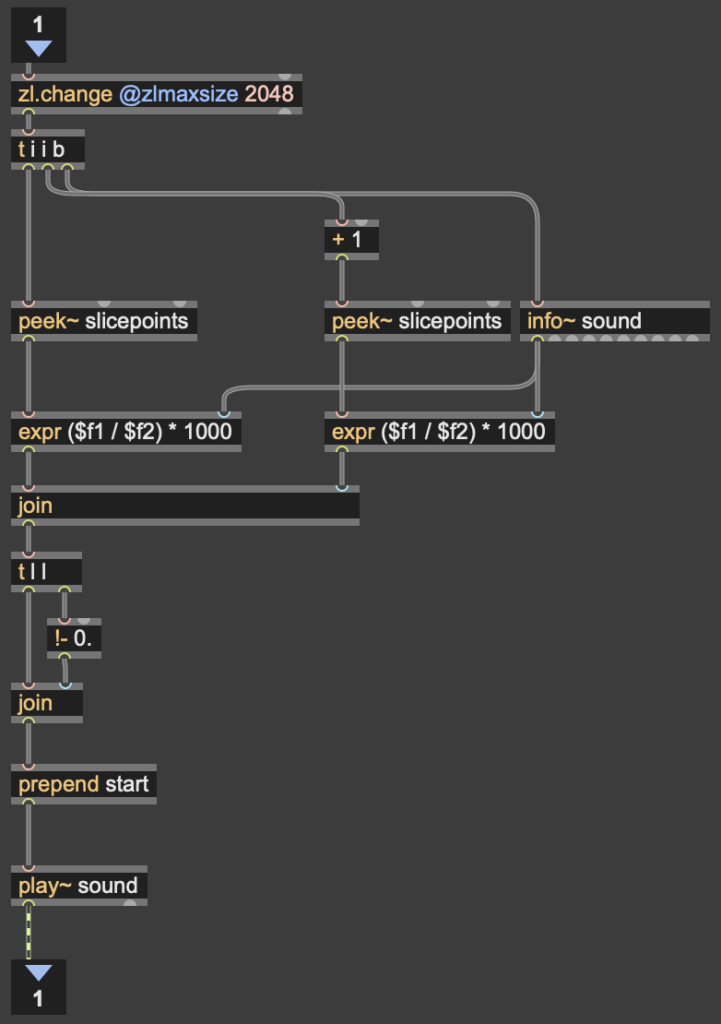

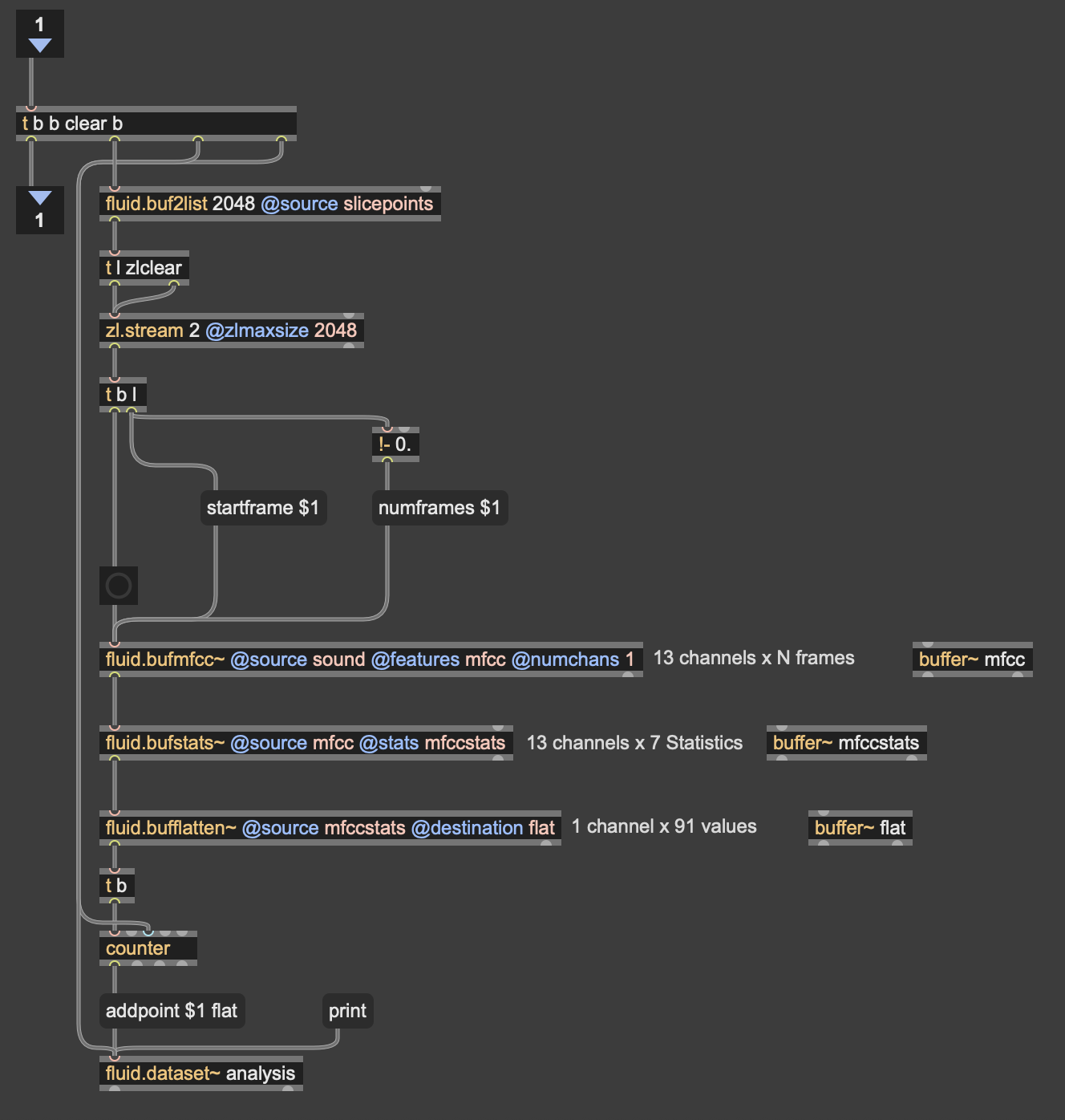

Since the 3D version had some large issues, I chose to go back to the 2D version, since it was much easier to interact with. While the code didn’t change much in regard to how it was working, I expanded the control capabilities for more “natural” input. In both cycles one and two, people found it unintuitive that only one hand had any effect on the sound, so this time I wanted to get two-handed control working. I did this by just duplicating the original FluCoMa code, basically creating two “voices” for the instrument. I then mapped the coordinates of each wrist (received from MediaPipe in TouchDesigner over OSC) to a voice. By analyzing the sample folders with different thresholds for detection, I was able to create two different corpuses for the wrists to move through.

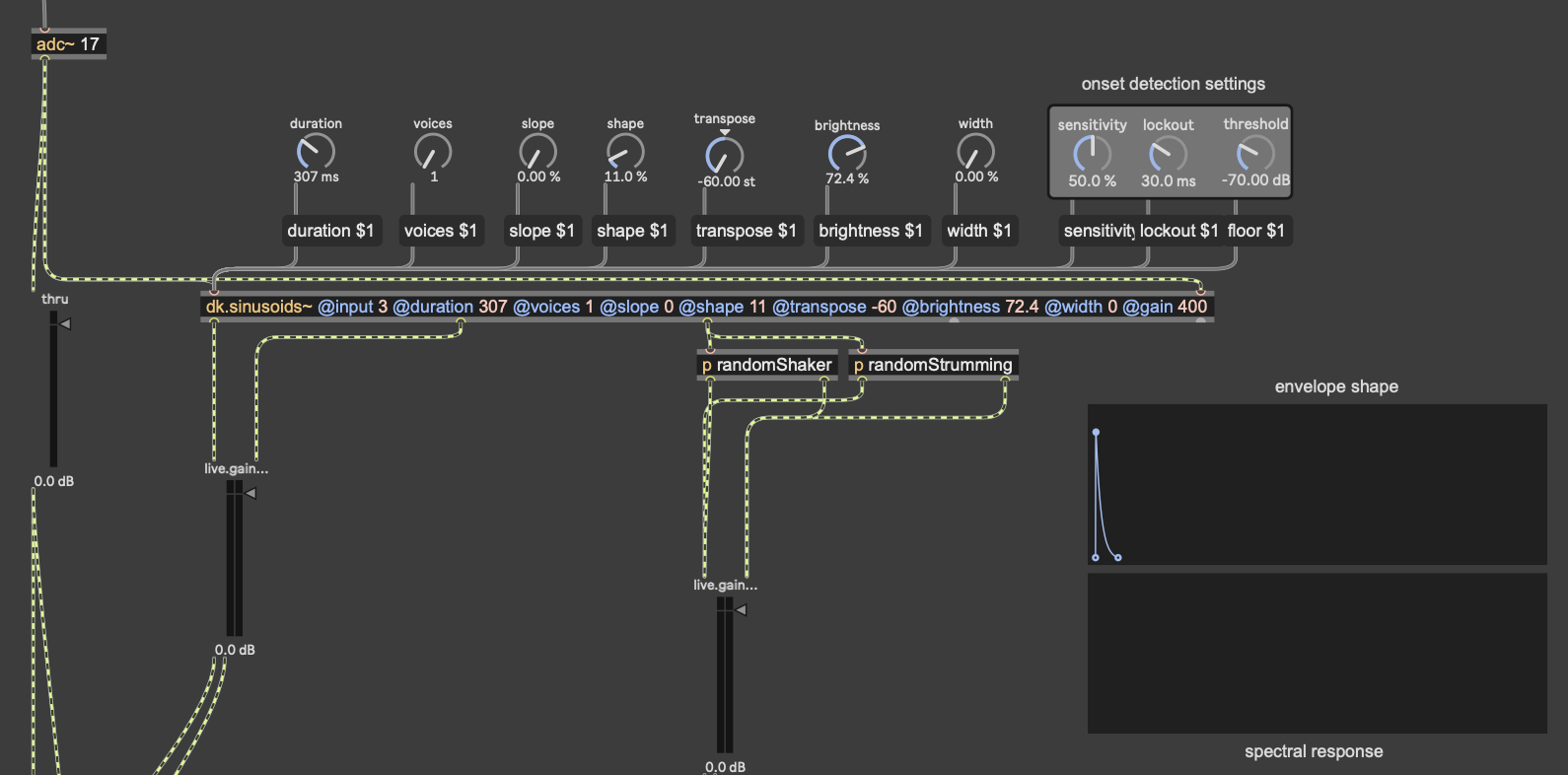

As I mentioned earlier, the mic input resynthesis was done using the Data Knot package for MaxMSP, which is used for real-time audio analysis and processing and is based on FluCoMa’s tools. The patch I used takes the signal from the microphone, looks for the onset of a sound (determined by a dB threshold) and triggers waves resynthesized from the most present pitches in the original. The signal onset detection also randomly triggers some strumming and “shaking” noise. While this instrument isn’t controlled with a camera, I did put a camera in front of the microphone, so that the user could still create visuals.
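To make the dB-threshold idea concrete, here is a generic Python sketch of threshold-based onset detection on frames of audio samples. It is not how Data Knot or FluCoMa implement their detectors; the -30 dB default, the frame-based approach, and the function names are all assumptions for the example.

```python
import math


def rms_db(frame) -> float:
    """Level of one frame of samples (floats in -1..1) in dB relative to full scale."""
    if not frame:
        return float("-inf")
    rms = math.sqrt(sum(s * s for s in frame) / len(frame))
    return 20 * math.log10(rms) if rms > 0 else float("-inf")


def detect_onsets(frames, threshold_db: float = -30.0):
    """Return indices of frames where the level rises from below to above the threshold."""
    onsets = []
    above = False  # whether the previous frame was already above the threshold
    for i, frame in enumerate(frames):
        is_above = rms_db(frame) >= threshold_db
        if is_above and not above:
            onsets.append(i)  # only the upward crossing counts as an onset
        above = is_above
    return onsets
```

Tracking the previous state means a sustained loud sound fires once instead of on every frame, which is the behavior you want when each onset triggers a new resynthesized wave.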

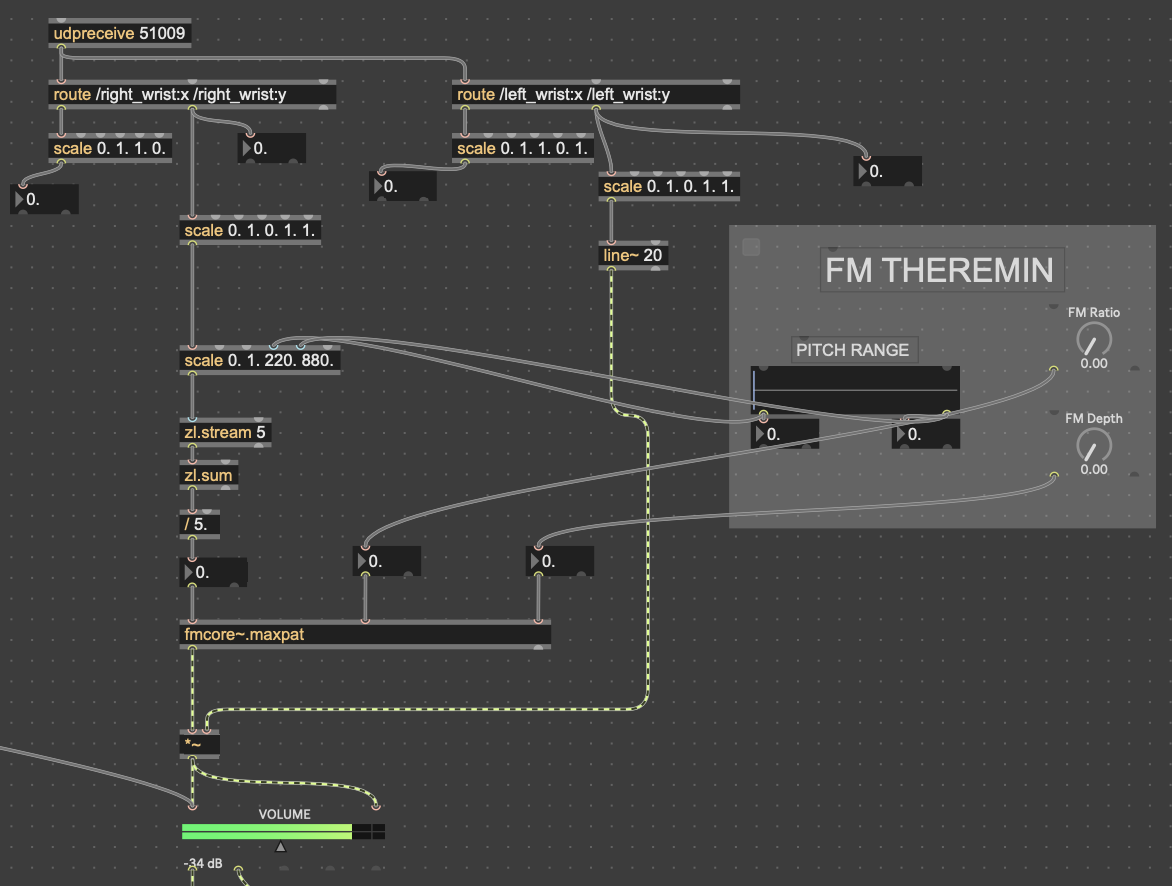

The theremin portion of the patch was done with a simple FM synth patch I have (fmcore~.maxpat). The y-values of the left wrist controlled the volume of the wave being played, while the y-values of the right wrist controlled the pitch. I had to smooth out the pitch values with zl.stream and zl.sum, taking the average of the most recent 5 values in order to prevent the pitch from wobbling around from unstable data.
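The smoothing step amounts to a moving average over the five most recent values. A minimal Python sketch of that idea follows, along with a hypothetical mapping from wrist height to frequency. The 110 to 880 Hz range, the assumption that y is normalized with 0 at the top of the frame, and the names `MovingAverage` and `y_to_frequency` are illustrative and not taken from the Max patch. Unlike a fixed-length list in Max, this version averages whatever values it has before the window fills.

```python
from collections import deque


class MovingAverage:
    """Average of the most recent `size` values."""

    def __init__(self, size: int = 5):
        self.values = deque(maxlen=size)  # old values fall off automatically

    def update(self, value: float) -> float:
        self.values.append(value)
        return sum(self.values) / len(self.values)


def y_to_frequency(y: float, low_hz: float = 110.0, high_hz: float = 880.0) -> float:
    """Map a normalized y (assumed 0 = top, 1 = bottom) to Hz; a higher hand gives a higher pitch."""
    y = min(max(y, 0.0), 1.0)  # clamp out-of-frame values
    return high_hz - y * (high_hz - low_hz)


# Example: smooth the raw wrist value first, then convert to pitch.
smoother = MovingAverage(5)
pitch_hz = y_to_frequency(smoother.update(0.42))
```

A longer window gives a steadier pitch at the cost of a slower response to hand movement.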

The little grey box with the “FM THEREMIN” header in those pictures is where I connected the patch on my computer to an external iPad for parameter control. I did this with Max’s built-in package Mira, which allows you to connect a device on the same network to a Max patch. In addition to the pitch, FM ratio and depth of the theremin, I also gave the iPad user seven parameters for the Data Knot processing, as well as a mixer for all of the instruments.

In addition to this, I also expanded upon my original TouchDesigner patch (which was handling the motion capture via Google MediaPipe) to create some particle visuals based on the landmark skeleton from MediaPipe. First, I copied the code for one video stream into three, to capture data for all three instrument users. I mostly followed this tutorial to create them, as I’m not the best at TouchDesigner, but I took a couple of my own liberties. The two largest of those were that I used the skeleton video feed out of MediaPipe as input for the particles, and I also used noise to generate colors instead of the input video, as the skeleton is only white. Lastly, I placed the three skeletons in different spots on the screen in relation to where each station would be set up in the motion lab. Code is posted below.

Results

While there were some oversights I made when creating my patches, as well as when I presented this cycle, I overall feel that this was a very successful experience. People seemed really interested in the sounds the experience made, as well as in making them themselves. With the two camera-controlled parts, people seemed to understand their movement was controlling something right away, which was another goal of mine. I think that the sounds that the system made were quite interesting as well once every station had someone providing input. People also enjoyed the visuals, and seeing the particles flow with their movements.

While my mistakes in this cycle weren’t too numerous, they definitely detracted from the experience a considerable amount. In terms of presentation, I didn’t explain much about how to use each station. Part of this was deliberate, as I wanted to see how first-time users could pick up on the controls. However, there were still a few basic instructions that I should’ve given, such as how far to stand from the cameras, as well as that the camera by the microphone was not controlling any sound, just the mic. I also made a mistake by making the volume sliders on the iPad full range, as it just resulted in the instruments getting turned down too quiet. I think people also had a hard time understanding what the iPad was controlling in the first place, as some of the terms used for the knobs were jargon-y.

I think if I were to continue this into a cycle 4, I’d focus much more on making things more easily understood by the user. This class has taught me that the most effective interactive systems are made to create potential for experiences, not to design the experience itself. My ultimate goal with things like this is to create something that anyone can walk up to, interact with, and make something cool sounding, regardless of whether they know every detail of how it works or have ever played an instrument before. While I think that these three cycles have shown a lot of progression towards that goal, I still have a lot of work to do.

Longer video from Michael pending

Independent Study with Makey Makey

Posted: May 6, 2026 Filed under: Uncategorized

Building on my skills and experiences from the DEMS class I took last semester, I wanted to use some independent study time to continue experimenting with Makey Makey to keep developing ideas for my MFA thesis project in dance. At the end of last semester, with the tools I had been experimenting with in DEMS, such as Makey Makey, OSC, live video, NDI camera, and Isadora, I combined my interests in movement and technology in my second-year showing performance and presented my work to my dance colleagues and professors. In that showing, I had used Makey Makey as a bridge between touch and memory/answer/response. After receiving feedback and progressing my ideas, I steered toward another trajectory in my approach, where I started experimenting with absurdity and humor to reach depth. This was also a progression I was able to mentally achieve this semester after the experience I devised with honey in DEMS last semester. Letting myself include objects in my artistic works with curated associations allowed me to keep asking and answering the question “What is the experience?” with each trial.

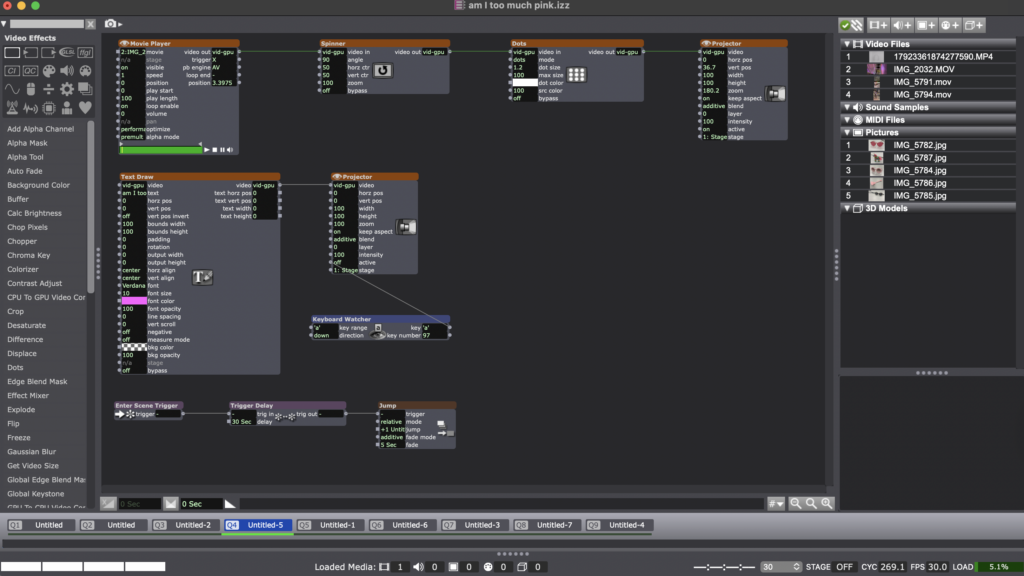

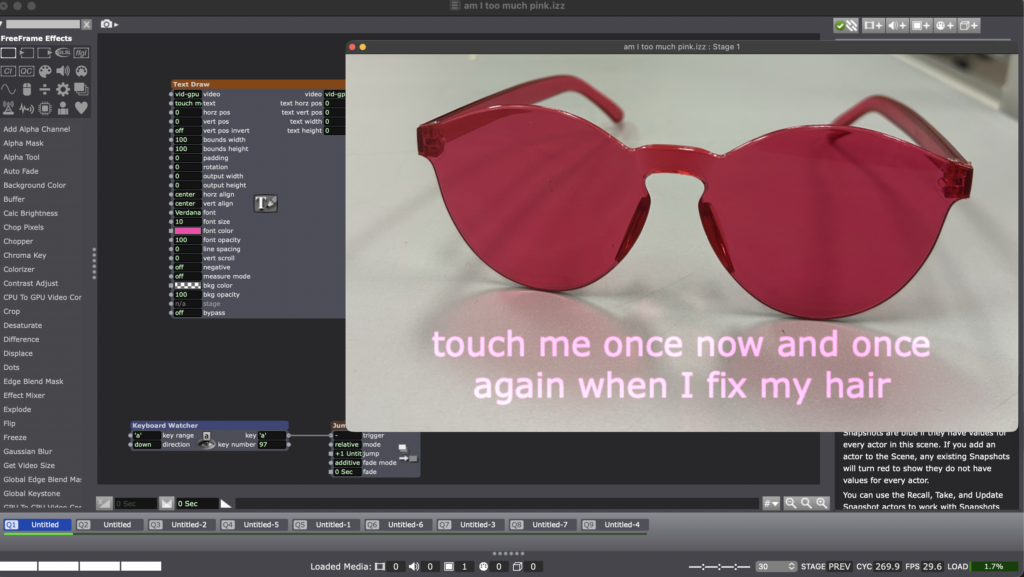

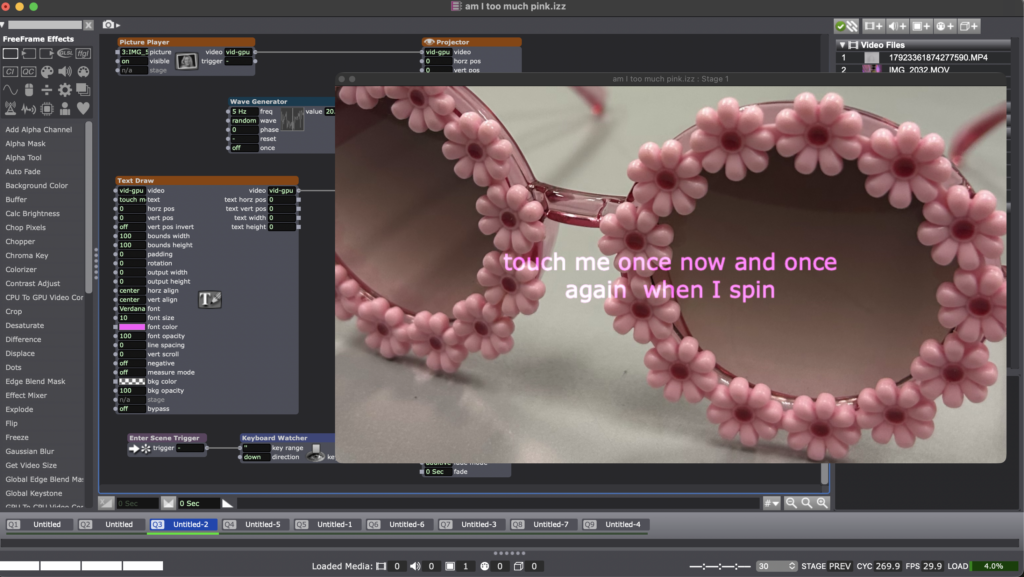

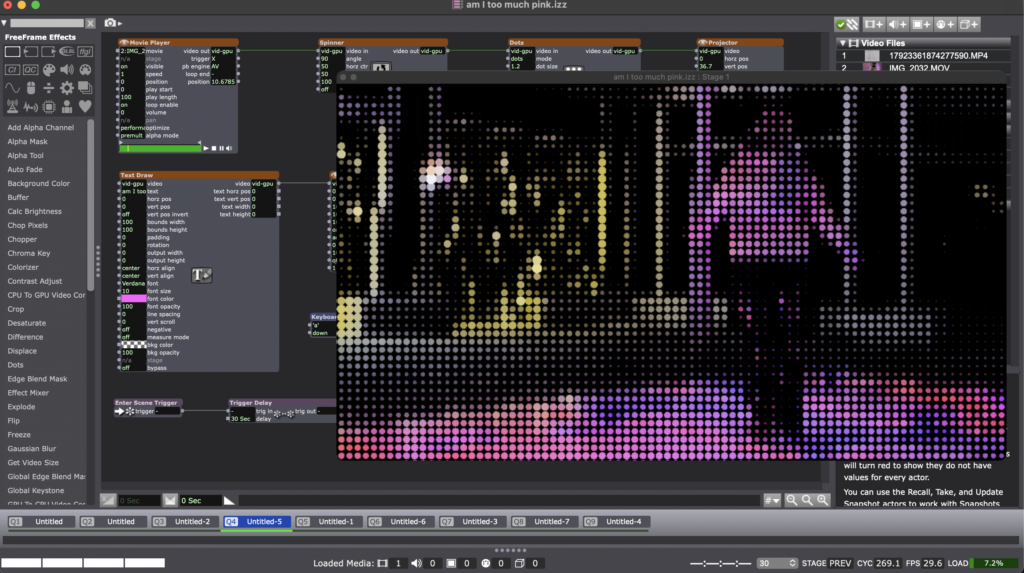

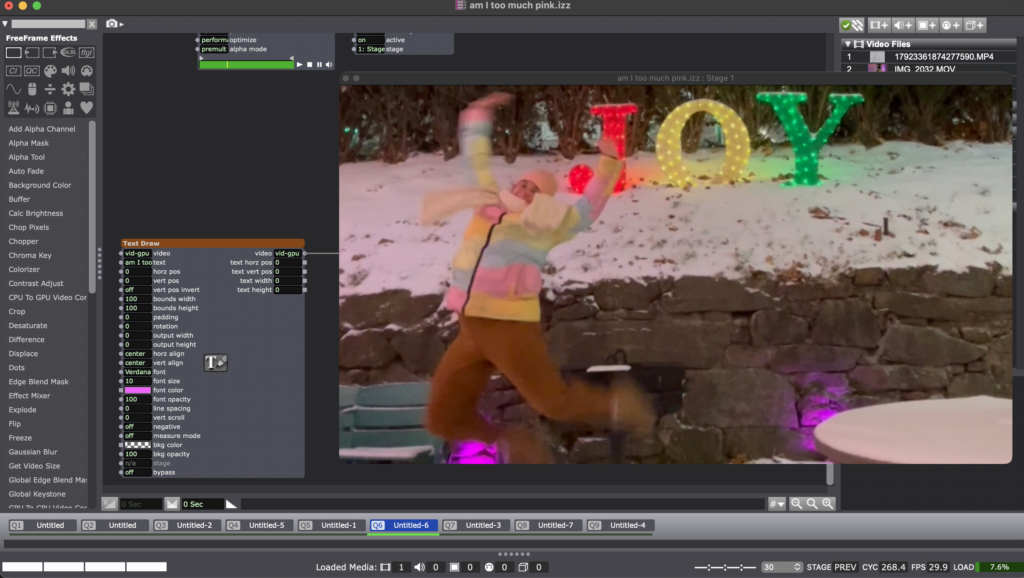

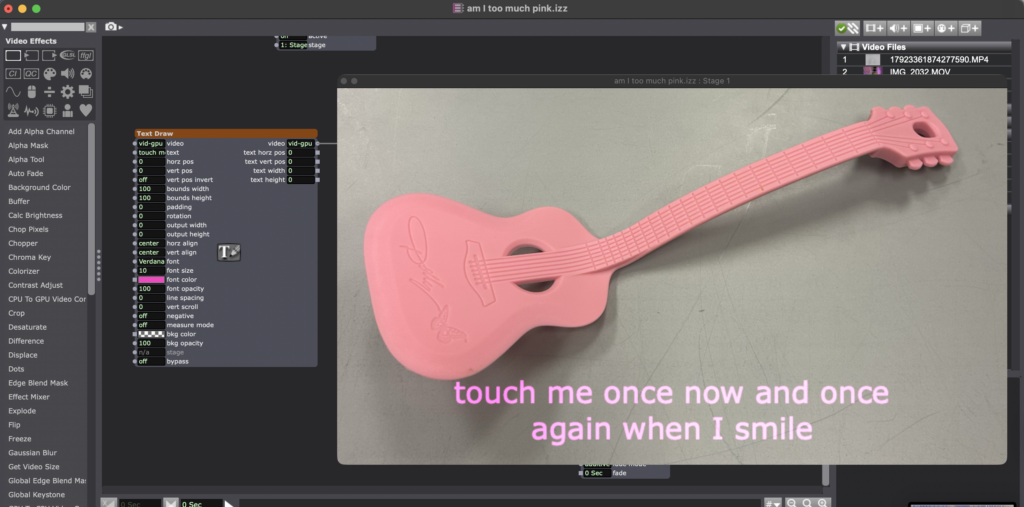

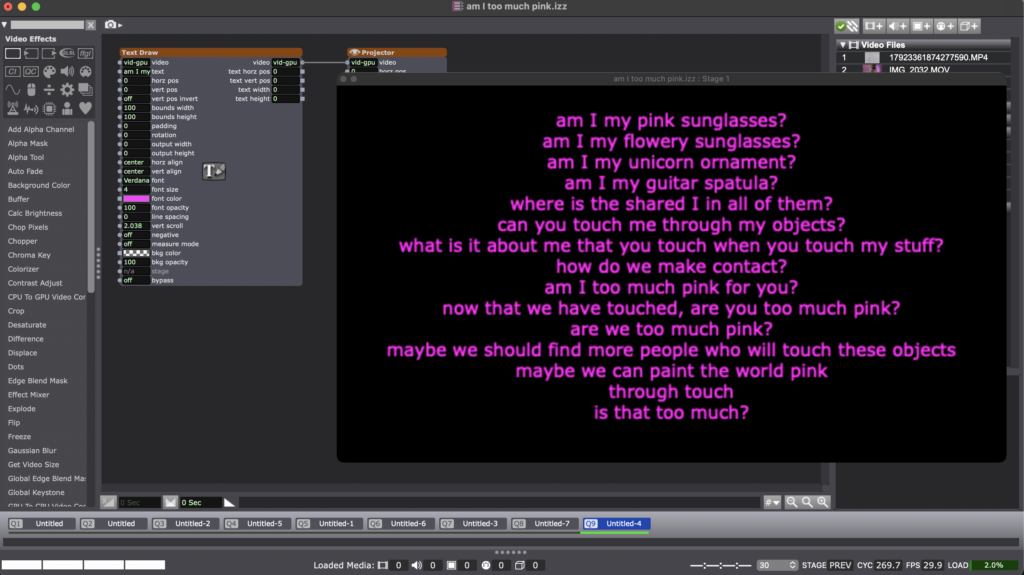

This semester, I also took a composition course called Rebel Innovations with Abby Zbikowski, where I had the chance to work on a new solo piece. In that piece, titled “Am I too much pink?”, I used several objects. Among them were pink sunglasses that make you see the world in pink when you put them on, another pair of sunglasses with pink flowers all around the frame, tiny sunglasses that are too small for a human head, an ornament shaped like a unicorn, and a pink spatula shaped like a guitar.

Using objects, humor, and silliness, the piece implicitly explores themes of queerness, childlike disinhibition, neurodivergence, and ways of seeing/perceiving, through my and the audience’s interaction with the objects.

For my Independent Study, I decided to pursue my intended scores within this new context. My work for that class didn’t have a deep media systems component ingrained, other than using my phone to play music/sound and someone else’s phone to use the flashlight as a “spotlight”. But I remained interested in incorporating media systems because I still intend to compose my final MFA thesis project with technology. So I worked with Isadora and Makey Makey to discover what the possibilities were. My main question was “How can Makey Makey and Isadora help me with building a bridge between object and meaning through a technology interface?” I remained interested in pursuing touch as a trigger for a response. My objects were mostly plastic and weren’t gonna conduct electricity the way I would need them to. So once again, I used the help of the conductive pencil to complete the Makey Makey connection. I programmed an Isadora patch with 9 scenes.

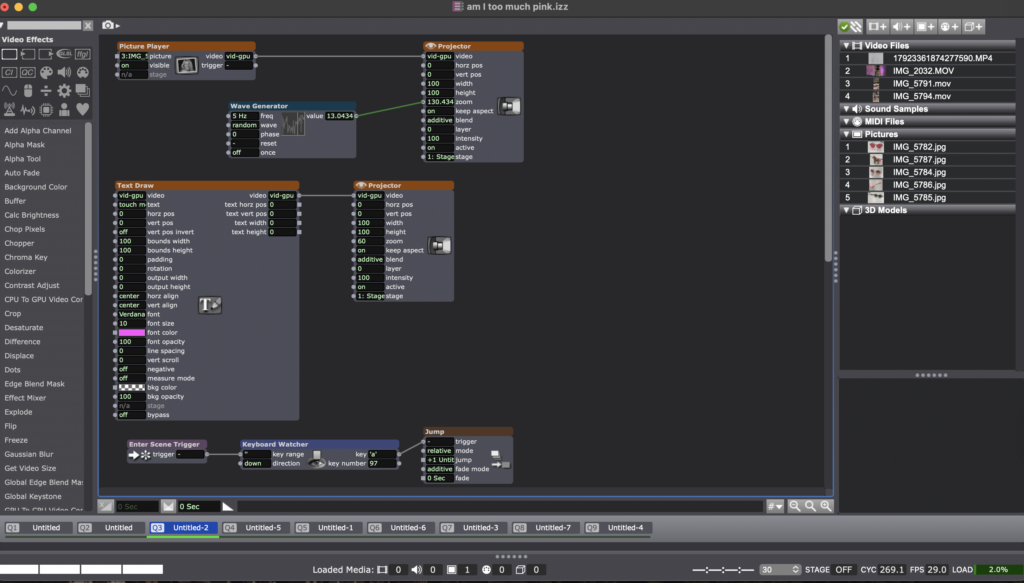

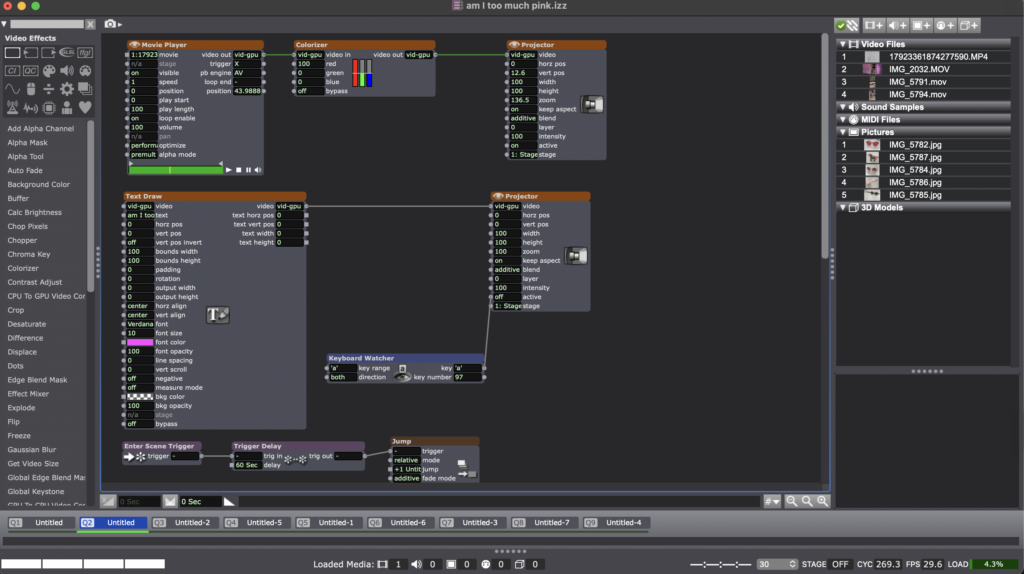

Using the keyboard watcher, text draw, trigger delay, jump, wave generator that’s set to random, colorizer, spinner, dots, and enter scene trigger, I designed/devised an experience where seeing the image of an object would prompt the interactor to touch the object, the object being touched would trigger a text or video to show up and those objects, texts and videos would accumulate in more questions for the interactors for them to reflect on internally.

Even though my initial Independent Study score also included exploring the relationship between camera, movement, and OSC, in this new context, I built a new relationship between movement and technology through pre-recorded videos and specific timestamps in the videos where that specific action would occur, asking the interactor to trigger the next scene through touch via Makey Makey when they encounter a specific movement.

As I have one more year left to develop my final piece, I am still in the building, questioning, asking, and responding phase. My explorations are finding micro shifts and deepening through experiments. With this Independent Study, I managed to accomplish my own scores and create a more solid foundation for my future creations involving objects, movement, Isadora, and Makey Makey.

Cycle Two: A 3D Movement-Based Sound Explorer

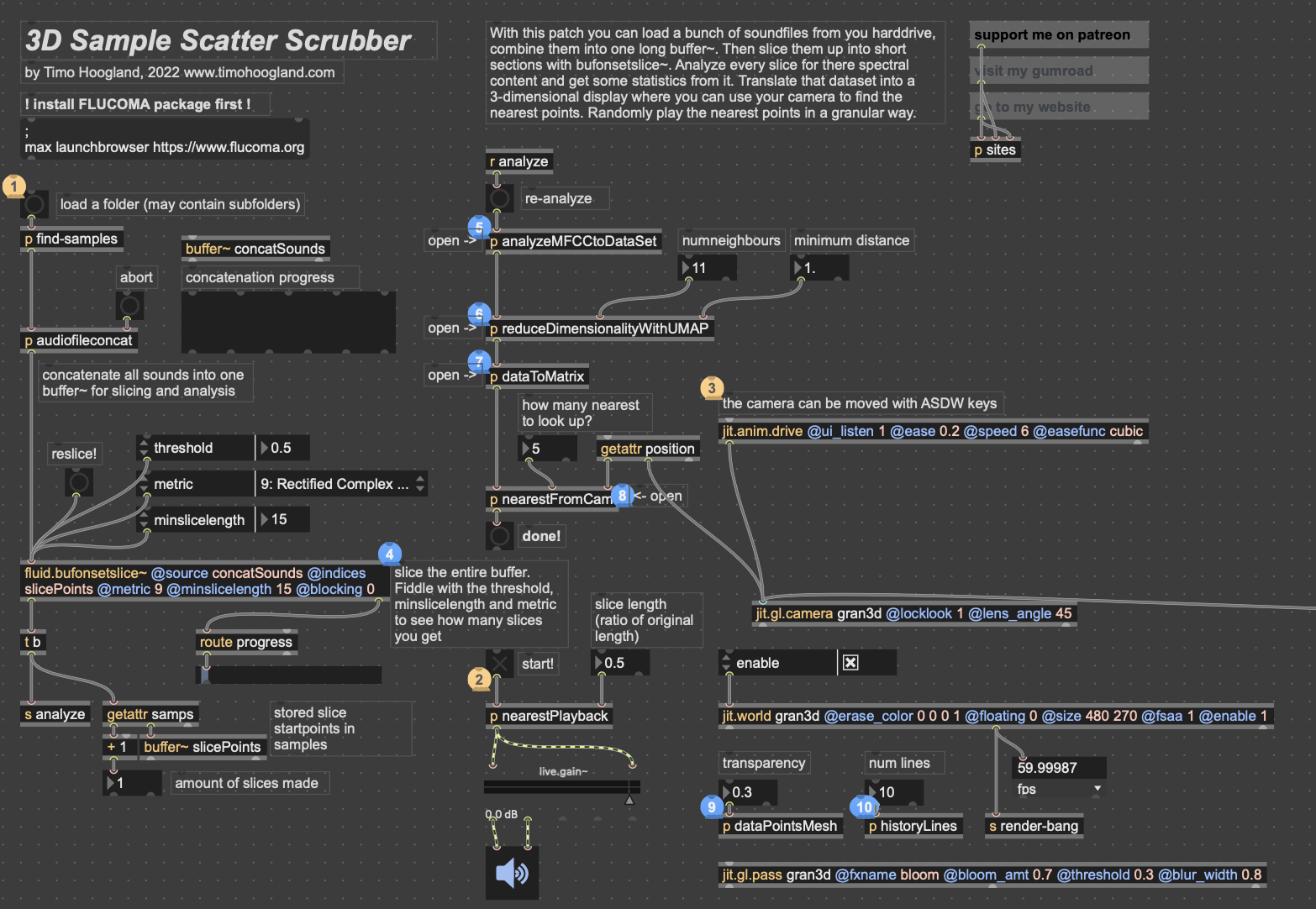

Posted: May 5, 2026 Filed under: Luke Buzard | Tags: Cycle 2, FluCoMa, MaxMSP, MediaPipe, touchdesigner

Going into cycle two, I immediately knew that I wanted to take the ideas I had explored in 2 axes of movement in cycle one and translate them to 3D. In order to do this, I needed to rework the analysis portion to plot all the samples in 3 dimensions, as well as figure out a new way for the user to see their movement within the corpus of sounds. For several days, I tried to do the analysis section on my own, but found myself unable to figure out how to plot them in a 3D space, as I’m not great with Jitter, Max’s visual package. However, just about when I was getting ready to give up, I found someone who had already done exactly what I was trying to do.

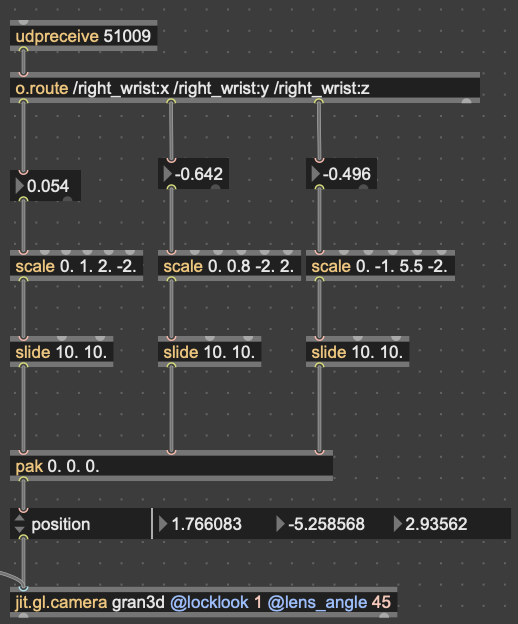

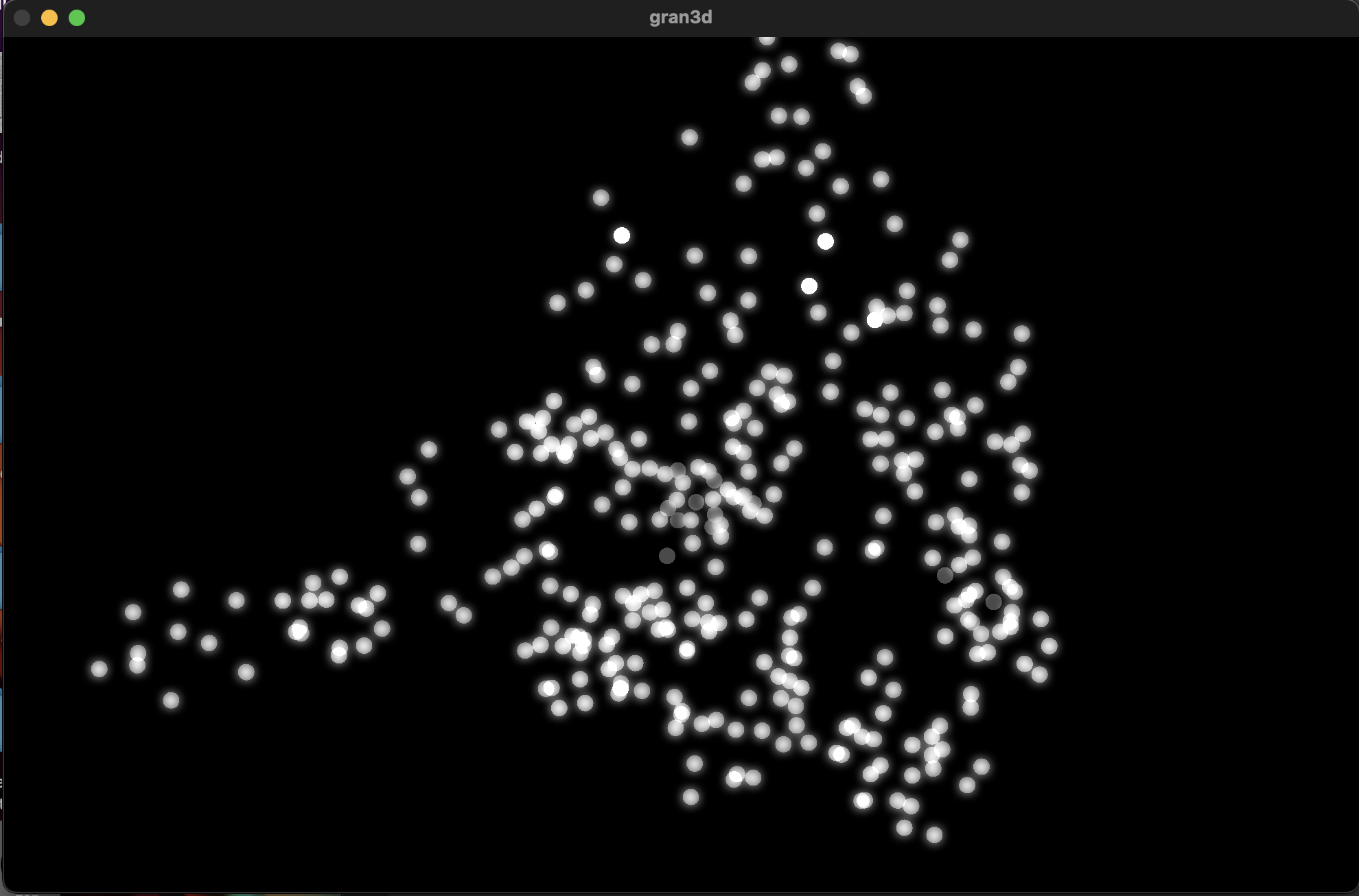

I won’t get too in-depth on how this works. But in short, it reduces the MFCC analysis data to 3 dimensions, as opposed to 2 in cycle 1, unpacks all that data into a matrix with 3 dimensions, and then that matrix data is used to generate an OpenGL environment with all the samples plotted in a 3D space. Then, depending on whatever point the camera is closest to in the Jitter window, the sample that correlates with that point is played back. This camera, however, was driven with WASDQZ, so I had to implement a new system of control that allowed this 3D corpus explorer to interface with the Google MediaPipe data I was receiving from TouchDesigner. I did this by scaling the data to the 3D space, smoothing it out some, and sending it as a list into the position attribute of the jit.gl.camera object.
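The control chain described above (scale the tracking data, smooth it, then play the sample nearest the camera) can be sketched in Python. This is an outline of the general idea rather than the Max/Jitter patch itself; the smoothing factor, the coordinate ranges, and all three function names are assumptions.

```python
import math


def scale_to_space(value, in_lo, in_hi, out_lo, out_hi):
    """Linearly rescale a tracking value into the corpus space's coordinate range."""
    return out_lo + (value - in_lo) / (in_hi - in_lo) * (out_hi - out_lo)


def smooth(previous, target, factor=0.2):
    """Move the camera a fraction of the way toward the new target each update."""
    return tuple(p + factor * (t - p) for p, t in zip(previous, target))


def nearest_sample(position, points):
    """Index of the corpus point closest to the camera position (Euclidean distance)."""
    return min(range(len(points)), key=lambda i: math.dist(position, points[i]))


# Example: three hypothetical corpus points and one incoming wrist position.
corpus = [(0.0, 0.0, 0.0), (1.0, 1.0, 1.0), (-1.0, 0.0, 2.0)]
camera = (0.0, 0.0, 0.0)
target = tuple(scale_to_space(v, 0.0, 1.0, -1.0, 1.0) for v in (0.9, 0.8, 0.7))
camera = smooth(camera, target)
sample_index = nearest_sample(camera, corpus)
```

A smaller smoothing factor steadies a jittery depth estimate but makes the camera lag behind the body, so the value is a trade-off between stability and responsiveness.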

Zoom-out of the 3D corpus space, each dot represents a sample; dimmer dots are deeper on the Z-axis

Results

Unfortunately, I don’t think my vision for this cycle translated to reality as well as I’d hoped it would. One of my biggest issues was that I left on an option that locked the camera to (0,0,0) in the space. This caused a major decorrelation between the movements of anyone using it and the movement in the space. Everyone seemed to struggle with “navigating” the corpus space much more than in the 2D iteration. Once I got rid of this attribute during our discussion afterwards, people understood what was happening better right away. I think the camera was also just a little glitchy since MediaPipe isn’t the *best* at detecting depth, which caused it to jump around wildly. I think if I were to continue with this 3D idea, I would have to incorporate a depth sensor in some way so that all 3 dimensions are accurate.

In my first cycle, I also included the MediaPipe landmark camera feed in the projector image as a sort of monitor for movement. In this one, I made the decision not to include it, as I’d hoped the movement in a 3D space would translate more intuitively to the user. However, I was really surprised that most everyone actually missed its presence. I think the playfulness of the little dot stickman moving around on screen must have provided a nice counter to the cacophony of sound. For this reason, I made a point to include the landmark skeleton in the final version.

Here’s a short recording of Alex using the system. As you can see, it was not very precise. Additionally, something in the analysis created a lot of sample points with that shrill high pitch sound that frequently plays. I don’t know where this came from, but it taught me to always check the samples when I’m working with tools like FluCoMa.

While this cycle was a bit of a fail in meeting the goals I had, I think it actually gave me a chance to step back afterwards and examine what the goals I wanted to achieve with these cycles were. Out of all of them, the largest was to create a group experience. This setback caused me to really narrow in on that idea for the 3rd cycle, which I think worked out much better in the long run.

Cycle 3 – Solo 2: Electric Boogaloo

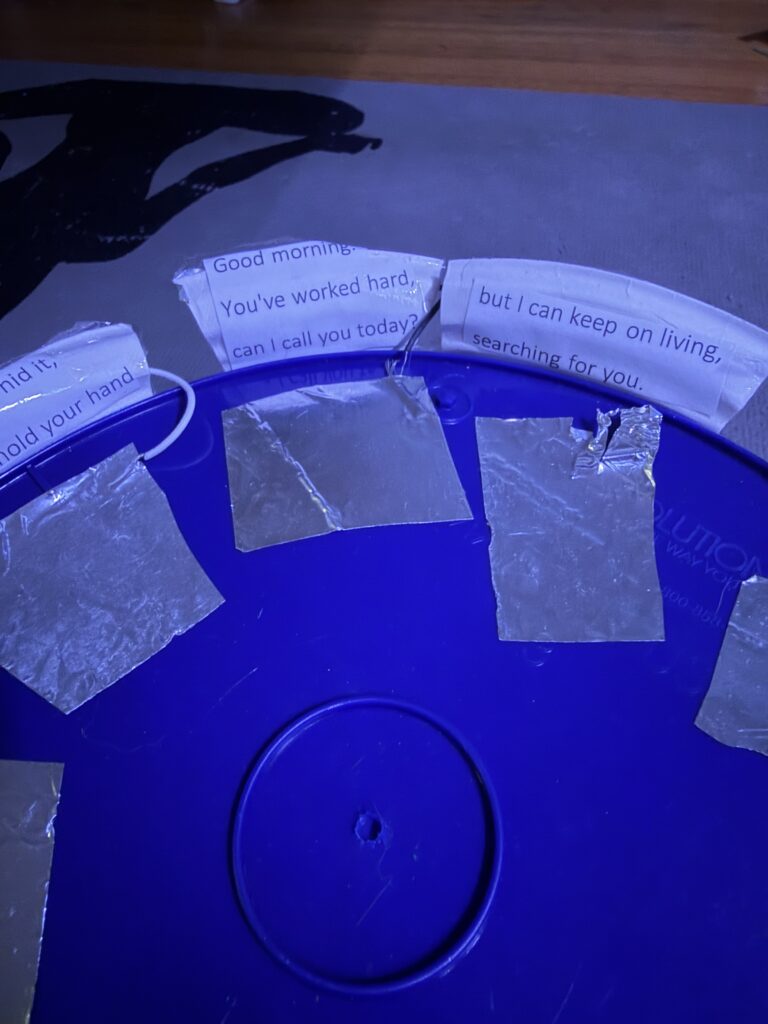

Posted: May 3, 2026 Filed under: Uncategorized

Hello! Welcome to the official successor of Solo: Paper Plate DJ.

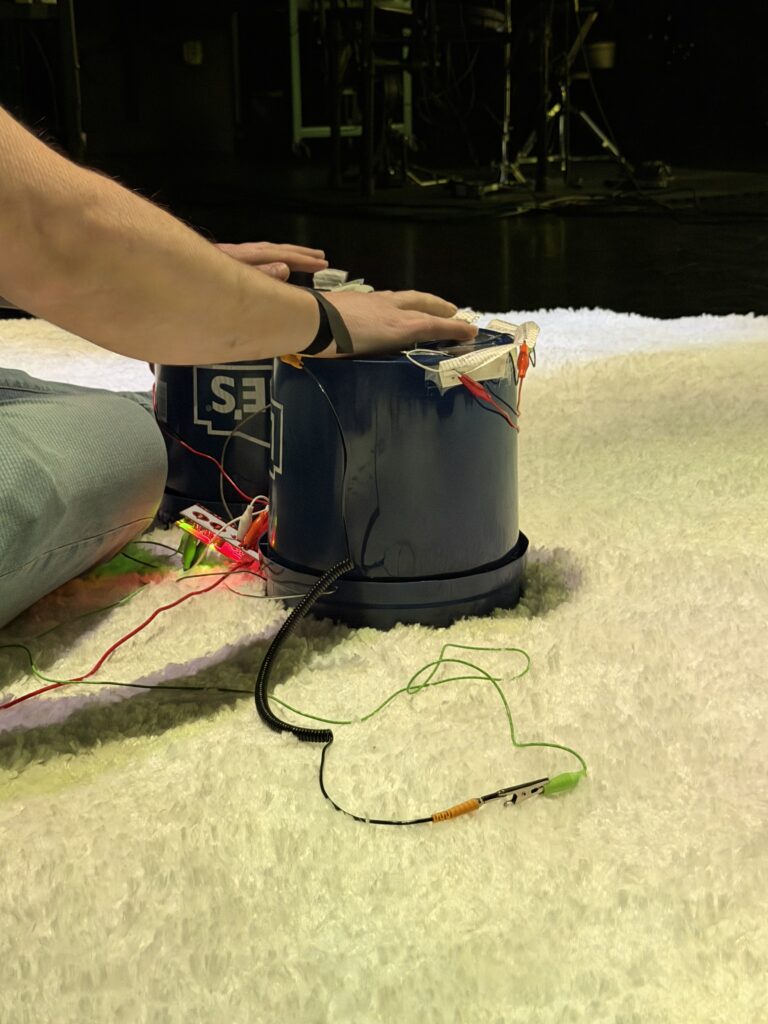

I won’t go into much detail on the pre-production and programming for this iteration, as not much has changed on that end; refer to the first post for that. The most important update to the project is in the controller; we are no longer using a paper plate (woohoo). We used a bucket (2 of them to be exact).

There were 9 inputs wired into the two buckets: 3 based on taps and 5 on holds. Originally, the player was to wear my watch with the conductive alligator clip attached, which worked pretty well for me, but stressfully, during the presentation, it was not working for Zarmeen. Michael came in with a save by lending me a bracelet designed to do exactly what my jimmy-rigged watch was meant to do (be a bracelet that makes the user conductive). The user finger-tapped their way through the visuals, each labeled with a lyric from the song (what the visuals were connected to).

While this controller was a big upgrade over a plate and closer to my vision of evoking a sense of musicality and rhythm in the user, it had its flaws:

Flaw #1. Nobody could remember the lyrical labels, nor did they look at them during their performance.

So yeah, turns out it’s hard to read and hit buttons that make visuals appear at the same time. I was not shocked at this feedback, as I found myself not reading them as I played, but I also know the song, so I wanted to test it anyway. I’ve decided to incorporate the lyrics in another way that will hopefully be more effective (check my final post for that reveal).

Flaw #2. The buckets are janky.

The buckets are janky. They are a bit ugly and easily disconnect from their inputs (the Makey Makey)… I will try my best to make this less the case in the next cycle.

Flaw #3. The meaning of the song did not matter to the users/was not clear.

No one remembered the lyrics, and people felt they were mostly preoccupied with making the coolest visual combos possible. I hope that by incorporating the lyrics into the visuals, the user will take heed of them.

Okay, time to be more positive.

My classmates reported feeling immersed in the process and as if they were performing or learning an instrument; some even expressed nervousness when in the “spotlight”. This accomplished one of my goals: to simulate the feeling of, well, performing a solo. I really enjoyed watching the unique interactions as people took turns. My classmates were oohing and aahing, and trying out different techniques to garner different visual combinations. I am lucky there are so many actual musicians in the bunch. Now I just need to accomplish some sort of meaning-making process in this experience.

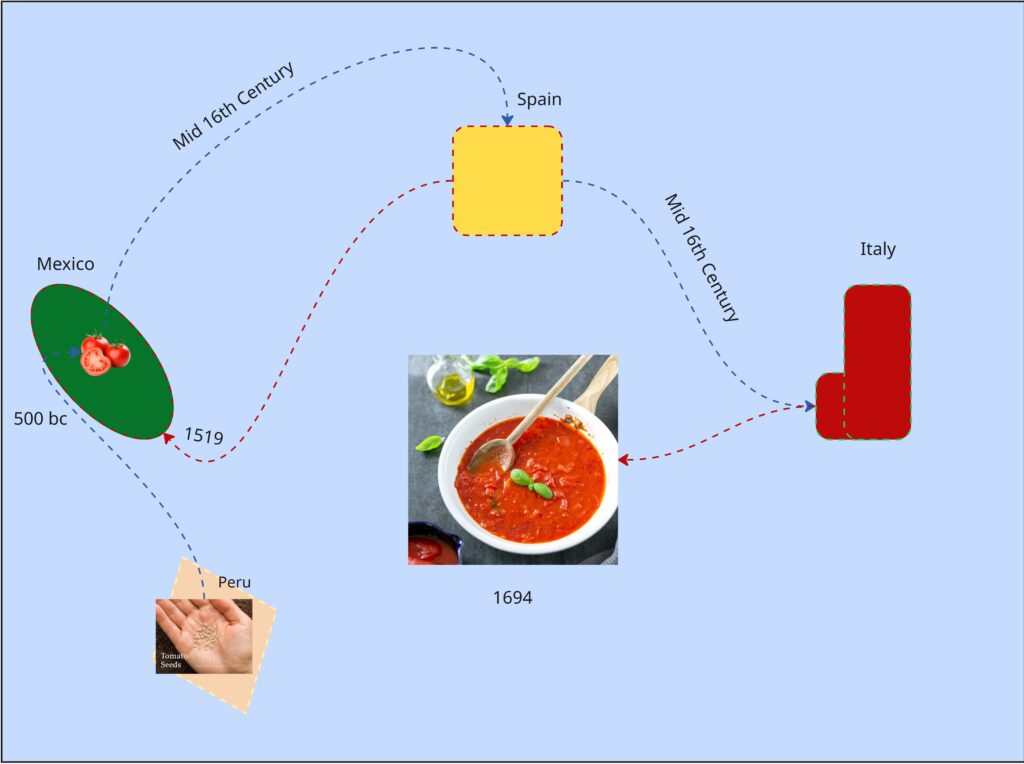

Pressure Project #3 – Tomato Tales

Posted: April 16, 2026 Filed under: Uncategorized

From my understanding, this pressure project was to create an audio-based experience tied to a cultural subject close to you. We only had 5 hours total to work on it. Maybe due to the amount of work I had ahead of me at the time, in this course and others, I decided to go with the first idea that came to me. I thought of tomatoes.

In a design course I took the prior semester, we did a visual mapping exercise. We had to make a map about tomatoes within the class period, anything about tomatoes. Most people chose cooking processes or the life cycle of a tomato as an agricultural good. I tried to find a connection between tomato sauces, as I thought of mole (the sauce) and how every culture around the world seems to have its own version of tomato sauce… so where did tomatoes come from, and how did they get to Italy?

It was colonialization, because of course it was. But tomatoes originated in South America, in the Inca Empire, and flourished in the Aztec Empire.

It’s not the prettiest chart, but I spent most of the exercise learning about tomatoes.

So, I made an audio experience using tomatoes as the means of interaction. While we weren’t allowed to show any visuals during the first playthrough, I planned to reveal the control panel for the sounds in a second playthrough (which was allowed). I prompted the audience to try to piece together the narrative taking place, both before and after the second playthrough.

As for the audio, I built a library of 15th-century Inca instruments, Aztec instruments, and 16th-century Spanish instruments. I began the recording with the sound of seeds rattling in a bag, followed by Inca instruments, gradually joined by Aztec performances, showcasing the migration and meeting of two cultures. Aztec celebratory performances take the lead for a bit, to indicate the prosperity of the empire and the cultivation of the crop. What follows is a change of lighting and sound. Waves and grumbling can be heard, followed by heavy footfall. A Spanish guitar plays a riff and is met with an Aztec Death Flute (scary ass sound), which is quickly cut off by the boom of a musket. This was to show the way weapons (guns) from Spanish and other Western colonial forces were able to lethally end even the mightiest of native empires. To conclude, waves and ship sailing sounds ensue again; a ren-faire-sounding band performance plays (apparently the sound of Italy at the time) along with chopping noises (the tomato is being used).

Shout out to Luke for basically nailing the message on the head when I prompted everyone to guess.

The recording process was very stupid. I used the Makey Makey and its soundboard app; however, the app doesn’t have a feature that lets you record in the browser. So, I set up a microphone beside my speaker, and I am so shocked it didn’t sound horrible. I realized I had made more of a tactile activity for myself and a far more cerebral activity for my audience (a thinking game).

I chose this topic because colonialization is the primary driving force that has led to the life I live today. Colonialization is what makes me an American and not some random person in Ireland or Germany right now (where my ancestors are from). Colonialization is what provides us with iPhones, clothes… bananas, most stuff really. My partner is here because her ancestors were stolen from their country and brought here as slaves. Behind almost every invention, creature comfort, and privilege that makes up the West is a history of bloodshed… including spaghetti, if you follow the threads enough. This isn’t something to make people feel bad or anything. Knowing this type of thing is important to me: how much violence makes up the creature comforts we have today. Who am I harming, or participating in the harm of, by consuming in the empire I live in now? How do I react to learning these things?

The good news is, I don’t really like tomatoes anyway.

Mole is pretty good though.

Cycle One: The Movement-Based Sound Explorer

Posted: April 13, 2026 Filed under: Uncategorized | Tags: cycle 1, FluCoMa, MaxMSP, MediaPipe, touchdesigner

As I began thinking about what I wanted to make my cycles about, I found myself gravitating towards a question I had previously been interested in when beginning to work on my senior project at the beginning of the year: How might technology allow for the creation of new modes of musical interface, where the relationship between audience and performer is almost entirely dissolved?

My primary resource was Max/MSP, as I know it best of all the computer music software out there, and I find it very useful for developing new ways of making music.

Going into this cycle, I knew one of the central resources that could help me answer this question was Google MediaPipe, real-time motion capture software that uses webcam input as opposed to dedicated hardware/software that requires mo-cap suits. This allows for systems that anyone can easily interact with, even without knowing how the system works or what each mo-cap landmark is controlling. I handled this part of my patch in TouchDesigner, as the Max integration of MediaPipe has some difficulties I don’t have time to get into here.

My main goals for this cycle were to create an interface for interacting with sound that was fun and interesting, but that also left room for potential emergent behavior when left in the hands of different users.

The second major piece of software I used was the Fluid Corpus Manipulation (FluCoMa) toolkit for Max/MSP (also available in SuperCollider and Pure Data). This toolkit uses machine learning to analyze, decompose, manipulate, and play back a large collection (or corpus) of samples. I initially chose this piece of software because one of its modes of playback is a 2D plotter, which can map two different aspects of the sample analysis onto an X and Y axis. I thought this would be a perfect interface for MediaPipe control, as the base 2D plotter uses mouse input, which I found to be detrimental to using it as an “instrument.”
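To make the 2D plotter idea a bit more concrete, here is a minimal Python sketch of the kind of lookup involved: every sample slice sits at a point on the plot, and a hand position picks whichever slice is closest. This is not my Max patch or FluCoMa's own code. The corpus values, the slice names, and both function names are made up for illustration, and it assumes the hand landmark arrives as normalised 0 to 1 image coordinates with Y growing downward.

```python
import math

# Hypothetical corpus: each slice has two analysis values,
# already normalised to the 0..1 range used by the plot.
CORPUS = {
    "slice_a": (0.10, 0.20),
    "slice_b": (0.80, 0.25),
    "slice_c": (0.50, 0.90),
}


def hand_to_plot(landmark_x, landmark_y):
    """Map a normalised webcam landmark onto the plot.

    Image coordinates grow downward, so Y is flipped.
    Both values are clamped to 0..1.
    """
    def clamp(value):
        return max(0.0, min(1.0, value))

    return clamp(landmark_x), clamp(1.0 - landmark_y)


def nearest_slice(x, y, corpus=CORPUS):
    """Return the name of the slice whose plot point is closest to (x, y)."""
    return min(corpus, key=lambda name: math.dist(corpus[name], (x, y)))


if __name__ == "__main__":
    # A hand raised high in the middle of the frame.
    x, y = hand_to_plot(0.5, 0.1)
    print(nearest_slice(x, y))
```

A brute-force search like this is fine for a handful of points. A large corpus would want a proper spatial index instead.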

I had initially wanted to expand the idea of the 2D plotter to a 3D one, as I felt being able to interact with the patch in a 3D space would be much more natural. However, I found expanding the logic to work in 3 dimensions was a much more difficult task than I’d thought, so I decided to stick with the 2D plotter for this cycle.

Results

I thought that I was mostly very successful with the goals I set out to accomplish. Everyone wanted to try out the patch, which I thought was a testament to the “fun” and “interest” aspects of it. The controls were also quickly picked up on, which was a goal of mine, as I’m interested in systems that audiences can interact with regardless of whether they’re conscious of the mechanics of that interaction. I was most interested to see how different people had their own unique ways of interacting with it as well. Chad, for example, was really trying to make something rhythmic and intelligible out of it, while others were going all over the place, or looking for specific sounds.

Some missed opportunities that I want to expand on in future cycles are the use of the Z dimension in controlling the playback of samples, as well as the use of multiple limbs to control playback. As you can see in the video, users were somewhat restricted in how they could control the patch by the Z direction not doing anything, as well as by the fact that only the right hand could trigger sounds. By expanding this idea to three dimensions instead of two, and allowing for the use of multiple limbs, I think it’ll give people more freedom in how they interact with the corpus of sounds.
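As a rough sketch of where this could go (not something I have built yet), the Python below shows one way a 3D, multi-limb lookup might work. Each limb finds its own nearest slice in a three-value space, and it only triggers when that nearest slice changes. The corpus values, limb names, and function names are all hypothetical.

```python
import math

# Hypothetical 3D corpus: three normalised analysis values per slice.
CORPUS_3D = {
    "slice_a": (0.1, 0.2, 0.1),
    "slice_b": (0.8, 0.3, 0.5),
    "slice_c": (0.5, 0.9, 0.9),
}


def nearest_slice(point, corpus=CORPUS_3D):
    """Closest slice to a point; math.dist handles any number of dimensions."""
    return min(corpus, key=lambda name: math.dist(corpus[name], point))


def slices_to_trigger(limbs, last_played):
    """Decide which limbs should trigger a sound on this frame.

    limbs maps a limb name to its (x, y, z) position.
    last_played remembers the last slice per limb and is updated in place,
    so a limb that stays near the same slice does not retrigger it.
    """
    triggered = {}
    for limb, point in limbs.items():
        name = nearest_slice(point)
        if last_played.get(limb) != name:
            triggered[limb] = name
            last_played[limb] = name
    return triggered


if __name__ == "__main__":
    memory = {}
    frame = {"left_hand": (0.1, 0.2, 0.2), "right_hand": (0.6, 0.8, 0.8)}
    print(slices_to_trigger(frame, memory))  # both hands trigger
    print(slices_to_trigger(frame, memory))  # nothing new to trigger
```

Keeping a separate memory per limb is what lets two hands play different regions of the corpus at once without stepping on each other.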

This was the first real project I’ve done with FluCoMa, and thus I learned a ton about its mechanisms, particularly the storage of non-audio data in buffers. This is a concept used a lot more in environments like SuperCollider or Pure Data, as Max has some other objects for storing that kind of information. However, because of the way the machine learning tools in FluCoMa work, it needs to store all of the information it may need in RAM. This was also the first project I’ve done sending OSC data between different apps on my computer, which had a bit of a learning curve, as I discovered the OSC data in my setup was arriving as strings instead of floating-point numbers. This didn’t create any real difficulty, as the conversion took no time, but it did make me aware of an important aspect of using OSC (particularly with Max, as certain objects process strings/floats/integers differently).
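For anyone who runs into the same thing, here is a small Python sketch of the conversion step: take whatever arrives in an OSC message and coerce it to floats before using it. In my patch this happened inside Max, so this is only an illustration. The function names and the fallback value are assumptions, not part of any OSC library.

```python
def to_float(value, default=0.0):
    """Coerce one incoming OSC argument to a float.

    Accepts floats, ints, numeric strings, and bytes.
    Anything that cannot be parsed falls back to the default.
    """
    if isinstance(value, bytes):
        value = value.decode("utf-8", errors="replace")
    try:
        return float(value)
    except (TypeError, ValueError):
        return default


def parse_landmark(args):
    """Turn a list of OSC arguments (e.g. x, y, z) into a tuple of floats."""
    return tuple(to_float(arg) for arg in args)


if __name__ == "__main__":
    # Mixed types, as might arrive from different senders.
    print(parse_landmark(["0.25", 0.5, b"0.75"]))
```

Falling back to a default instead of raising keeps a single malformed message from stalling a live performance, at the cost of hiding the bad value.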