Pressure Project 3 (Murt)

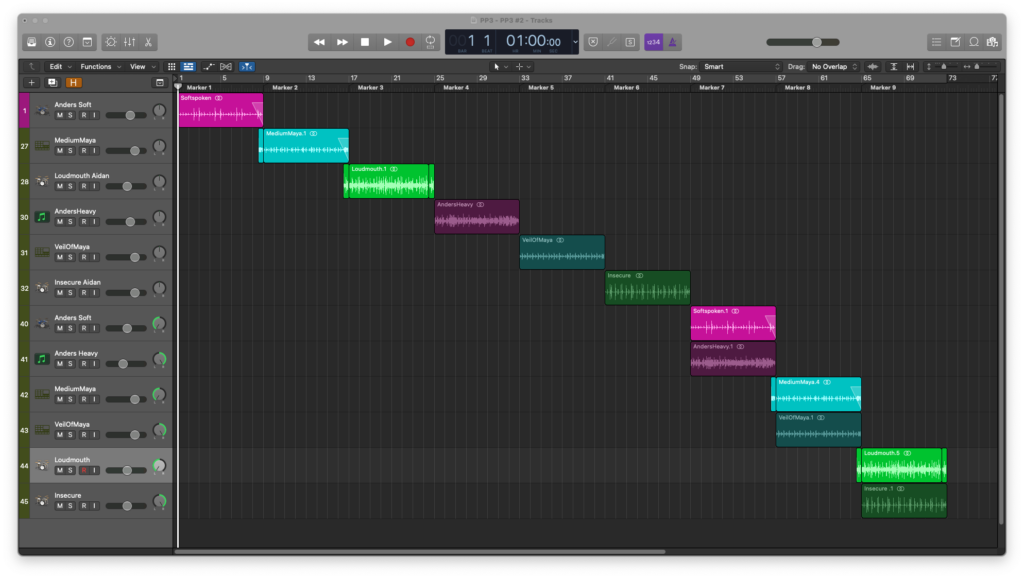

Posted: April 28, 2021 Filed under: Uncategorized

For this project, I wanted to explore the use of spatial effects to distinguish the inner states of mind of different characters from their outward presentation. Within Logic Pro X, I set up three of the “smart drummers” (an oxymoron, depending on who you ask). Each drummer plays an outer-world part, an inner-world part with some form of reverb/delay, and a third section where the two parts are combined but panned hard to opposite sides of the binaural field.

Anders is Logic’s metal drummer, but I used him to play something steady and subdued (soft-spoken) outwardly. For the inner section, I used an instance of EZDrummer2 Drumkit from Hell with a reverb and dub delay to create the inner metalhead.

Maya is a retro 80s drummer with a rad WYSIWYG vibe.

Aidan is indie-rock oriented. He is constantly overplaying and it doesn’t suit the music. In other words, he is a loudmouth.

Based on the critique session, it seemed that the project might read better if I put each drummer’s parts in their own sequence rather than ordering them as three external, then three internal, then three discrepancy sections.

Cycles 1-3

Posted: April 28, 2021 Filed under: Uncategorized

For the duration of the current millennium, I have been working in the live audio field. I have also been involved in a handful of theatre events. It is through this work that I have grown attached to the practice of providing support to performers. Within the pandemic milieu, I have been severed from my Allen & Heath digital audio console for the longest period yet. More importantly, I have no access to performers to support. I am not much of a performer myself, so I have resorted to the lowly wooden train set.

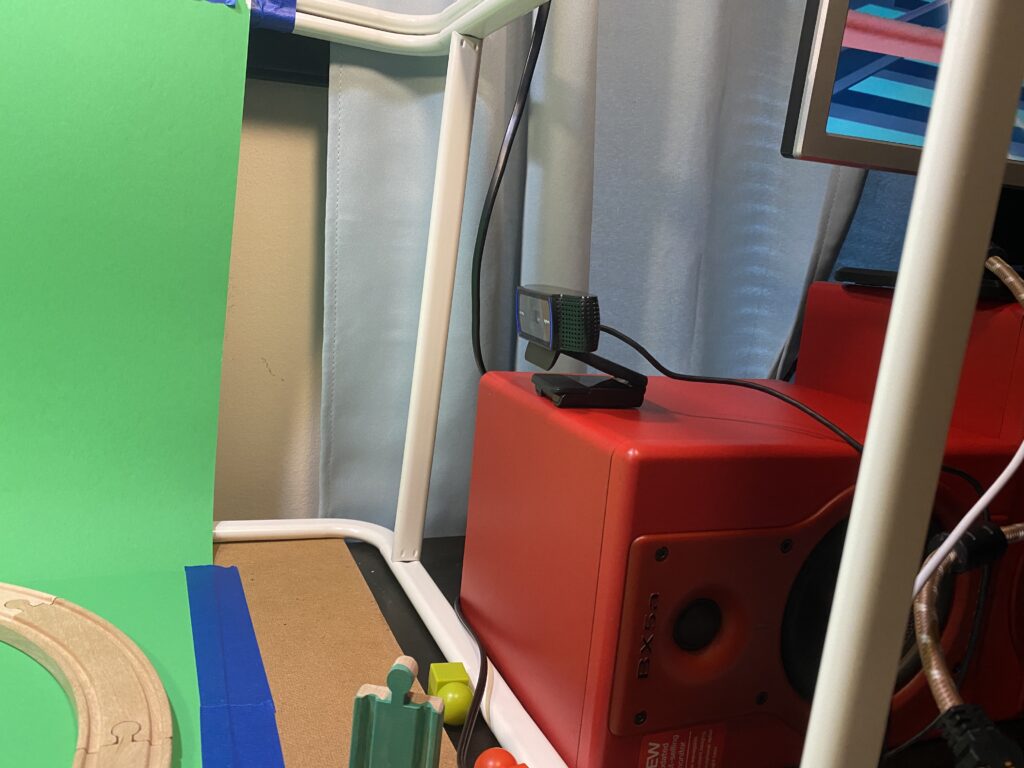

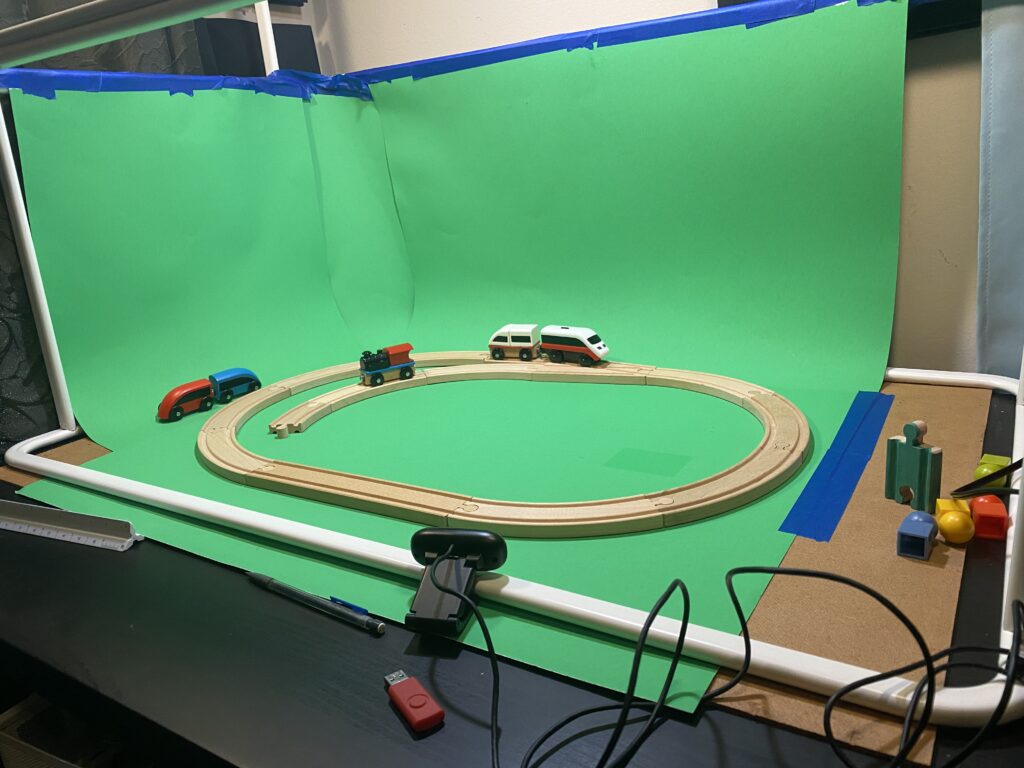

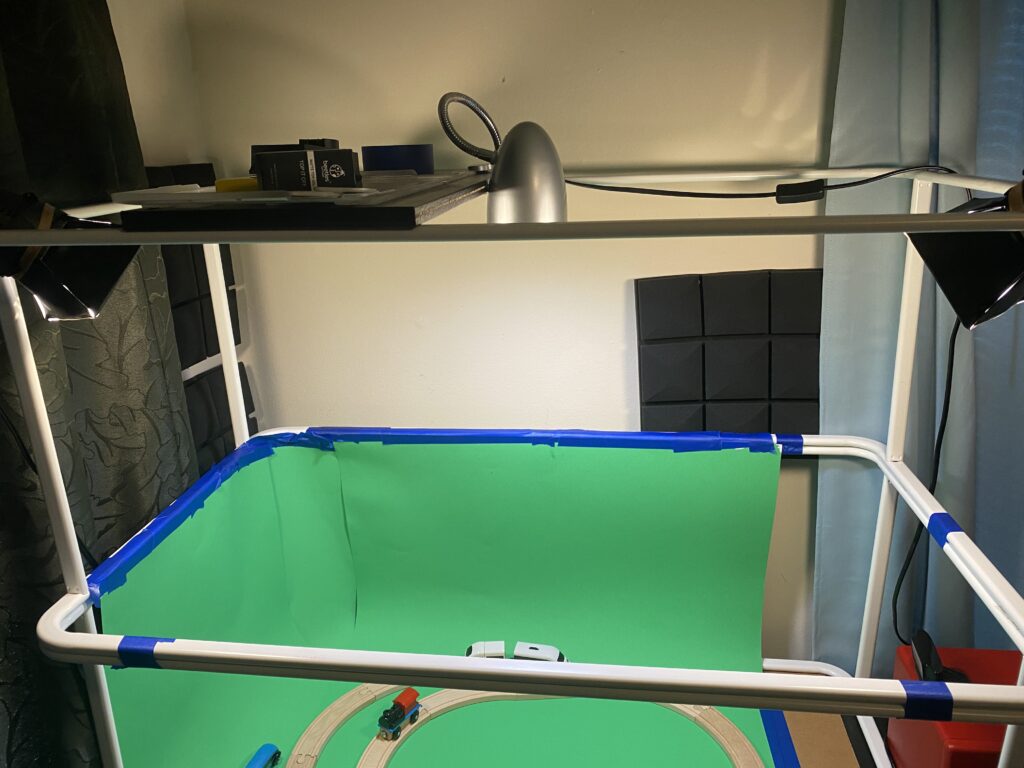

In Cycle 1, I created a green box setup for the purpose of chromakeying my train into an interactive environment. It was a simple setup: 2 IKEA Mulig clothing racks to provide a structure for mounting lights and cameras, 3.5 sheets of green posterboard, 2 Logitech webcams (1 of which is 1080p), a pair of mini LED soft box lights for key lighting, and a single soft LED for back lighting.

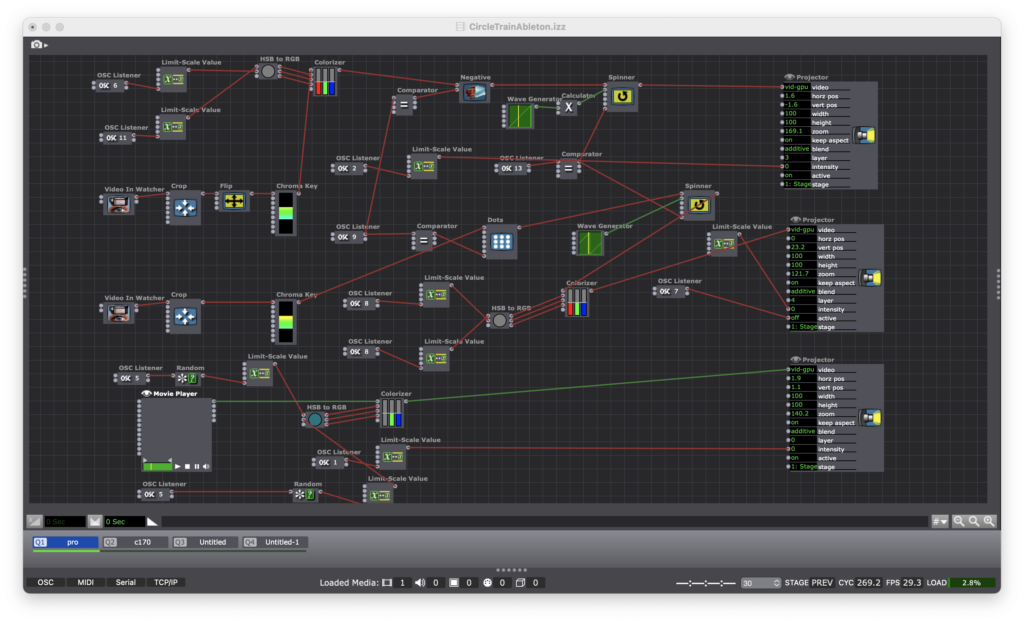

Within Isadora, I patched each of the webcams into a distinct video effect, a spinner actor, and a master RGBA/Colorizer. Using the “Simple” preset in TouchOSC on an iPad and an iPhone, I was able to set OSC watchers to trigger each of the effects, the spinner and the colorizer. The effects were triggered with the MPC-style drum pad “buttons” in TouchOSC on the iPad and the colorizer was set to an XY pad on the iPhone. All of this is layered over a blue train themed video. The result is a patch that makes the train a true “performer” dancing to a Kick and Snare beat…that no one can hear…yet.

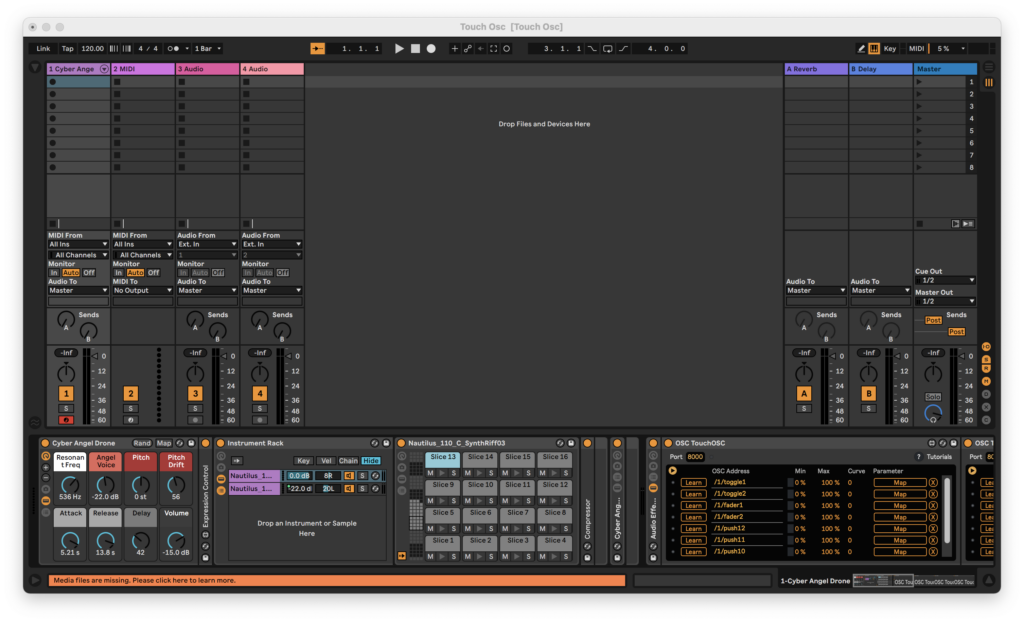

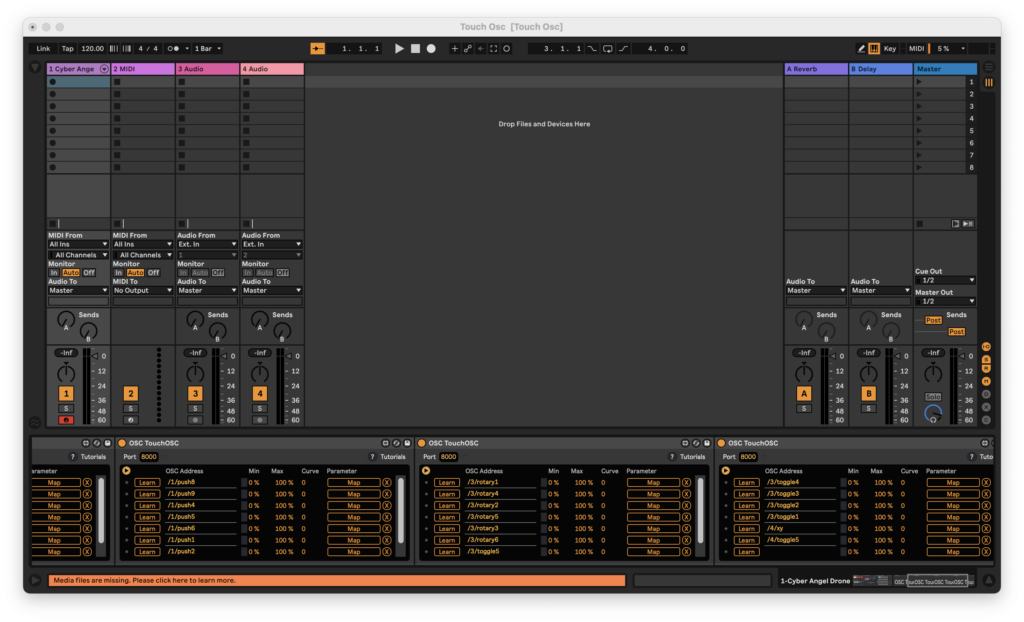

Cycle 2 began with the untimely death of my green box in a gravity-related accident. In dealing with this abrupt change in resources, the cycle saw the implementation of Ableton Live. Woven into the Cycle 1 patch is a change of OSC channels in order to line up with the MIDI notes of a Kick and Snare in Ableton. I built some ambition into my Ableton set by assigning every button in the “Simple” TouchOSC preset. The result is a similar video performance by the train, but now we can hear its music.

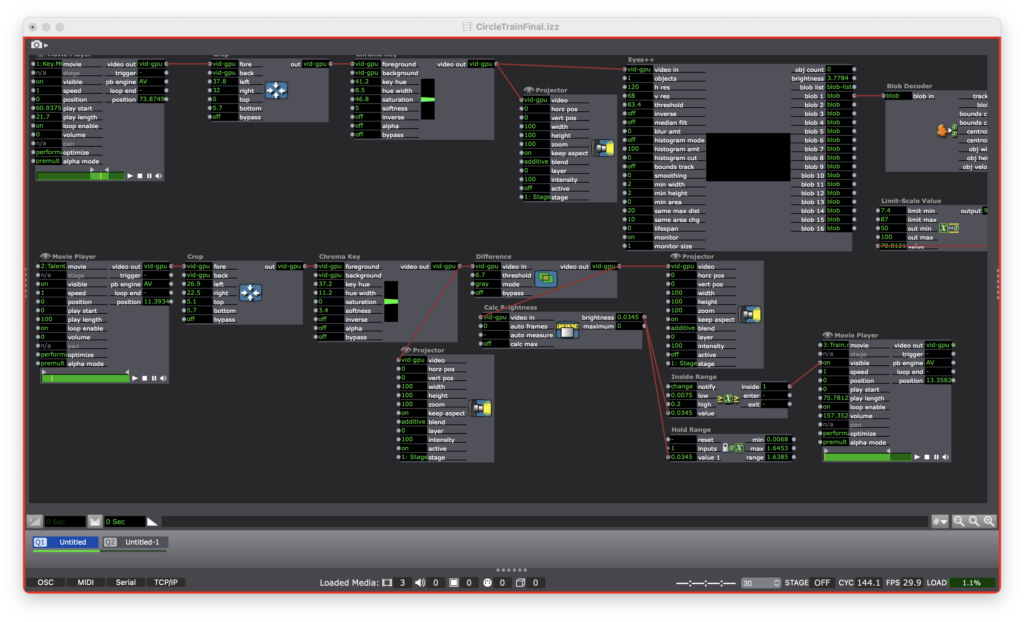

Cycle 3 began with a number of deviations from the train theme. I was looking for new ways to integrate my previous discoveries in a manner that would be less constrained by the physical domain existing solely in my house. I toyed around with some inverse chromakey patches that would sense the head of a blue-yarn mallet and trigger a sound. In returning to the train theme, I attempted to make an overlay that would use blob tracking and proximity targets to trigger sounds.

Ultimately, I resurrected the green box…and the product looks and behaves a bit like a zombie. I went with a different Eyes++ setup in tandem with a simple motion tracker. As it turns out, I messed up the Eyes++ to the point that it wasn’t doing anything at all. In my final patch, the motion sensor is simply turning a train sound on and off as the train enters the frame of the camera. I intended to implement a cartoon overlay that would follow the train around the track thanks to the Eyes++ rig. Alas, the Roger Rabbit train is Sir Not-Appearing-in-This-Film thanks to a supply chain disruption of that most important of resources: time.

In this patch, I used video of the train from two different angles: bird’s eye and slightly downward. This made the patch easier to work with since I wasn’t constantly turning the train on and off. It also zombified the Cycle a bit more with the train no longer being a live performer. However, I justify this decision with the idea that the train was only ever a stand-in for a live performer.

On a final note, I will be packaging the final patch for future enjoyment to be accessed on this site.

Cycles 2+3 (Sara)

Posted: April 28, 2021 Filed under: Assignments, Sara C | Tags: Godot

Intent

Originally, I intended to call this project “Cliffside Cairn.” My goal was to build an ambient, meditative game in which the user could pick up and stack rocks as a way to practice slowing down and idly injecting a moment of calm into the churn of maintaining a constant online presence in the current global pandemic. I took inspiration from the Cairn Challenges present in Assassin’s Creed: Valhalla. While I haven’t been able to maintain the attention necessary to tackle a new, robust open-world game in some time, my wife has been relishing her time as a burly viking bringing chaos down upon the countryside. Whenever she stumbled upon a pile of rocks on a cliff, however, she handed the controller over to me. The simplicity of the challenge and the total dearth of stakes was a relief after long days.

As the prototype took shape, though, I realized that no amount of pastels or ocean sounds could dispel the rock stacking hellscape I had inadvertently created. I showed an early version to a graduate peer, and they laughed and said I’d made a punishment game. Undeterred, I leaned into the absurdity and torment of watching teetering towers tumble. I slapped the new name Rock Stackin’ on the project and went all in.

Process

Initially, I sought to incorporate Leap Motion controls into the Godot Game Engine, so the user could directly manipulate 3D physics objects. To do so, I first took a crash course in Godot and created a simple 3D game based on a tutorial series. With a working understanding of the software under my belt, I felt confident I could navigate the user interface to set up a scene of 3D rock models. I pulled up the documentation I had barely skimmed the week before about setting up Leap Motion in Godot—only to find that the solution required a solid grasp of Python.

After it became clear that I would not be able to master Python in a week, I briefly toyed around with touch-and-drag controls as an alternative before setting Godot aside and returning to Isadora. Establishing Leap Motion controls in Isadora was straightforward; establishing physics in Isadora was not. Alex was remarkably patient as I repeatedly picked his brain for the best way to create a structure that would allow Isadora to recognize: 1. When a rock was pressed, 2. When a rock was released, and 3. Where the rock should fall when it was released. With his help, we set up the necessary actors; however, incorporating additional rocks presented yet another stumbling block.

I returned to my intent: stack rocks for relaxation. Nowhere in that directive did I mention that I needed to stack rocks with the Leap Motion. Ultimately, I wanted to drag and drop physics objects. That was not intuitive in Isadora, but it was in Godot, so at the 11th hour, I returned to Godot because I believed it was the appropriate tool for the job.

Challenges

As a designer, I know the importance of iteration. Iterate, iterate, iterate—then lock it in. The most significant challenge I faced with this effort was the “locking it in” step. I held onto extraneous design requirements (i.e., incorporate Leap Motion controls) much longer than I should have. If I had reflected on the initial question and boiled it down to its essential form sooner, I could have spent more time in Godot and further polished the prototype in time for the final presentation.

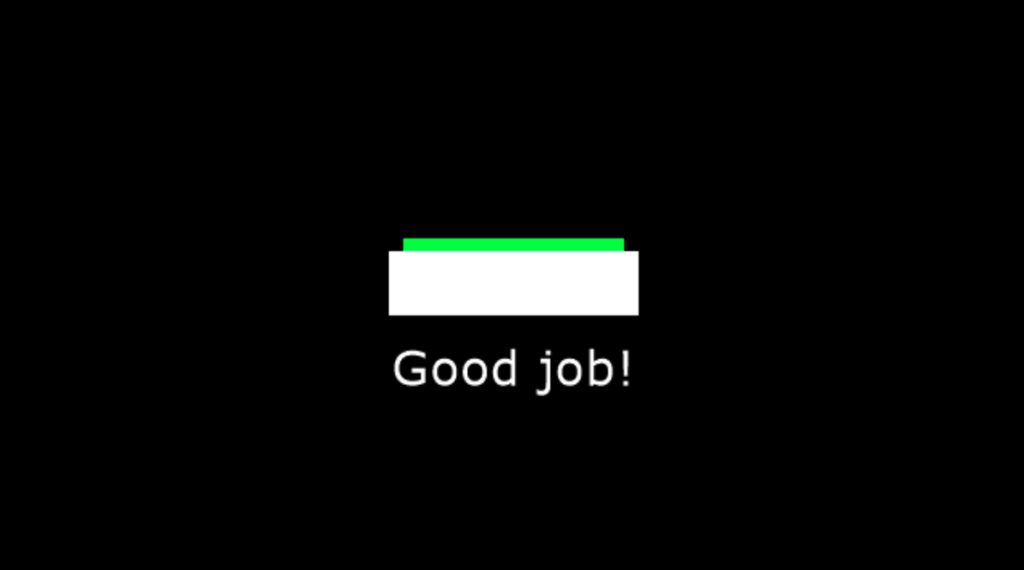

Additionally, when I did decide to lean into the silliness of the precariousness of the rock stack, I decided I wanted to insert a victory condition. If the user could indeed create a rock stack that stayed in place for x amount of time, I wanted to propel them to a “You Win!” screen. I thought I would replicate the boundary reset script I successfully implemented, but I realized one could game the system just by dragging an object into the Area2D node.
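One way to picture a win check that is harder to game, sketched here in Python rather than in Godot's own scripting and not taken from the project's code: let the timer run only while the rock sits in the goal area, is not being dragged, and has come to rest. The names `WinTimer`, `in_zone`, `dragging`, and `at_rest` are hypothetical, and `hold_seconds` stands in for the "x amount of time" above.

```python
from dataclasses import dataclass


@dataclass
class WinTimer:
    """Counts how long a stack has held still inside the goal area."""

    hold_seconds: float   # hypothetical: how long the stack must stay put
    elapsed: float = 0.0  # seconds accumulated so far

    def update(self, dt: float, in_zone: bool, dragging: bool, at_rest: bool) -> bool:
        """Advance by dt seconds; return True once the win condition is met."""
        if in_zone and at_rest and not dragging:
            self.elapsed += dt
        else:
            # Any drag, movement, or exit from the zone restarts the count,
            # so simply carrying a rock into the area does not score.
            self.elapsed = 0.0
        return self.elapsed >= self.hold_seconds


timer = WinTimer(hold_seconds=2.0)
for _ in range(2):
    won = timer.update(1.0, in_zone=True, dragging=False, at_rest=True)
print(won)
```

In an engine, `update` would be called once per physics frame with that frame's delta time; how the three flags are derived from the scene is left open here.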

Finally, while I did incorporate ambient wave sounds, I struggled to add additional sound effects. The rock splash sound was only supposed to trigger when it hit the water, but it fired when the scene began as well. I faced similar challenges when trying to add rock collision sound effects.

Accomplishments

Challenges aside, I’m incredibly pleased with the current prototype. I successfully implemented code that allowed for dragging and dropping of physics objects that interacted with colliders. Without that, Rock Stackin’ would have been impossible. Furthermore, I established a scene reset script if the user flings a rock off the edge. At first, I prevented the user from being able to drag a rock off the screen with the inclusion of multiple invisible colliders. However, I wanted some tension to emerge from the potential of a tumbling tower, and a reset seemed like a gentle push. Lastly, I’m pleased with the rock colliders I added. Capsule colliders caused jitter, while rectangle colliders provided no challenge. Multiple rectangle colliders, scaled and rotated to fit the rocks I had drawn, led to a pleasing amount of collision and groundedness.

Observations

As I mentioned previously, I was using software not ideally suited for my needs. My main observation stems from that realization:

Go where the water flows.

Identify the hierarchy in your design goals and use the best tools for the job. I spent a while bobbing against the shore before I pushed myself back into the stream to where the water flowed.

Cycle 2

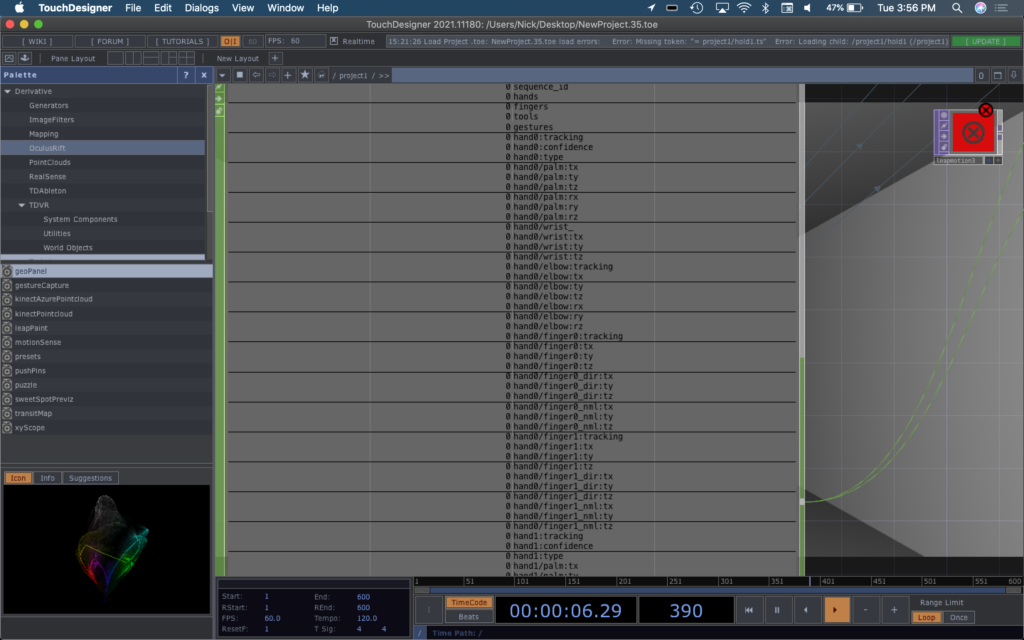

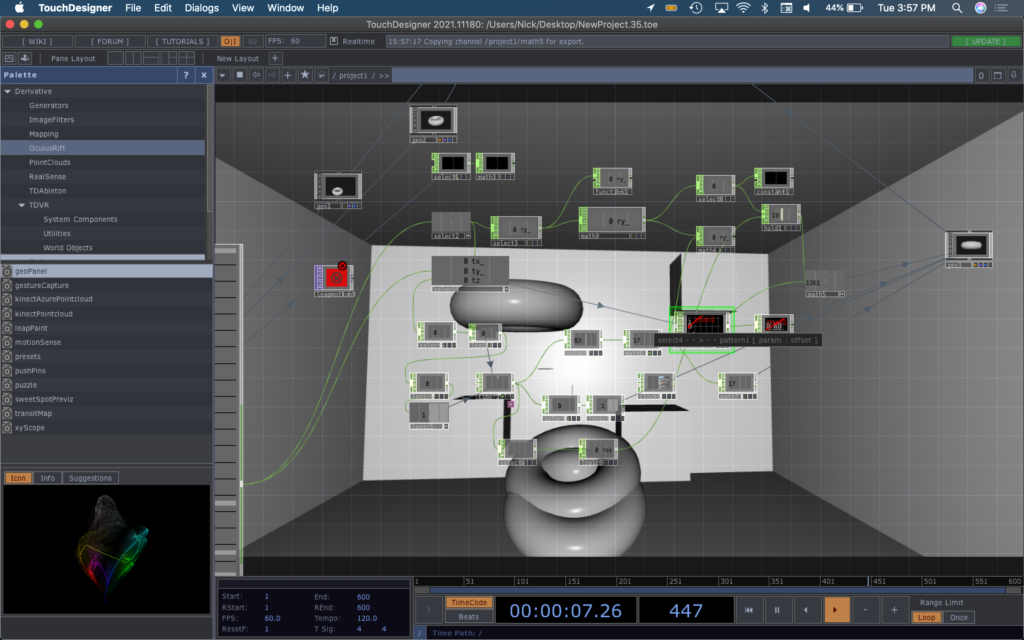

Posted: April 27, 2021 Filed under: Nick Romanowski

The goal with Cycle 2 was to create the Leap Motion-driven firing mechanism for the game environment. My idea was to use the Leap Motion to read a user’s hand position and orientation and use that to set the origin of, and trigger the launch of, a projectile. TouchDesigner’s Leap operator had an overwhelming number of outputs that read nearly every joint in the hand, but they didn’t quite have a clean way to recognize gestures. To keep things simple, the projectile launch is solely based on the x-rotation of your hand. When the Leap detects that your palm is perpendicular to the ground, it sends the pulse that causes the projectile to launch.
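As a rough illustration of that kind of trigger (a plain Python sketch, not the TouchDesigner network itself), the class below fires a single pulse when the hand's x-rotation enters a window around "perpendicular" and re-arms once the hand leaves it. It assumes the rotation arrives in degrees and that 90 degrees means perpendicular to the ground; `PalmTrigger`, `target_deg`, and `tolerance_deg` are hypothetical names and values.

```python
class PalmTrigger:
    """Emit one pulse each time the palm rotates into the firing window."""

    def __init__(self, target_deg: float = 90.0, tolerance_deg: float = 10.0):
        self.target_deg = target_deg        # assumed angle for "perpendicular"
        self.tolerance_deg = tolerance_deg  # assumed slack around that angle
        self._armed = True                  # ready to fire on the next entry

    def update(self, x_rotation_deg: float) -> bool:
        """Feed one rotation sample; return True only on the sample that fires."""
        in_window = abs(abs(x_rotation_deg) - self.target_deg) <= self.tolerance_deg
        fire = in_window and self._armed
        # Re-arm only after the hand has left the window, so holding the
        # pose does not launch a projectile on every frame.
        self._armed = not in_window
        return fire


trigger = PalmTrigger()
samples = [0.0, 85.0, 88.0, 20.0, -92.0]
print([trigger.update(s) for s in samples])
```

Firing on the edge rather than on the level is the design choice that matters here: one gesture produces one launch.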

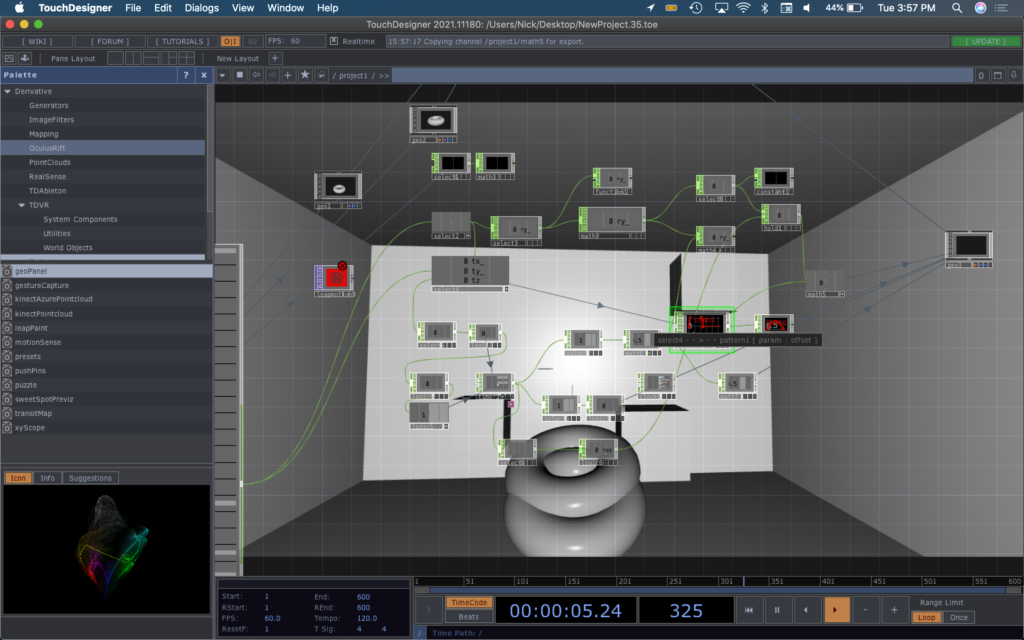

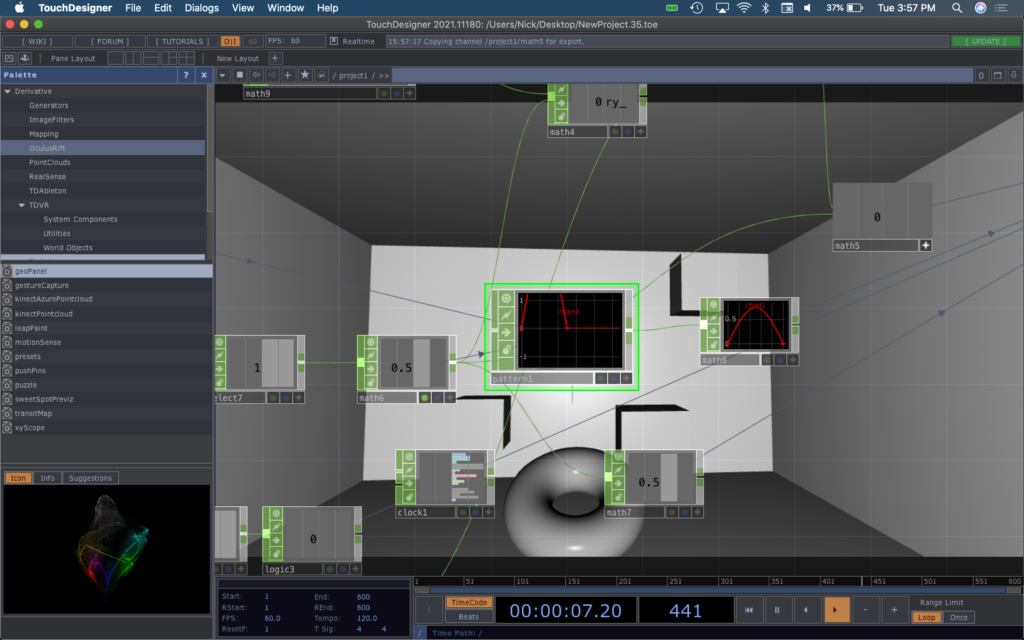

Creating launch mechanics was a bit of a difficult feat. The system I built in Cycle 2 had no physics and instead relied on some fun algebra to figure out where a projectile should be at a given time. When the hand orientation trigger is pulsed, a timer starts that runs the duration of the launch. When it’s running, a torus’s render is toggled to on and its position is controlled by the network shown below.

The timer numbers undergo some math to become the z-axis value of the torus. The value of the timer at a specific time feeds into a pattern operator formatted like a parabola to grab a y-axis value on said parabola and map it to the y-axis of the torus. The x-axis of the user’s hand is used to manipulate the amplitude of the pattern operator parabola to cause the torus to go higher if you tilt your hand back. Using a slope formula, y = mx, the x-axis position of the torus is calculated: the timer value is multiplied by a value derived from the y-rotation of your hand to fire the torus in the direction that you’re aiming. When the timer run completes, it resets everything and puts the torus back at its start point.
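The description above can be sketched as one small function. This is a minimal Python approximation under stated assumptions, not the actual operator network: the "some math" for the z-axis is assumed to be a simple linear scale, the parabola is assumed to start and end at zero height, and `torus_position`, `amplitude`, `slope`, and `z_speed` are hypothetical names.

```python
def torus_position(t: float, duration: float, amplitude: float,
                   slope: float, z_speed: float) -> tuple[float, float, float]:
    """Return (x, y, z) of the torus t seconds into a launch.

    amplitude: peak height, assumed to come from how far the hand tilts back
    slope:     the m in y = mx, assumed to come from the hand's y-rotation
    z_speed:   assumed linear scale from timer value to depth
    """
    u = t / duration                     # progress through the launch, 0..1
    y = amplitude * 4.0 * u * (1.0 - u)  # parabola: 0 at both ends, peak at u = 0.5
    x = slope * t                        # sideways drift grows with time
    z = z_speed * t                      # depth grows with time
    return (x, y, z)


for t in (0.0, 1.0, 2.0):
    print(t, torus_position(t, duration=2.0, amplitude=3.0, slope=0.5, z_speed=10.0))
```

Because there is no physics step, the position at any moment depends only on the timer value and the hand readings captured for the launch, which is why resetting the timer is enough to put the torus back at its start point.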

Cycle 1/2 (Sean)

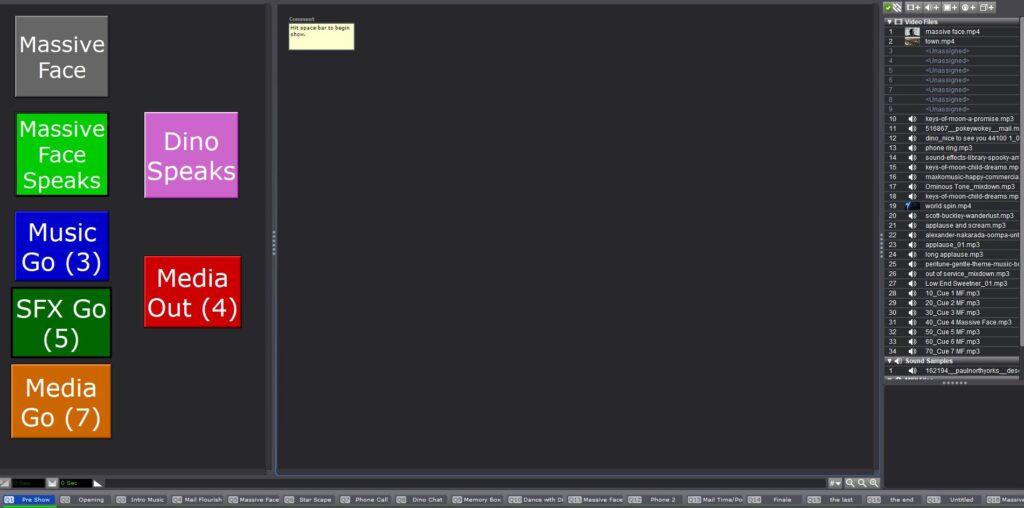

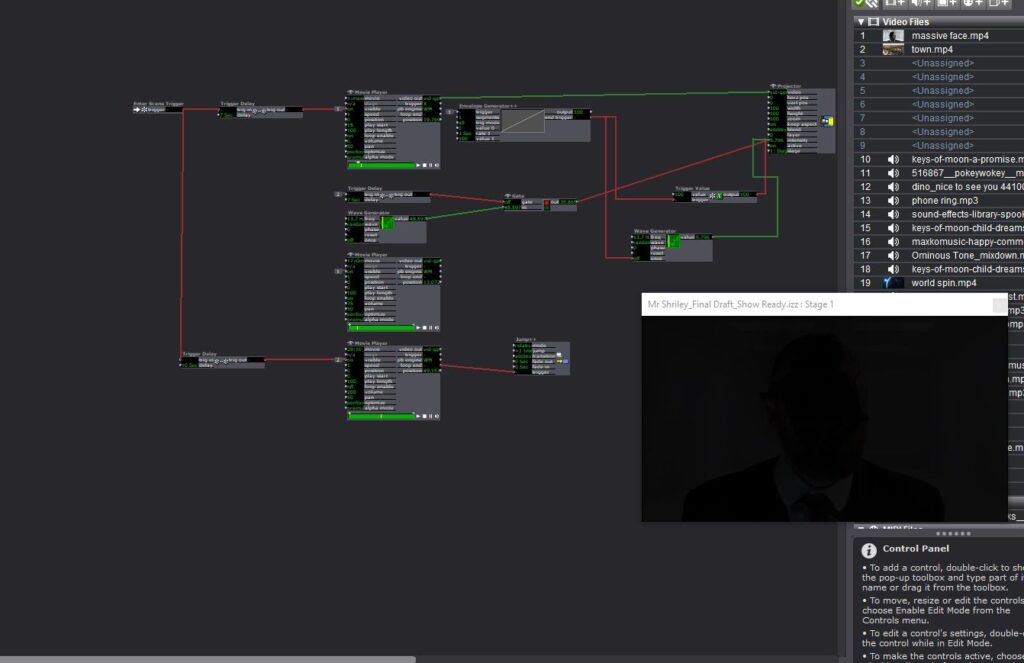

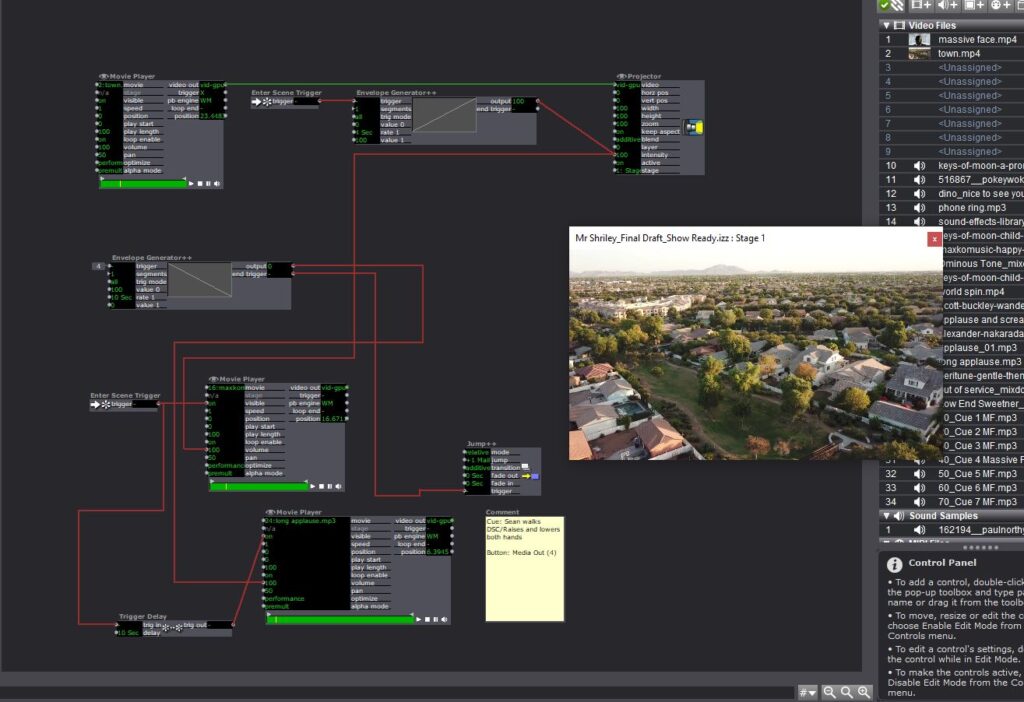

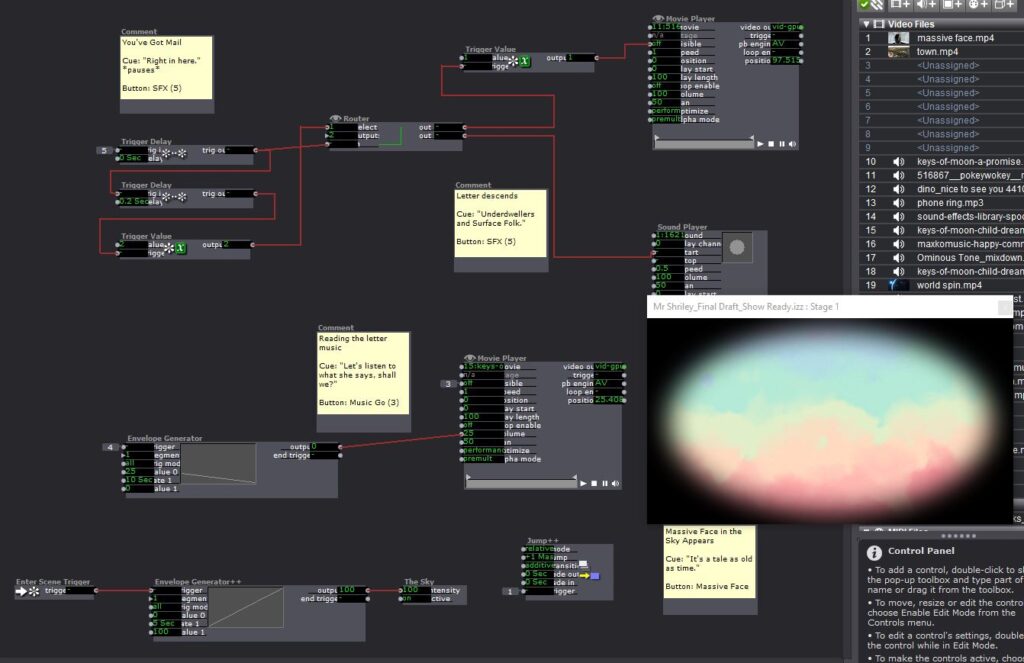

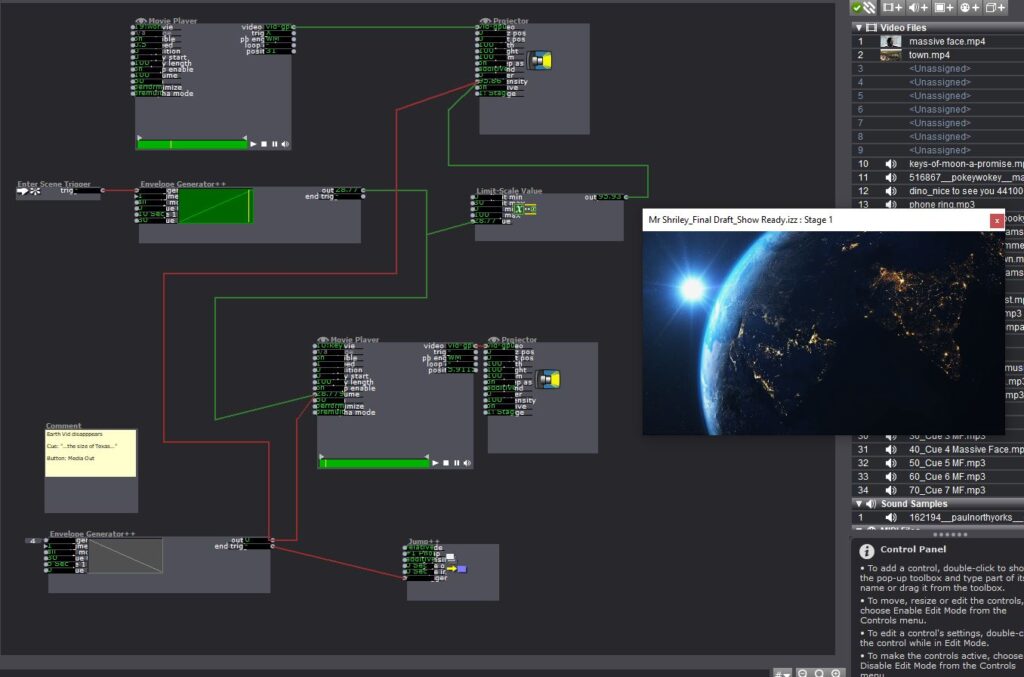

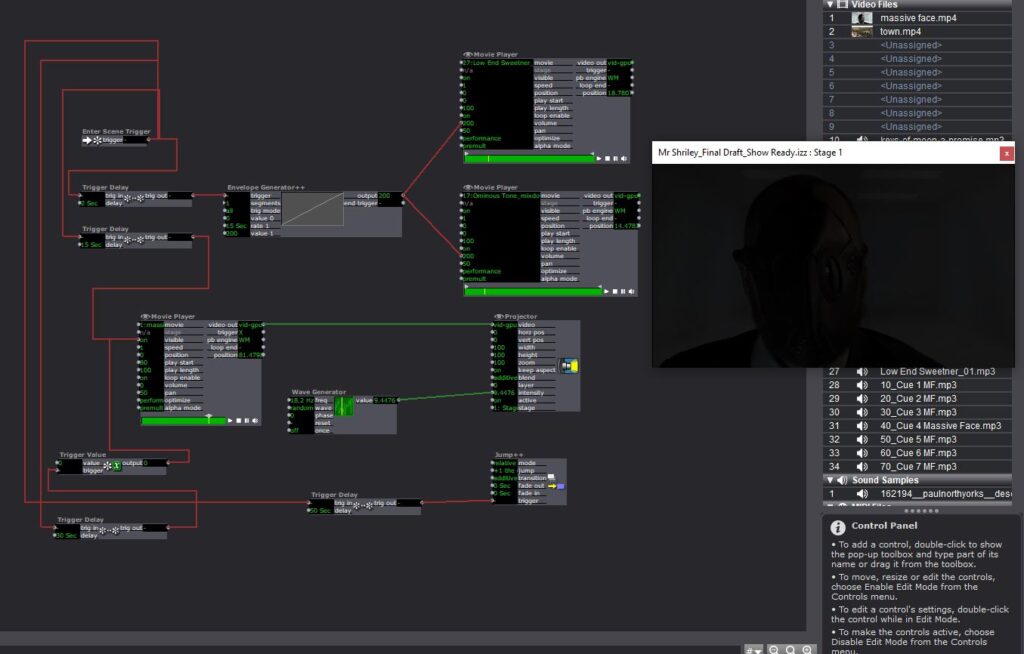

Posted: April 7, 2021 Filed under: Uncategorized

My first couple of cycles got mashed up a bit. I was working with the set deadline of tech rehearsal and performance of my solo thesis show, Mr. Shirley at the End of the World. In ordinary times, this would be presented live, but due to the pandemic, I had to film the production for virtual presentation. The primary reason I enrolled in this class was to work with Isadora to program the sound and media in my show. Here are some images below.

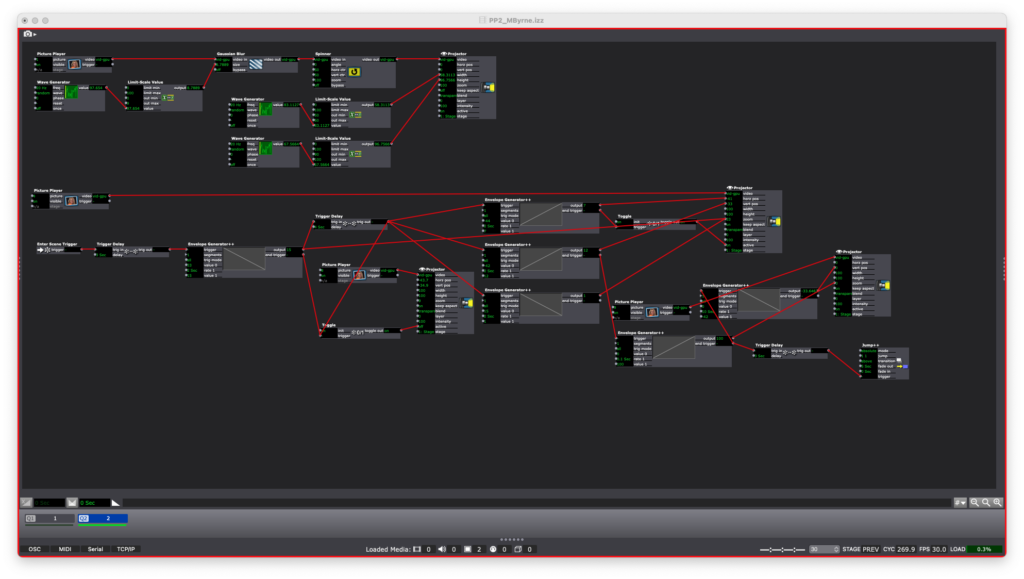

Basically, what I was trying to do was create a user-friendly interface for the person running the media/sound for my show to just hit assigned buttons and make things happen on cue. I tried my best to automate as many transitions as I could to cut down on them having to think too hard about what to do. For instance, to begin the show they just hit the space bar and the next couple of scenes cycled through automatically. A visual cue was noted in the program to let the Isadora operator know when to transition to the next scene by hitting the “Media Out” button I created.

I made some fairly significant use of the envelope generators in this to control the fading of music and media images. I also created a User Actor that would act as a neutral background and repeat several times throughout the performance. I also utilized a router on several scenes so that I could use the same button to initiate separate MP3 files.

The next two slides are from the end of the show. After the last cue I’m able to let the rest of the show play out without having to manually hit any buttons.

Cycle 1

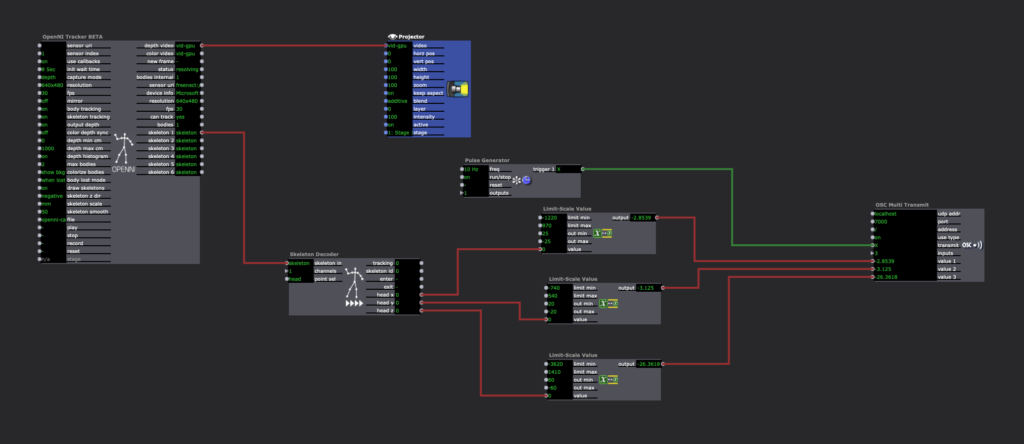

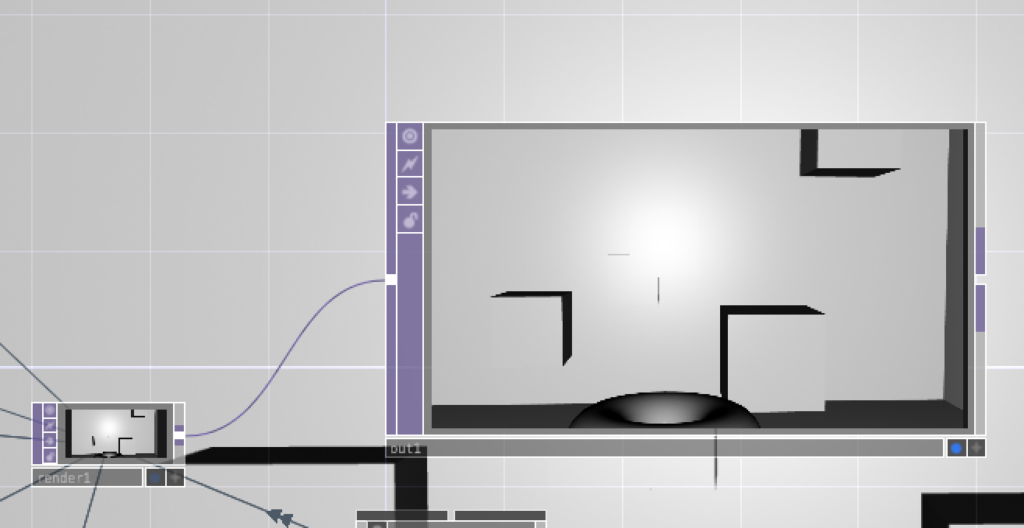

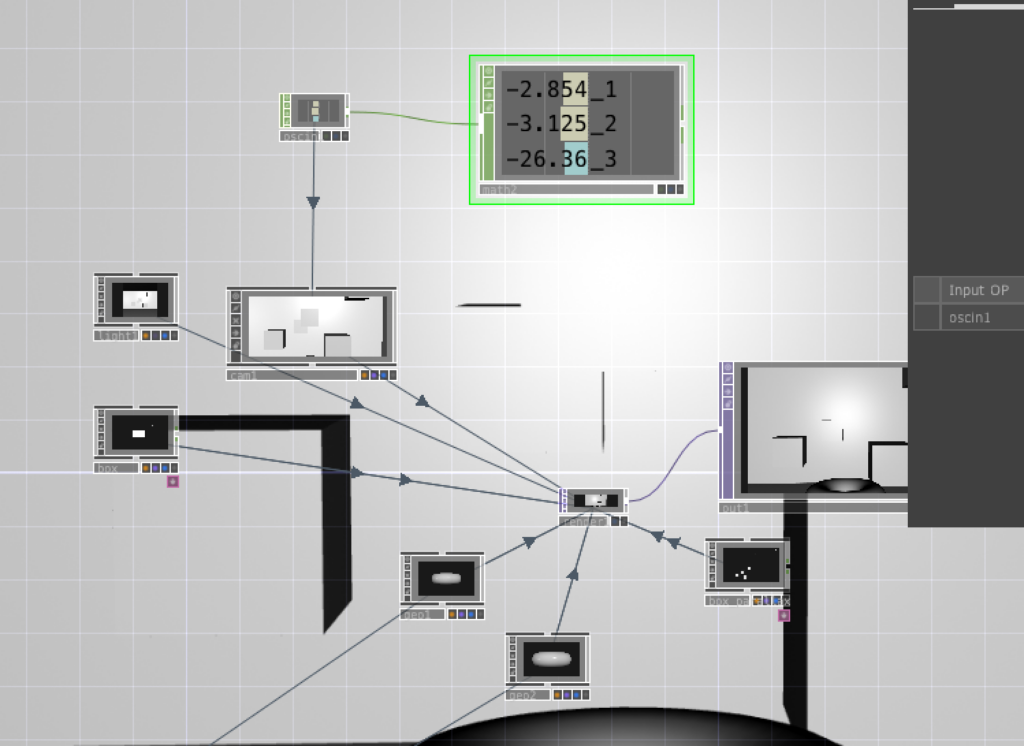

Posted: April 6, 2021 Filed under: Nick Romanowski

My overall goal for this final project is to create a video-game-like “shooting” environment that utilizes Leap Motion controls and a parallax effect on the display. Cycle 1 was focused on creating the environment in which the “game” will take place and building out a system that drives the head-tracking parallax effect. My initial approach was to use basic computer vision in the form of blob tracking. I originally used Syphon to send the Kinect depth image from Isadora into TouchDesigner. That worked well enough, but it lacked a z-axis. Wanting to use all of the data the Kinect had, I decided to try to track the head in 3D space. This led me down quite the rabbit hole as I tried to find a Kinect head-tracking solution. I looked into using NIMate, several different libraries for Processing, and all sorts of sketchy things I found on GitHub. Unfortunately, none of those panned out, so I fell back on my backup, which was Isadora. Isadora looks at your skeleton and picks out the bone associated with your head. It then runs that through a limit scale value actor to turn it into values better suited for what I’m doing. Those values then get fed into an OSC transmit actor.
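For readers unfamiliar with that step, the idea of limiting and rescaling a value can be approximated in a few lines of Python. This is only a sketch of the general clamp-and-rescale technique, not Isadora's implementation, and the ranges used in the example (a head position from 0 to 1 mapped to a camera angle from -30 to 30) are made-up illustration values.

```python
def limit_scale(value: float, in_min: float, in_max: float,
                out_min: float, out_max: float) -> float:
    """Clamp value to [in_min, in_max], then map it linearly to [out_min, out_max]."""
    clamped = max(in_min, min(in_max, value))        # limit step
    fraction = (clamped - in_min) / (in_max - in_min)  # 0..1 within the input range
    return out_min + fraction * (out_max - out_min)    # scale step


# Hypothetical example: normalized head x-position to a camera rotation in degrees.
for head_x in (-1.0, 0.5, 2.0):
    print(head_x, limit_scale(head_x, 0.0, 1.0, -30.0, 30.0))
```

Clamping first means a tracking glitch that reports a position far outside the expected range cannot swing the camera past its intended limits.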

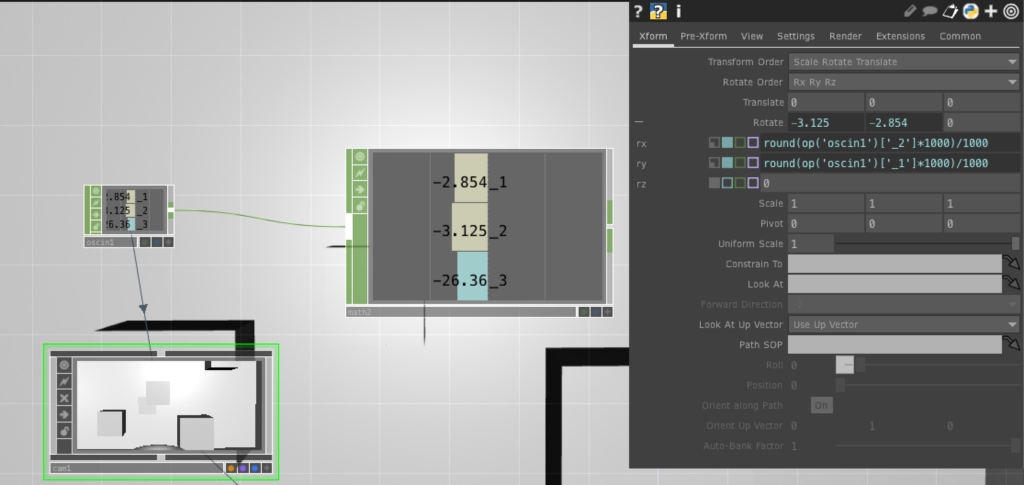

TouchDesigner has an OSCin node that listens for the stream coming out of Isadora. Using a little bit of Python, a camera in TouchDesigner allows its xRotation, yRotation, and focal length to be controlled by the values coming from the OSCin. The image below shows some rounding I added to the code to make the values a little smoother and more consistent.
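As a rough idea of what rounding incoming values can look like (this is a hypothetical sketch, not the author's code), the helper below snaps a noisy tracking value to the nearest multiple of a chosen step so that small jitters collapse to the same number. The name `round_to_step` and the step size of 0.5 are assumptions for illustration.

```python
def round_to_step(value: float, step: float = 0.5) -> float:
    """Snap value to the nearest multiple of step to suppress small jitter."""
    return round(value / step) * step


# Nearby noisy readings land on the same output value.
for reading in (12.2, 12.34, 12.41):
    print(reading, round_to_step(reading))
```

A larger step gives a steadier camera at the cost of visibly stepped motion, so the step size is a trade-off to tune by eye.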

TouchDesigner works by allowing you to set up a 3D environment and feeding the various elements into a Render node. Each 3D file, the camera, and lighting are connected to the renderer which then sends that image to an out which can be viewed in a separate window.

Cycle 1 Documentation (Maria)

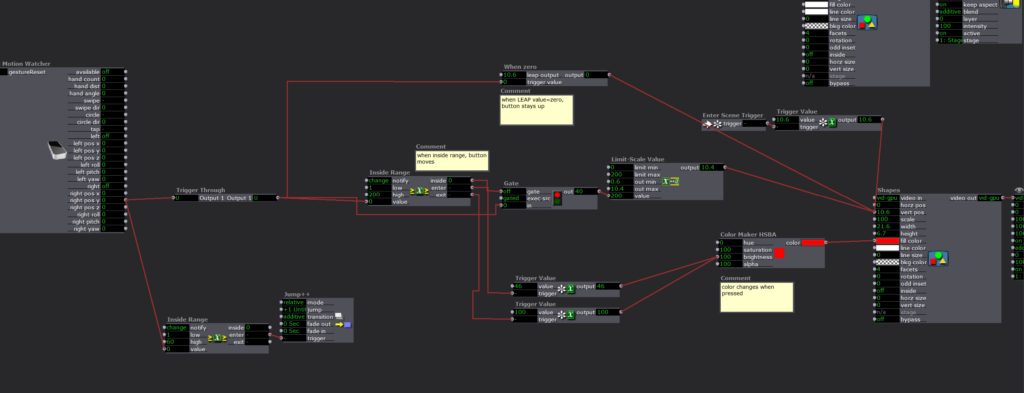

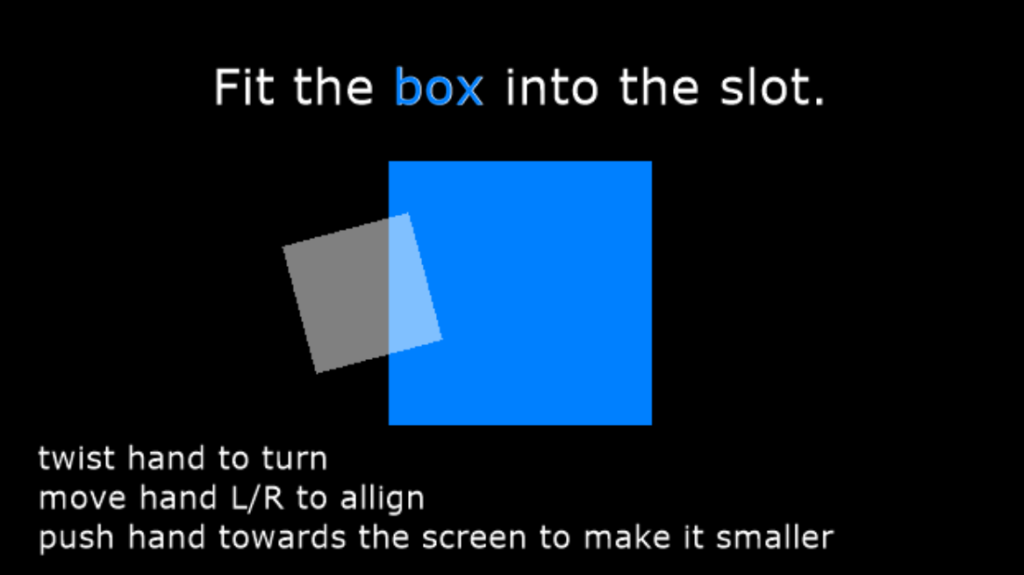

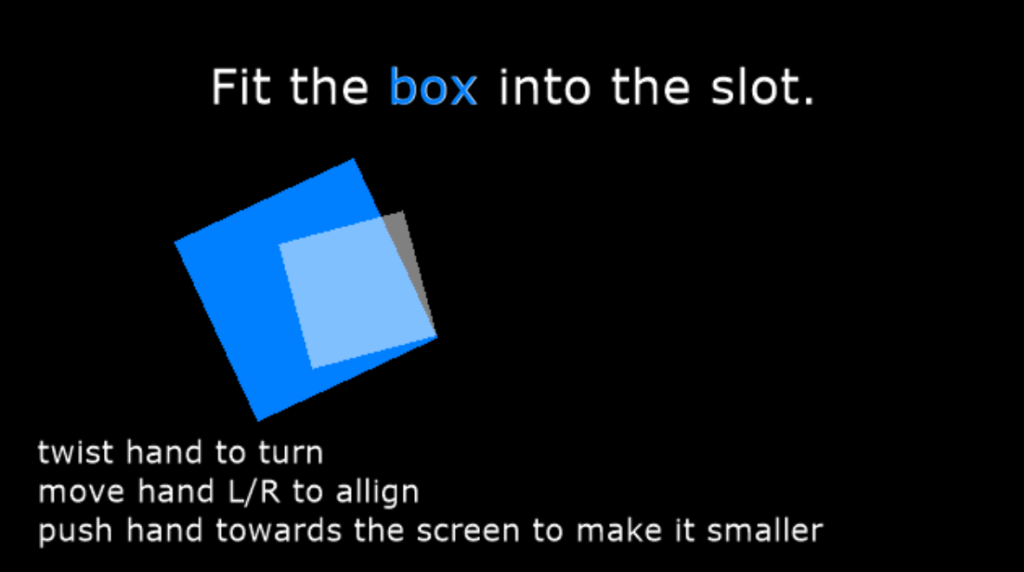

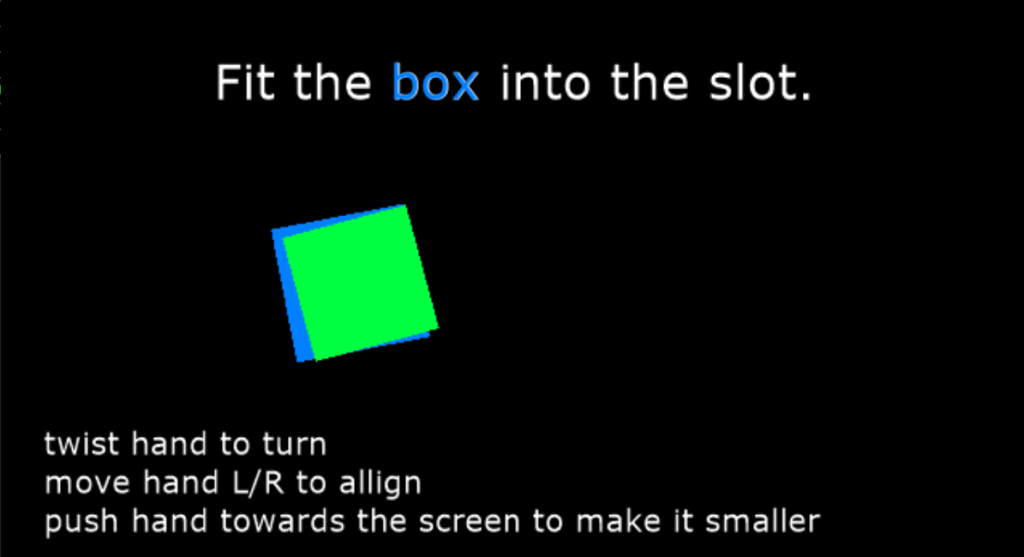

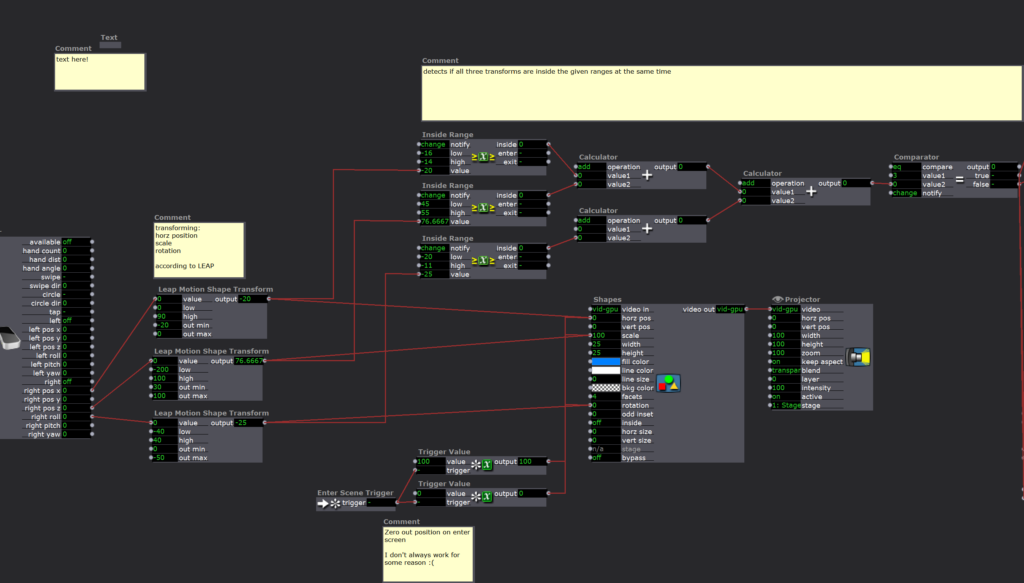

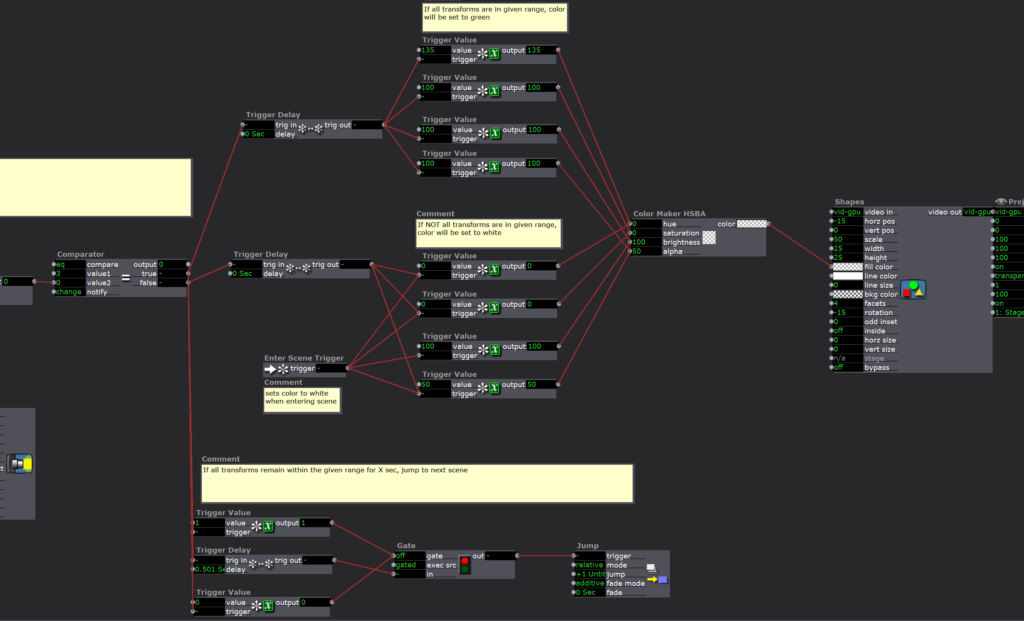

Posted: March 29, 2021 Filed under: Maria B, Uncategorized

For this cycle, I wanted to explore interaction design with the LEAP motion. I made two little ‘puzzles’ in Isadora in which the user would have to complete certain actions to get to the next step. I thought a lot about the user’s experience–providing user feedback in response to actions and making the movements intuitive. The first one was simply pressing a button by moving your hand downwards.

The second puzzle was aligning a shape into a hole based on the movement of the user’s hands.

For the next cycle, the goal is to create more of a story that guides/gives purpose to these puzzles.

Cycle 1 (Sara)

Posted: March 25, 2021 Filed under: Assignments, Sara C

Intent

For the remainder of the term, Alex has given us free rein to explore any hardware or software we so choose in order to create an experiential media system of our very own. I’m a lifelong gamer who normally can’t abide motion controls (It’s like those Reese’s commercials: “You got your motion controls in my video game!”), but I’m also desperately curious about the ways in which we can make VR experiences more immersive. As a result, my goal for the next month is to test Leap Motion functionality by swapping out conventional video game controls for hand gestures. Problem 1: I don’t have a VR headset at the moment upon which to test. Problem 2:

I’ve never made a video game.

I decided I’d hop, skip, and jump over Problem 1 entirely and simply focus on a desktop-based, 3D game experience for the duration of this project. As for Problem 2? Well, I did what most any human in the world looking to acquire a new skill would do in the present day—I started browsing YouTube tutorials. I figured I’d run a crash course in rookie game dev before tackling my own Leap-Motion-Meets-Godot prototype. One week and an eight-part video series later:

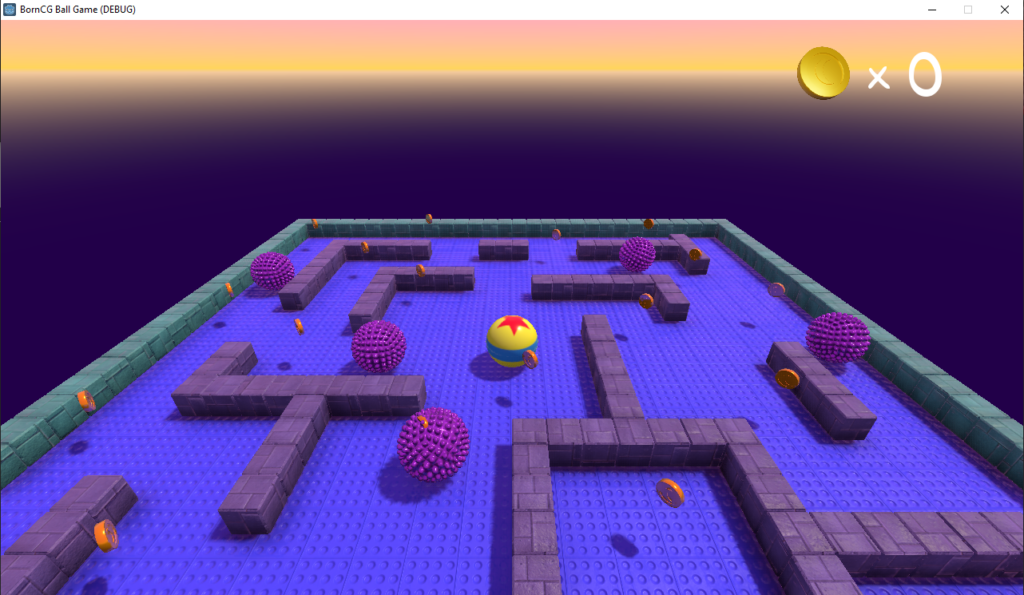

Et voilà, I made…someone else’s video game.

Difficulties

I mentioned to Alex that this achievement feels hollow. The BornCG channel is truly a monument to patient, thorough software skills instruction. I didn’t run into any significant difficulties while following this series because the teacher (who is an actual high school programming teacher, I believe) was so diligent in holding my hand the entire time. But I also completed the software equivalent of a Paint By Numbers picture. Because of that, I don’t feel true ownership of this project. Moving forward, I know I need to veer off the well-trodden, well-lit path and stumble around in the dark woods. It’ll be messy, yes. But it’ll at least be my mess.

Accomplishments

All of that being said, I will say there were a couple of lightbulb moments where I deviated from the script the tutorial instructor laid out before us. A commenter pointed out that the texture for the provided block asset was much larger than it needed to be, which resulted in slower game load times. I couldn’t quite parse the commenter’s proposed solution, so I hopped over into Photoshop and downscaled the texture maps in there and reapplied them in Blender. (Woo!) Later, someone else in the comments lamented that the built-in block randomization feature in Godot was buggy and prone to file size bloat, so I meticulously read, re-read, and re-re-read their solution for manually introducing block variety. (Huzzah!) Similarly, another commenter had a more straightforward solution to coin rotation transforms that didn’t include adding scores of empty objects; it took some time, but I successfully followed that little side trail as well. (아싸!)

Finally, like a teacher who shows you how to solve example problems before leaving you to your own devices, the instructor ended the series with the game only three quarters complete. I wanted to push it over the finish line, so using the skills I’d accrued, I populated the scene with additional enemies and coins to bring it wholly to life. I gotta say, while it may not feel like “my” game, I sure am proud of that low-poly assortment of randomized blocks, those spinning coins, and those gnarly, extra enemies.

Now, on to the next one!

(Link to video of completed game)

Pressure Project 3

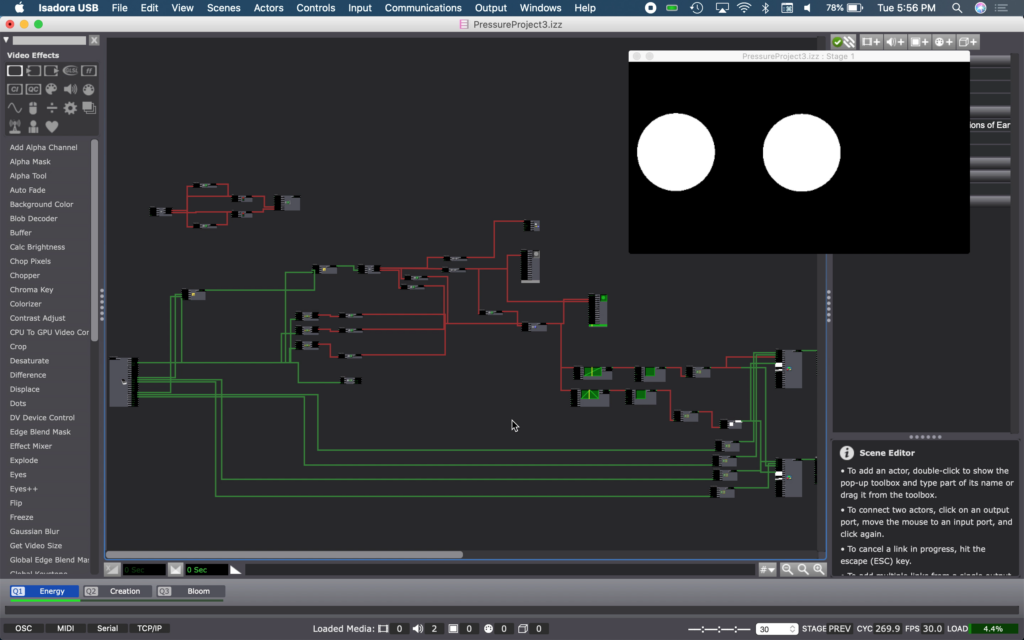

Posted: March 21, 2021 Filed under: Nick Romanowski, Pressure Project 3 | Tags: Nick Romanowski

For Pressure Project 3, I wanted to tell the story of the creation of earth and humanity through instrumental music. One of my favorite pieces of media that has to do with the creation of earth and humanity is a show that once ran at EPCOT at Walt Disney World. The nighttime spectacular was called Illuminations: Reflections of Earth and used music, lighting effects, pyrotechnics, and other elements to convey different acts showcasing the creation of the universe and our species.

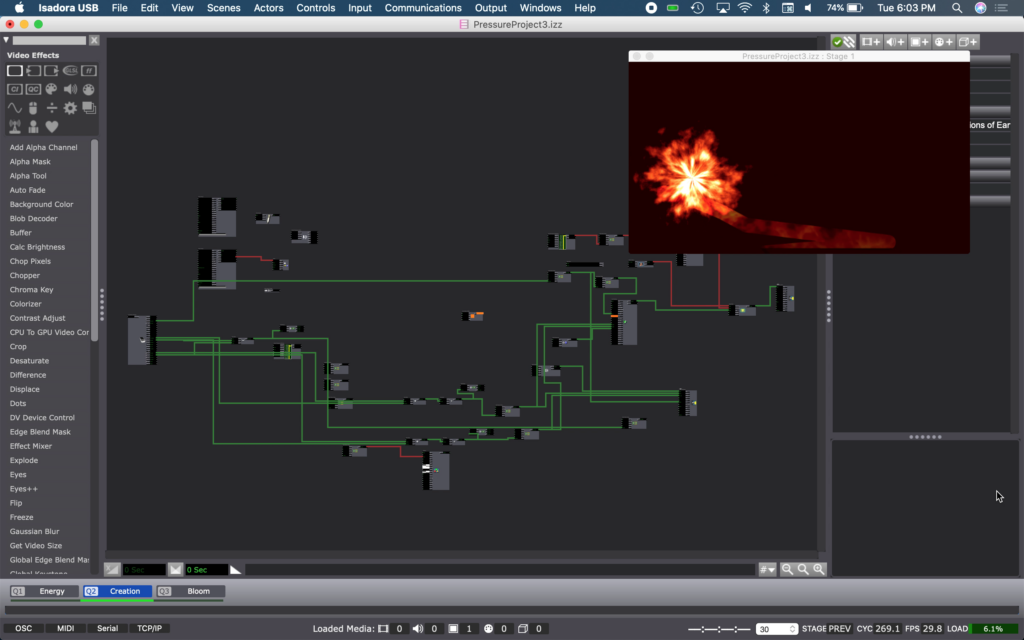

I took this piece of music into Adobe Audition and split the track up into different pieces of music and sounds that could be manipulated or “visualized” in Isadora. My idea was to allow someone to use their hands to “conduct” or influence each act of the story as it plays out through different scenes in Isadora. The beginning of the original score is a series of crashes that get more and more rapid as time approaches the big bang and kicks off the creation of the universe. Using your hands in Isadora, you become the trigger of that fiery explosion. As you bring your hands closer to one another, the crashes become more and more rapid until you put them fully together and you hear a loud crash and screech and then immediately move into the chaos of the second scene, which is the fires of the creation of the universe.

Act 2 tracks a fire ball to your hand using the Leap Motion. The flame effect is created using a GLSL shader I found on Shadertoy. A live-drawing actor allows a tail to follow it around. A red hue in the background flashes in sync with the music. This was an annoyingly complicated thing to accomplish. The sound frequency watcher that flashes the opacity of that color can’t listen to music within Isadora. So I had to run my audio through a movie player that outputted to something installed on my machine called Soundflower. I then set my live capture audio input to Soundflower. This little path trick allows my computer to listen to itself as if it were a mic/input.

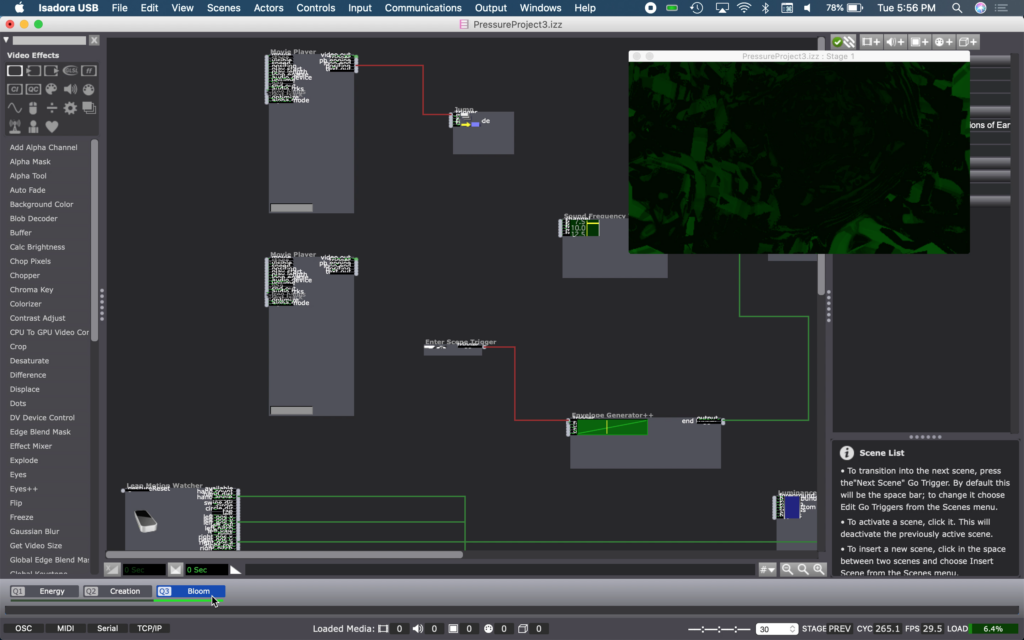

Act 3 is the calm that follows the conclusion of the flames of creation. This calm brings green to the earth as greenery and foliage take over the planet. The tunnel effect is also a Shadertoy GLSL shader. Moving your hands changes how it’s positioned in frame. The colors also have opacity flashes timed with the same Soundflower effect described in Act 2.

I unfortunately ran out of time to finish the story, but I would’ve likely created a fourth and fifth act. Act 4 would’ve been the dawn of humans. Act 5 our progress as a species to today.

Pressure Project 2 (Murt)

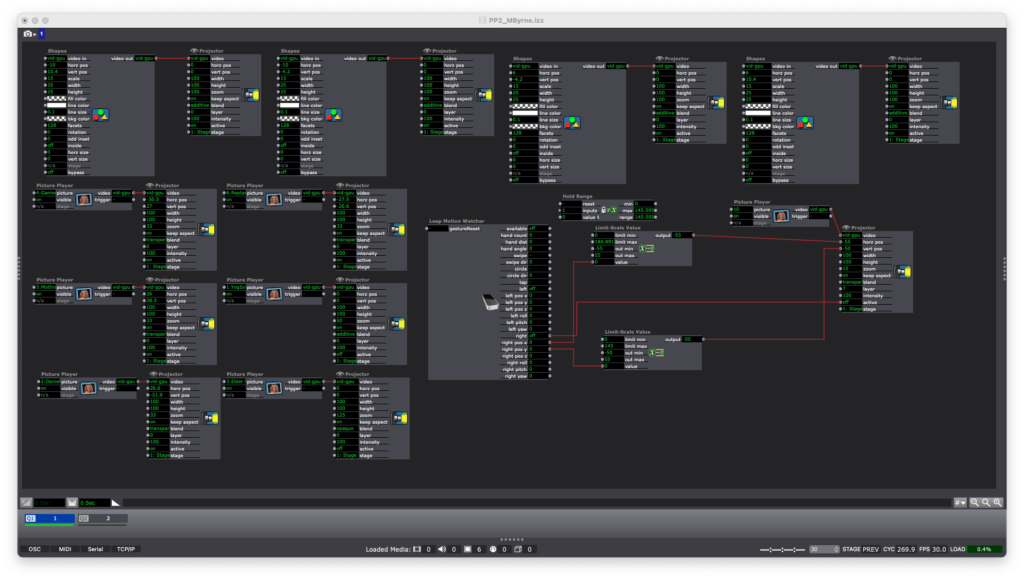

Posted: March 20, 2021 Filed under: Uncategorized

For this project, I decided to work with some design elements which I did not use in PP1: set travel paths and pre-existing image assets. While I was able to get the Leap Motion functioning as I wanted it (almost), I ran out of time before I could get the trigger system set up.

I call this piece Kaiju vs. Yog-Sothoth even though two of the four available characters are American cartoon characters. The characters are: Gamera, Mothra, Reptar, and Daniel Tiger. The user is intended to select characters by moving a cursor (a six-fingered hand pointer). Each character tries to fight the Lovecraftian “Outer God” Yog-Sothoth. Only Daniel Tiger is successful. Emotional intelligence is somehow effective against Yog-Sothoth’s non-corporeal intrusion of our world.

The first of the two Isadora scenes is the character select screen which includes the Leap Motion system, shapes, and character PNGs.

The second scene is Gamera going up against Yog-Sothoth. YS is animated with Gaussian Blur and some random waves which control X/Y dimensions. Gamera is animated in scale and path, plus a chat bubble. There is one additional animation which occurs after Gamera’s charge.

It would have been nice to see this finished, but I think what I was able to come up with is fun in itself.