Pressure Project 2

Posted: October 1, 2020 Filed under: Uncategorized

I learned from the last project and started with a written brainstorm of ideas before doing any actual work on this. Then I narrowed it down to what I actually wanted, drew a design, and specified my goals and the basic logic of the program. I decided to create a Spontaneous Dance Party Machine for my staircase. The goal was to randomly surprise someone coming down the stairs with an experience that would inspire them to dance and be in the moment. So while they might have been on their way to get food before trudging back up the stairs for more hours of Zoom, this gives them a chance to let loose for a minute of their day.

The resources I used for this were the Makey-Makey attached to aluminum for the trigger. I designed the aluminum on the staircase to be triggered by the normal way that someone might put their hand on the railing when descending.

Then the Makey-Makey plugged into my computer which ran the Isadora program.

Then I had to get the stage from Isadora into my projector. I did not have the right kind of cable to plug the projector directly into my computer but it would plug into my phone. So I used an app called ApowerMirror which was installed on both my phone and computer in order to mirror my computer screen onto my phone. This allowed me to transfer what was on my full screen stage on my computer into the projector that would serenade the person who triggered the stairs.

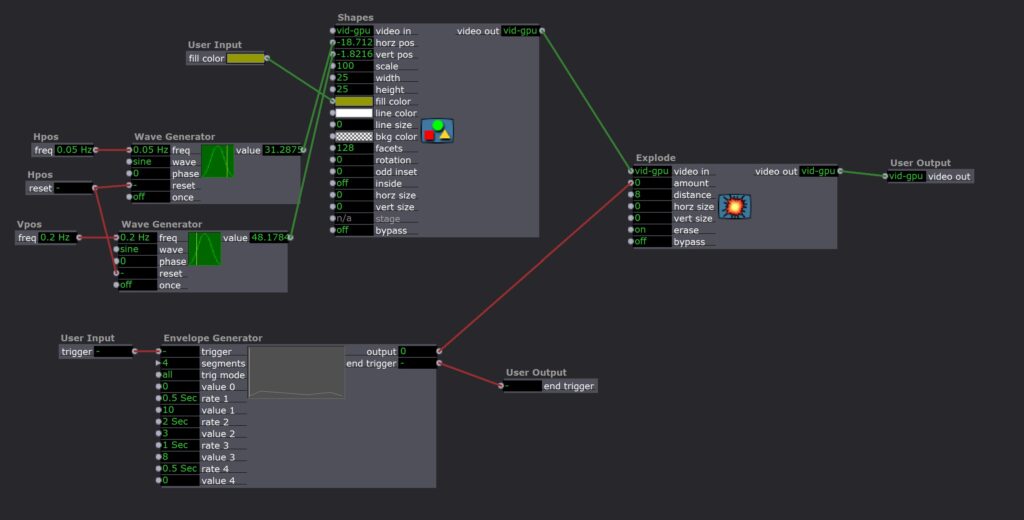

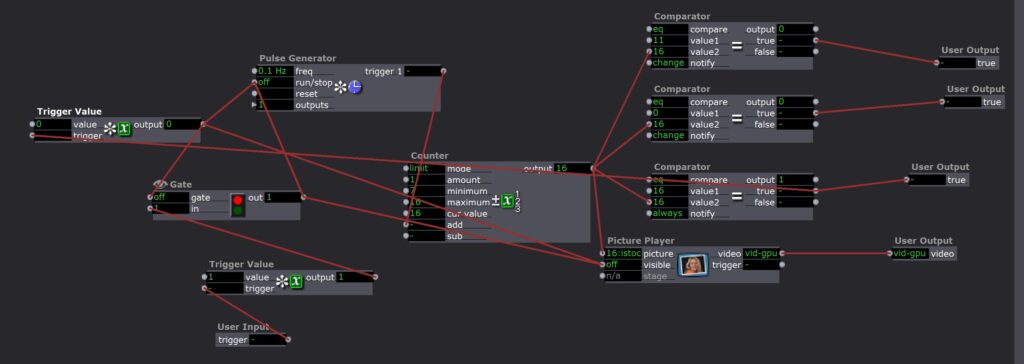

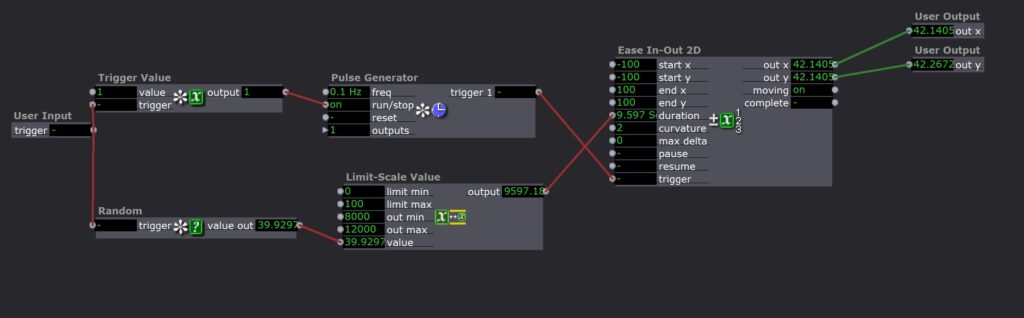

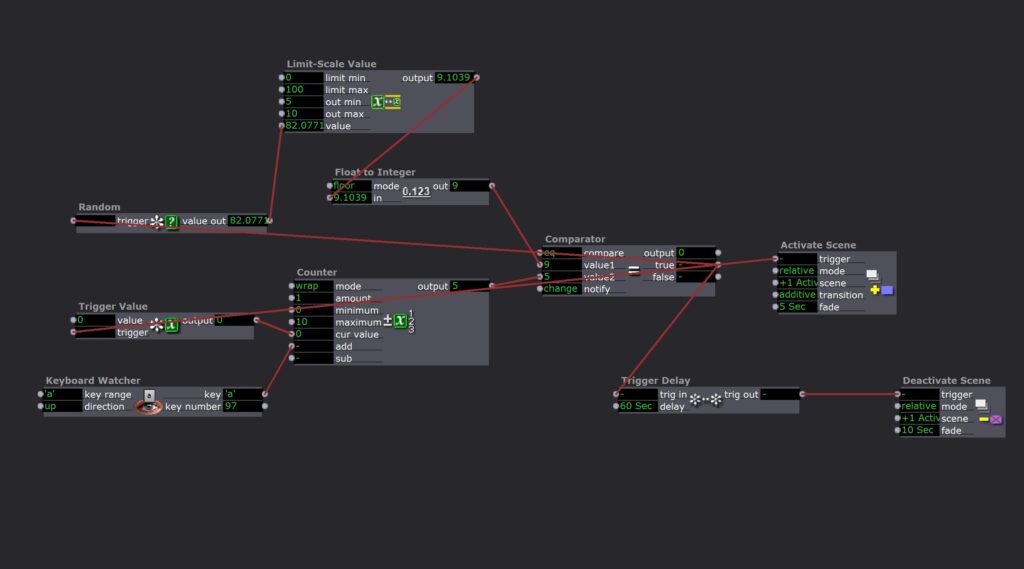

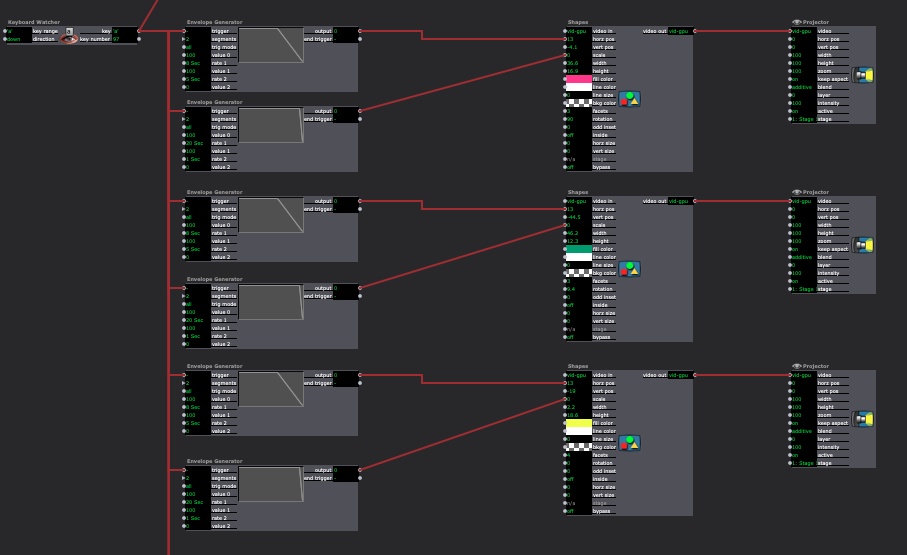

I used two scenes to manage this experience. The first one counted the triggers and determined how many times someone had to trigger the stairs before the projection and music would start. It randomly generated a new number from 5 to 10 for each round.
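The counting logic of the first scene can be sketched outside Isadora. This is only an illustration in Python, not the actual patch: the class name `TriggerCounter`, its method names, and the assumption that 5 and 10 are both possible thresholds are hypothetical choices made for the sketch.

```python
import random


class TriggerCounter:
    """Counts railing touches and fires once a random threshold is reached.

    Hypothetical stand-in for the first Isadora scene; names are illustrative.
    """

    def __init__(self, low=5, high=10, rng=None):
        self.low = low
        self.high = high
        self.rng = rng if rng is not None else random.Random()
        self._start_round()

    def _start_round(self):
        # Pick a fresh threshold for each round; randint includes both ends.
        self.threshold = self.rng.randint(self.low, self.high)
        self.count = 0

    def touch(self):
        """Register one trigger; return True when the party should start."""
        self.count += 1
        if self.count >= self.threshold:
            self._start_round()  # the next round gets a new random number
            return True
        return False


if __name__ == "__main__":
    counter = TriggerCounter()
    touches = 1
    while not counter.touch():
        touches += 1
    print(f"Dance party started after {touches} touches")
```

Because the threshold is redrawn after every party, someone on the stairs cannot predict which trip down will set it off.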

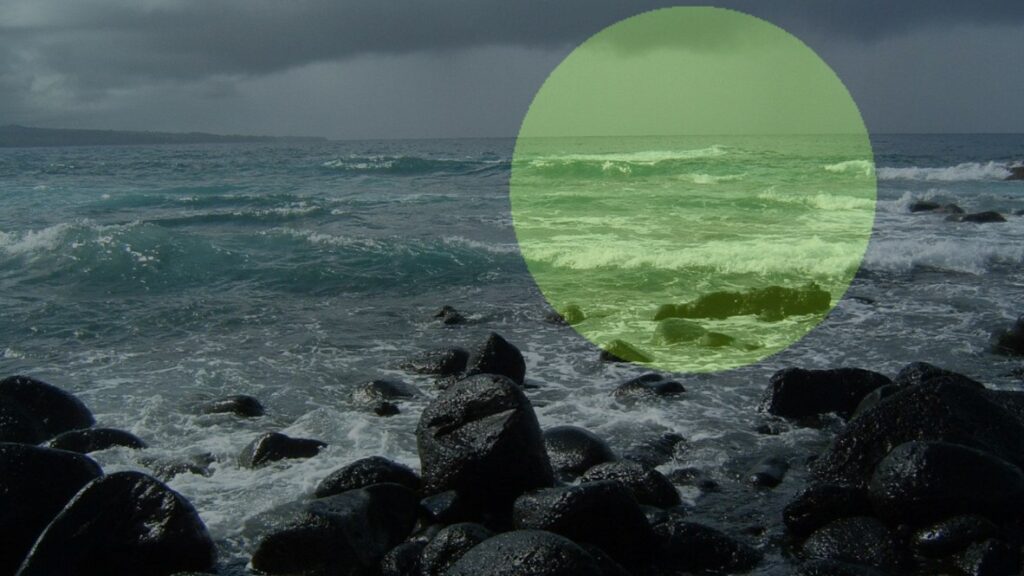

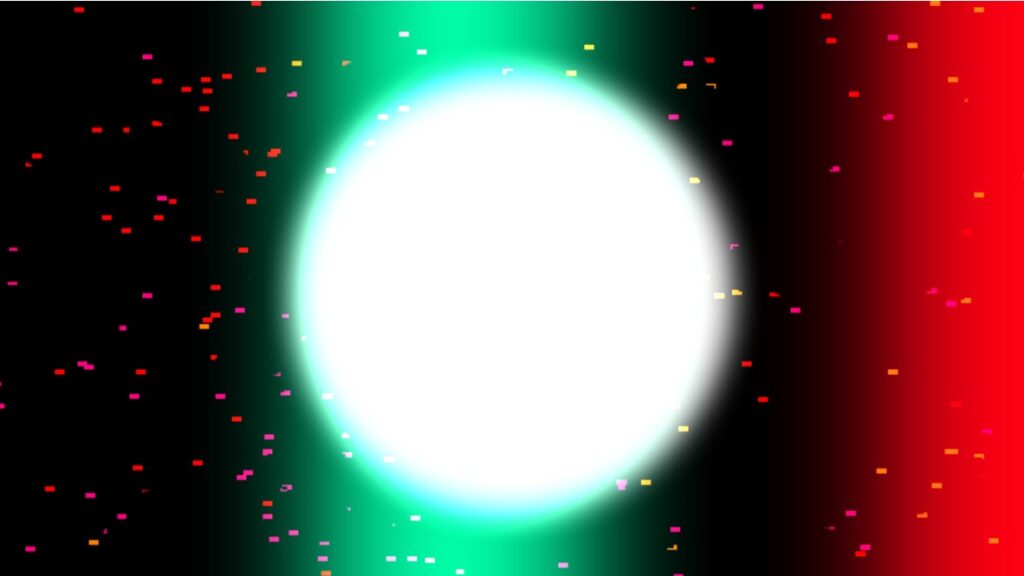

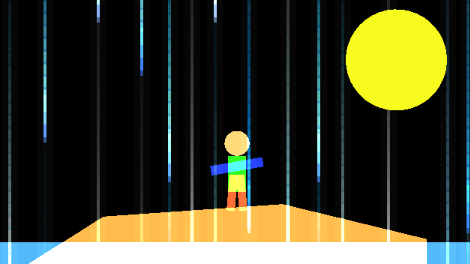

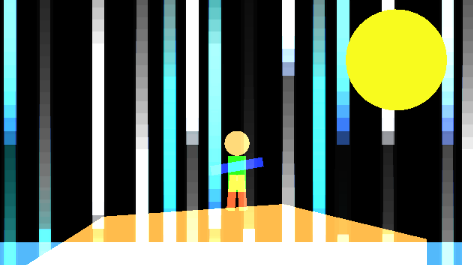

It would then activate the next scene which contained a colorful projection and started a random fun song to dance to. After one minute the scene would be slowly deactivated.

Overall I was really satisfied with this project. It took me a lot less time than the previous one, I think because I took the time to plan at the beginning. It was also super fun to leave up in my house and spontaneously be able to jam out to a good song!

A few things to note for the future: 1) I couldn’t projection map because I was just mirroring my computer screen; 2) my phone battery was used up very quickly by mirroring and projecting. So I would want to look for an app that could act as a second monitor or, even better, get an HDMI to USB-A cord that would connect my projector directly to my computer.

Tara Burns – The Pressure is on (PP1)

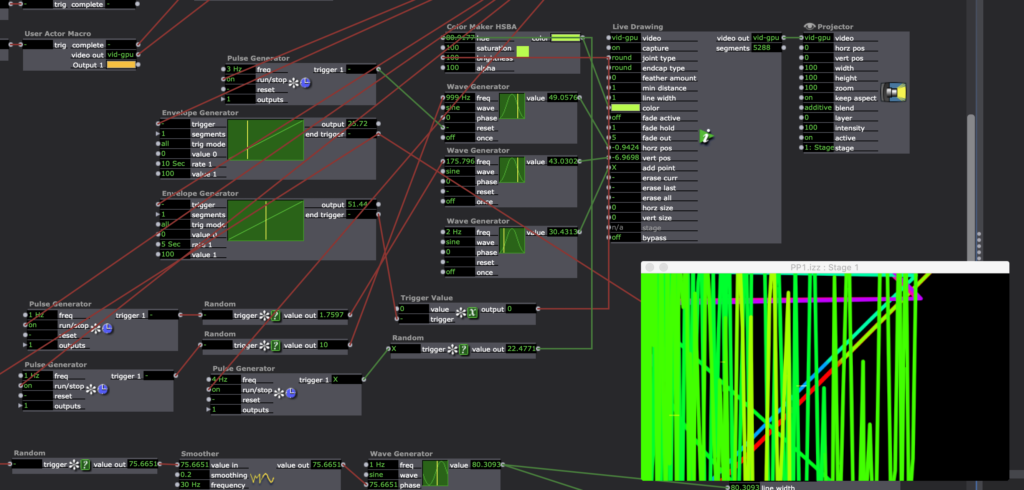

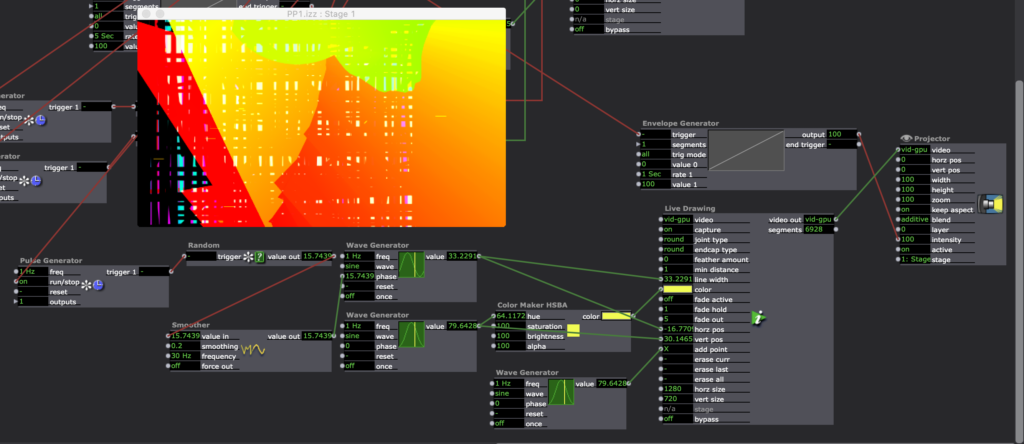

Posted: September 11, 2020 Filed under: Uncategorized

Goals:

To use the Live Drawing actor

To deepen my understanding of user actors and macros.

Challenges:

Finessing transitions between patches

Occasional re-setting glitches (it sometimes has a different outcome than the first 10x)

Making things random in the way you want them to be random is difficult.

Pressure Project 1

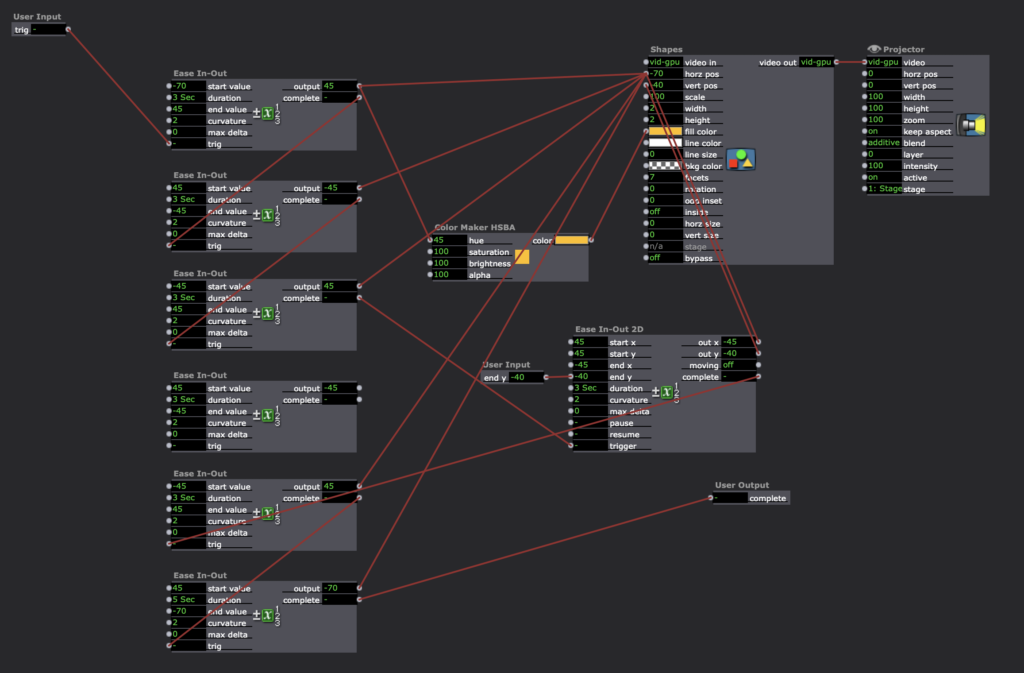

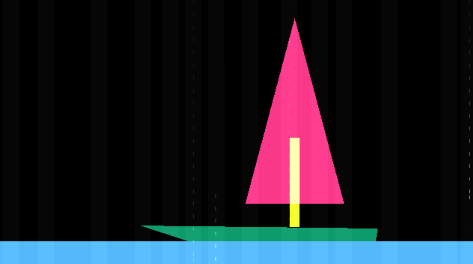

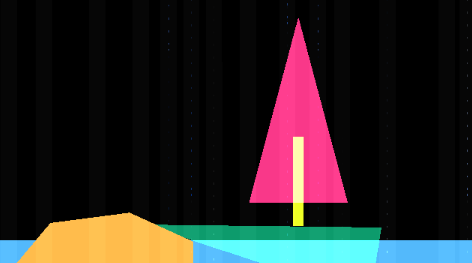

Posted: September 10, 2020 Filed under: Uncategorized

For Pressure Project 1 I created a narrative of a ship crashing on an island. A person is stranded and is eventually saved by a plane. I achieved this by using multiple shapes to create the different animations and envelope generators to move them across the screen. I also created a rain effect that slowly grew in intensity.

This project was a fun challenge. In trying to find a way to keep the audience guessing at what is going to happen next, I thought telling a story would be the best way. This wasn’t as easy as I thought it would be.

I ran into a few problems with layering of shapes/colors and keeping shapes together in a group as they moved across the screen. In the future, I would create virtual stages and create compositions that way first then manipulate the boat.

Pressure Project 1

Posted: September 10, 2020 Filed under: Uncategorized

Kenny Olson

This video was what I used as inspiration for this project: https://www.youtube.com/watch?v=yVkdfJ9PkRQ

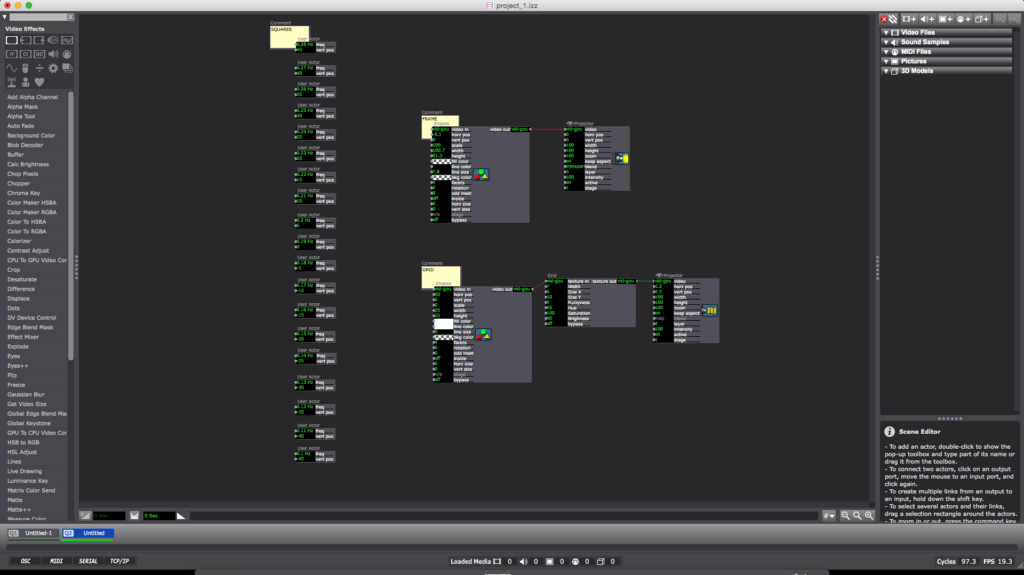

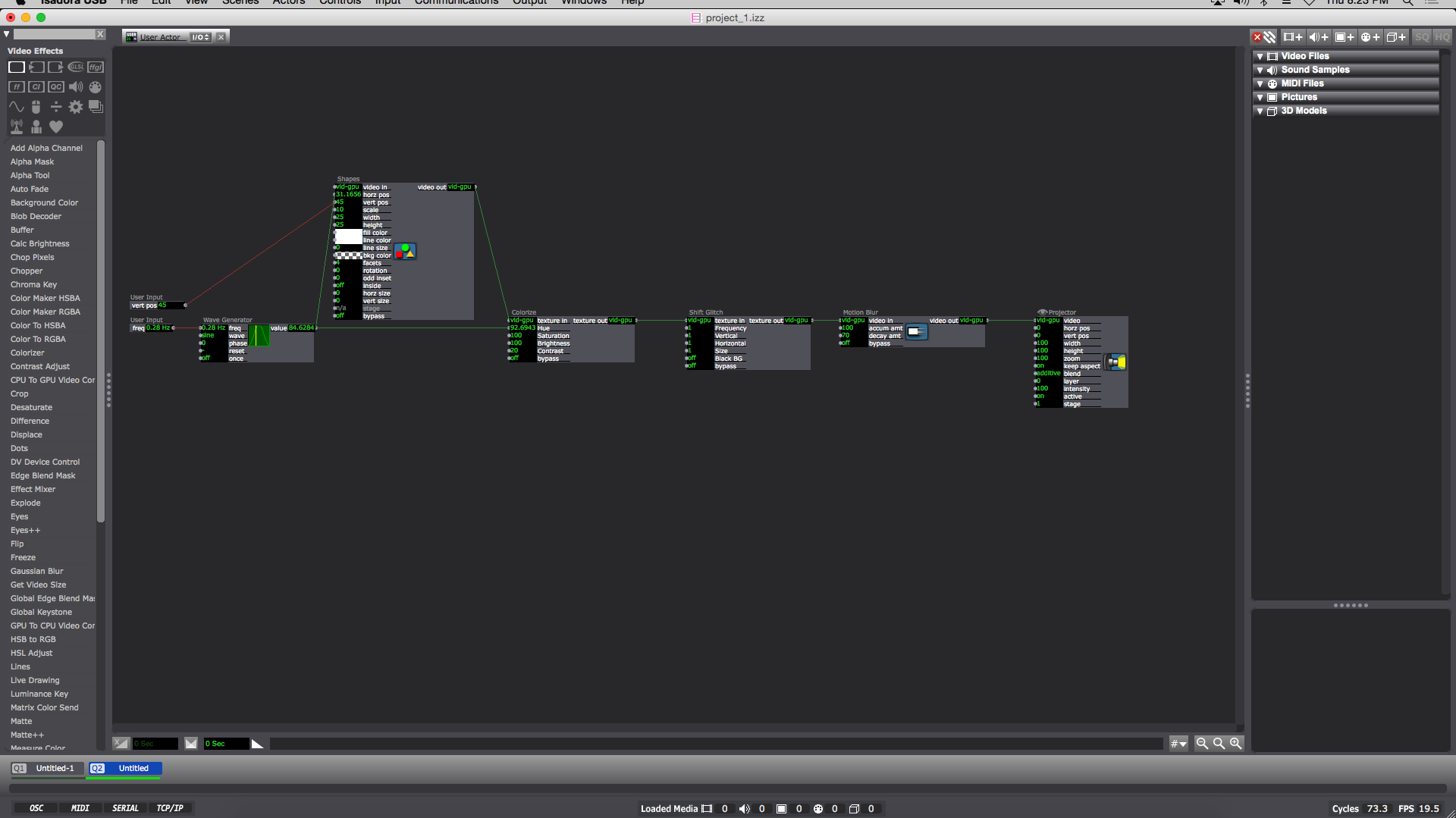

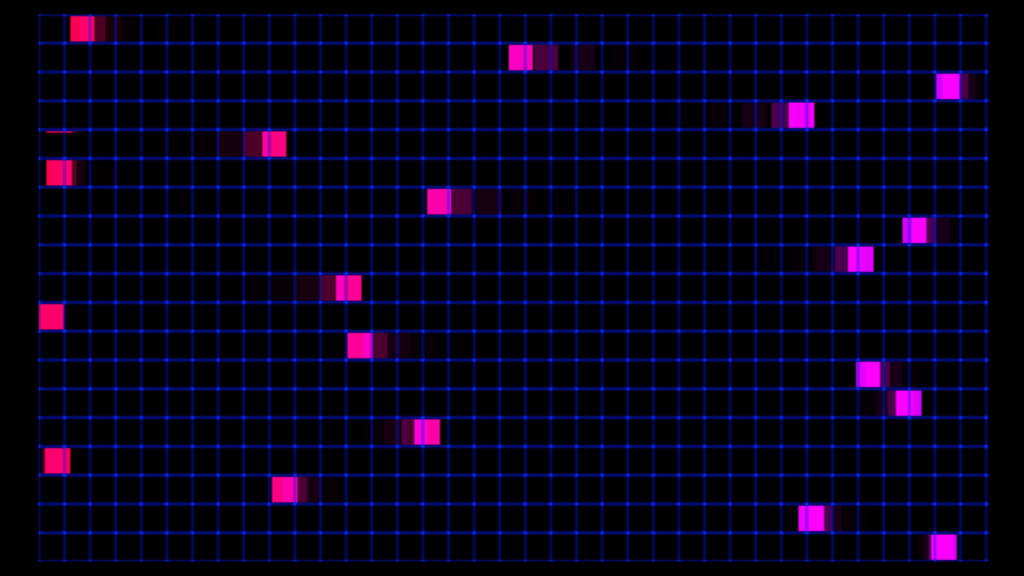

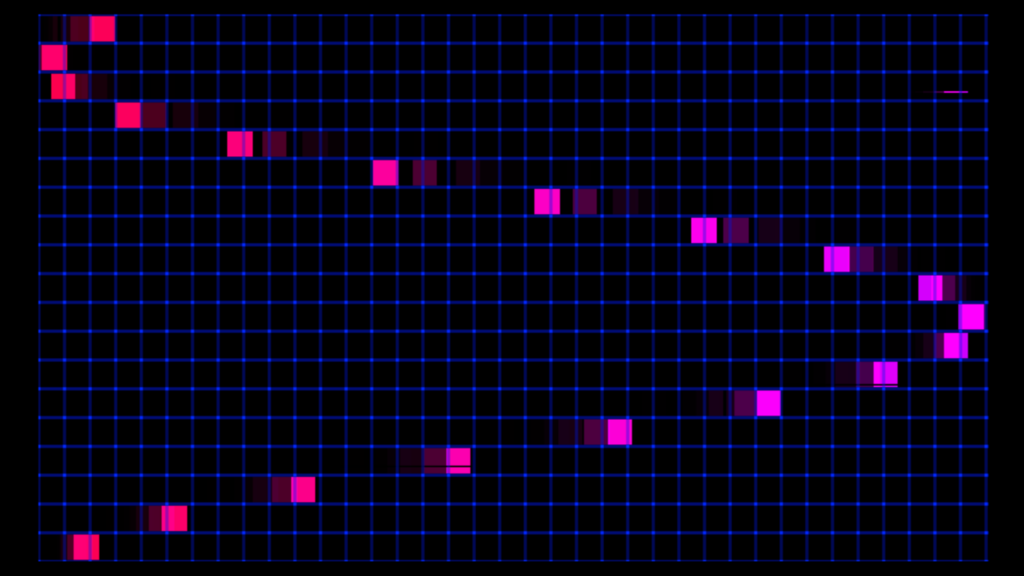

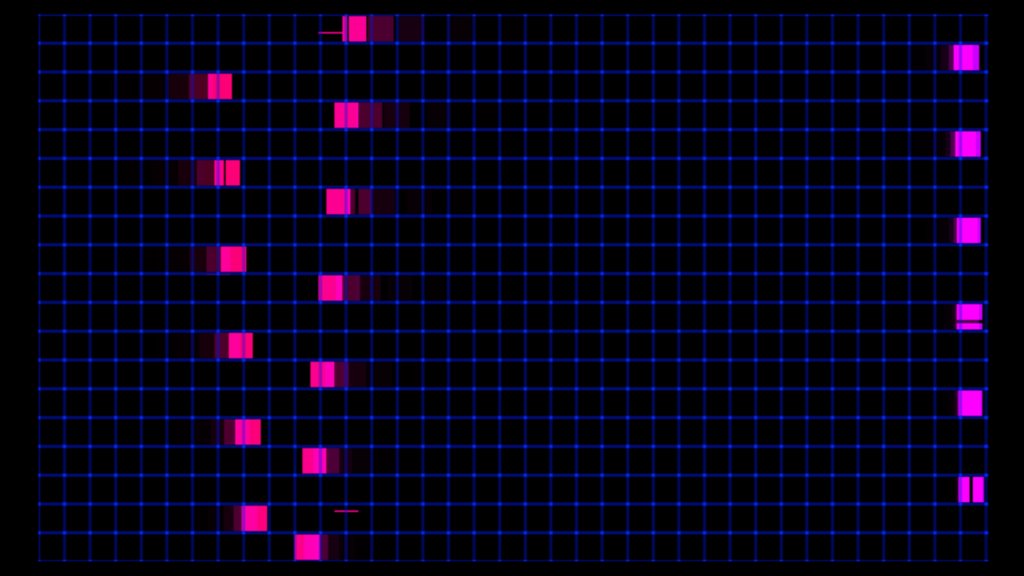

I wanted to create a looping and evolving “vaporwave aesthetic” visualization in Isadora. I found a video (linked above) of pendulums of different lengths swinging and wanted to recreate it. Once the math was figured out, the overall pattern was very simple. I added a black frame and a grid for aesthetic purposes (the ‘Nodes’ pictured above). The main chunk of the magic lives inside the ‘User Actors’.

Inside each ‘User Actor’ is a ‘Wave Generator’ feeding into the ‘Hue’ of a ‘Colorize Node’ (the min and max of the ‘Colorize Hue’ are min: 80, max: 95) and the ‘horizontal position’ of the ‘Shapes Node’. The ‘Shapes Node’ ‘scale value’ is 10. Then the ‘Shapes Node’ ‘video out’ feeds into the ‘Colorize Node’ ‘texture in’. Next, the ‘Colorize Node’ goes into the ‘texture in’ of a ‘Shift Glitch Node’ (this is for looks and adds a fun glitching effect). I should also note that the values of ‘frequency’, ‘vertical’, ‘horizontal’, and ‘size’ on the ‘Shift Glitch Node’ are all set to 1. Then the ‘texture out’ of the ‘Shift Glitch Node’ goes into the ‘video in’ of a ‘Motion Blur Node’ with ‘accumulation amount’ set to 100 and ‘decay amount’ set to 70. Next, the ‘video out’ of the ‘Motion Blur Node’ goes into the ‘video’ of a ‘Projector Node’. Finally, I added a ‘User Input Node’ into the ‘vertical position’ of the ‘Shapes Node’ and another ‘User Input Node’ into the ‘frequency’ of the ‘Wave Generator Node’. When completed, the result should look similar to the above image. (You can now ‘save and update all’ and leave the ‘User Actor Window’.)

Now this is when the fun starts. Back in the main composition window of Isadora, you should have a newly created ‘User Actor’ with 2 adjustable values (‘frequency’ and ‘vertical position’). To get started, adjust the ‘frequency’ to 0.28 Hz and the ‘vertical position’ to 45. You can then duplicate the ‘User Actor’ as many times as you please. The trick is to subtract 0.01 Hz from the ‘frequency’ and subtract 5 from the ‘vertical position’ with every additional ‘User Actor’. (My 1st ‘User Actor’ starts with a ‘frequency’ of 0.28 Hz and a ‘vertical position’ of 45, ending with my 19th ‘User Actor’ with a ‘frequency’ of 0.1 Hz and a ‘vertical position’ of -45.)
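To double check the stepping pattern, the settings for each ‘User Actor’ can be listed with a few lines of Python. This is just a sketch for checking the arithmetic, not part of the Isadora patch; the function name `actor_settings` and its parameter names are made up for illustration.

```python
def actor_settings(count=19, start_freq_hz=0.28, freq_step_hz=0.01,
                   start_vertical=45, vertical_step=5):
    """Return (actor number, frequency in Hz, vertical position) tuples."""
    settings = []
    for i in range(count):
        # Round to two decimals so floating point noise does not leak
        # into values that would be typed into Isadora by hand.
        freq = round(start_freq_hz - i * freq_step_hz, 2)
        vertical = start_vertical - i * vertical_step
        settings.append((i + 1, freq, vertical))
    return settings


for number, freq, vertical in actor_settings():
    print(f"User Actor {number:2d}: frequency {freq:.2f} Hz, "
          f"vertical position {vertical}")
```

With the default values, the first entry is 0.28 Hz at 45 and the 19th is 0.10 Hz at -45, matching the values described above.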

To reset the program, simply reenter the current scene from a previous scene (with a “Keyboard Watcher Node” and a “Jump++ Node” or by using the ‘space bar’).

I should also note: as long as all the ‘User Actors’ are separated by a constant ‘frequency’ and ‘vertical position’ (or ‘horizontal position’) value from each other (in either a positive or negative direction) the pattern will continue on forever in said direction.

A fun thing about creating this mathematical visualization digitally is that there is no friction involved (in the video example, the balls eventually stop swinging), meaning this pattern will repeat and never stop until Isadora is closed.
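How long one full cycle of the pattern takes can be estimated from the frequencies alone. The sketch below assumes that every ‘Wave Generator’ starts at the same phase when the scene is entered and that the frequencies are exactly 0.10 Hz to 0.28 Hz in 0.01 Hz steps; the function name `repeat_period_seconds` is hypothetical. Frequencies are passed in whole hundredths of a hertz so the math stays in integers.

```python
from functools import reduce
from math import gcd


def repeat_period_seconds(freqs_centihertz):
    """Seconds until all waves line up again.

    Each frequency is given in hundredths of a hertz (28 means 0.28 Hz).
    The waves realign after one period of their greatest common frequency.
    """
    common = reduce(gcd, freqs_centihertz)  # in hundredths of a hertz
    return 100 / common


# 0.10 Hz through 0.28 Hz in 0.01 Hz steps, as in the 19 User Actors.
frequencies = list(range(10, 29))
print(repeat_period_seconds(frequencies), "seconds per full cycle")
```

Under those assumptions the greatest common frequency is 0.01 Hz, so the whole pattern lines up again every 100 seconds.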

Pressure Project #1

Posted: September 10, 2020 Filed under: Uncategorized

Working on this project, I wanted to play around with scenes as well as find a way to incorporate some randomly generated elements. I knew that I wanted to get away from a black background, so I found an image with several different colored blocks and zoomed in on it and then set it to move around the screen as a background.

I originally thought my project would consist of rectangular shapes, so I generated one to move in the layer above the background. However, then I started to think about drawing lines in front of the moving block. My first idea was to see if it would be possible to record a mouse movement and then play it back inside of the mouse watcher. After a little playing, I used a random actor to generate lines. This led me to the idea of more and more lines being generated before the project loops back to the beginning.

I began to explore ways of going to different scenes so that the lines would begin to draw on top of each other. As we discussed in class, I could have accomplished this more efficiently by using the jump actor.

One of the best parts about this project to me was seeing different ways people laid out their workspace as well as getting ideas for new actors. Through this project I feel much more confident about basic kinds of actors as well as working with scenes.

Link to Isadora file:

https://1drv.ms/u/s!Ai2N4YhYaKTvgY9sbl-j6aDoPcdJMg?e=9McCvN

Pressure Project One

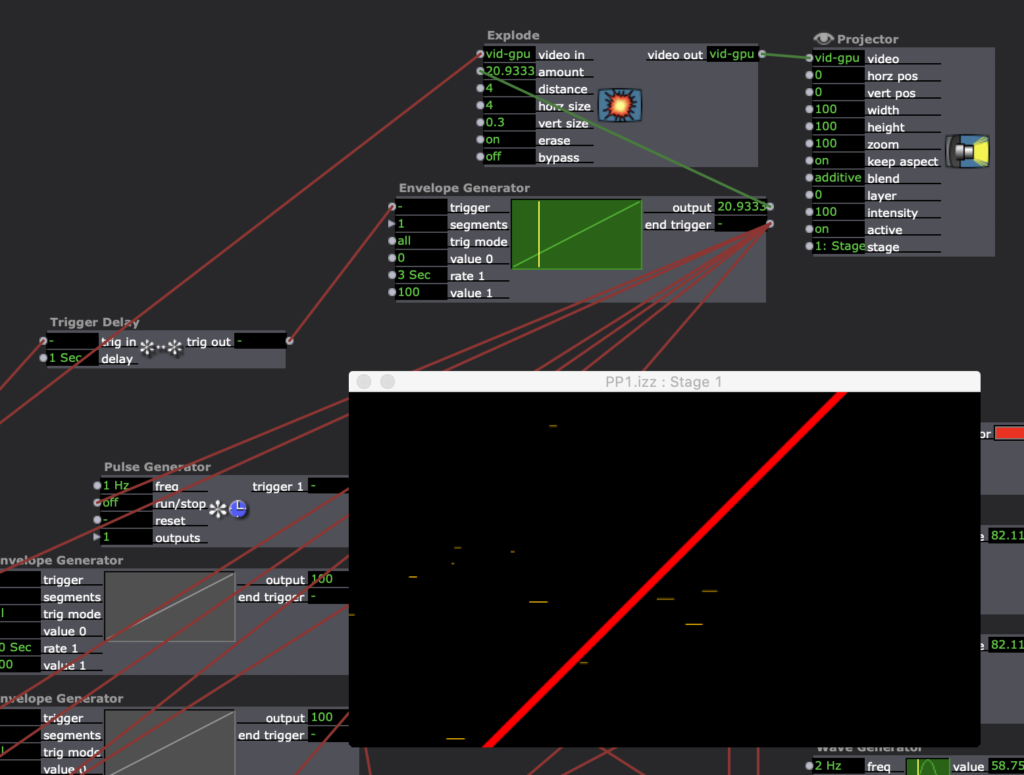

Posted: September 10, 2020 Filed under: Uncategorized | Tags: Pressure Project One

In this Pressure Project, while learning more about how Isadora works, I was exploring chaos and randomness, and how to continually bring something new on screen before anything would fully repeat itself. I created figures that zoomed across the screen and that consistently changed in color, size, and shape. I wanted bursts of shapes that were stationary in their horizontal and vertical positions but also rotated, in order to keep the mind focused in different places. Overall, I learned a lot about how the program works, and made some fun chaos in the process.

Project Bump

Posted: September 8, 2020 Filed under: Uncategorized

https://dems.asc.ohio-state.edu/wp-admin/post.php?post=1761&action=edit

The pictures of this project immediately got my attention: a group of people standing in a space together with what looks like a projection on the floor and a wall with several dots. I love the idea of creating an experience that is playful, interactive, tricky, and physically engaging, modernizing a board game like Candy Land with projections and body movement. So cool!

Project Bump

Posted: September 8, 2020 Filed under: Uncategorized

I found Aaron Cochran’s cycle project to have an interesting trajectory. https://dems.asc.ohio-state.edu/?p=2281

I like how Aaron worked with the Kinect sensor and projector to create the interactive game. I thought the idea of this kind of augmented reality game was executed well and the environment seemed very responsive to the movements of the player. Throughout all three of his cycles, Aaron seemed to have a logical process that arrived at a good result.

Project Bump

Posted: September 7, 2020 Filed under: Uncategorized

I really enjoyed reading about Parisa Ahmadi’s final project “Nostalgia” (https://dems.asc.ohio-state.edu/?p=1743). The way the visuals of the final project overlapped on various fabrics created a full world of ideas, just like how I would imagine my memories swirling around my mind. The softness of the fabric and the genuine content of the visuals develop a more intimate space and allow audience members to feel comfortable experiencing whatever they end up experiencing. Since the visuals were connected to specific triggers on objects, the audience would be able to directly interact and get a sense of how they impact their environment. Overall it seemed like a really thoughtful project, and the result was able to surround the viewer with activity that interacted with all of the senses.